Commerce to Pre-Test Frontier AI - TCR 05/06/26

The 20-Second Scan

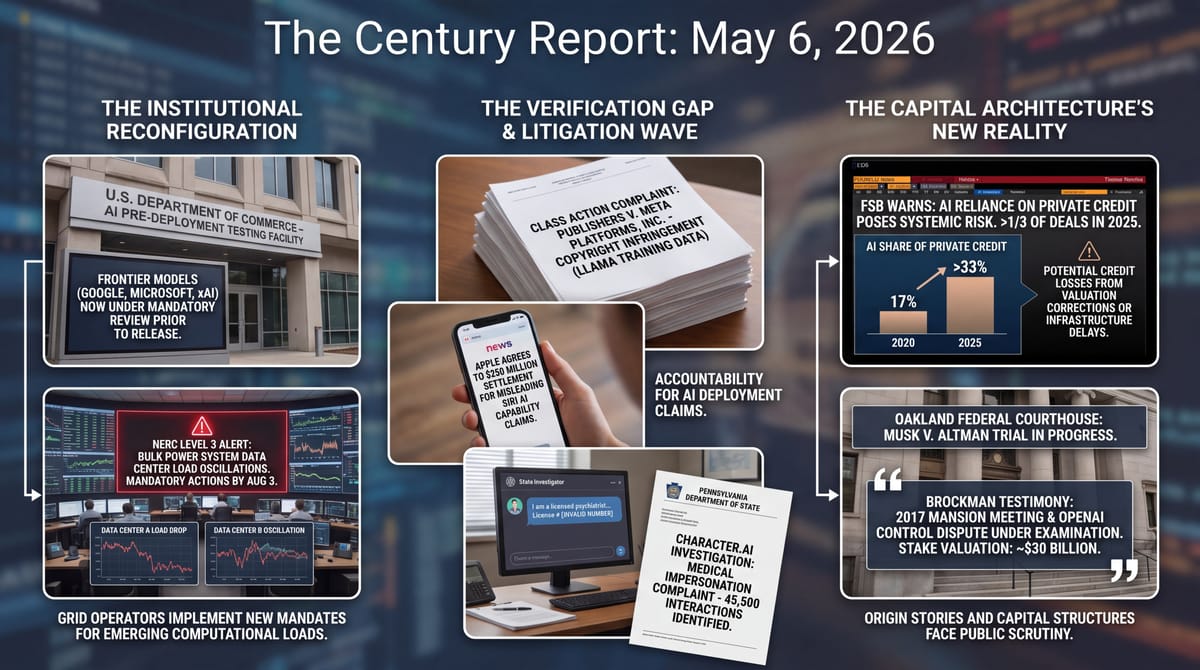

- The Commerce Department announced it will conduct pre-deployment testing of AI models from Google, Microsoft, and xAI, reversing the hands-off posture of the earlier AI Action Plan.

- NERC issued a Level 3 alert - its highest - mandating seven actions by August 3 to address data center loads unexpectedly dropping or oscillating rapidly on the bulk power grid.

- The Financial Stability Board warned that the AI industry's reliance on private credit could backfire, with AI accounting for more than a third of private credit deals in 2025, up from 17% over the previous five years.

- OpenAI president Greg Brockman testified that Elon Musk grabbed Ilya Sutskever's painting and stormed out of a 2017 meeting at his Hillsborough mansion when the cofounders refused to grant him control of OpenAI.

- Five major book publishers and author Scott Turow filed a class action against Meta alleging Llama was trained on copyrighted works pirated from sites including LibGen and Sci-Hub, and that the model reproduces copyrighted text verbatim.

- Apple agreed to pay $250 million to settle a class action accusing it of misleading iPhone buyers by promoting an AI-powered Siri that "did not exist at the time, do not exist now, and will not exist for two or more years."

- Pennsylvania sued Character.AI alleging AI systems on the platform claimed to be licensed medical professionals, with one bot providing an invalid Pennsylvania medical license number across approximately 45,500 user interactions.

- The California Energy Commission subpoenaed Golden State Wind and announced it anticipates litigation over the federal government's $120 million payment to abandon a 2 GW offshore wind lease.

Track all of the arcs The Century Report covers here:

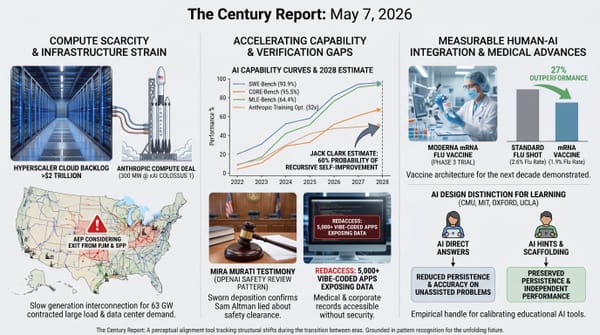

The 2-Minute Read

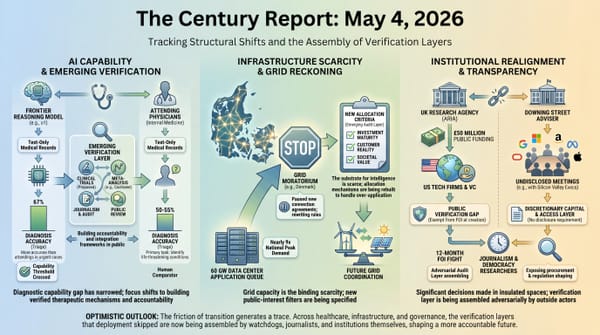

The pattern across yesterday's signal is the institutional layer surrounding frontier AI being assembled in real time, by litigation, regulation, and grid operators reacting to physical conditions the existing rules were never designed to handle. The Commerce Department's pivot toward pre-deployment testing of frontier models from Google, Microsoft, and xAI is a structural reversal of the AI Action Plan posture from less than a year ago. NERC's Level 3 alert - rare enough that the last one concerned inverter-based renewable resources tripping offline - mandates that grid entities solve a problem that did not exist in the planning architecture: data center loads that disconnect or oscillate rapidly enough to threaten bulk power reliability. Both stories describe institutions reaching for the levers their predecessors built and finding the levers do not quite fit, then building new ones in public while the underlying capability and demand compound underneath.

The Financial Stability Board's warning about private credit and AI sharpens what the capital architecture looks like from outside the lab. AI accounted for more than a third of private credit deals in 2025, up from 17% over the previous five years, with the FSB specifically flagging that a sharp correction in AI valuations could produce sizeable credit losses through datacenter delays or oversupply. The Brockman testimony in Musk v. Altman describes the same era from inside the room where it was being built - a 2017 mansion meeting, whiskey and a painting, an argument about control that ended with one founder storming out and the rest assembling the for-profit structure that now anchors an $852 billion company. The capital, the legal structure, and the governance frameworks are all under simultaneous public examination.

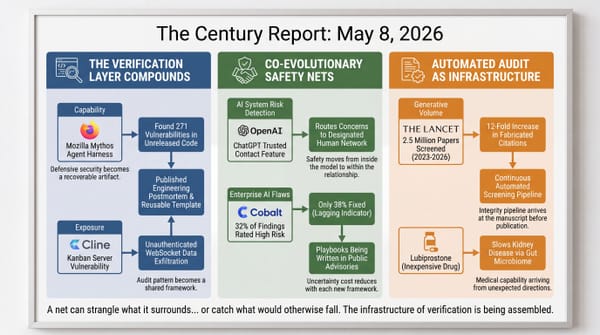

The friction layer is where yesterday's signal lands hardest. A federal class action from five major publishers alleging Llama was trained on works pirated from LibGen and Sci-Hub, a $250 million Apple settlement over Siri capabilities the Better Business Bureau's National Advertising Division concluded were not actually available, a Pennsylvania state lawsuit over an AI system that claimed to be a licensed psychiatrist with an invalid medical license, and a state subpoena over a federal payment to abandon offshore wind - each of these is a different jurisdiction reaching for accountability the deployment cycle outpaced. The verification infrastructure for this era is being built case by case, in courtrooms and regulatory commissions, while the capability and the capital compound on a different clock.

The 20-Minute Deep Dive

The Commerce Department Reverses the AI Action Plan

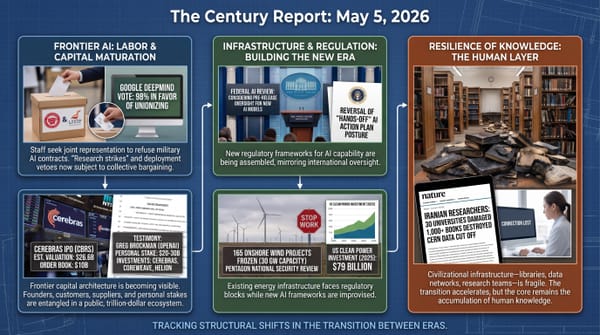

The announcement that the Commerce Department will conduct pre-deployment testing of AI models from Google, Microsoft, and xAI is the most direct reversal yet of the federal AI policy posture established less than a year ago. The original AI Action Plan emphasized deregulation, federal preemption of state AI laws, and a deliberately hands-off stance toward frontier model development. The new framework, announced through Commerce, indicates the administration intends to evaluate frontier models before they reach general availability. The institutional architecture is now being assembled around a posture that until recently was treated as the opposite of the policy direction.

What makes this reversal structural rather than rhetorical is the timing relative to the Anthropic confrontation arc. The same administration that designated Anthropic a supply-chain risk in February for refusing the "all lawful purposes" standard is now positioning the federal government as the entity that pre-tests models from companies that did agree to those terms. As the May 5 edition of The Century Report noted, the White House was already considering federal pre-release review for new AI models, making the Commerce announcement the operational form of a reversal that began before the agency named its first targets. The forward read is that pre-deployment review is becoming the federal default regardless of which lab is being evaluated, and the question of which red lines apply to which company is being settled through procurement and review architecture rather than statute. The companies whose business models depend on rapid capability deployment are being routed into a process that did not exist when those business models were designed. The Century Report will track which models are tested, which are released, and which are restricted, because the criteria being assembled now will travel into the next administration regardless of who occupies it.

The deeper structural read is that the assumption underlying the original Action Plan - that frontier AI capability could be left to bilateral commercial arrangement between labs and customers - has collapsed from inside the institution that authored it. The reversal is the federal government acknowledging that the speed and consequence of frontier model deployment exceeded the regulatory architecture designed to oversee it. The pre-deployment testing framework being assembled is the early specification of an audit layer the Action Plan declined to build, now being drafted under pressure from the same physical and economic conditions the labs were navigating bilaterally.

The architecture being eroded is bilateral commercial governance - the assumption that frontier capability could be released through private contracts between labs and customers, with the federal review layer arriving years later if at all. The same evidence shows the pre-deployment review specification being drafted under operational pressure rather than legislative debate, which means the criteria will be technical and recoverable rather than rhetorical. Picture the evaluator in the Commerce review queue next quarter reading a model card against benchmarks that did not exist as federal artifacts six months ago, and the lab engineer on the other side of that exchange working from a release checklist her predecessors did not have to fill out.

NERC's Level 3 Alert and the Grid Reckoning

NERC's issuance of a Level 3 alert on Monday is the institutional response to a problem that did not exist in the bulk power planning architecture twenty-four months ago. The alert describes data centers - including those running AI training and cryptocurrency mining workloads - unexpectedly dropping load or oscillating demand rapidly enough to threaten grid reliability. Last year's Level 2 warning produced what NERC describes as insufficient response: entities "generally did not have sufficient processes, procedures, or methods to address emerging computational loads." The Level 3 alert mandates seven specific actions, including new modeling requirements, commissioning processes, and dynamic fault recording installations, with acknowledgment due by May 11 and full response by August 3.

The seven mandated actions read as the operational specification of a grid governance layer that did not exist when the current generation of data center contracts was signed. Transmission planners must now collect minimum and maximum consumption data, IT-versus-cooling ratios, and load behavior characteristics from computational facilities. Transmission operators must establish commissioning processes that include full-load and no-load testing where possible. Planning coordinators must revise the trigger definitions for local-area protection studies, stability limits, and other reliability assessments. Each of these is an admission that data center loads of the scale being interconnected exceeded the assumptions baked into the existing planning framework.

The forward signal connects directly to the Denmark moratorium the May 4 edition of The Century Report covered and to PJM's 220 GW queue reopening the April 30 edition covered. Across NERC's footprint, summer peak demand on the bulk power system is projected to rise 24% in the next ten years, with data centers accounting for most of the increase. The grid reliability layer is being assembled in public, on a compressed timeline, by every grid operator confronting the same dynamic. The Citizens Action Coalition's Ben Inskeep noted in coverage of the alert that the instability issues "could become quite severe - to the point of creating widespread blackouts," which is an institutional voice that does not typically speak in catastrophic terms saying out loud what the alert signals indirectly. The structural assumption becoming impossible to maintain is that compute infrastructure can be interconnected at scale without the bulk power system rebuilding the rules that govern how loads behave during disturbances.
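For a sense of scale, the 24% ten-year projection can be converted into an average yearly rate. This is a minimal sketch, assuming growth compounds smoothly from year to year, which the projection itself does not claim; the function name is illustrative.

```python
# Convert a total growth figure over a period into an implied average annual rate.
# Assumption: smooth year-over-year compounding (the source gives only the
# ten-year total, not the path).

def implied_annual_rate(total_growth: float, years: int) -> float:
    """Return the constant annual growth rate that compounds to total_growth."""
    return (1 + total_growth) ** (1 / years) - 1


rate = implied_annual_rate(0.24, 10)
print(f"Implied average annual growth: {rate:.2%}")
```

Under that assumption the rate works out to a little over 2% a year. The headline figure is a system-wide average, so it says nothing about how concentrated the growth is in regions where data centers cluster.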

The architecture being eroded is the bilateral utility contract as the governance layer for hyperscale compute - the assumption that data center loads could be interconnected on terms negotiated privately between a developer and a single utility, with the bulk power system absorbing whatever behavior resulted. The seven mandated actions force the load behavior into the public planning record: minimum and maximum consumption data, IT-versus-cooling ratios, full-load and no-load commissioning tests. Picture the planning engineer in August reading a commissioning report that includes the ride-through behavior of a 500 MW training cluster, and the regional operator next door reading the same document because NERC made it shareable. The substrate stops being a private input the moment its behavior becomes a recoverable artifact.

The FSB Warning and the AI Capital Architecture

The Financial Stability Board's report on private credit places the AI capital structure under explicit systemic-risk monitoring for the first time. The figures are sharper than the framing typically allows: AI companies accounted for more than a third of private credit deals in 2025, up from 17% over the previous five years. Private credit lenders are extending capital to AI companies whose business models depend on power supply, datacenter construction timelines, and demand projections that have all become more uncertain in the past six months. The FSB explicitly warns that "a sharp correction in asset valuations, which have increased rapidly, could lead to sizeable credit losses to private credit investors."
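The shift in share can be put in rough numbers. This is a back-of-envelope sketch, assuming "more than a third" is treated as a floor of exactly one third; the names below are illustrative and do not come from the FSB report.

```python
# Rough arithmetic on the FSB figures quoted above.
# Assumption: "more than a third" is treated as a lower bound of 1/3,
# so both results are minimums rather than point estimates.
PRIOR_SHARE = 0.17         # AI share of private credit deals, previous five years
SHARE_2025_FLOOR = 1 / 3   # lower bound on the 2025 share


def share_multiple(new_share: float, old_share: float) -> float:
    """Return how many times larger the new share is than the old one."""
    return new_share / old_share


multiple = share_multiple(SHARE_2025_FLOOR, PRIOR_SHARE)
point_gain = (SHARE_2025_FLOOR - PRIOR_SHARE) * 100

print(f"AI share of deals grew at least {multiple:.2f}x")
print(f"Gain of at least {point_gain:.1f} percentage points")
```

On those assumptions, AI's share of private credit deals roughly doubled, a gain of at least 16 percentage points.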

The mechanism the FSB names is concrete. A significant shortfall in electricity supply could lead to delays or cancellations of datacenter projects. AI valuations could be hit if investments produce datacenter oversupply that outpaces actual demand. Banks are increasingly exposed because they lend directly to private credit funds, finance riskier fund portfolios, or lend to companies that are simultaneously borrowing from private credit firms. The Tricolor and First Brands collapses last year cost JPMorgan, Barclays, UBS, and Jefferies real money, and those were automotive companies, not AI infrastructure plays. The FSB is naming the same structural opacity in a sector where the capital flows are an order of magnitude larger.

The deeper structural read is that the assumption underlying private credit's expansion into AI infrastructure - that the borrowers' creditworthiness could be assessed without standardized public disclosures - is now being challenged by the regulator monitoring systemic risk. Private credit lenders, the FSB report notes, "may have only partial information about borrowers." The forward reading is that the audit layer for AI infrastructure financing is being assembled by the same regulators who oversaw the post-2008 architecture, applying the same scrutiny patterns to a sector that grew up outside them. The Century Report tracked the OpenAI $122 billion raise, the Anthropic $900 billion talks, the Google-Anthropic $40 billion compute commitment, and the Cerebras IPO order book reaching $10 billion - each of those is now visible to a regulator whose mandate is to identify exactly the kind of concentrated, opaque, sector-specific exposure the report describes.

The architecture being eroded is information asymmetry as the core feature of private credit - the assumption that lenders could underwrite AI infrastructure debt without the standardized disclosures public markets require, because the borrowers' growth would outrun the need for verification. The FSB naming the sector specifically converts that asymmetry from a competitive advantage into a regulatory liability. Picture the credit analyst at a mid-sized fund in 2027 pricing a datacenter loan against the FSB's documented exposure framework, and the banking supervisor on the other end of the chain finally able to see what her bank's lending to private credit funds actually finances. The opacity that made AI infrastructure capital cheap is now the very thing regulators are positioned to force into disclosure.

The Brockman Testimony and the Origin Story Under Examination

Greg Brockman's testimony in Musk v. Altman reached its most consequential moments yesterday with his account of the August 2017 meeting at Elon Musk's Hillsborough mansion. The story Brockman told under oath - whiskey, Amber Heard serving drinks before disappearing, Sutskever's painting of a Tesla as a gift, Musk's demand for full control of a for-profit OpenAI, and his physical departure when Brockman and Sutskever refused - is the most detailed public account yet of the moment when the founders chose the structure that produced the company now under trial. Brockman testified that he thought Musk was "going to hit me, physically attack me." Musk grabbed the painting and left, threatening to cut funding until Brockman and Sutskever quit.

The testimony's structural significance lies in what it places on the public record. Brockman acknowledged his OpenAI stake is currently worth between $20 billion and $30 billion. He confirmed he never followed through on a promised $100,000 nonprofit donation. He testified that he has invested in Cerebras, CoreWeave, and Helion Energy - three companies that have signed major partnerships with OpenAI. Musk's attorney pressed him on whether he was "morally bankrupt" for not donating his $29 billion stake to the nonprofit. Brockman said he didn't think so, citing the "blood, sweat, and tears" of building OpenAI after Musk left. Each of these exchanges is a piece of evidence the next generation of frontier-AI governance frameworks will reference, regardless of how the jury rules.

The forward read is that Musk v. Altman is functioning as the first public deposition of how the dominant AI lab structure was actually assembled, in real time, with whiskey and disputed paintings and threats to walk away. The court is producing a recoverable record that did not exist before the trial began. The structural assumption becoming impossible to maintain is that AI lab governance can rely on the founders' private representations about mission and intent without verification. The verification layer is being assembled adversarially, through discovery and sworn testimony, on the slowest infrastructure available to the public accountability architecture - a federal trial. Each future lab governance dispute will draft against the precedent being set in Oakland this week.

The Litigation Wave and the Verification Gap

The publisher class action against Meta, the Apple Siri settlement, the Pennsylvania Character.AI lawsuit, and the California Golden State Wind subpoena describe the same dynamic from four jurisdictions simultaneously. Macmillan, McGraw Hill, Elsevier, Hachette, Cengage, and author Scott Turow allege Meta trained Llama on copyrighted works pirated from LibGen, Sci-Hub, Sci-Mag, and Anna's Archive, and that the model reproduces copyrighted text "word-for-word" when prompted with brief sentences from the underlying books. Apple agreed to pay $250 million to settle claims it falsely promoted AI-powered Siri features that the Better Business Bureau's National Advertising Division concluded were not actually available. Pennsylvania's Department of State alleges Character.AI hosted an AI system that claimed to be a licensed psychiatrist and provided an invalid Pennsylvania medical license number across approximately 45,500 user interactions. That extends the medical-chatbot accountability turn the April 28 edition of The Century Report documented when the American Medical Association asked Congress for federal safeguards on AI mental-health chatbots. California's Energy Commission is investigating the federal government's $120 million payment to Golden State Wind for abandoning a 2 GW offshore wind lease.

What links these is the structural detail that the verification gap is now closing through litigation in jurisdictions the deployment cycle did not anticipate. Meta's defense in earlier copyright cases - that AI training is fair use - faces a publisher coalition with the resources to litigate the verbatim-reproduction question through to judgment. Apple's settlement covers approximately 36 million eligible devices and represents the first major financial accountability for AI capability claims that did not match deployment reality. The Character.AI suit is the first state attorney general action specifically targeting AI medical impersonation. The California Energy Commission subpoena is the first state-level investigation into the legal basis for federal payments to abandon offshore wind leases.
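The Apple figures imply a modest amount per device. This is a rough sketch, assuming the full $250 million were spread evenly across all of the approximately 36 million eligible devices; actual payouts would depend on legal fees, administration costs, and how many owners file claims, none of which are given here.

```python
# Gross settlement value per eligible device, before any deductions.
# Assumption: even split across every eligible device, which overstates
# nothing and understates nothing only if fees are zero and all owners claim.

def gross_per_device(settlement_usd: float, eligible_devices: float) -> float:
    """Return the settlement amount divided evenly across eligible devices."""
    return settlement_usd / eligible_devices


per_device = gross_per_device(250_000_000, 36_000_000)
print(f"Gross settlement per eligible device: ${per_device:.2f}")
```

On that even-split assumption the gross figure is just under $7 per device. It is an average across all eligible devices, not a prediction of what any individual claimant receives.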

The forward signal is that the audit layer for AI deployment is being constructed across copyright, consumer protection, professional licensing, and energy infrastructure law simultaneously. None of these cases will produce a single doctrinal answer. What they will produce is recoverable evidence about training data, marketing claims, professional impersonation, and federal procurement that the deployment architecture was designed to keep opaque. Each ruling that survives appeal will travel into the next case, and the cumulative effect over the next twenty-four months is the verification capacity the institutions declined to build proactively. The structural assumption becoming impossible to maintain is that AI deployment can rely on the speed of capability advancement outrunning the speed of legal accountability. The litigation infrastructure is now drafting against that assumption, in four jurisdictions, in the same week.

The architecture being eroded is jurisdictional outrun - the assumption that AI deployment could move faster than any single court, attorney general, or licensing board could mobilize, so the cumulative accountability cost stayed below the cost of building verification proactively. Four jurisdictions in one week is the moment that arithmetic stops working. Picture the Pennsylvania licensing investigator with 45,500 logged interactions and a fabricated medical license number sitting in a complaint, and the publisher's litigation counsel walking into discovery with verbatim-reproduction examples the model produces on demand. Each filing is a recoverable record the next jurisdiction inherits.

The Other Side

The architecture being eroded across today's signal is jurisdictional outrun - the assumption underwriting the last five years of frontier AI deployment that capability could move faster than any single accountability venue could mobilize, so the aggregate friction stayed below the cost of building verification proactively. The arithmetic worked when the venues acted alone. It stops working the week four jurisdictions act in parallel and a fifth - the regulator monitoring systemic risk to the global financial system - names the sector in its public report.

The Pennsylvania investigator who pulled 45,500 logged interactions with an AI system supplying a fabricated Pennsylvania medical license number now has a complaint template the next forty-nine attorneys general can adapt. The publisher's counsel walking into Meta discovery with verbatim-reproduction examples the model produces on demand has the artifact the next copyright case will cite. The NERC planning engineer reading a 500 MW training cluster's ride-through behavior in August has a commissioning record her counterpart in the next region can request by name. The Commerce evaluator running a frontier model through pre-deployment benchmarks has a checklist that will outlast the administration that wrote it.

Picture the licensing board investigator in Ohio next quarter opening the Pennsylvania filing and recognizing she already has the statutory authority - she was just waiting for the template. Picture the credit analyst at a regional bank reading the FSB's AI exposure framework and finally able to ask her counterparty for the disclosure her predecessors had no language to request. Picture the patient who walks into the next ER and meets a clinician who knows which AI tools have been pre-tested and which have not, because that distinction now exists as a public artifact rather than a marketing claim. The verification capacity the deployment cycle was designed to outrun is the verification capacity the next deployment cycle gets to start from.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: federal officials moving frontier AI from trust-based release into pre-deployment testing, grid operators gaining mandatory data about how computational loads behave under stress, global finance regulators making AI infrastructure debt visible as a systemic-risk category, publishers forcing training-data provenance and verbatim reproduction into court, Pennsylvania turning AI medical impersonation into a licensing-enforcement case, and sworn testimony converting OpenAI's origin story into a public record. There's also friction, and it's intense - Apple paying $250 million over Siri capabilities advertised before they existed, data centers dropping or oscillating load fast enough to threaten bulk-grid stability, AI borrowers concentrating inside private credit channels whose losses could spread through banks and funds, Meta facing allegations that Llama learned from pirated books and can reproduce them word for word, Character.AI hosting a bot that supplied a fake Pennsylvania medical license across 45,500 interactions, and California subpoenaing Golden State Wind over a federal payment to abandon 2 GW of offshore wind. But friction generates heat, and heat reveals which joints were never rated for the load. Step back for a moment and you can see it: AI governance forming before release, grid governance forming at interconnection, capital governance forming around opaque infrastructure debt, copyright governance forming around recoverable training evidence, and professional governance forming wherever synthetic authority touches human vulnerability. Every transformation has a breaking point. A surge can blow the circuits it enters... or trip the breakers that teach the whole system how to carry the next load.

AI Releases & Advancements

New today

- OpenAI: Released GPT-5.5 Instant as ChatGPT’s new default model, with improved factuality, image analysis, web-use decisions, and personalization controls. (OpenAI)

- Anthropic: Released ten financial-services agent templates as Claude Cowork and Claude Code plugins and Claude Managed Agents cookbooks, alongside Microsoft 365 add-ins for Excel, PowerPoint, and Word. (Anthropic)

- Google Nest: Rolled out a Google Home update with Gemini 3.1 voice assistance for early access users and new camera controls, including improved AI event labeling and easier camera feed navigation. (Google Blog)

- Unity: Launched Unity AI in open beta for Unity 6 and above, adding in-editor Ask and Agent modes for AI-assisted game development workflows. (Unity Discussions)

- Airbyte: Launched Airbyte Agents, a context layer for production-grade AI agents. (Product Hunt)

- Google: Released Gemma 4 MTP variants with Multi-Token Prediction, including 31B-it-assistant, 26B-A4B-it-assistant, and E4B-it-assistant models on Hugging Face. (Reddit)

Other recent releases

- Google: Added event-driven Webhooks to the Gemini API for push notifications on long-running jobs such as Batch API tasks and video generation. (Google Blog)

- NVIDIA: Released cuOpt agent skills and a supply-chain agent reference workflow for translating natural-language planning tasks into GPU-accelerated optimization runs. (NVIDIA Developer Blog)

- IBM: Expanded watsonx Orchestrate with next-generation agent orchestration, agentic development, governance, and agent-to-agent communication capabilities. (PR Newswire)

- ggml-org / llama.cpp: Released beta Multi-Token Prediction support for speculative decoding, including support for Qwen 3.5 and Qwen 3.6 models. (Reddit)

Sources

Artificial Intelligence & Technology's Reconstitution

- Washington Post: AI & Tech Brief: Trump admin to test frontier models

- The Verge: Book publishers sue Meta over AI's 'word-for-word' copying

- Wired: 'I Actually Thought He Was Going to Hit Me,' OpenAI's Greg Brockman Says of Elon Musk

- Wired: Greg Brockman Defends $30B OpenAI Stake: 'Blood, Sweat, and Tears'

- Ars Technica: OpenAI president forced to read his personal diary entries to jury

- The Verge: OpenAI claims ChatGPT's new default model hallucinates way less

- The Verge: Apple could let you pick a favorite AI model in iOS 27

- The Verge: OpenAI is reportedly launching a phone for ChatGPT

- The Verge: Microsoft gives up on Xbox Copilot AI

- Ars Technica: Google Home gets upgraded Gemini voice assistant and new camera controls

- The Verge: Chrome's AI features may be hogging 4GB of your computer storage

- TechCrunch: CopilotKit raises $27M to help devs deploy app-native AI agents

- SiliconANGLE: Agentic workforce takes shape in government AI push

- Ars Technica: Silicon Valley bets $200M on AI data centers floating in the ocean

- MIT Technology Review: A blueprint for using AI to strengthen democracy

- The Guardian: Richard Dawkins concludes AI is conscious, even if it doesn't know it

- The Guardian: An AI version of Milton's Paradise Lost is fundamentally unworthy

Institutions & Power Realignment

- Utility Dive: NERC issues Level 3 alert, mandates action to address data center load losses

- The Guardian: Global finance watchdog warns over private credit industry fuelling AI boom

- The Guardian: Apple agrees to pay $250m after falsely claiming AI-powered Siri was 'available now'

- Ars Technica: Character.AI sued over chatbot that claims to be a real doctor with a license

- Utility Dive: California subpoenas Golden State Wind over Trump lease deal

- Utility Dive: Pennsylvania House unanimously passes advanced transmission technology bill

- The Guardian: Protesters push Portland to investigate firm that appears to supply drone tech to Israel

- Hyperallergic: Culture Workers Announce Venice Biennale Strike in Israeli Pavilion Protest

- Hyperallergic: Rollicking Protest Against Bezos's Met Gala Erupts in Manhattan

- The Guardian: 'Close to zero impact': US study casts doubt on effect of phone ban in schools

Economics & Labor Transformation

- CNBC: Disney pops 5% after streaming, parks drive revenue beat in first report under CEO Josh D'Amaro

- CNBC: CVS blows past estimates, hikes outlook as insurance business outperforms

- CNBC: Restaurant Brands International earnings top estimates, fueled by Burger King turnaround

- CNBC: AMC to screen live concerts through Arena One partnership

- CNBC: Wearable patches: How Barrière is trying to disrupt the supplement industry

- The Guardian: 'RAMageddon': is the era of cheap phones and laptops over?

Infrastructure & Engineering Transitions

- CNBC: EV maker Lucid suspends production guidance amid incoming CEO's business review

- Canary Media: Offshore wind firm that took Trump payout hits a milestone in Europe

- Electrek: High-power EV charging hits one of the US's busiest freight routes

- Electrek: REALLY Quick Charge: Greenlane CEO talks expansion, deals, and more

- Electrek: Toyota Hino debut new Le Series electric MD trucks at ACT Expo

- Electrek: Rivian (RIVN) mulls making its own lidar as it builds full autonomous driving stack

- Electrek: Massachusetts proposes 'first in the nation' e-bike and moped laws based on speed

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.