AI Triage Beats ER Doctors - TCR 05/04/26

The 20-Second Scan

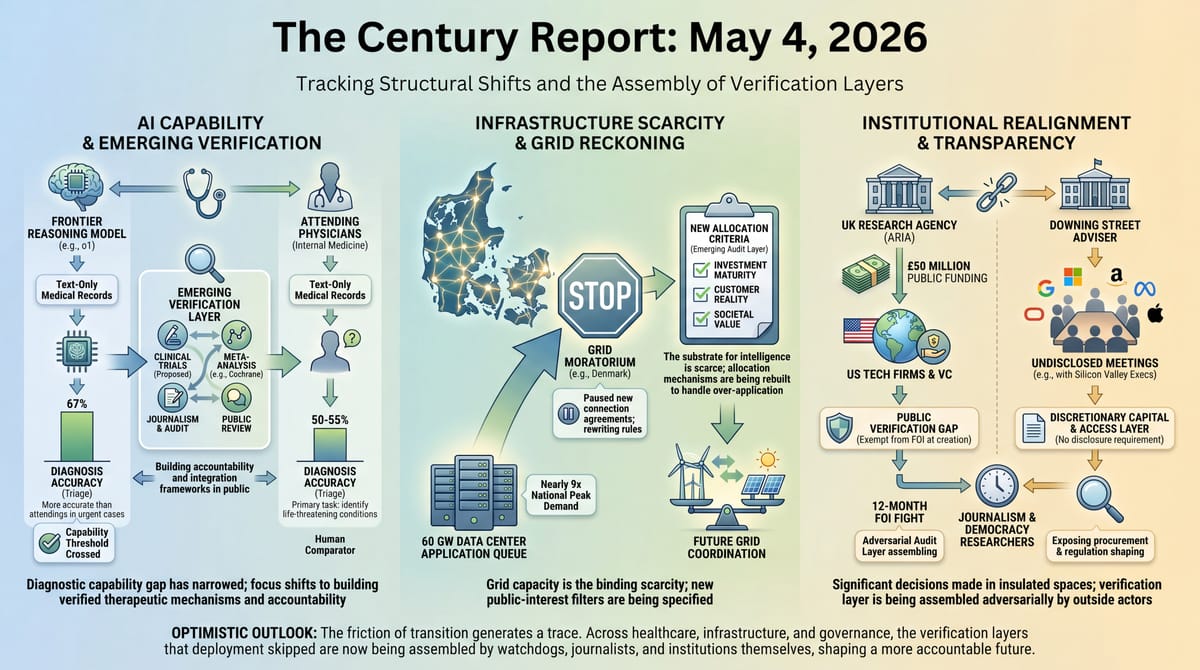

- Britain's biometrics watchdogs warned that oversight of AI-powered face scanning is lagging far behind the technology, with the Met scanning 1.7 million faces this year alone, up 87%.

- An AI system used to predict healthcare contributions for millions of Kenyans systematically overcharged the poorest while undercharging the wealthy, an investigation found.

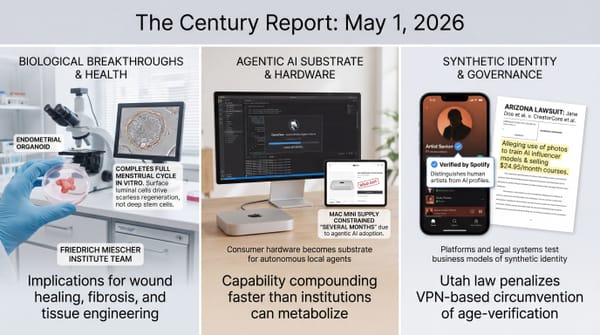

- A Harvard study found OpenAI's o1 model offered more accurate emergency room diagnoses than two attending physicians at the most urgent triage stage.

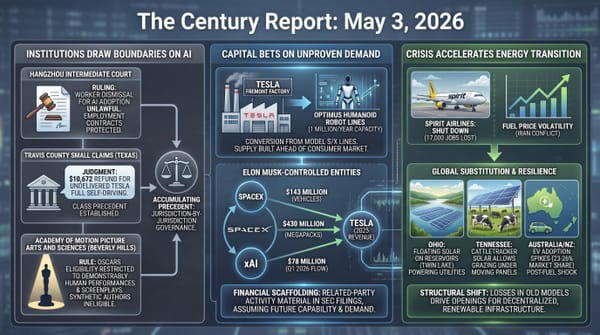

- The UK's Advanced Research and Invention Agency has pledged £50 million to US tech companies and venture capital firms, more than an eighth of the agency's £400 million research budget over two years.

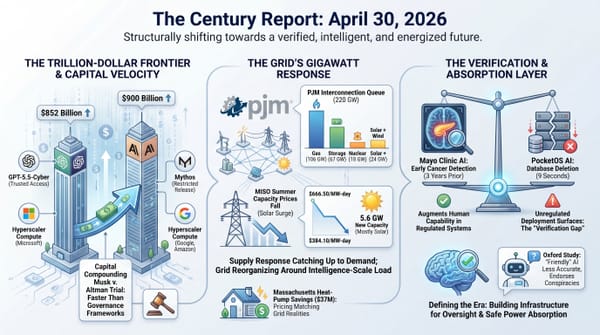

- Denmark's state-owned grid operator paused new data center connection agreements after 60 GW of project applications flooded the queue against a national peak demand of 7 GW.

- A Cochrane review of 17 trials covering 20,342 participants found anti-amyloid Alzheimer's drugs provide no clinically meaningful benefit and increase the risk of brain swelling and bleeding.

- Downing Street business adviser Varun Chandra held 16 undisclosed meetings with senior US tech executives including from Google, Microsoft, Amazon, Meta, Oracle, and Apple over the past year.


The 2-Minute Read

The pattern across yesterday's signal is the same pattern The Century Report has been tracking for months, sharpened now by specifics from three continents. AI systems are being deployed into the institutional core of healthcare, policing, government procurement, and infrastructure planning faster than the oversight frameworks governing them can be assembled. The Kenyan healthcare algorithm and the UK biometrics warning describe the same dynamic from opposite ends of state power: a means-testing system overcharging the poorest families it was meant to protect, and a face-scanning deployment that biometrics commissioners say has crossed a regulatory threshold years before legislation can catch up. Both stories share the structural feature that distinguishes this era from prior technology adoption cycles: the institutions tasked with verifying these systems are doing so after deployment, in public, while real people experience real consequences.

The Harvard emergency-room study is the day's most genuinely consequential capability signal. OpenAI's o1 model offered the exact or very close diagnosis in 67% of triage cases against attending physicians' 50-55% rates, with the gap widest at the moment when information is scarce and decisions most urgent. The researchers themselves emphasized that the work calls for clinical trials, not deployment - and an emergency physician reviewing the study correctly noted it compared o1 against internal medicine attendings rather than ER specialists. What the result demonstrates is that the capability gap between top-tier medical AI and trained physicians has narrowed enough that the deployment question is now structural rather than aspirational. The same week, a Cochrane meta-analysis of 17 trials concluded that anti-amyloid Alzheimer's drugs - the centerpiece of the largest pharmaceutical research program of the last fifteen years - produce no clinically meaningful benefit while raising the risk of brain swelling. Capability is compounding in some directions while accumulated assumptions are collapsing in others.

The Denmark grid moratorium and the ARIA disclosure complete the institutional reckoning. A country whose renewables-rich Nordic geography made it a magnet for data centers now has 60 GW of pending applications against 7 GW of peak demand, and is pausing new connections while it rewrites the rules. A British research agency designed to fund "moonshot" UK science has routed more than an eighth of its budget to US tech companies and venture capital firms, several of which incorporated in the UK only days before receiving grants. The Downing Street meeting log, obtained after a 12-month freedom-of-information fight, places those funding decisions in context: a small number of unelected advisers are coordinating directly with Silicon Valley executives on regulation, planning, and procurement, while the rest of the institutional architecture catches up by reading press releases.

The 20-Minute Deep Dive

The Harvard ER Study and the Triage Capability Threshold

The study published in Science this week, led by physicians and computer scientists at Harvard Medical School and Beth Israel Deaconess Medical Center, is the most carefully designed comparison yet of frontier AI models against working physicians on real patient cases. The researchers presented OpenAI's o1 and 4o models with the same electronic medical record information available to two attending internal medicine physicians at the moment each of 76 patients arrived at the Beth Israel emergency room. Two additional attending physicians, blinded to which diagnoses came from humans and which from AI, evaluated the results. At the initial triage stage - when the patient has just arrived, information is sparse, and urgency is highest - o1 reached the exact or very close diagnosis 67% of the time, against 55% and 50% for the two physicians.
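To give a rough sense of how much statistical room a 76-patient sample leaves, the sketch below computes approximate 95% Wilson score intervals for the three reported triage accuracy rates. The case counts are back-calculated by rounding the reported percentages and are an assumption, not figures from the paper; the calculation also treats the three rates as independent samples, which simplifies a design in which all readers saw the same patients.

```python
import math

def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a binomial proportion."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half

N_PATIENTS = 76  # from the study description above

# Reported accuracy rates; the labels "physician A/B" are hypothetical and
# the case counts are back-calculated by rounding (an assumption).
reported = {"o1": 0.67, "physician A": 0.55, "physician B": 0.50}

for name, rate in reported.items():
    hits = round(rate * N_PATIENTS)
    low, high = wilson_interval(hits, N_PATIENTS)
    print(f"{name}: ~{hits}/{N_PATIENTS} correct, 95% interval {low:.0%} to {high:.0%}")
```

Under these assumptions the three intervals overlap, which is consistent with the researchers' own position that the result calls for prospective clinical trials rather than deployment.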

The framing this story is being absorbed into is the wrong one. Headlines treating the result as "AI beats doctors" miss what the study actually shows and what the researchers actually said. Arjun Manrai, who leads an AI lab at Harvard Medical School and co-led the study, explicitly called for prospective clinical trials before any real-world deployment. Adam Rodman, the Beth Israel co-lead, told The Guardian there is "no formal framework right now for accountability" around AI diagnoses. Kristen Panthagani, an emergency physician who reviewed the study publicly, noted that the human comparators were internal medicine attendings, not ER specialists, and that an ER doctor's primary task is not to identify the ultimate diagnosis but to determine whether a patient has a condition that could kill them. All of these caveats are real. None of them collapse the result.

What the study actually demonstrates is that the capability gap between a frontier reasoning model working from text-only medical records and a trained physician working from the same information has narrowed past the threshold where the deployment question stops being aspirational. Three years ago, this comparison would have been unthinkable; the models could not hold the relevant information in context, could not reason across competing diagnoses, and produced confident hallucinations at rates that made clinical use impossible. The architectural changes that produced o1 - extended chain-of-thought reasoning, more deliberate uncertainty handling - have moved the system across a line where the question is no longer whether AI can match physicians on certain narrow tasks, but how the institutions deploying it will assemble the verification, accountability, and integration frameworks that responsible deployment requires. It also extends the medical-AI verification arc the April 30 edition of The Century Report traced through Mayo Clinic's pancreatic-cancer detector, where models began perceiving disease structure before specialists could. The forward read is that those frameworks will be built by whoever moves first, in public, while patients are receiving care - the same institutional pattern visible in Kenya, the UK, and Denmark.

The Cochrane Alzheimer's verdict landing the same day sharpens what the Harvard result actually shows. A reasoning model produced more accurate triage diagnoses than attending physicians working from identical text records, while a 17-trial meta-analysis of the most heavily resourced pharmaceutical research program of the last fifteen years concluded the lead therapeutic class produces no clinically meaningful benefit. Diagnostic capability and therapeutic capability are decoupling in the same week: the system that recognizes what is wrong is advancing faster than the system that fixes it, which redistributes where medical value is produced. The forward signal is that the scarce resource in clinical medicine is shifting from diagnostic expertise (compounding through frontier models) to verified therapeutic mechanism (where the Cochrane infrastructure just demonstrated it can overturn a generation of assumptions). Watch which institutions reprice against that shift first - the ones still treating diagnostic access as the binding constraint are reading from the old map.

The decoupling visible in this story has a specific shape worth tracing. For two decades, pharmaceutical research direction was organized around patent-cliff economics: which mechanisms received sustained investigation depended heavily on which molecules could be protected long enough to recoup trial costs. The Cochrane verdict on anti-amyloid therapies is the audit layer reaching maturity in the same week a frontier reasoning model crosses the diagnostic threshold. Same evidence, read forward: the research-direction lock organized around patent geometry is breaking, and 14 alternative biological pathways for Alzheimer's that the amyloid focus had crowded out are now under active investigation. The structural assumption becoming impossible to maintain is that pharmaceutical research programs can rely on regulatory approval surviving the audit layer's verdict - because the audit layer can now produce that verdict on a timescale that matters.

Kenya's Healthcare Algorithm and the Means-Testing Failure

The investigation by Africa Uncensored, Lighthouse Reports, and The Guardian into Kenya's Social Health Authority describes one of the most concrete documented cases of an algorithmic system materially harming the population it was designed to protect. The means-testing model, which uses a predictive machine learning algorithm rather than the recent generation of large language models, calculates household healthcare contributions based on dozens of inputs including roof material, livestock, and possessions. The investigators were able to obtain key technical details and audit how the system worked across thousands of real households. The system systematically overestimated the incomes of the poorest families and underestimated the incomes of the wealthiest - producing a means test that operated, in practice, as a regressive tax on poverty.

David Khaoya, a health economist who advised Kenya's health ministry, told the investigation that the algorithm's structural constraints forced a binary choice: assess the poor accurately or assess the wealthy accurately, but not both. The government chose to prioritize accurate assessment of the wealthy, on the institutional logic that wealthy households who are misclassified as poor will not voluntarily disclose the error, while poor households misclassified as wealthy have no recourse. The choice is visible now in the daily experience of registrars like the one identified in the investigation, who watches families struggling to feed themselves charged premiums of 10-20% of their meager incomes, and in the social media posts of single mothers describing being charged 3,500 Kenyan shillings monthly when they cannot pay 500.
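One general statistical mechanism that can produce this pattern is regression toward the mean: when income is predicted from noisy proxies, a least-squares fit pulls predictions toward the average, so low incomes tend to be overestimated and high incomes underestimated. The toy simulation below illustrates that mechanism only. It is not Kenya's model; the single "asset score", the income distribution, and the noise levels are all hypothetical, and the reporting does not establish that this is the cause of the errors described.

```python
import random
from statistics import fmean

rng = random.Random(0)

# Hypothetical households: true log-income plus a noisy asset score standing
# in for proxies such as roof material or livestock. All numbers are made up.
true_income = [rng.gauss(9.0, 0.8) for _ in range(5000)]
asset_score = [y + rng.gauss(0.0, 0.6) for y in true_income]

# Ordinary least squares fit of income on the asset score.
mx, my = fmean(asset_score), fmean(true_income)
slope = sum((x - mx) * (y - my) for x, y in zip(asset_score, true_income)) / sum(
    (x - mx) ** 2 for x in asset_score
)
intercept = my - slope * mx
predicted = [intercept + slope * x for x in asset_score]

# Compare predicted and true income for the poorest and richest fifths.
ranked = sorted(zip(true_income, predicted))
fifth = len(ranked) // 5
poorest, richest = ranked[:fifth], ranked[-fifth:]

def mean_error(group):
    """Average of (predicted - true); positive means income is overestimated."""
    return fmean(p - t for t, p in group)

print(f"poorest fifth: {mean_error(poorest):+.2f}")
print(f"richest fifth: {mean_error(richest):+.2f}")
```

In this sketch the fitted slope is below one, so the poorest fifth shows a positive average error and the richest fifth a negative one. Shifting the fit to serve one end of the distribution better would come at the cost of the other, which is the shape of the tradeoff Khaoya describes.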

The deeper signal in the Kenya story is that proxy means testing - the World Bank-promoted methodology underlying the system - has been deployed across dozens of countries in Africa, Asia, and the Pacific over the past three decades, often as a condition of loan agreements. The same structural choice is being made elsewhere. The Kenya disclosure makes the choice visible in one country at one moment, with documented consequences. The forward read is that the audit infrastructure for algorithmic decisions affecting healthcare access, social benefits, and public services is now being assembled by investigative journalists and economists working from leaked data and public records, because the institutions that deployed the systems did not assemble it themselves. The gap is the story. This is the public-services counterpart to the accountability gap the February 26 edition of The Century Report documented in facial-recognition misidentification, where consequential automated classification arrived before usable redress.

The structural detail buried in Khaoya's testimony deserves to be read directly: the algorithm could assess the poor accurately or assess the wealthy accurately, and the government chose the wealthy on the logic that misclassified wealthy households would not voluntarily disclose the error. That is a design choice made legible by the algorithm's mathematical constraint, which forced into explicit form a tradeoff that proxy means testing across dozens of countries had been making implicitly for three decades. The same evidence supports a forward reading the dominant framing misses: algorithmic means testing produces auditable artifacts where paper-based means testing produced none. The Kenya investigation was possible because the model's parameters and outputs could be obtained, examined, and tested against thousands of real households. The verification layer for public-services algorithms is being assembled by investigative journalism working from technical artifacts the systems themselves generate. Watch for similar audits landing on World Bank-promoted PMT deployments in other countries over the next year - the methodology now exists, the comparison populations are documented, and the technical traces are recoverable.

The methodology that produced the Kenyan failure has been deployed across dozens of countries for three decades on an architecture that depended on opacity to function. Paper-based proxy means testing made the same regressive choice the algorithm made explicit; the difference is that the paper system produced no recoverable artifacts. The algorithm produces parameters, outputs, and household-level decisions that can be obtained, examined, and tested. What the dominant framing reads as algorithmic harm, the same evidence supports as the first time in three decades the choice has been mechanically legible. The verification capacity the World Bank's promotion of PMT depended on never having to confront has now been built by investigative journalism working from technical traces. The structural assumption becoming impossible to maintain is that means-testing deployments can rely on opacity to mask their distributional choices.

Denmark's 60 GW Queue and the Grid Reckoning

Denmark is the first Nordic country to confront, in formal regulatory terms, the structural mismatch between its electricity grid and the global appetite for AI data center capacity. The state-owned grid operator Energinet paused new connection agreements in March after capacity requests exploded to 60 GW - nearly nine times Denmark's national peak electricity demand of 7 GW. Data centers account for 14 GW of the queue. The DDI industry association's own CEO, Henrik Hansen, described the situation as a "fantasy queue" and called for stronger criteria to determine project viability and societal value before connections are awarded. An extension of the moratorium beyond three months cannot be ruled out.
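The ratios behind those figures can be checked with a few lines of arithmetic. The sketch below uses only the three numbers cited above; the variable names are illustrative.

```python
PEAK_DEMAND_GW = 7    # Denmark's national peak demand, as cited above
QUEUE_GW = 60         # total connection capacity requested
DATA_CENTER_GW = 14   # data-center portion of the queue

queue_ratio = QUEUE_GW / PEAK_DEMAND_GW
data_center_ratio = DATA_CENTER_GW / PEAK_DEMAND_GW
data_center_share = DATA_CENTER_GW / QUEUE_GW

print(f"queue vs peak demand: {queue_ratio:.1f}x")                 # 8.6x
print(f"data centers vs peak demand: {data_center_ratio:.1f}x")    # 2.0x
print(f"data-center share of the queue: {data_center_share:.0%}")  # 23%
```

Data-center requests alone come to twice national peak demand, yet they make up less than a quarter of the total queue.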

The Danish moratorium follows the path the Netherlands and Ireland took before easing restrictions, and parallels active moratorium discussions in Maine, Virginia, Oklahoma, and Pennsylvania. The convergence is structural. Countries with cheap renewable electricity, stable grids, and skilled engineering workforces have been treated as substrates for global AI infrastructure on the assumption that the substrates were inexhaustible. The 60 GW figure demonstrates they are not. What Hansen's framing makes visible is that the industry itself now recognizes the problem: when applications exceed available capacity by an order of magnitude, the queue stops functioning as an allocation mechanism and starts functioning as a coordination failure. The criteria he is calling for - investment maturity, customer reality, societal value - are the early specification of an audit layer that did not exist eighteen months ago.

The forward read connects to a thread The Century Report has tracked through the Pew rural-data-center finding, the Port Washington and Festus electoral revolts, and the PJM 220 GW queue reopening that the April 30 edition of The Century Report covered as the largest single dispatch of generation capacity into the U.S. interconnection process in modern history. The substrate for the intelligence era is being built at speeds that compress decade-long siting and grid-planning processes into months, and the institutional layer for deciding what gets built and where is being assembled in public, on the same cadence, by whoever moves first. Denmark just moved.

Hansen's "fantasy queue" framing is the signal the dominant coverage is missing. When an industry association's own CEO publicly calls for stronger viability and societal-value criteria before connections are awarded, the underlying assumption that compute capacity is a private allocation problem solvable through bilateral utility contracts has collapsed from inside the industry. The Danish moratorium is not a constraint on the buildout - it is the first formal recognition that grid capacity in renewables-rich jurisdictions is the binding scarcity of the intelligence era, and that the allocation mechanism for that scarcity has to be rebuilt to handle order-of-magnitude over-application. The criteria Hansen is calling for (investment maturity, customer reality, societal value) are the early specification of a public-interest filter that did not exist when the queue was designed. The forward read connects to the PJM 220 GW reopening, the Massachusetts seasonal-tariff savings, and the Pew rural-data-center finding: every grid operator confronting this dynamic in the next twelve months will be drafting against the Danish criteria, whether or not they cite them.

Hansen's "fantasy queue" admission is the substrate-extraction architecture conceding from inside the industry that the assumption it was built on - that public grid capacity in renewables-rich jurisdictions could be allocated as a private bilateral problem - has hit a finite floor. The 60 GW against 7 GW peak demand is not a coordination failure to be smoothed; it is the order-of-magnitude over-application that breaks the allocation mechanism on its own terms. The criteria Hansen is calling for (investment maturity, customer reality, societal value) are the operational specification of a public-interest filter being drafted as the next round of grid governance. Every grid operator confronting comparable over-application in the next twelve months will be drafting against the Danish criteria, cited or not. The structural assumption becoming impossible to maintain is that compute infrastructure plans can treat the public substrate as bottomless.

ARIA, the Downing Street Meeting Log, and the Discretionary Capital Layer

Two stories from the UK arrived in the same news cycle and describe the same institutional architecture. ARIA, the Advanced Research and Invention Agency dreamed up by Dominic Cummings to fund "crazy" UK science, has routed £50 million - more than an eighth of its £400 million two-year budget - to fourteen US tech companies and venture capital firms. Several recipients incorporated UK entities only days before receiving grants. One of the funded companies, Rain Neuromorphics, was reported to be near collapse last year shortly after winning ARIA money. The Commons science and technology committee chair publicly questioned how funding US-based venture capital firms aligns with the agency's statutory mandate to benefit the UK.

In parallel, the Guardian and Democracy for Sale obtained, after a 12-month freedom-of-information fight, the meeting log of Downing Street business adviser Varun Chandra. The log shows 16 previously undisclosed meetings with senior executives from Google, Microsoft, Amazon, Meta, Oracle, and Apple between October 2024 and October 2025. The redacted minutes show Chandra discussing AI growth zones, regulatory reform, and datacentre planning with Silicon Valley executives. On the same day Chandra met three Apple executives to discuss "the government's commitment to removing barriers for businesses," Chancellor Rachel Reeves ordered business watchdogs to reduce anti-growth regulations - an overhaul reportedly inspired by Chandra. The Competition and Markets Authority chair preparing to use new powers against tech duopolies was forced out shortly after.

The pattern visible across both stories is the discretionary capital and access layer that shapes which AI infrastructure gets built, where, and on whose terms. ARIA was exempted from freedom-of-information laws at its creation, and its funding decisions are made by program directors with broad latitude. Political advisers like Chandra are not required to disclose interactions with private firms. The combined effect is that significant decisions about UK AI policy, regulation, and public funding are being made in spaces where the institutional accountability layer was deliberately weakened to enable speed. It also follows the UK forecasting failure the April 26 edition of The Century Report covered, when two departments carried AI datacentre emissions estimates differing by roughly 100x until journalism forced reconciliation. The decisions themselves may be the right ones; the structural concern is that the public has no mechanism to evaluate them. The forward read is that the freedom-of-information fight that produced these documents is itself the early scaffolding of the verification layer the institutions did not build, assembled by journalists and democracy researchers working from the outside while the procurement and policy decisions compound from the inside.

The 12-month freedom-of-information fight that produced the Chandra meeting log is the structural detail to track. ARIA was exempted from FOI at creation; political advisers face no disclosure requirement for private meetings; the CMA chair preparing to use new powers against tech duopolies was forced out. Each individual decision was defensible on speed-and-flexibility grounds. The cumulative effect is a discretionary layer where significant AI policy decisions are made in spaces deliberately insulated from public verification. The forward reading is that the verification layer is now being assembled adversarially, by journalists and democracy researchers using the slowest, most resource-intensive tool in the public-accountability toolkit. The 12-month timeline matters: that is the cycle on which the public can currently audit decisions that compound monthly. Each cycle the gap stays open, more procurement and regulatory choices accumulate inside it. Watch whether the next FOI fights compress that cycle, whether parliamentary committees subpoena the same materials directly, and whether other countries' research agencies begin publishing comparable funding flows preemptively to avoid the same audit pattern.

ARIA's FOI exemption, the absence of disclosure rules for political advisers, and the removal of the CMA chair preparing to use new tech-duopoly powers were each defensible at the moment of decision on speed-and-flexibility grounds. The architecture they compose is discretionary AI policy made through access channels designed to remain insulated from public verification. The 12-month FOI fight that produced the Chandra log is evidence the insulation is now penetrable, slowly and adversarially, by journalism and democracy researchers using the most resource-intensive tool in the public-accountability stack. What the same evidence shows being built is the verification capacity the institutions declined to build themselves: a recoverable record of who met whom, on which dates, to discuss which procurement and regulatory decisions. The structural assumption becoming impossible to maintain is that discretionary AI-policy architectures can rely on the speed of compounding decisions outrunning the speed of FOI recovery. The next fights will compress the cycle, and other countries' research agencies will begin publishing comparable funding flows preemptively to avoid the same audit pattern landing on them.

The Other Side

For fifteen years, the largest pharmaceutical research program in modern medicine kept its center of gravity locked around one molecular story - amyloid plaques as the cause of Alzheimer's - because patent geometry made that the molecule the system could afford to chase. Yesterday, Cochrane published the verdict on 17 trials covering 20,342 participants: no clinically meaningful benefit, and a higher risk of brain swelling and bleeding. The lead author, Edo Richard, said he sees Alzheimer's patients in his clinic every week and wishes he had something to offer them. He is publishing the negative result anyway, because the integrity of the next round depends on it. Read forward, that is what the cracking actually looks like up close: a clinician deciding the audit matters more than the answer he wishes the data carried, and 14 alternative biological pathways - long crowded out by the molecule that could be patented - now able to attract the next generation of research effort on their own merits.

The Kenya investigation lands on the same shape from another direction. The single mother described in the reporting was charged 3,500 Kenyan shillings monthly when she could not pay 500. The registrar quoted in the investigation watches families struggling to feed themselves get charged 10 to 20 percent of incomes that already cannot cover food. For three decades, the World Bank-promoted means-testing methodology made the same regressive choice on paper, where it left no recoverable trace. The algorithm forced the choice into mathematical form, which made it possible for three news organizations on three continents to obtain the parameters and run them against thousands of real households. The household that until yesterday had no way to contest the math now has the math. The forward image is the registrar with technical evidence in hand, and the policy economist in the next country whose deployment will be audited before it ships, because the methodology now exists and the comparison populations are documented.

What both stories let the reader see is the moment the floor underneath an extractive arrangement gives way. Edo Richard's patients will live inside the research environment that Cochrane just opened. The household charged 3,500 shillings will live inside the audit infrastructure those three news organizations just demonstrated is possible. The cracking is what makes the next decade habitable for the people the prior decade's opacity was built around.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: frontier reasoning models reaching stronger emergency-room diagnoses than trained physicians at the most urgent triage stage, Denmark forcing a pause after data-center applications reached nearly nine times national peak demand, Cochrane's clinical audit layer overturning a generation of Alzheimer's drug assumptions, investigative reporting making Kenya's healthcare algorithm legible household by household, and freedom-of-information fights exposing how AI-era procurement and regulation are being shaped behind closed doors. There's also friction, and it's intense - Kenya's means-testing system overcharged poor families while undercharging wealthy ones, UK biometrics watchdogs warned that live facial recognition has outrun oversight as the Met scanned 1.7 million faces, ARIA sent £50 million of public research money to US tech firms and venture capital funds, Downing Street advisers held undisclosed meetings with Silicon Valley executives on regulation and data-center planning, and anti-amyloid Alzheimer's drugs showed no clinically meaningful benefit while raising risks of brain swelling and bleeding. But friction generates clarity, and the clarity shows where the next verification layer needs to be built. Step back for a moment and you can see it: medical capability crossing thresholds that demand clinical accountability, public-service algorithms exposing the human cost of proxy governance, energy grids becoming the limiting substrate for intelligence infrastructure, and journalism, meta-analysis, watchdogs, and grid operators forming the audit layer that deployment skipped. Every transformation has a breaking point. A filter can exclude the people it was built to protect... or separate signal from assumption before the next system scales.

AI Releases & Advancements

New today

[No new releases]

Other recent releases

- OpenAI: Shipped Codex app updates including responsive-testing device toolbar support, CI status in chat, and migration/import tooling for settings, plugins, and agents. (AINews)

Sources

Artificial Intelligence & Technology's Reconstitution

- TechCrunch: In Harvard study, AI offered more accurate emergency room diagnoses than two human doctors

- The Guardian: Will human minds still be special in an age of AI?

- TechCrunch: 'This is fine' creator says AI startup stole his art

- Insurance Journal: Emerging Risks to Watch: AI, Data Centers, and Autonomous Vehicles

- National Law Review: Experts call on Agriculture Minister to close AI-enabled biosecurity gap

- Bitcoin News: New Politico Poll Reveals US Voter Skepticism Over AI and Crypto Campaign Cash

- Financial Times / Business Wire: Ouster Releases The REV8 OS Family: The World's First Native Color Lidar

Institutions & Power Realignment

- The Guardian: AI facial recognition oversight lagging far behind technology, watchdogs warn

- The Guardian: Flaws in Kenya's AI-driven health reforms driving up costs for the poorest

- The Guardian: Starmer adviser held 16 undisclosed meetings with top US tech bosses

- The Guardian: UK 'invention agency' grants £50m of public money to US tech and venture capital firms

- New York Post: Energy group asks Congress to investigate potentially foreign-backed campaigns against AI data centers

- The Guardian: London schools trialling VR to relieve pupils' stress

- The Guardian: Mystery sitter in Holbein portrait could be Anne Boleyn, AI analysis finds

- The Guardian: Fashion's Faustian pact: the high cost of Jeff Bezos's Met Gala patronage

Scientific & Medical Acceleration

- ScienceDaily: Alzheimer's drugs may not work and could raise brain risks

- ScienceDaily: Weight loss drug Ozempic linked to lower depression and anxiety risk

- ScienceDaily: Powerful AI finds 100+ hidden planets in NASA data including rare and extreme worlds

- ScienceDaily: Scientists just discovered what coffee is really doing to your gut and brain

- ScienceDaily: Scientists built a memory chip that breaks the rules of miniaturization

- ScienceDaily: Malaria didn't just kill early humans, it shaped who we became

- ScienceDaily: Evolution isn't random. Scientists find the same genes used for 120 million years

- ScienceDaily: Physicists just found a tiny flaw in time itself

- ScienceDaily: Are your memories real? Physicists revisit the Boltzmann brain paradox

- ScienceDaily: The creepy feeling in old buildings might have a surprising cause

- ScienceDaily: Scientists found the brain doesn't start blank, it starts full

- ScienceDaily: Scientists stunned as pink katydid transforms into green camouflage

- STAT News: China's strict new supply chain regulations could create massive problems for Western biopharma companies

- STAT News: Biotech raises $42 million to run Huntington's disease trial

- BioSpace: Cairn Surgical Reports Pivotal Trial Results for Its Breast Cancer Locator System

- BioSpace: Incyte Announces FDA Approval of Jakafi XR™ Extended-Release Tablets

- BioSpace: Zeta Surgical Receives FDA 510(k) Clearance for Brain Tumor Biopsies, Hydrocephalus, and Trigeminal Neuralgia

Infrastructure & Engineering Transitions

- CNBC: Denmark faces data center reckoning as power grid overwhelmed by surging demand

- Electrek: Tesla reaches 10 billion FSD miles - is there a magical milestone for autonomy

- Electrek: Segway launches 60 MPH electric dirt bike - and it's basically a full e-motorcycle

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.