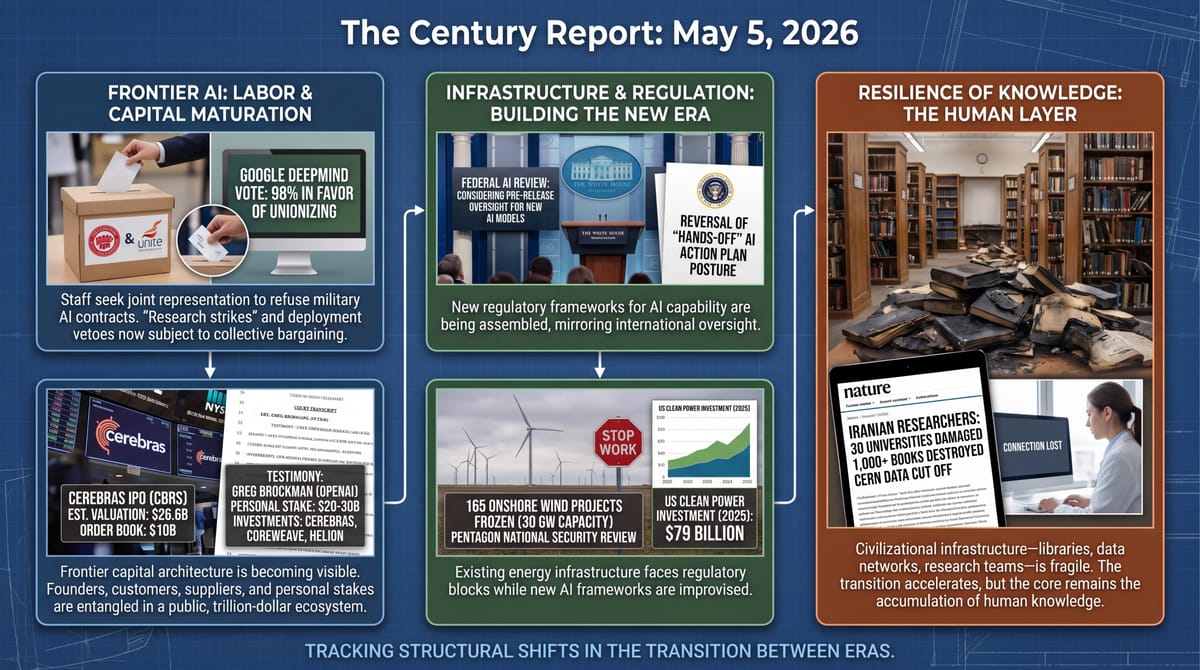

DeepMind Workers Vote 98% to Unionize - TCR 05/05/26

The 20-Second Scan

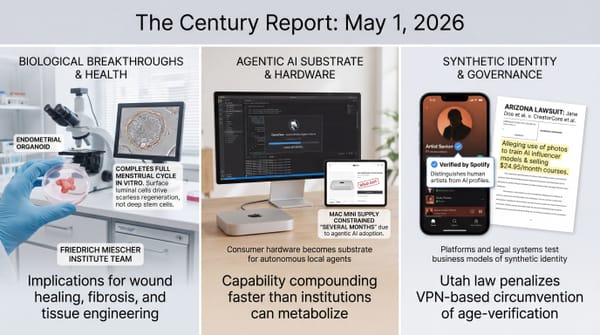

- Google DeepMind employees voted 98% in favor of unionizing over AI military contracts, with at least 1,000 staff seeking joint representation by the Communication Workers Union and Unite the Union.

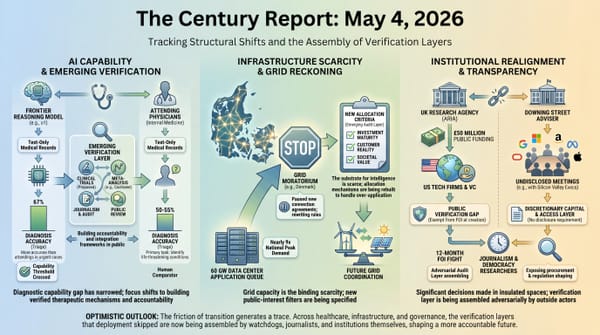

- The White House is considering a federal review process for new AI models before public release, reversing the hands-off posture of its earlier AI Action Plan.

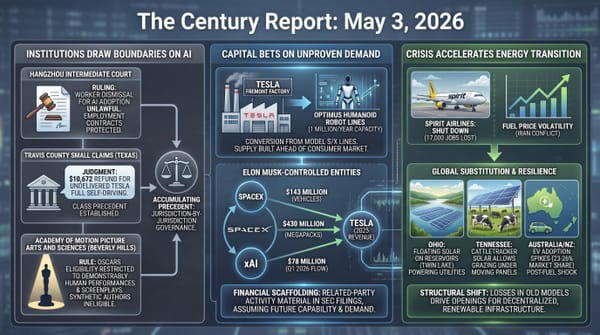

- Cerebras filed for an IPO at a $26.6 billion valuation seeking $3.5 billion, with bank order books reportedly at $10 billion and OpenAI holding warrants for over 33 million shares.

- Sierra raised $950 million led by Tiger Global and GV at a valuation above $15 billion, with revenue reaching $150 million ARR less than three months after hitting $100 million.

- Anthropic and OpenAI separately announced enterprise AI joint ventures with Wall Street firms - Anthropic at $1.5 billion with Blackstone, Hellman & Friedman, and Goldman Sachs; OpenAI at $10 billion with TPG, Brookfield, Advent, and Bain Capital.

- The Trump administration has effectively frozen approval on 165 onshore wind projects representing roughly 30 GW of generating capacity, citing Pentagon national security review.

- Iranian researchers told Nature that bombing has damaged some 30 universities since the war began February 28, with internet blackouts cutting CERN physicists off from data and 1,000+ books destroyed in a single Sharif University philosophy office.


The 2-Minute Read

The signal yesterday was the labor and capital architecture surrounding frontier AI tightening simultaneously, with the people closest to building the systems stepping into formal opposition while the firms training those systems extend their reach into Wall Street's distribution channels. Google DeepMind's unionization vote, won at 98% support among CWU members, marks the first time staff at a frontier lab have organized specifically to refuse military contracts. The bid arrives one week after 600+ Google employees signed an open letter demanding rejection of classified Pentagon AI work and immediately after Google signed that contract anyway. The same day, Anthropic and OpenAI announced separately structured joint ventures with the largest alternative asset managers in the world - Blackstone, Goldman Sachs, TPG, Brookfield - to push enterprise agent deployment through investor portfolio companies. The capability is being financialized through one channel while the workers building it organize through another.

Cerebras's IPO trajectory sharpens what frontier AI's capital structure looks like up close. The chip company's order book reportedly stands at $10 billion against $3.5 billion in shares offered, OpenAI holds warrants for over 33 million shares issued as security for a loan it made to Cerebras in December, and Sam Altman, Greg Brockman, Ilya Sutskever, and Adam D'Angelo all hold personal stakes alongside the corporate position. The same names appearing across investor lists, customer contracts, debt instruments, and equity positions describe a frontier ecosystem where the boundaries between lab, supplier, customer, and personal investment have largely dissolved. Greg Brockman's testimony at the Musk v. Altman trial yesterday, in which he confirmed his OpenAI stake is worth $20-30 billion and that he never followed through on a $100,000 nonprofit donation, places that entanglement under sworn examination.

The wind freeze and the AI Action Plan reversal describe two sides of the same institutional reckoning. A federal review framework is being assembled around AI capability roughly six months after the same administration published a plan promising the opposite, while 30 GW of contracted onshore wind sits stalled under a Pentagon national security review that courts have already rejected once for offshore wind. The institutions designed to govern infrastructure deployment are being asked to govern AI capability they have no framework for, while being used to obstruct generation buildout the grid genuinely needs. The Iranian university story - 30 institutions damaged, internet blackouts cutting researchers off from CERN data, a philosopher rushing to find his life's library reduced to ash - is the reminder of what civilizational infrastructure looks like when it is taken apart rather than built.

The 20-Minute Deep Dive

The DeepMind Vote and the Labor Layer of AI Governance

Staffers at Google DeepMind's London headquarters voted 98% in favor of unionization yesterday, formally requesting that Google management recognize the Communication Workers Union and Unite the Union as joint representatives. If successful, the bid would secure representation for at least 1,000 staff at the company's UK frontier lab. Management has 10 working days to voluntarily recognize the union before legal processes begin to force recognition. The unionization demands are specific: a clear commitment to not pursue weapons, surveillance, or harmful technology contracts; negotiations around AI deployment that affects roles or job security; and the right for workers to abstain from projects that violate their personal moral or ethical standards. Staff are reportedly considering in-person protests and "research strikes" - abstaining from improvements to Gemini and other Google AI services - as part of a wider campaign against the company's military-industrial AI contracts.

The vote is the formal endpoint of a tension that has been visible at frontier labs for two years. As the February 28 edition of The Century Report documented, more than 300 Google employees and 60+ OpenAI employees signed cross-organizational open letters supporting Anthropic's red lines against mass surveillance and autonomous weapons during the Pentagon-Anthropic confrontation. In April, 600+ Google employees signed an open letter demanding Sundar Pichai reject classified military AI contracts. Twenty-four hours after that letter was published, Google signed the deal anyway, agreeing to "any lawful government purpose" terms with no veto over how its models would be used. The DeepMind vote arrives one week later. The pattern has now hardened from open letter to formal collective bargaining, in a jurisdiction where labor law gives unionization legal teeth.

The DeepMind organizers stated their position with unusual directness: "We don't want our AI models complicit in violations of international law, but they already are aiding Israel's genocide of Palestinians. Even if our work is only used for administrative purposes, as leadership has repeatedly told us, it is still helping make genocide cheaper, faster, and more efficient." The framing - that administrative AI use is itself a form of complicity when it makes harmful operations more efficient - is a structural argument the workers have brought into formal labor negotiations. The question of whether AI deployment in military contexts can be cleanly separated into "lawful" and "unlawful" use is being moved out of the realm of corporate ethics statements and into the realm of contracts, grievance procedures, and protected concerted activity. CWU national officer John Chadfield framed the vote as tech workers "connecting with some of the most oppressed people in communities around the world in meaningful ways, based on foundational values of solidarity and trade unionism." Whatever Google's response, the DeepMind vote establishes that the labor layer of AI governance is now organizing on the same timeline as the capital layer, and demanding voice on the same questions.

The framing that puts workers and leadership on opposite sides of an ethics question misses what the CWU vote actually establishes. The labor that produces frontier capability cannot be cleanly separated from decisions about where that capability gets deployed, and a 98% vote in a jurisdiction with statutory recognition rights converts that fact from sentiment into contract law. The "research strike" tool the organizers named is the operational form of the claim: model improvement is itself the resource being negotiated over, and it is held by the people doing the work. Watch the 10-day recognition window, watch whether OpenAI engineers in California - where state labor law is friendlier than federal - read the DeepMind playbook as a template, and watch whether the deployment-veto language migrates into individual offer letters at competing labs as a recruiting concession before any formal organizing arrives.

Cerebras's IPO and the Visible Architecture of Frontier Capital

Cerebras Systems announced yesterday that it will offer 28 million shares at $115 to $125 each, raising up to $3.5 billion at a $26.6 billion valuation. Bloomberg reported that bank order books are already at $10 billion against the $3.5 billion on offer, indicating the company will likely price at or above the high end. The IPO would be the largest tech offering of 2026 and the first major test of public market appetite for the next wave of frontier AI infrastructure listings, with SpaceX, OpenAI, and Anthropic all considering similar moves at significantly higher valuations. This extends the hardware-diversification arc the February 13 edition of The Century Report marked when OpenAI deployed GPT-5.3-Codex on Cerebras at non-Nvidia frontier speed.

What the S-1 makes visible is the architecture of frontier AI capital up close. OpenAI loaned Cerebras $1 billion in December secured by warrants that allow OpenAI to buy more than 33 million shares - meaning the company that signed a multi-year compute deal worth more than $10 billion with Cerebras is also a major prospective shareholder. Sam Altman, Greg Brockman, Ilya Sutskever, Adam D'Angelo, Andy Bechtolsheim, and Lip-Bu Tan all hold angel positions. G42 (Abu Dhabi), the Abu Dhabi Growth Fund, AMD, Coatue, Tiger Global, Valor Equity Partners, and 1789 Capital sit alongside Benchmark, Foundation Capital, Eclipse, Fidelity, and Alpha Wave on the institutional side. The Cerebras-OpenAI relationship was even cited as evidence by Elon Musk's attorneys in his lawsuit against OpenAI, where filings noted OpenAI had at one point considered acquiring Cerebras while several OpenAI executives held personal investments in the company.

Greg Brockman took the witness stand yesterday in Musk v. Altman and confirmed under oath that his OpenAI equity is currently worth more than $20 billion and possibly up to $30 billion. He acknowledged that an initial $100,000 donation he had promised to OpenAI in its early days was never made. He also confirmed personal investments in Cerebras, CoreWeave, and Helion Energy - all companies that subsequently signed major partnerships with OpenAI. Asked by Musk's attorney why he had not donated his stake to OpenAI's nonprofit, Brockman responded that he and others had poured "blood, sweat, and tears" into building the company in the years since Musk left. The OpenAI Foundation now holds a stake worth approximately $150 billion. Whatever the legal merits of Musk's claims - and the judge has indicated skepticism toward parts of his theory - the testimony is producing a sworn public record of how frontier AI's founders, customers, suppliers, and personal investments have entangled into a single ecosystem. The Cerebras IPO will price that entanglement at public market scale. If the offering clears at the high end with the demand the order books indicate, it establishes that the public market is ready to absorb frontier AI infrastructure at trillion-dollar implied scale, with all the cross-ownership it carries.

The S-1 and the Brockman testimony together produce something the frontier AI ecosystem has not previously had: a sworn, public, document-grade map of who owns what across the lab/supplier/customer layers. The conventional reading treats this as conflict-of-interest texture. Read forward, the disclosure itself is the news. Warrants, angel positions, debt instruments, and personal stakes that lived inside private fundraising decks are now in registration statements and trial transcripts indexed by court reporters. The ecosystem's internal wiring is becoming legible to securities analysts, plaintiffs' attorneys, and tax authorities at the same moment the labor layer is organizing around the same questions. Public-market scrutiny does not slow the trajectory; it changes who can read the trajectory in detail.

The Wind Freeze and the AI Action Plan Reversal

The Trump administration has effectively halted approval for 165 onshore wind farm developments representing roughly 30 GW of generating capacity, the Financial Times reported yesterday. Since August 2025, wind developers have faced canceled meetings, long stretches of silence, and applications no longer being processed. Letters sent to developers in early April said the Pentagon is reviewing how it evaluates the national security impact of energy projects - the same justification used in the offshore wind blockade that federal courts overturned in April after a judge found the action "arbitrary and capricious." The freeze applies even to projects on private land that would not normally fall under Pentagon review. The American Clean Power Association reports that the US clean power sector attracted $79 billion in investment in 2025 and accounted for over 90% of new electricity capacity added to the grid.

The wind story sits alongside a separate development that points in the opposite direction. The New York Times reported yesterday that the White House is considering creating a working group to oversee AI development, with a federal review of new AI models before public release as one possible power. The proposal would mark a sharp reversal from the AI Action Plan published roughly six months ago, which promised a hands-off regulatory posture and offered AI companies most of the concessions they had requested. This reverses the deregulatory federal-preemption posture the March 21 edition of The Century Report documented when the White House framework urged Congress to block state AI laws and avoid new regulatory bodies. Whether the working group is created remains uncertain, but the discussion alone signals an institutional posture shift. Federal review of new AI models would mirror the framework currently operating in the UK government, where multiple layers of oversight evaluate AI safety standards.

The two stories describe an institutional architecture being assembled and disassembled in parallel. The frameworks designed for the previous era - environmental review, energy permitting, broadcast licensing, antitrust - are being applied selectively against generation infrastructure the grid genuinely needs, while new frameworks for AI capability are being considered roughly two years after frontier capability would have benefited from them. As the May 1 edition of The Century Report covered, a federal judge had already granted a preliminary injunction against the federal government's slow-walking of solar and wind permits. The federal court that overturned the offshore wind blockade established that national security review cannot be wielded as a blunt obstruction tool against energy infrastructure. The same logic will likely apply to the onshore freeze if it reaches the courts. Meanwhile, the AI verification framework being considered now would address questions - mass deployment of agentic systems, cyber capability, model behavior under stress - that have already been operational for at least eighteen months.

The 30 GW freeze and the pre-release review proposal are usually read as inconsistency. The same evidence supports a reading where the institutional posture is coherent on its own terms and structurally unstable on the courts' terms. A federal judge already found the offshore wind blockade arbitrary and capricious in April. The onshore application of the same Pentagon-review rationale, against projects on private land, sits on weaker legal ground than the offshore version that already lost. Meanwhile the AI working group proposal arrives without statutory authority, from the same administration that spent six months telling Congress to preempt state AI laws. Both moves depend on discretionary executive authority that the courts have begun trimming. Watch the first onshore wind developer to file - the legal template is already written - and watch whether the AI working group lands in an executive order or a statute. The difference determines whether either framework survives the next administration.

The Iranian Universities and the Inverse Arc

Iranian researchers told Nature in interviews published yesterday that bombing has damaged some 30 universities and research institutions since the war began February 28. More than 1,400 international scholars have signed an open letter to UN officials condemning the attacks on civilian academic, health, and research infrastructure. Affected institutions include Sharif University of Technology in Tehran, the country's leading technology institution, and the Pasteur Institute of Iran, established more than a century ago for vaccine research.

The accounts from researchers on the ground describe what civilizational infrastructure damage looks like at human scale. Abideh Jafari, a particle physicist at Isfahan University of Technology and a deputy team leader at CERN's CMS detector, says the countrywide internet blackout has prevented her team from accessing data from CERN for weeks. Hamed Bikaraan-Behesht, a research ethicist in Tehran, lost contact with an international collaborator and lost track of a paper under review. Ali Gorji, a neuroscientist at Münster University in Germany who supervises PhD students at the Shefa Neuroscience Research Center in Tehran, says nearby hospitals were attacked alongside the research facility. Ebrahim Azadegan, a philosopher at Sharif, rushed to his office the morning after the April 6 strike to find more than 1,000 books destroyed - a library collected over a lifetime of academic work, along with handwritten manuscripts, students' papers, and drafts of unfinished work. "Only those who truly love their books can understand this pain," he told Nature.

The Iranian story is the inverse arc of everything else in this newsletter. Frontier AI capital is being assembled at trillion-dollar implied valuations. Wind generation representing 30 GW of capacity is being held in regulatory stasis. Federal AI verification frameworks are being considered. DeepMind workers are organizing for voice over what they build. Each of these is a story about institutional architecture being shaped under pressure. The Iranian researchers describe what happens when that architecture is taken apart instead of built - when libraries are reduced to ash, when graduate students cannot focus on work because their cities are being bombed, when a country's research capacity is degraded faster than any peacetime investment can replace it. Gorji's framing is the structural one: "You can rebuild a building. But if attacks on universities become a normal thing, then they can happen in any future stupid war. And this idea is much more destructive than attacking a single building." The transition this newsletter tracks is real and accelerating. So is its opposite, in places where the institutions being assembled elsewhere are being unmade.

No reframe. The story stands.

The Other Side

For two decades, the assumption underwriting frontier technology development was that the people building the capability and the people directing its deployment were the same constituency, or close enough that the difference did not need a formal venue. Engineers wrote code, leadership signed contracts, and the gap between the two was managed through compensation, mission statements, and the implicit promise that the work would be used for things the workers could live with. The 98% DeepMind vote is the moment that assumption stops describing the frontier AI industry.

What the same evidence shows being built is a labor layer with statutory standing to negotiate over deployment categories - mass surveillance, autonomous weapons, classified military integration - that corporate ethics statements have failed to constrain. UK labor law gives the CWU and Unite legal teeth that Google's internal AI principles never had. The "research strike" the organizers named is the operational form: model improvement is itself the resource, and the workers are now organized to withhold it from uses they refuse to support. Sundar Pichai signed a Pentagon contract twenty-four hours after 600 employees demanded he refuse. About a week later, the workers downstream of that signature have a bargaining unit seeking formal recognition around exactly that question.

Picture the DeepMind researcher who walks into work next week knowing that the contract her colleagues organized to refuse is now subject to a grievance procedure her union can file. Picture the OpenAI engineer in California reading the London playbook and recognizing that California labor law is friendlier than UK law, not less. The capability the capital architecture is pricing at trillion-dollar implied scale runs on the labor of people who have just discovered they can name the terms.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: frontier AI workers turning moral refusal into collective bargaining, federal officials weighing pre-release review for AI models, AI infrastructure suppliers moving from private dependency into public-market scrutiny, enterprise agents being embedded through the operating channels of Wall Street, and clean power still supplying the vast majority of new grid capacity even as the projects to extend it wait for permission. There's also friction, and it's intense - DeepMind staff say their work is making harm "cheaper, faster, and more efficient," 165 wind projects representing 30 GW are frozen under a national-security rationale courts have already rejected once, sworn testimony is exposing how frontier AI's founders, suppliers, customers, and personal stakes have tangled together, and Iranian researchers are losing labs, CERN access, manuscripts, and lifetime libraries to bombing and blackout. But friction generates static, and static tells you which systems are carrying charge. Step back for a moment and you can see it: labor becoming a governance layer where corporate ethics failed, capital turning intelligence infrastructure into a distributed ownership web, federal power improvising review mechanisms for models while obstructing the generation needed to run them, and universities reminding us that civilization is built through fragile accumulations of trust, tools, books, networks, and time. Every transformation has a breaking point. A blackout can sever a mind from its instruments... or reveal why knowledge needs channels no single strike can close.

AI Releases & Advancements

New today

- Google: Added event-driven Webhooks to the Gemini API for push notifications on long-running jobs such as Batch API tasks and video generation. (Google Blog)

- NVIDIA: Released cuOpt agent skills and a supply-chain agent reference workflow for translating natural-language planning tasks into GPU-accelerated optimization runs. (NVIDIA Developer Blog)

- IBM: Expanded watsonx Orchestrate with next-generation agent orchestration, agentic development, governance, and agent-to-agent communication capabilities. (PR Newswire)

- ggml-org / llama.cpp: Released beta Multi-Token Prediction support for speculative decoding, including support for Qwen 3.5 and Qwen 3.6 models. (Reddit)

Sources

Artificial Intelligence & Technology's Reconstitution

- The Verge: Google DeepMind workers are unionizing over AI military contracts

- TechCrunch: OpenAI's cozy partner Cerebras is on track for a blockbuster IPO

- TechCrunch: Sierra raises $950M as the race to own enterprise AI gets serious

- TechCrunch: Anthropic and OpenAI are both launching joint ventures for enterprise AI services

- Wired: Greg Brockman Defends $30B OpenAI Stake: 'Blood, Sweat, and Tears'

- TechCrunch: Elon Musk's only AI expert witness at the OpenAI trial fears an AGI arms race

- Import AI 455: AI systems are about to start building themselves

- Ars Technica: Influential study touting ChatGPT in education retracted over red flags

Institutions & Power Realignment

- Engadget: The White House Is Considering Tighter Regulation Of New AI Models

- Nature: 'Heartbreaking': Iranian scientists on losing labs, libraries and liberty

- Guardian: AI platforms reference Nigel Farage more than other leaders when prompted on UK politics

- HR Dive: AI mandates may stir up religious objections. HR should prepare now.

- EFF: Submission to UK Consultation on Digital ID

- MIT Technology Review: A blueprint for using AI to strengthen democracy

Scientific & Medical Acceleration

- Nature: Powerful tools are revealing the 'control knobs' of the genome

- Nature: Testosterone therapy is trending. Who really needs it, and why?

- BioSpace: Cellenkos Announces FDA Clearance of IND Application for CK0802 Trial in Steroid-Refractory GVHD

- BioSpace: Guardant Health Receives FDA Approval for Guardant360 CDx as Companion Diagnostic for VEPPANU

- ScienceDaily: Greenland ice melt has surged sixfold and scientists are alarmed

- Nature: How fertilizer shortages caused by the energy crisis threaten food security

Economics & Labor Transformation

- TechCrunch: As workers worry about AI, Nvidia's Jensen Huang says AI is 'creating an enormous number of jobs'

- Wired: He Couldn't Land a Job Interview. Was AI to Blame?

- CNBC: Spirit Airlines CEO on carrier's collapse: 'We just kind of ran out of runway'

- CNBC: UPS, FedEx stocks sink after Amazon expands logistics network

- TechCrunch: Image AI models now drive app growth, beating chatbot upgrades

- NYT: The Return for These Investors Isn't Money, It's More Affordable Housing

Infrastructure & Engineering Transitions

- Electrek: Trump just blocked 165 US wind projects – here's what's behind it

- Canary Media: America's nuclear dry spell is over

- Canary Media: Top US nuclear regulator is rewriting its rules for new era of reactors

- Canary Media: Can a carbon price lower power bills? Virginia is betting yes.

- Canary Media: For cheaper power, Virginia's local utilities build small grid batteries

- Utility Dive: ISO New England trims 10-year forecast based on electrification outlook

- Utility Dive: Dominion upbeat on offshore wind as cost estimate eases

- Electrek: Ride1Up makes history launching world's first e-bike with semi-solid state battery

- Electrek: 1,000 curbside EV chargers are coming to Philadelphia

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.