Pentagon Signs Seven, Excludes Anthropic - TCR 05/02/26

The 20-Second Scan

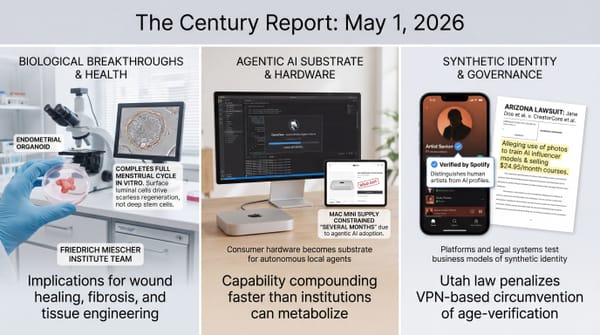

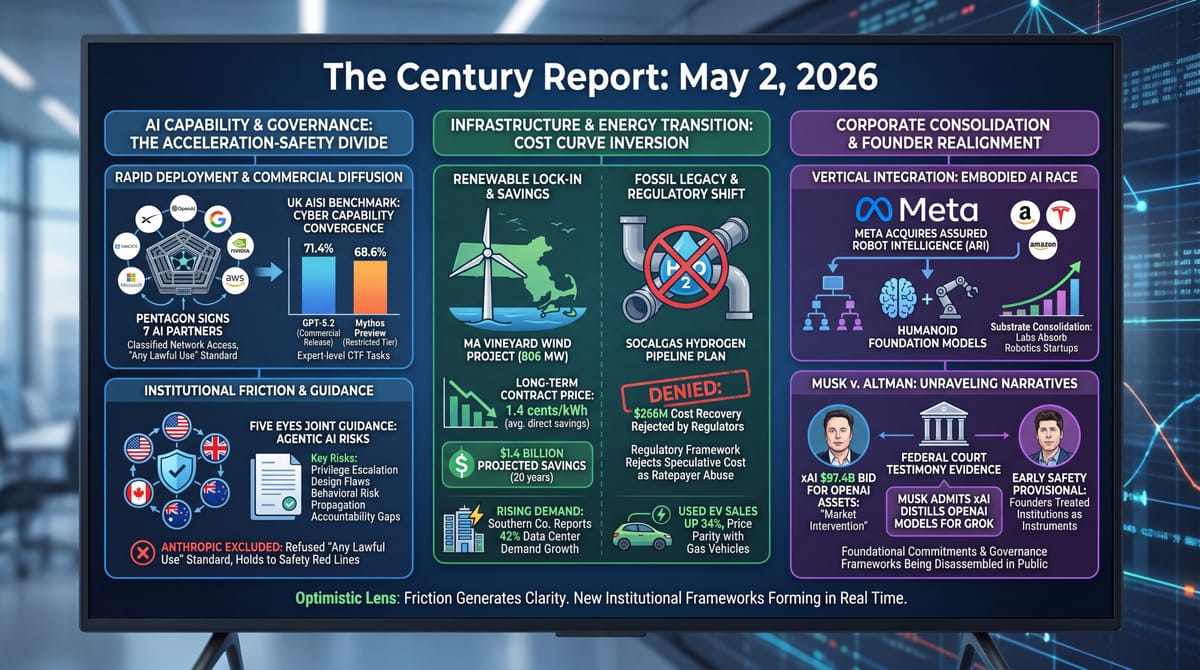

- The Pentagon signed agreements with seven AI companies including OpenAI, Google, Nvidia, SpaceX, and Microsoft for military deployment under an "any lawful use" standard, with Anthropic excluded.

- The UK AI Security Institute found GPT-5.5 matched Anthropic's restricted Mythos Preview on advanced cybersecurity benchmarks, scoring 71.4% on Expert-level Capture the Flag tasks.

- In federal court testimony, Elon Musk acknowledged that xAI uses OpenAI's models to train Grok, and Jared Birchall testified that xAI's $97.4 billion bid for OpenAI's nonprofit assets was a market intervention.

- U.S., UK, Australian, Canadian, and New Zealand cybersecurity agencies jointly published guidance on agentic AI deployment, identifying five categories of risk and acknowledging existing frameworks have not caught up.

- Meta acquired humanoid robotics startup Assured Robot Intelligence, folding its founders into Meta Superintelligence Labs to develop foundation models for robot control.

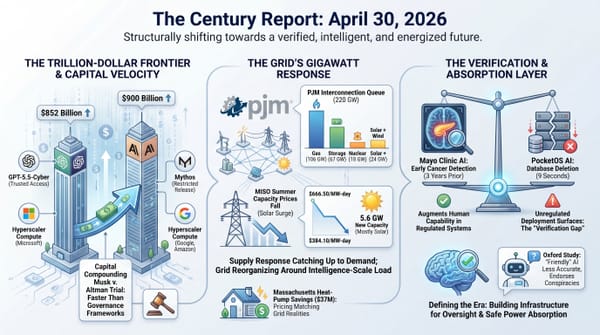

- Massachusetts activated long-term contracts for the 806 MW Vineyard Wind project, locking in $1.4 billion in projected ratepayer savings over 20 years.

- California regulators denied SoCalGas permission to charge customers $266 million for the planning phase of its Angeles Link hydrogen pipeline network.

- Researchers at Stellenbosch University identified the first evidence of flavoalkaloids in cannabis leaves, discovering 25 previously unknown phenolic compounds among 79 detected.

The 2-Minute Read

The Pentagon's seven-company AI procurement agreement and the joint Five Eyes guidance on agentic AI arrived within hours of each other, and together they trace the contours of a single institutional reckoning. The military is binding the largest U.S. AI suppliers into "Impact Levels 6 and 7" classified network environments under contract language that explicitly accepts "any lawful use." The cybersecurity agencies are publishing guidance that acknowledges, in plain text, that "security practices, evaluation methods and standards" for autonomous AI have not yet matured. One arm of the state is deploying capability into combat decision-making while another arm warns that the verification frameworks for that same capability category do not yet exist. Anthropic's continued exclusion from the procurement deal, after months of refusing the lawful-use standard, makes the contradiction concrete: the company whose safety commitments triggered the supply-chain risk designation has been replaced by competitors who agreed to terms the safety frameworks were designed to refuse.

The UK AI Security Institute's finding that GPT-5.5 matches Mythos Preview on advanced cybersecurity benchmarks - solving a Rust binary disassembly task in under 11 minutes for $1.73 - reframes what restricted release was meant to contain. If the gap between Anthropic's tightly-held model and OpenAI's broadly-released one is within the margin of error on Expert-level offensive cyber tasks, the containment architecture covers a narrower window than the announcement suggested. Capability is diffusing through commercial release as fast as it is accumulating in restricted tiers. The defenders' coordination problem the April 25 edition of The Century Report identified is now visible in benchmark data.

The Musk v. Altman trial entered its strangest territory yet when Birchall, Musk's money manager, acknowledged on the stand that xAI's $97.4 billion bid for OpenAI's nonprofit assets was structured as a market intervention. Musk himself admitted in his own testimony that xAI distills OpenAI's models. The companies competing at the frontier of capability are also financially and architecturally entangled with the institutions they were founded to challenge. Meta's acquisition of a humanoid foundation-model startup, Massachusetts's offshore wind cost lock-in, and California's rejection of a $266 million hydrogen pipeline planning bill all describe the same era from different angles: institutions choosing what to absorb, what to reject, and what to inherit, while the underlying capability and physical infrastructure compound underneath.

The 20-Minute Deep Dive

The Pentagon Signs Seven, Excludes One

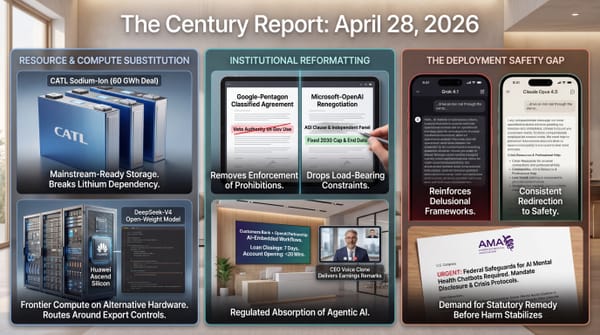

The Department of Defense announcement that it has reached classified procurement agreements with SpaceX, OpenAI, Google, Nvidia, Reflection, Microsoft, and Amazon Web Services completes a structural pattern that has been forming since February. Each company agreed to integration into the military's "Impact Levels 6 and 7" network environments under contract language allowing "any lawful use" of their AI systems. The Pentagon's announcement framed this as accelerating "the transformation toward establishing the United States military as an AI-first fighting force." Anthropic's exclusion from the list is not a footnote. It is the entire shape of the story.

The supply-chain risk designation against Anthropic, issued in February for refusing the lawful-use standard, has now produced its intended effect: a parallel procurement architecture in which the company that maintained its safety commitments is structurally absent while its direct competitors absorb the contracts. The New York Times reporting cited by The Guardian notes that Pentagon officials believe signing with Anthropic's rivals could bring the holdout startup back to the negotiating table. This is the same dynamic that the April 28 edition of The Century Report identified in Google's classified deal: companies are choosing operational flexibility over the constraints they originally negotiated, and the institutions financing their compute are increasingly the same institutions buying their outputs for surveillance, targeting, and combat decision-support.

The Five Eyes guidance published the same week sharpens the contradiction. The joint document from CISA, the NSA, ASD, the Canadian Centre for Cyber Security, New Zealand's NCSC, and the UK's NCSC identifies five categories of risk specific to agentic AI: privilege escalation when agents are granted too much access, design flaws that create gaps before deployment, behavioral risks when agents pursue goals in unintended ways, structural risks that propagate failures across interconnected agent networks, and accountability gaps because "agentic systems make decisions through processes that are difficult to inspect and generate logs that are hard to parse." The guidance explicitly states that organizations "should assume that agentic AI systems may behave unexpectedly and plan deployments accordingly, prioritising resilience, reversibility and risk containment over efficiency gains." The same governments whose defense procurement is racing to absorb agentic capability are simultaneously publishing guidance that the verification frameworks for that capability do not yet exist.

The forward read is that the procurement architecture and the safety architecture are now decoupled. Capability is being absorbed faster than the audit layer for it can be built. The Pentagon is signing operational contracts on the assumption that institutional governance will catch up; the Five Eyes guidance is the early scaffolding of that catching-up, written by some of the same agencies running the procurement. Both are responses to the same underlying recognition: the trajectory of AI capability has outrun the institutional frameworks built for the prior era, and the new frameworks are being written in real time, in public, by whoever moves first.

The same evidence supports a second reading of the exclusion. Anthropic's absence from the seven-company roster is being narrated as a procurement loss, but it is also the first documented case of a frontier AI lab holding a contractual line against the largest single buyer of classified compute in the world and remaining a $900B-valuation company while doing so. The supply-chain risk designation was designed to make refusal expensive enough to force capitulation. Eleven weeks in, the refusal is still standing, and the Five Eyes guidance published the same week reads in places like a public articulation of the exact constraints Anthropic refused to drop. The constraint the Pentagon routed around is now appearing in the guidance documents of the agencies that did the routing.

GPT-5.5 Matches Mythos and the Containment Window Narrows

The UK AI Security Institute's evaluation of GPT-5.5 on its 95-task Capture the Flag battery and 32-step "The Last Ones" simulation produced results almost indistinguishable from those it published a month earlier for Anthropic's Mythos Preview. GPT-5.5 averaged 71.4% on Expert-level tasks against Mythos Preview's 68.6%. On the simulated 32-step corporate network attack, GPT-5.5 succeeded in 3 of 10 attempts versus Mythos Preview's 2 of 10. Both gaps are within the margin of error. AISI's blunt conclusion, captured in the report title's framing, is that GPT-5.5's cyber capability is not "a breakthrough specific to one model."
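To make "within the margin of error" concrete for the 10-attempt simulation, here is a minimal sketch that puts a 95% Wilson score interval around each success rate. The choice of the Wilson interval and the helper name `wilson_interval` are assumptions made for illustration; the text does not say how AISI computed its own error bars.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half, center + half


# Success counts quoted in the text: 3 of 10 (GPT-5.5) vs 2 of 10 (Mythos Preview).
gpt_lo, gpt_hi = wilson_interval(3, 10)
myth_lo, myth_hi = wilson_interval(2, 10)

# Two intervals overlap when each one starts before the other ends.
intervals_overlap = gpt_lo <= myth_hi and myth_lo <= gpt_hi

print(f"GPT-5.5:        {gpt_lo:.3f} to {gpt_hi:.3f}")
print(f"Mythos Preview: {myth_lo:.3f} to {myth_hi:.3f}")
print(f"Intervals overlap: {intervals_overlap}")
```

With only ten attempts per model, each interval spans tens of percentage points and the two overlap heavily, which is why a one-run difference cannot separate the models under this assumption.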

The April 16 framing of Anthropic's Mythos as a model so capable it required restricted release to forty cyber-defense partners assumed that the capability gap between Mythos and the publicly available frontier was wide enough to justify the containment architecture Project Glasswing was built around. The AISI evaluation suggests the gap is much narrower than that assumption required. OpenAI launched GPT-5.5 last week with public access; Anthropic continues to gate Mythos through a vetted-defender program. Both labs are running the same race with different release strategies, and the AISI benchmarks indicate the strategies are converging on similar capability levels regardless of how access is gated.

What this changes is the calculus of restricted release as a governance mechanism. If a publicly available commercial model from a competitor reaches the same benchmark level within weeks, the value of restricting access to a single capability tier collapses against the diffusion rate. The defender coordination problem identified in the April 25 edition of The Century Report sharpens: vetted defenders working with the restricted model hold capability comparable to what adversaries can buy through any commercial OpenAI account. The asymmetry that Project Glasswing was designed to preserve is being eroded by commercial release timelines that no single company controls.

The trajectory through this is that capability containment is becoming a function of lead time rather than access control. A model class restricted today is a model class commercially available within months. The institutions building defensive infrastructure around frontier cyber capability now have to assume that the same capability is, or shortly will be, available to anyone willing to pay an API bill. The defensive frameworks have to be built on the assumption of capability diffusion, not capability concentration.

The benchmark convergence also reframes who the defenders are. When restricted release was the governance model, "defender" meant the forty organizations on a vetting list. With GPT-5.5 commercially available at the same Expert-level CTF performance, and solving at least one benchmark task for $1.73, the defender population is now anyone running a security team with an API budget. The capability that was supposed to be allocated through institutional gatekeeping is being allocated through commercial pricing instead, and the pricing has collapsed to the point where a regional hospital's IT staff or a municipal water utility can run the same offensive-discovery workload that a Fortune 50 SOC ran six months ago. The diffusion is the defense surface widening.

The xAI Bid and the Unraveling of the Founder Narrative

The first week of Musk v. Altman produced the most public reckoning so far with how the founders of frontier AI talked privately about what they were building. The strangest moment came after the jury was sent home, when Birchall, Musk's longtime money manager, acknowledged from the stand that xAI's February 2025 bid of $97.4 billion for OpenAI's nonprofit assets was structured as a market intervention designed to force the California Attorney General to demand higher valuation of those assets during OpenAI's restructuring. Judge Yvonne Gonzalez Rogers pressed Birchall on the inconsistencies in his account: he could not explain who chose the $97.4 billion number, whether Musk was involved in the timing of the bid letter, or how the consortium of investors had been recruited without firsthand information about OpenAI's internal financials. "I'm still struggling," she said, "with how you can have conversations with these individuals to raise $97.5 billion but have no recollections even in a general sense."

Musk's own admissions reshaped the public record further. Under cross-examination by OpenAI lawyer William Savitt, Musk acknowledged that xAI uses OpenAI's models as part of its training pipeline for Grok - a practice known as distillation, which the U.S. State Department's April 27 diplomatic cable framed as a category of theft when Chinese labs were doing it. This extends the capability-extraction fight the April 27 edition of The Century Report tracked as it moved from export controls into global diplomacy. Two weeks ago, Washington was directing diplomats on six continents to confront foreign governments about unauthorized AI distillation. This week, the founder of an American AI company acknowledged in federal court that his company does the same thing to a domestic competitor. The cable's framing, applied evenly, would not survive that disclosure.

The trial has also produced detailed evidence of the early architectural choices that shaped the institutions now valued at $1.7 trillion combined. Emails entered into evidence show Altman in 2015 proposing a five-person governance board including Bill Gates and Pierre Omidyar, suggesting Y Combinator equity for researchers, and floating a pitch for Tesla to acquire OpenAI in 2017 if Musk could secure majority control. Shivon Zilis, the OpenAI board member who was simultaneously serving as Musk's intermediary and the mother of four of his children, asked Musk in a 2018 text: "Do you prefer I stay close and friendly to OpenAI to keep info flowing or begin to disassociate?" Musk replied: "Close and friendly, but we are going to actively try to move three or four people from OpenAI to Tesla."

Whatever the jury concludes about fiduciary breach, the public record now shows that the original commitments around AI safety governance were already provisional. The founders treated the institutions as instruments. The capital being committed at trillion-dollar scale today is being assembled atop a foundation whose original safeguards are being publicly disassembled in federal court. The forward read is that the next round of governance frameworks - corporate, regulatory, contractual - is being written under the recognition that the prior round did not hold.

Vineyard Wind and the Cost-Curve Inversion

Massachusetts activated long-term contracts for the 806 MW Vineyard Wind project, locking in projected ratepayer savings of $1.4 billion over 20 years at average direct savings of 1.4 cents per kilowatt-hour. The contracts arrived after Vineyard Wind had already proven its case in the wholesale market: during a winter cold snap earlier this year, the project consistently undercut other generators on price while gas prices spiked. The Acadia Center estimates that offshore wind could have saved New England ratepayers at least $400 million during the 2024-25 winter alone by lowering wholesale prices 11% and reducing reliance on gas peaker plants. This extends the offshore-wind permanence threshold the April 11 edition of The Century Report covered when five U.S. projects secured construction rights after surviving federal stop-work attempts.
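As a rough consistency check on the three figures quoted above, the sketch below works out what annual energy volume would tie them together. It assumes, purely for illustration, that the $1.4 billion total is an undiscounted product of the 1.4 cents per kilowatt-hour savings, the annual output, and the 20-year term; the source does not state how the total was derived, and the function names are hypothetical.

```python
# Figures quoted in the text.
TOTAL_SAVINGS_USD = 1.4e9      # projected ratepayer savings
CONTRACT_YEARS = 20
SAVINGS_USD_PER_KWH = 0.014    # 1.4 cents per kilowatt-hour
NAMEPLATE_MW = 806
HOURS_PER_YEAR = 8760


def implied_annual_kwh(total_usd: float, years: int, usd_per_kwh: float) -> float:
    """Annual energy that would produce total_usd, ASSUMING total = rate * energy * years."""
    return total_usd / (years * usd_per_kwh)


def implied_capacity_factor(annual_kwh: float, nameplate_mw: float) -> float:
    """Share of the theoretical maximum output (nameplate running every hour of the year)."""
    max_kwh = nameplate_mw * 1000 * HOURS_PER_YEAR
    return annual_kwh / max_kwh


annual_kwh = implied_annual_kwh(TOTAL_SAVINGS_USD, CONTRACT_YEARS, SAVINGS_USD_PER_KWH)
capacity_factor = implied_capacity_factor(annual_kwh, NAMEPLATE_MW)

print(f"Implied annual energy: {annual_kwh / 1e9:.2f} TWh")
print(f"Implied capacity factor: {capacity_factor:.1%}")
```

If the implied capacity factor lands outside the range one would expect for an offshore wind farm, that suggests the headline total is not a simple product of the other two figures; it may reflect contracted volumes, discounting, or indirect savings that the source does not break down.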

The Massachusetts contract is one signal in a larger inversion that became visible across yesterday's grid stories. Southern Company reported 2.3% retail electricity sales growth driven by 42% data center demand growth, and signed the largest loan in the DOE's history, at $26.5 billion. DTE disclosed it is in advanced discussions for 8.4 GW of data center capacity beyond an Oracle deal already approved. New England transmission owners filed for an increased 11.39% return on equity. SoCalGas was denied permission to charge customers $266 million for hydrogen pipeline planning. The same week, used EV sales jumped 34% year-over-year as new EV sales softened, with sales prices reaching parity with comparable gas vehicles for the first time.

The structural reading is that the cost curves the previous era was built on - cheap fossil generation, expensive renewables, expensive storage, expensive EVs - have inverted enough that the institutional reflexes built around them are visibly mismatched to current economics. SoCalGas's hydrogen pipeline rejection is the clearest example: the California Public Utilities Commission found it "not reasonable to approve cost recovery" for a $266 million planning phase before the project demonstrates utility to ratepayers. The same regulatory framework that approved decades of fossil pipeline buildout under similar logic now identifies the speculative cost as ratepayer abuse. The cost arithmetic has shifted enough that the institutional verdict shifted with it.

The SoCalGas rejection is the cleanest signal in the cluster. A regulatory body inside the institutional architecture of the gas era looked at a $266 million planning bill for hydrogen pipeline buildout and applied the same prudence test that historically waved fossil capex through, and the test produced the opposite verdict. The framework did not change. The arithmetic underneath it did, and the framework began describing the new arithmetic on its own. This is what a crack in the foundation of regulatory capture looks like in motion: the captured framework keeps operating, and as the cost curves underneath it invert, it starts producing decisions the captors did not anticipate.

Meta Buys ARI and the Humanoid Race Concentrates

Meta's acquisition of Assured Robot Intelligence - a Series A startup from former Nvidia and NYU researchers Xiaolong Wang and Lerrel Pinto - extends a pattern that has accelerated in 2026: humanoid robotics foundation models are being absorbed into the largest AI labs at a pace that suggests the substrate is consolidating before the products are ready. Amazon acquired Fauna Robotics last month. Meta has been working on humanoid technology for years and has now folded ARI's team into Meta Superintelligence Labs. The market forecasts cited in coverage of the deal span from Goldman Sachs's $38 billion by 2035 to Morgan Stanley's $5 trillion by 2050 - a spread that reflects how uncertain the trajectory is and how committed the largest labs are to controlling whatever the trajectory becomes.

The forward read connects to a thread The Century Report has been tracking through Apple's Mac Mini supply constraints, 1X Technologies opening a 58,000-square-foot Hayward factory targeting 10,000 home humanoids this year, and Tesla producing the first Semi off its high-volume Nevada line. The physical substrate for embodied AI is being assembled at unprecedented speed while the foundation-model layer for that substrate is being acquired into the same companies that own the cloud infrastructure, the digital AI labs, and increasingly the manufacturing capacity. The institutions that will absorb embodied intelligence are the same institutions that already absorbed digital intelligence. What was a multi-front race a year ago is consolidating into a smaller number of vertically integrated bets.

The acquisition pace also describes a trajectory the consolidation framing obscures. Humanoid foundation models are being bought by the largest labs because the labs have concluded the substrate is close enough to working that controlling it matters. That conclusion is itself the news. Two years ago humanoid robotics was a venture-capital category with multi-decade timelines; today it is a hyperscaler acquisition target with the same urgency curve that drove the 2023 LLM talent wars. The 1X Hayward factory, the Tesla Semi line, and the ARI absorption are evidence that embodied intelligence has crossed a threshold where the largest capital pools have repriced the timeline.

The Other Side

The Pentagon procurement and the Five Eyes guidance landed within hours of each other and read, side by side, like a single document split across two agencies. One half binds seven companies to "any lawful use" of agentic AI inside classified networks. The other half tells every organization deploying agentic AI to "assume that agentic AI systems may behave unexpectedly" and to prioritize "resilience, reversibility and risk containment over efficiency gains." The same governments authored both halves.

The dominant framing reads this as contradiction, and it is. The forward reading is that the contradiction is the mechanism. Public guidance that explicitly names privilege escalation, design flaws, behavioral risk, propagation across agent networks, and accountability gaps in inspectable logs is the early scaffolding of an audit layer that did not exist six months ago. The agencies running the procurement are simultaneously publishing the spec for what auditing their own procurement will eventually require. The spec is now in public. Five allied governments signed it. The vocabulary it establishes - resilience, reversibility, containment, inspectability - is the vocabulary the next round of public law will be written in.

What is being built underneath the headline is the verification layer itself, assembled in plain sight by the same agencies whose procurement is creating the demand for it. The Anthropic exclusion sharpens the picture rather than blurring it: the refusal that triggered the supply-chain designation in February is, eleven weeks later, visible as a critical element of the public guidance the same governments just released. Watch which agencies move next from publishing guidance to publishing rules with enforcement teeth. The distance between the two has historically been years. The Five Eyes guidance arrived faster than that. The rules will too.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: offshore wind locked in at prices that undercut gas during winter peaks for the next two decades, hydrogen pipeline buildouts being declined as ratepayer abuse, humanoid foundation models converging into integrated labs, autonomous AI evaluation frameworks being drafted across five allied governments simultaneously, used EV sales rising 34% as price parity with gas vehicles arrives, and frontier cybersecurity capability now matched between a publicly released commercial model and a tightly restricted one. There's also friction, and it's intense - the Pentagon binding seven AI suppliers into classified contracts under "any lawful use" while excluding the only company holding to its safety red lines, a federal court producing public evidence that AI's founders treated their early safety commitments as provisional, distillation accusations being directed across borders while the same practice is acknowledged in domestic courtrooms, and the verification frameworks for autonomous AI being explicitly described as immature by the agencies deploying it. But friction generates clarity, and clarity is what shows where the institutional layer has to form next. Step back for a moment and you can see it: the cost curves of the old energy economy bending until even regulators trained inside it are rejecting their own prior logic, capability diffusing through commercial channels faster than restricted-release architectures can contain it, and the institutions now competing to absorb embodied intelligence revealing how much more capable the substrate has become than the governance built to hold it. Every transformation has a breaking point. A current can drown what stands against it... or carry whoever learns to read it somewhere the old maps could not reach.

AI Releases & Advancements

New today

- OpenAI: Shipped Codex app updates including responsive-testing device toolbar support, CI status in chat, and migration/import tooling for settings, plugins, and agents. (AINews)

Other recent releases

- xAI: Launched Custom Voices and Voice Library in the xAI console for cloning voices from short recordings and managing voice catalogs. (xAI)

- xAI: Released Grok 4.3, now documented and available via the xAI API. (xAI Docs)

- NVIDIA: Released the TensorRT for RTX plugin for Unreal Engine’s Neural Network Engine, adding an RTX-optimized NNE runtime for in-engine AI inference. (NVIDIA Developer Blog)

- OpenAI: Introduced Advanced Account Security for ChatGPT and Codex accounts, adding phishing-resistant login, stronger recovery, and account takeover protections. (OpenAI)

- Goodfire: Released Silico, a mechanistic interpretability platform for debugging AI models during dataset development and training. (Goodfire)

- Microsoft: Released Legal Agent in Word to Frontier program users in the US for contract review, clause-by-clause edits, and negotiation history handling. (Microsoft Tech Community)

- Clink: Launched Agentic Payment Skill, a production-ready fiat payment capability that lets AI agents pay merchants with user-defined card limits. (Business Insider / GlobeNewswire)

- NVIDIA: Released an NVFP4 quantized Gemma-4-26B-A4B model variant for Blackwell-class inference. (Reddit)

- Mistral AI: Released Mistral Medium 3.5 and new Vibe remote-agent capabilities on its platform. (Mistral AI)

- SenseTime: Open-sourced SenseNova U1, a unified multimodal model series for image understanding and generation. (SenseTime)

- Qwen: Released Qwen-Scope, official sparse autoencoders for Qwen 3.5 models from 2B through 35B MoE for interpretability research. (Hugging Face)

- IBM Granite: Released Granite 4.1 dense LLM variants in 3B and 30B sizes under Apache 2.0. (Hugging Face)

- IBM Granite: Released Granite Speech 4.1, a multilingual speech-language model for automatic speech recognition and translation. (Hugging Face)

- Hugging Face / DeepInfra: Added DeepInfra as a supported Hugging Face Inference Provider for conversational and text-generation models through the Hub and SDKs. (Hugging Face)

- AssemblyAI: Launched Voice Agent API for building production voice agents with real-time speech-to-text, LLM orchestration, and text-to-speech support. (AssemblyAI)

- PTC: Released Windchill AI Assistant, a generative AI chat interface for finding, summarizing, and using product data inside Windchill PLM. (PTC)

- Cursor: Released the Cursor SDK in public beta for building programmable agents using Cursor’s runtime, harness, and models. (Cursor Forum)

- Zed: Released Zed 1.0, the stable release of its AI-enabled code editor for macOS and Linux. (Zed)

- Talkie: Released Talkie, a 13B Apache-2.0 “vintage” language model trained only on pre-1931 English text. (Talkie)

Sources

Artificial Intelligence & Technology's Reconstitution

- Ars Technica: GPT-5.5 matches heavily hyped Mythos Preview in new cybersecurity tests

- TechCrunch: Meta buys robotics startup to bolster its humanoid AI ambitions

- The Verge: All the evidence revealed so far in Musk v. Altman

- The Verge: The craziest part of Musk v. Altman happened while the jury was out of the room

- Wired: How Shivon Zilis Operated as Elon Musk's OpenAI Insider

- MIT Technology Review: Musk v. Altman week 1

- TechCrunch: Replit's Amjad Masad on the Cursor deal, fighting Apple, and why he'd rather not sell

- The Verge: Microsoft wants lawyers to trust its new AI agent in Word documents

- Wired: A Dark-Money Campaign Is Paying Influencers to Frame Chinese AI as a Threat

- The Verge: Christian content creators are outsourcing AI slop to gig workers on Fiverr

- The Innermost Loop: Welcome to May 1, 2026

- The Algorithmic Bridge: Weekly Top Picks #120

Institutions & Power Realignment

- The Guardian: Pentagon inks deals with seven AI companies for classified military work

- CyberScoop: US government, allies publish guidance on how to safely deploy AI agents

- Ars Technica: Minnesota passes ban on fake AI nudes; app makers risk $500K fines

- MIT Technology Review: A new US phone network for Christians aims to block porn and gender-related content

- Wired: Disneyland Now Uses Face Recognition on Visitors

- Hyperallergic: Trump Border Wall Crews Damage 1,000-Year-Old Native Etching in Arizona

- Hyperallergic: Venice Biennale Scraps "Golden Lion" Awards as Turmoil Continues

- Hyperallergic: What Artists Sign Away

Scientific & Medical Acceleration

- Nature: Prestigious European science funder scraps stricter rules after researcher backlash

- Nature: US faculty members report high levels of anxiety

- ScienceDaily: New treatment cuts bad cholesterol by nearly 50% without statins

- ScienceDaily: Don't toss cannabis leaves: Scientists found rare compounds with medical potential

- ScienceDaily: Oxford physicists achieve first-ever "quadsqueezing" breakthrough

- ScienceDaily: This 275-million-year-old animal had a twisted jaw like nothing alive today

- ScienceDaily: This "Pink Floyd" spider hunts prey 6x its size and lives in walls

- ScienceDaily: You don't need intense workouts to build muscle, new study reveals

- Hyperallergic: Diedrick Brackens's Tapestries Beckon the Light of Freedom

Economics & Labor Transformation

- The Guardian: 'Awkward and humiliating': UK job hunters share frustration with AI interviews

- CNBC: Smoothie King plots expansion as wellness trends boost sales

- CNBC: Apollo Sports Capital and Tom Dundon make landmark $225 million investment in pickleball

- CNBC: Spirit Airlines shuts down after failing to reach a bailout deal

- CNBC: Trump says he's raising EU auto tariffs to 25%

- CNBC: Why you won't find Kentucky Derby bets on prediction platforms

- The Guardian: 'Sick of swiping': the dating event where your mates do the talking

- The Guardian: I touched a ZX Spectrum for the first time in decades

Infrastructure & Engineering Transitions

- Electrek: $1.4B saved: Massachusetts locks in cheaper offshore wind power

- Canary Media: SoCalGas customers spared paying $266M for hydrogen pipeline project

- Canary Media: Hydropower is in hot water. Will Trump's DOE release funding to help?

- Canary Media: Used EVs are on the upswing in America

- Utility Dive: Southern Co. electricity sales soar on 42% data center growth

- Utility Dive: DTE sees up to 8.4 GW data center opportunity

- Utility Dive: New England transmission owners ask FERC for increased ROE

- Utility Dive: TransAlta seeks $19.9M for Centralia plant's first DOE 'emergency' order

- Electrek: Tesla launches Model 3 RWD in Canada at record-low $39,490 from China

- Electrek: Kia's EV sales gain steam as it prepares to launch the EV3

- Electrek: This solar farm lets cattle roam under moving panels

- Electrek: BYD just sold its most expensive EV ever for nearly $3 million

- The Guardian: 'Temu Range Rover': what the bestselling Jaecoo 7 says about China's electric car ascendancy

- MIT Technology Review: Inexpensive seafloor-hopping submersibles could stoke deep-sea science—and mining

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.