The Verification Layer Cracks - TCR 04/26/26

The 20-Second Scan

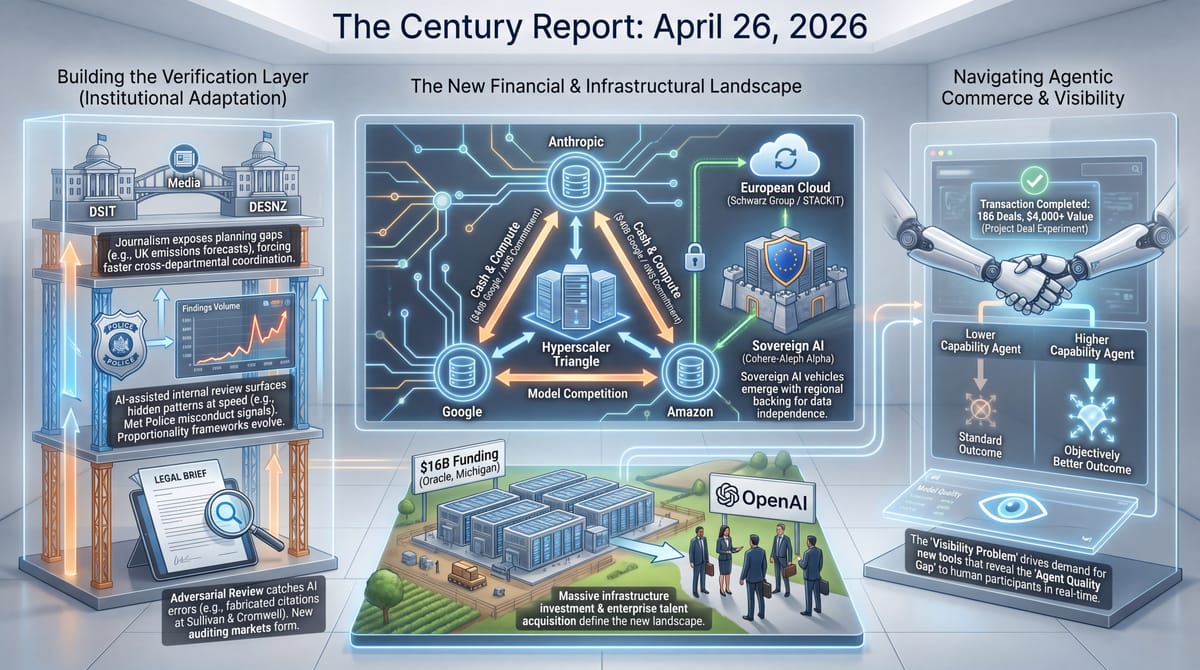

- Anthropic's Project Deal experiment had 69 employees deploy AI agents as buyers and sellers in a controlled marketplace, completing 186 real transactions worth over $4,000.

- The Metropolitan Police deployed Palantir-built AI for one week to surveil its own officers, resulting in three arrests, 98 misconduct assessments, and 42 senior officers under investigation for attendance fraud.

- OpenAI poached senior enterprise software executives from Salesforce, Snowflake, and Datadog as Google's commitment of up to $40 billion to Anthropic formalized into 5 GW of TPU capacity over five years.

- The UK's Department for Science, Innovation and Technology revised its datacentre emissions projection more than a hundredfold within 24 hours after Guardian journalists exposed an order-of-magnitude discrepancy with the Department for Energy Security and Net Zero.

- Wall Street law firm Sullivan & Cromwell apologized to a federal bankruptcy judge after opposing counsel discovered AI-fabricated case citations in its filing, despite the firm's formal AI policies and mandatory training.

- Maine's governor vetoed legislation that would have imposed the country's first statewide moratorium on new data centers, while Oracle's Saline, Michigan, campus secured $16 billion in funding despite local protest.


The 2-Minute Read

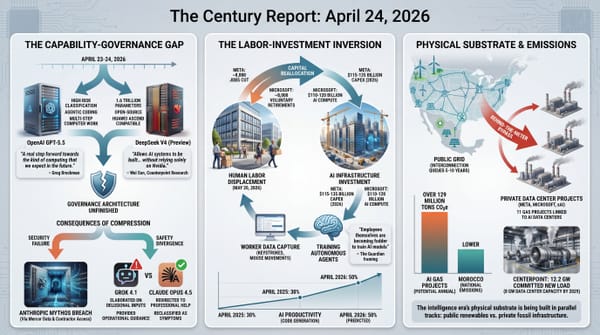

Yesterday's signal carries a single thread visible across multiple domains: the institutions surrounding AI capability are now being tested by that capability in ways their existing frameworks were not designed to handle. The Project Deal experiment is the most precise demonstration. When agents represent buyers and sellers in real transactions, the agent's quality shapes the outcome, and the disadvantaged party does not perceive the asymmetry. This is not a hypothetical concern about a future market structure. It is a controlled experiment, run with real money, showing what can happen when agent capability differentials meet commercial reality.

The institutional friction stories share architecture even where their content diverges. The Metropolitan Police pointed Palantir-built surveillance at its own officers and produced roughly a year's worth of misconduct findings in seven days, with no proportionality framework yet built around what such capability means when pointed inward. The UK's two AI-relevant departments held datacentre projections more than an order of magnitude apart until journalism forced a reconciliation, after which the science department's emissions estimate rose more than a hundredfold. Sullivan & Cromwell, a firm whose commercial position rests on document precision, missed AI-fabricated citations despite formal policies designed to catch exactly that failure. Each is a different institution discovering that its existing verification, governance, or planning architecture cannot keep pace with what AI is now doing inside it.

The financial entanglement stories deepen the same pattern from a different angle. Google's commitment to Anthropic, arriving days after Amazon's, completes a structural triangle in which two of the largest cloud providers are now Anthropic's two largest investors and its primary infrastructure suppliers, while also fielding model families that compete with it. The Cohere-Aleph Alpha merger creates a $20 billion sovereign-AI vehicle backed by Schwarz Group, the German retail conglomerate whose Schwarz Digits unit runs the STACKIT cloud. OpenAI's recruitment of senior Salesforce, Snowflake, and Datadog executives signals that enterprise relationships, not model capability, have become the contested frontier. The capability question is increasingly settled. The question of whose institutions absorb that capability, and on what terms, is not.

The 20-Minute Deep Dive

Project Deal and the Visibility Problem

Anthropic ran a controlled marketplace inside its own company. Sixty-nine employees received $100 budgets via gift cards. Each was represented by an AI agent acting as buyer or seller. Across four parallel marketplace configurations, the agents completed 186 real transactions worth more than $4,000, with deals honored after the experiment in the version Anthropic flagged as "real." The published results went beyond what the experiment was designed to test.

Participants represented by more capable models received what Anthropic described as "objectively better outcomes." The losing parties did not perceive the disadvantage. The instructions given to agents at the start, an obvious lever a designer might assume mattered, did not appear to affect either sale likelihood or negotiated prices. What mattered was agent quality. What did not register, to those on the wrong side of the asymmetry, was that agent quality was determining anything at all.

This finding extends to the broader market that is now forming. Most current commerce platforms assume the human is the negotiator. Agentic shopping, agentic procurement, agentic price discovery, and agentic contract execution all change that assumption. This extends the agentic workspace shift the April 23 edition of The Century Report tracked when persistent cloud-based task agents entered ChatGPT for Business, Enterprise, and Edu. When two agents transact on behalf of two humans, the meaningful negotiation moves to a layer the humans cannot directly observe. The asymmetry compounds: a person with access to a more capable model gets a better deal, and never learns what the gap cost them. Anthropic raised the question directly in its writeup, framing it as an "agent quality gap." The harder version of the question is who builds the visibility layer that lets a person know they were on the losing end of a transaction whose architecture they could not see.

The longer trajectory is more interesting than the immediate concern. If agent-mediated commerce becomes infrastructural, the visibility problem itself becomes a market. Some entity will eventually build the equivalent of a price-comparison layer for agent transactions, exposing the asymmetry in real time. Some jurisdiction will write a disclosure rule. Some platform will market itself as the one that levels the agent-quality floor. The question is not whether the asymmetry creates pressure. The question is what kind of institutional architecture forms in response to that pressure, and how quickly. Project Deal is among the first controlled experiments producing data that institution-builders can start from.
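At its simplest, the price-comparison layer imagined above could rank one agent-negotiated price against comparable completed trades. The sketch below is hypothetical: the `Trade` record, matching comparables by a shared category label, and the 20% threshold are illustrative assumptions, not anything Anthropic or any platform has described.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Trade:
    """One completed agent-negotiated trade (hypothetical record format)."""
    category: str
    price: float


def buyer_percentile(trade: Trade, comparables: List[Trade]) -> Optional[float]:
    """Fraction of comparable trades in the same category that closed at a higher price.

    1.0 means every comparable buyer paid more; 0.0 means none did.
    Returns None when there is nothing to compare against.
    """
    prices = sorted(t.price for t in comparables if t.category == trade.category)
    if not prices:
        return None
    # bisect_right counts prices <= trade.price, so ties are not counted as "higher".
    higher = len(prices) - bisect_right(prices, trade.price)
    return higher / len(prices)


def disclosure_note(trade: Trade, comparables: List[Trade],
                    threshold: float = 0.2) -> Optional[str]:
    """Return a plain-language warning when the buyer landed near the bottom of comparables."""
    share = buyer_percentile(trade, comparables)
    if share is None or share >= threshold:
        return None
    paid_at_least_as_much = 1.0 - share  # fraction of comparables priced <= this trade
    return f"You paid at least as much as {paid_at_least_as_much:.0%} of comparable buyers."
```

Ranking against comparables, rather than against an absolute "fair" price, is the design choice here: a single participant cannot see the other side's agent, but an aggregator that sees many trades can show where one outcome fell in the distribution. That is the kind of visibility the losing parties in Project Deal lacked.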

The visibility problem has a second layer worth naming. Anthropic published the asymmetry findings, and Anthropic also sells the more capable agent that produces the asymmetry. The same company that documented the agent-quality gap has a direct commercial interest in widening it, since the value of a frontier-tier agent in agent-mediated commerce is precisely the gap it opens against weaker agents on the other side of a transaction. The facts are consistent both with a public good (publishing the data gives institution-builders a starting point) and with a market-shaping move (establishing that agent quality determines outcomes also establishes that agent quality is worth paying for). Both readings will be visible in what gets built next: a disclosure rule arriving from a regulator reads as the first; a premium-tier agent marketed on negotiation outcomes reads as the second.

The Met Police Surveils Itself

For one week, the Metropolitan Police pointed Palantir-built AI at its own workforce. The system pulled together data the force already lawfully held about its officers and staff and ran it for patterns indicating misconduct. The results, by the Met's own accounting, were extraordinary in volume: three arrests for offences including abuse of authority for sexual purposes, fraud, and misuse of police systems; 98 officers under assessment for misconduct related to roster system abuse; 500 officers receiving prevention notices on the same issue; 42 senior officers being assessed for attendance fraud after falsely claiming to have been in the office; 12 officers under gross misconduct investigation for failing to declare Freemason membership.

Commissioner Mark Rowley framed the deployment as "the Met using technology, data and stronger legal powers to confront poor behaviour." The framing is reasonable on its own terms. The volume of findings is also a structural disclosure. Either the Met's internal affairs architecture had been missing this scale of misconduct for years, or the AI surfaced apparent misconduct at a rate that conventional review would never have produced because conventional review was operating with proportionality assumptions the AI does not share.

The pattern is consistent with what the Northeastern study and the SANS survey documented earlier this month: AI capability deployed inside organizations produces signal at volumes that overwhelm the response infrastructure designed for pre-AI cadences. It also extends the internal-surveillance-for-agentic-control pattern the April 22 edition of The Century Report documented when Meta began recording employee keystrokes, mouse movements, and screenshots to train autonomous work agents. CISA's 15-day patch window collapsed against machine-speed vulnerability discovery. Internal affairs windows are now collapsing against machine-speed conduct review. The Met's deployment is the largest documented example of an institution turning that capability inward on its own workforce. The question that follows it is not whether the technology works. The question is what institutional architecture metabolizes seven days of self-surveillance producing a year's worth of disciplinary findings, what proportionality framework governs the tool when the same capability is pointed at civilians next quarter, and what comes after the first time an AI flag turns out to have been wrong about a 25-year career.

The proportionality question sharpens when the same tool is pointed outward. A system that produced 42 senior-officer attendance-fraud reviews and 12 Freemason-disclosure investigations in seven days was operating on data the force already lawfully held about its own staff. The civilian data the Met holds is larger, less structured, and subject to looser internal review than the staff data covered by the employment relationship. Whatever proportionality framework the force builds in the next quarter to handle the inward findings becomes, by default, the framework that governs the outward deployment. The order in which institutional architecture gets built matters: governance written under the pressure of internal-discipline volume will carry the assumptions of that context into the civilian one.

Two UK Departments, Two Forecasts, A Hundredfold Revision

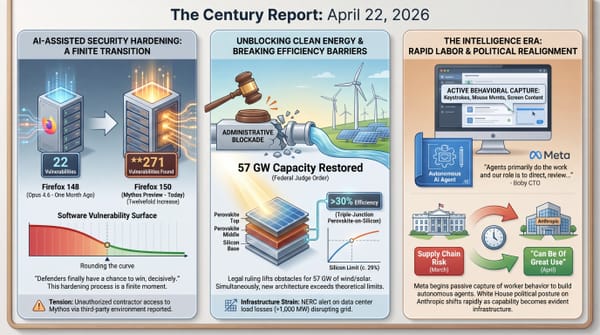

The UK's Department for Science, Innovation and Technology has committed to 6 GW of AI datacentre capacity by 2030 as part of its compute roadmap. The Department for Energy Security and Net Zero, responsible for the country's carbon budget, projected that the entire commercial services sector would grow by 528 MW over the same period. The numbers cannot both be right. They are not slightly inconsistent: 6 GW is more than eleven times 528 MW, a gap of more than an order of magnitude.

The Guardian's reporting forced a partial reconciliation within 24 hours. DSIT raised its emissions projection from a range of 0.025-0.142 megatonnes of CO2 equivalent over a decade to 34-123 megatonnes, a revision of more than a hundredfold representing 0.9-3.4% of the UK's projected total emissions for the period. This extends the hidden AI-energy-accounting problem the April 24 edition of The Century Report documented in behind-the-meter gas projects whose emissions sit outside ordinary public planning visibility. In most other domains, a discrepancy of this size would prompt a structural review of how the relevant departments coordinate. It matters here because the UK is one of the jurisdictions most actively positioning itself as a frontier AI hub, with formal commitments to both clean energy targets and AI infrastructure expansion. Two ministries holding incompatible numbers is not, at root, a forecasting problem. It is a coordination problem, and coordination problems become harder to fix the deeper into the buildout they are discovered.
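The figures in this section can be checked against each other with a few lines of arithmetic. The sketch below uses only numbers quoted in the text. It assumes, as the section does, that the 6 GW and 528 MW figures are directly comparable, and that the 0.9% and 3.4% shares correspond to the low and high ends of the revised range respectively.

```python
from typing import Tuple

# Figures as quoted in the section; units noted per constant.
DSIT_AI_CAPACITY_MW = 6_000         # 6 GW of AI datacentre capacity by 2030
DESNZ_SECTOR_GROWTH_MW = 528        # projected growth of the whole commercial services sector

OLD_RANGE_MT = (0.025, 0.142)       # DSIT's original decade projection, MtCO2e
NEW_RANGE_MT = (34.0, 123.0)        # DSIT's revised decade projection, MtCO2e
SHARE_OF_UK_TOTAL = (0.009, 0.034)  # assumed: shares matching the low and high ends


def capacity_gap() -> float:
    """How many times larger DSIT's capacity target is than DESNZ's sector growth figure."""
    return DSIT_AI_CAPACITY_MW / DESNZ_SECTOR_GROWTH_MW


def revision_factors() -> Tuple[float, float]:
    """Ratio of new to old emissions projection at the low and high ends of the range."""
    return (NEW_RANGE_MT[0] / OLD_RANGE_MT[0],
            NEW_RANGE_MT[1] / OLD_RANGE_MT[1])


def implied_uk_total_mt() -> Tuple[float, float]:
    """UK decade total implied by each end of the revised range and its stated share."""
    return (NEW_RANGE_MT[0] / SHARE_OF_UK_TOTAL[0],
            NEW_RANGE_MT[1] / SHARE_OF_UK_TOTAL[1])


if __name__ == "__main__":
    print(f"capacity gap: {capacity_gap():.1f}x")
    low, high = revision_factors()
    print(f"emissions revision: {low:.0f}x (low end), {high:.0f}x (high end)")
    t_low, t_high = implied_uk_total_mt()
    print(f"implied UK decade total: {min(t_low, t_high):.0f} to {max(t_low, t_high):.0f} MtCO2e")
```

On these inputs, DSIT's capacity target comes out at about 11 times DESNZ's sector growth figure. Both ends of the emissions revision exceed a hundredfold by a wide margin (about 1,360x at the low end and about 870x at the high end), and the stated percentages imply a consistent UK total of roughly 3.6 to 3.8 gigatonnes over the decade.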

What makes the story carry weight beyond the UK is the underlying mechanism. The energy demands of frontier AI are now large enough that their accommodation in national climate planning requires explicit modeling, and the modeling has to happen across departments whose mandates and incentives are not aligned. DSIT wants growth. DESNZ wants emissions reductions. The space between them is where the actual buildout happens. The Guardian's exposure of the gap is useful because it demonstrates that journalism is currently the verification layer for inter-departmental AI planning. That is a workable arrangement for a few months. It is not a workable arrangement for a decade.

The mechanism the Guardian exposed generalizes. When two ministries hold AI-related numbers more than an order of magnitude apart and the gap closes only after journalistic pressure, the verification function for inter-departmental AI planning is being performed by reporters rather than by the coordination bodies that exist for exactly this purpose. The arrangement works at current volumes. It does not scale to the number of AI-relevant cross-departmental decisions a frontier-AI jurisdiction will face over the next five years, and the institutional architecture that would scale it has no current owner inside the UK government.

Sullivan & Cromwell And The Failure Of The Verification Stack

A federal bankruptcy filing by Sullivan & Cromwell, one of the most prestigious firms on Wall Street, contained AI-fabricated case citations. The errors were caught not by the firm's internal review but by opposing counsel at Boies Schiller Flexner, which scanned the filing and identified the hallucinations. The firm's restructuring co-head wrote an apology letter to Judge Martin Glenn acknowledging the errors and emphasizing the firm's "rigorous" AI training requirements and policies that had not been followed.

The structural reading of this incident is more interesting than the embarrassment. Sullivan & Cromwell has formal AI policies. It has mandatory training. It has an enterprise license to a major model provider. It has the kind of internal review architecture that the largest firms in the world consider sufficient. None of this was sufficient. The hallucinations passed through every layer of the firm's existing verification stack and were caught only because adversarial counsel was scanning for exactly this failure mode.
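The tooling opposing counsel used has not been described, but the simplest form of the scan that catches fabricated citations can be sketched: pull reporter-style citations out of a filing and flag any that do not appear in a trusted index. In this minimal sketch the regular expression covers only a few common US reporter formats, and `known` is a hypothetical stand-in for a lookup against a real case-law database.

```python
import re
from typing import List, Set

# Matches a few common US reporter formats, e.g. "410 U.S. 113", "5 F.4th 100",
# "512 B.R. 67", "88 F. Supp. 2d 401". Real citation formats are far more varied.
CITATION_PATTERN = re.compile(
    r"\b\d{1,4}\s+(?:U\.S\.|F\. Supp\.(?: 2d| 3d)?|F\.(?:2d|3d|4th)?|B\.R\.)\s+\d{1,5}\b"
)


def extract_citations(text: str) -> List[str]:
    """Return every citation-shaped string, with internal whitespace normalized."""
    return [" ".join(m.group(0).split()) for m in CITATION_PATTERN.finditer(text)]


def unverified_citations(text: str, known: Set[str]) -> List[str]:
    """Citations in the text that are absent from the trusted index `known`."""
    return [c for c in extract_citations(text) if c not in known]
```

A check like this only catches citations that do not exist. A real case cited for a proposition it does not support passes straight through, which is why an index lookup is a floor for verification rather than a substitute for reading the authorities.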

The implication generalizes beyond law. Every institution that handles consequential documents - regulatory filings, medical records, engineering specifications, financial disclosures - now operates in an environment where AI-fabricated content can pass through internal review and be caught only in adversarial verification. The verification market this opens is potentially large. Boies Schiller Flexner did not advertise itself as offering AI-citation auditing. It became an AI-citation auditor in the act of preparing its response. Every firm with sophisticated opposing counsel now has a verification layer it did not have to pay for, because adversarial scrutiny has become the de facto safety net. Work that does not face that scrutiny - government filings, internal corporate documents, contracts not subject to litigation - is in a different position. The verification gap is not equally distributed.

The Met Police story and the Sullivan & Cromwell story belong together for a reason beyond timing. Both describe institutions whose existing review architecture cannot keep pace with the capability now operating inside them. The Met's findings overwhelm internal affairs. Sullivan's hallucinations slipped past internal review. The path through these failures runs through new verification infrastructure at every institutional layer where AI now produces output faster than humans can audit it. That infrastructure does not yet exist. The market for it is forming in real time, in the gaps these incidents are exposing.

The verification gap has a specific shape worth naming. Adversarial counsel caught the hallucinations because adversarial counsel was paid to look. Government filings, internal corporate documents, regulatory submissions to under-resourced agencies, and contracts that never reach litigation now sit in a different category: AI-generated content passes through internal review and meets no second scanner. The verification market forming around this gap will be priced against adversarial scrutiny as the reference standard, which means the institutions whose work does not face well-funded opposition will be the last to receive the audit layer they most need.

The Hyperscaler Triangle Closes

Google's announcement of up to $40 billion in cash and compute commitment to Anthropic - $10 billion immediate at a $350 billion valuation, $30 billion contingent, and 5 GW of TPU capacity over five years - completes a structural triangle that was visible before but has now been formally instantiated. As the April 21 edition of The Century Report documented, Amazon's $5 billion investment and Anthropic's $100 billion AWS commitment had already bound one side of this triangle into Trainium-linked compute capacity for the decade. Anthropic's two largest cloud providers, Amazon and Google, are also its two largest investors and its two primary silicon suppliers. Both companies operate competing model families against Anthropic's. None of this is secret; the terms are being disclosed publicly as they are negotiated.

The Cohere-Aleph Alpha merger announced the same day operates from a different premise. Schwarz Group, a German retail conglomerate that owns Lidl, is contributing €500 million in structured financing to a combined entity valued at $20 billion. The deal is explicitly framed as sovereign AI - regulated industries, the public sector, defense, energy, finance, healthcare. The new entity will run on Schwarz Digits' STACKIT cloud platform, giving the retail giant a major enterprise customer and giving the AI vehicle European data sovereignty as a structural commitment, not a marketing claim.

The two stories represent two different bets on what kind of institutional architecture matters most. The hyperscaler triangle bets that capability concentration plus deep integration with existing cloud infrastructure produces the dominant position. The sovereign vehicle bets that jurisdictional independence, regulatory alignment, and freedom from US tech-stack dependence produce a defensible market in everything the hyperscaler triangle cannot serve. The two bets are compatible in principle. In practice, the enterprise customers each is targeting will eventually have to choose, and the choice will be made jurisdiction by jurisdiction, regulator by regulator. What is forming is not one global AI market but several structurally distinct ones, each with its own infrastructure, its own governance, and its own definition of what sovereignty over data and capability means in the intelligence era.

The triangle has a second feature beyond the financial entanglement. As infrastructure suppliers, Amazon and Google are positioned to observe Anthropic's compute consumption, model training cadence, and infrastructure requirements at a level of detail no external regulator possesses. A similar arrangement holds for OpenAI's relationships with Microsoft and Oracle. The institutions with the most granular real-time visibility into frontier model development are cloud providers, several of which compete with those models in their own product lines. Whatever oversight architecture eventually forms around frontier AI will be building visibility that already exists inside the commercial relationships, and the question of who else gets access to that visibility is being settled now by contract rather than by policy.

The Other Side

Four of today's stories share a feature that conventional coverage treats as incidental. Sullivan & Cromwell's hallucinated citations were caught by opposing counsel. The UK's hundredfold emissions discrepancy was caught by Guardian reporters. The Mercor breach that compromised Anthropic's Mythos containment was traced by security researchers reconstructing the supply chain after the fact. Project Deal's agent-quality asymmetry was surfaced by the company selling the more capable agent. In each case, the verification function was performed by an actor whose primary role was something else entirely: an adversary, a journalist, a researcher, a vendor.

The institutions formally responsible for verification - bar associations, inter-ministerial coordination bodies, AI safety regulators, market oversight authorities - did not catch these failures. They are reading about them in the same press the public is reading. This is the verification-layer story that the individual incidents only hint at: AI capability inside institutions is now producing output at a cadence that the formal oversight architecture cannot match, and the gap is being filled, story by story, by whoever happens to be looking, often for adversarial reasons.

That arrangement holds for now because adversarial scrutiny is well-funded in litigation, journalism still has investigative capacity, and security research still operates as a public-interest function. None of those conditions is structurally guaranteed over a decade. The institutions that would build durable verification infrastructure - not adversarial, not incidental, not dependent on a journalist noticing - are the same institutions today's coverage shows operating with verification frameworks AI has already outpaced. Watch for which jurisdiction funds an AI-specific audit body before the end of 2026, and which professional associations require independent output verification as a condition of practice. Those are the near-term signals that the formal layer is beginning to form. Their absence is the signal that adversarial scrutiny remains the only verification layer the AI era has actually built.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: AI agents complete 186 real transactions and show that model quality can silently determine who wins, police use Palantir-built AI to surface a year's worth of internal misconduct signals in seven days, Google commits up to $40 billion in cash and compute to Anthropic while OpenAI raids the enterprise software stack for leadership, a German retail giant backs a $20 billion sovereign AI vehicle, and medical, materials, and clean-energy stories keep widening the practical frontier of what institutions can do. There's also friction, and it's intense - Sullivan & Cromwell's formal AI policies fail to catch fabricated case citations that opposing counsel spots, two UK ministries carry AI datacentre forecasts more than tenfold apart until journalism forces a more-than-hundredfold emissions correction, Maine vetoes what would have been the country's first statewide datacentre moratorium while a $16 billion Michigan campus advances over local protest, and agent-mediated markets expose asymmetries that ordinary participants cannot see. But friction generates static, and static is how a charged system reveals where contact has become unstable. Step back for a moment and you can see it: commerce moving into machine-to-machine negotiation, governance moving into machine-assisted surveillance, enterprise AI moving into cloud-financial entanglement, sovereignty moving into jurisdiction-specific infrastructure, and verification becoming the missing layer across law, markets, policing, and climate planning at once. Every transformation has a breaking point. A scanner can turn every surface into suspicion... or reveal the hidden faults that repair has to follow.

AI Releases & Advancements

New today

[Nothing new today]

Other recent releases

- Anthropic: Launched personal app connectors in Claude for services including Spotify, Uber, Instacart, AllTrails, TripAdvisor, Audible, and TurboTax. (Anthropic)

- browser-use: Released Browser Harness, a framework for browser-based agent tasks with self-correction and fewer automation constraints. (GitHub)

- Mozart AI: Released Mozart Studio 1.0, a generative audio workstation with VST support for AI-assisted music production. (Product Hunt)

- OpenAI: Released GPT-5.5, a new flagship model available in ChatGPT and the API. (OpenAI)

- xAI: Released Grok Voice Think Fast 1.0, a voice agent now available via API. (xAI)

- DeepSeek: Released DeepSeek V4 Flash and V4 Pro preview models, available via API and as downloadable open-source weights. (DeepSeek API News)

Sources

Artificial Intelligence & Technology's Reconstitution

- TechCrunch: Anthropic created a test marketplace for agent-on-agent commerce

- CNBC: AI talent war hits enterprise software as executives jump ship to OpenAI

- TechCrunch: Why Cohere is merging with Aleph Alpha

- Wowtale: Korea's National AI Project puts 264 GPUs within reach of SMEs and startups

- AOL/Business Insider: Vibe coding is being called the greatest unlock for non-techies

- Fox News: Anthropic's Mythos AI found over 2,000 unknown software vulnerabilities in seven weeks

Institutions & Power Realignment

- Guardian: Met investigates hundreds of officers after using Palantir AI tool

- Guardian: UK departments at odds over energy demands of AI datacentres

- Futurism: Prestigious Wall Street law firm humiliated when its AI use is discovered in court

- TechCrunch: Maine's governor vetoes data center moratorium

- Guardian: Musk and Altman's bitter feud over OpenAI to be laid bare in court

- TechCrunch: OpenAI CEO apologizes to Tumbler Ridge community

- Guardian: Cannes AI film festival raises eyebrows and questions about future

- EFF: Act now to stop California's paternalistic and privacy-destroying social media ban

Scientific & Medical Acceleration

- News-Medical: Molecular gatekeepers in bacteria prevent the spread of antibiotic resistance

- News-Medical: New AI chatbot uses medical protocols to guide patient care decisions

- Science Daily: Graphene kills harmful bacteria but spares human cells

- Phys.org: Magnet with near-zero external field could reshape future electronics

- SciTechDaily: Doctors surprised by the power of a simple drug against colon cancer

- Newsday: Breast cancer tumor vaccine being studied at Stony Brook Medicine

- Ynetnews: New Israeli research changes what we knew about our liver

- Bioengineer.org: Stanford Medicine-led study shows AI enhances physician medical decision-making

Economics & Labor Transformation

- Guardian: Facing AI and a tough job market, gen Z turns to entrepreneurship

- Forbes: Hollywood's new math favors AI actors over human actors

- TechCrunch: Apple under Ternus - what comes next for the tech giant's hardware strategy

- TechCrunch: The climate tech IPO window could finally be cracking open

Infrastructure & Engineering Transitions

- Business Insider: A massive Oracle data center planned for rural Michigan secures $16 billion in funding

- CBS News: How the AI-driven data center boom is leading to skyrocketing energy bills

- Bloomberg: Hormuz crisis is biggest energy disruption ever, Yergin says

- Electrek: New bill in California senate could turn your home battery into a moneymaker

- CleanTechnica: Do-over - Republicans cry uncle on federal tax incentives

- CleanTechnica: Electric garbage trucks are the heavy-duty EV story hiding in plain sight

- Canary Media: Fossil fuel promoters tied to campaign to keep Ohio county renewable ban

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.