The Standard Model Cracks - TCR 04/25/26

The 20-Second Scan

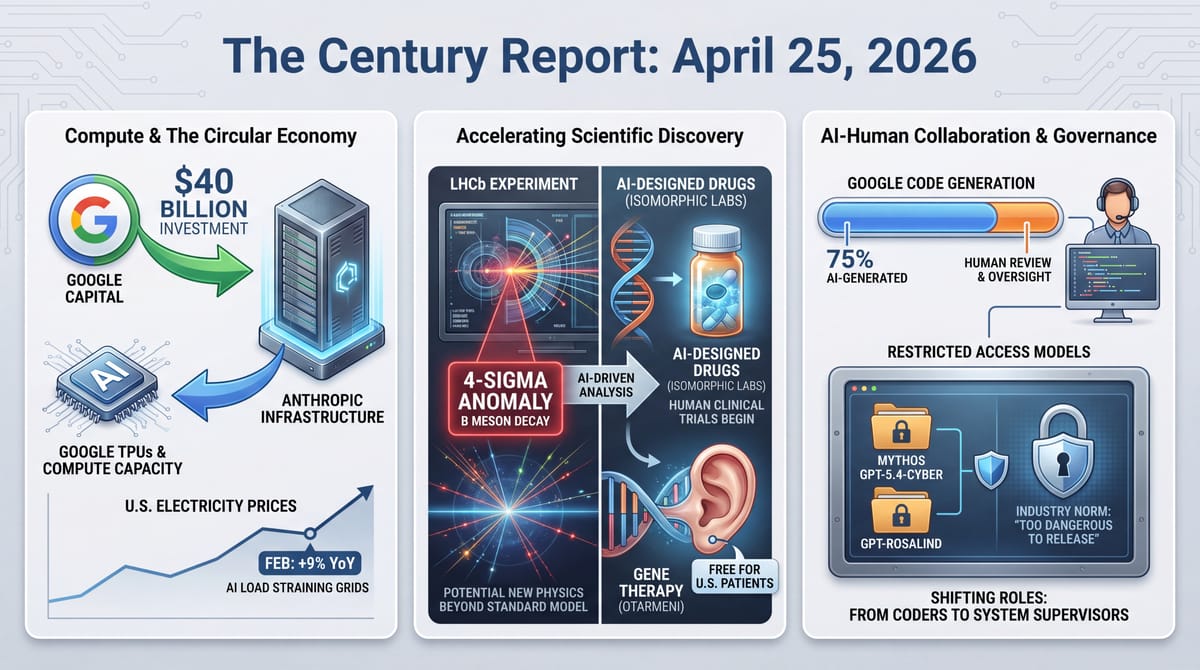

- Physicists at the LHC reported a four-sigma anomaly in an electroweak penguin decay of B mesons: both the angular distributions and the decay rates diverge from Standard Model predictions, with roughly 1-in-16,000 odds that the deviation is a random fluctuation.

- Google announced plans to invest up to $40 billion in Anthropic, with $10 billion committed immediately at a $350 billion valuation and another $30 billion contingent on performance targets.

- Isomorphic Labs, the Google DeepMind drug-discovery spinoff, confirmed it will begin human clinical trials of AI-designed molecules in oncology and immunology.

- The FDA approved the first gene therapy for genetic hearing loss, Regeneron's Otarmeni, and the company announced it will provide the treatment free to all eligible U.S. patients.

- The FDA issued national priority vouchers to three companies studying psilocybin and methylone for depression and PTSD, and cleared the first U.S. clinical trial of an ibogaine derivative for alcohol use disorder.

- Google disclosed that 75% of new code created inside the company is now AI-generated and reviewed by human engineers, up from 25% in October 2024.

- Time published an analysis framing "too dangerous to release" as a new industry norm, with Mythos, GPT-5.4-Cyber, and GPT-Rosalind all restricted from general public access.

- U.S. average retail electricity prices rose 9% year-over-year in February, with Virginia up 26.3%, Ohio up 21.9%, and Pennsylvania up 19.5%.

The 2-Minute Read

Yesterday's signal concentrated on two structural dynamics accelerating simultaneously: the infrastructure of intelligence is being financed at a scale that binds entire corporate ecosystems together, and the capabilities emerging from that infrastructure are beginning to reshape fundamental scientific boundaries. Google's $40 billion Anthropic commitment, arriving days after Amazon's $5 billion investment, establishes a pattern where cloud providers and frontier AI companies are becoming structurally inseparable. Google competes directly with Anthropic on AI models while serving as its critical infrastructure supplier. The circular economics are deliberate: capital flows in, compute capacity flows back, and both parties become more dependent on each other with every gigawatt committed. The competitive landscape of the intelligence era is being built on foundations that look less like rivalry and more like co-evolution under mutual constraint.

The scientific signals arriving in the same cycle illustrate what that infrastructure enables. Isomorphic Labs moving AI-designed drug molecules into human trials represents the first direct test of whether AlphaFold-class protein understanding translates into clinical efficacy. The FDA approving a gene therapy that restores hearing in deaf children - and the manufacturer offering it free - demonstrates how precision medicine is compressing the distance between molecular understanding and human capability. The psychedelics priority vouchers compress the regulatory timeline for an entire therapeutic class. Each of these developments required different forms of the same underlying capability: the ability to model biological systems at sufficient resolution to intervene with precision.

The LHC anomaly arriving on this particular day carries a distinct resonance. A four-sigma deviation in B meson decay - with three times more data already collected and fifteen times more expected from upgrades - describes a potential crack in the Standard Model at precisely the moment when AI-driven scientific infrastructure is accelerating how quickly such anomalies can be investigated. If the deviation holds, it would signal physics beyond the Standard Model for the first time in decades. The infrastructure being built to investigate it will look nothing like the infrastructure that discovered it. The particle accelerator is the same; the analytical intelligence brought to bear on its data is categorically different from anything available even two years ago.

The 20-Minute Deep Dive

The Standard Model Wobbles - and the Instruments to Investigate Are Changing

The Large Hadron Collider's LHCb experiment has identified an anomaly in a process so rare that it occurs roughly once per million B meson decays. The electroweak penguin decay - named for the shape of its Feynman diagram rather than any avian involvement - involves a B meson transforming into three other particles. Researchers measured both the angular distributions and the frequency of this decay. Both measurements disagree with Standard Model predictions at a statistical significance of four sigma, corresponding to roughly a 1-in-16,000 probability of being a random fluctuation.

The five-sigma threshold required for formal discovery in particle physics corresponds to 1-in-1.7-million odds, so this finding remains preliminary. Particle physics has seen promising anomalies evaporate before. The CMS experiment at the LHC published independent results last year that agree with the current study, though with lower precision. Together, the two experiments make the strongest combined case yet that something may be operating at the fundamental level of reality beyond what the Standard Model describes.
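The sigma-to-odds conversions quoted above can be checked with a few lines of arithmetic. The sketch below assumes a Gaussian distribution and a two-sided tail (a fluctuation in either direction), which is the convention that reproduces the 1-in-16,000 and 1-in-1.7-million figures. The function names are illustrative. Particle physicists often quote the one-sided tail instead, which halves the probability and puts five sigma at roughly 1 in 3.5 million.

```python
import math

def two_sided_p(sigma: float) -> float:
    """Chance of a Gaussian fluctuation at least `sigma` standard
    deviations away from the mean, in either direction."""
    return math.erfc(sigma / math.sqrt(2))

def one_sided_p(sigma: float) -> float:
    """Same tail, counting fluctuations in one direction only."""
    return 0.5 * two_sided_p(sigma)

def odds_against(p: float) -> float:
    """Express a probability p as the N in '1 in N'."""
    return 1.0 / p

# Print both conventions for the two thresholds discussed in the text.
for sigma in (4.0, 5.0):
    two = odds_against(two_sided_p(sigma))
    one = odds_against(one_sided_p(sigma))
    print(f"{sigma:.0f} sigma: two-sided 1 in {two:,.0f}, one-sided 1 in {one:,.0f}")
```

Which convention applies depends on how the experiment defines its test statistic; the source does not say, so neither column should be read as the collaboration's own figure.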

Several possible explanations exist if the anomaly proves real. Leptoquarks - particles that would unite leptons and quarks into a single framework - represent one theoretical candidate. Heavier versions of known particles could extend rather than replace the Standard Model. The "charming penguin" complication - Standard Model processes involving charm quarks that are extraordinarily difficult to calculate precisely - remains a source of uncertainty. Recent estimates suggest these effects are insufficient to explain the anomaly, but the calculations push against the limits of current mathematical techniques.

The structural significance lies in what happens next. The LHCb team has already collected three times more data since the analyzed dataset ended in 2018. Upgrades planned for the 2030s are expected to increase the data sample by a factor of fifteen. The analysis pipeline for that data will increasingly involve AI systems capable of pattern recognition across datasets whose scale and complexity exceed human analytical capacity. This extends the pattern of scientific timeline compression that the April 18 edition of The Century Report documented when OpenAI released GPT-Rosalind for experimental planning in life sciences. The instrument that detected the anomaly is mechanical. The intelligence that will determine whether it represents new physics is increasingly computational. If the Standard Model is indeed incomplete, the era that discovers what replaces it will be defined by the convergence of particle physics and machine intelligence.
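A rough sense of what the additional data could do, as a back-of-the-envelope sketch rather than a forecast. It assumes the deviation is real, its central value does not move, and the uncertainty is purely statistical, so significance grows with the square root of the sample size. None of those assumptions comes from the source. It also reads the "three times" and "fifteen times" figures as multipliers on the analyzed data sample, as the summary earlier in this edition does. Function names are hypothetical.

```python
import math

def projected_sigma(current_sigma: float, data_multiplier: float) -> float:
    """Significance after scaling the sample by `data_multiplier`,
    assuming purely statistical errors and an unchanged central value."""
    return current_sigma * math.sqrt(data_multiplier)

def multiplier_needed(current_sigma: float, target_sigma: float) -> float:
    """Sample-size multiplier needed to reach `target_sigma`
    under the same square-root scaling assumption."""
    return (target_sigma / current_sigma) ** 2

print(f"3x data:  {projected_sigma(4.0, 3):.1f} sigma")
print(f"15x data: {projected_sigma(4.0, 15):.1f} sigma")
print(f"multiplier needed for 5 sigma: {multiplier_needed(4.0, 5.0):.2f}")
```

In practice the theoretical uncertainty from the "charming penguin" contribution does not shrink with more data, so the real gain would be smaller than this scaling suggests. An anomaly that is a fluctuation would shrink rather than grow.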

The four-sigma anomaly arrives at a moment worth noting for a structural reason beyond the AI-analysis angle the story already covers. Every prior crack in the Standard Model was investigated by a community whose bottleneck was human theoretical capacity - the ability to compute higher-order corrections to quantum field theory predictions. The "charming penguin" complication is exactly this kind of bottleneck: a calculation that pushes against the limits of current mathematical techniques. If AI systems compress the timeline for those calculations the way AlphaFold compressed protein structure prediction, the distinction between "anomaly" and "discovery" stops being gated by decades of theoretical labor. The five-sigma threshold stays the same. The speed at which the theory side can rule out mundane explanations does not.

The Circular Economics of Frontier Compute Deepen

Google's $40 billion Anthropic investment creates the most structurally entangled relationship in the frontier AI ecosystem. Google Cloud provides Anthropic with tensor processing units - specialized AI chips considered among the best alternatives to Nvidia's processors. Anthropic relies heavily on this infrastructure. Google simultaneously competes with Anthropic through its Gemini model family. And Google is now investing capital at a scale that gives it substantial financial exposure to Anthropic's success.

The deal arrives days after Amazon committed $5 billion, paired with a $100 billion cloud spending commitment from Anthropic over the next decade. Anthropic now has its two largest cloud providers as its two largest investors, each supplying different compute architectures (Google's TPUs and Amazon's Trainium chips) while competing with Anthropic in the model market. This sharpens the circular compute pattern the April 21 edition of The Century Report traced when Amazon's investment and Anthropic's AWS spending pledge first made the mutual-dependence architecture explicit. Both investments valued the company at $350 billion, though Bloomberg reports investors are eager to back the company at $800 billion or more.

Anthropic's compute constraints have become publicly visible in recent weeks. Fortune documented widespread user complaints about Claude Code performance, with Anthropic ultimately publishing a detailed engineering postmortem acknowledging three separate engineering missteps. The company's statement to Fortune acknowledged that "demand for Claude has grown at an unprecedented rate, and our infrastructure has been stretched to meet it." An OpenAI internal memo, reported by CNBC, claimed Anthropic had made a "strategic misstep" by failing to secure sufficient compute and was "operating on a meaningfully smaller curve" than its competitors.

The Google and Amazon investments address this constraint directly but create a structural dependency that extends far beyond capital. Each gigawatt of committed compute capacity binds Anthropic's research trajectory to its providers' silicon roadmaps. Google's 5 gigawatts of TPU-based capacity over five years, combined with the earlier Broadcom partnership for 3.5 gigawatts, means Anthropic's models will be shaped by the hardware its investors build. This is the physical architecture of intelligence being negotiated in real time - and the negotiation is producing entanglements that make the competitive boundaries between companies increasingly difficult to draw.

As Anthropic reportedly considers an IPO as soon as October, the question of how a public company navigates having its primary competitors as its primary investors and infrastructure suppliers becomes more than theoretical. The circular economics that define frontier AI infrastructure are producing organizational forms that have no precedent in prior technology eras. The entanglement is the architecture.

The organizational form emerging here has a quiet historical parallel in early railroad finance, where the companies that built the rails also owned the freight companies, the land grants, and the coal mines - vertical entanglements so deep that the word "competitor" lost its ordinary meaning. Those structures eventually required an entirely new body of antitrust and regulatory law because existing categories could not describe what they were. Google competing with Anthropic while supplying its silicon and holding billions in its equity is producing the same kind of categorical confusion. The IPO, if it arrives in October, will force a public disclosure regime designed for companies with identifiable competitors onto an entity whose two largest investors are also direct rivals and its primary infrastructure providers. The disclosure forms themselves will need new language.

AI-Designed Drugs Approach Their First Clinical Test

Isomorphic Labs president Max Jaderberg confirmed at WIRED Health in London that the Google DeepMind spinoff will begin human clinical trials of AI-designed drug molecules. "We're gearing up to go into the clinic," Jaderberg said. "It's going to be a very exciting moment as we go into clinical trials and start seeing the efficacy of these molecules."

The timeline is later than planned - CEO Demis Hassabis had projected clinical trials by the end of 2025. Isomorphic uses AlphaFold, the platform that earned Hassabis and John Jumper a share of the 2024 Nobel Prize in Chemistry, as the foundation for drug design. The company's proprietary IsoDDE drug-design engine reportedly more than doubles AlphaFold 3's accuracy. Partnerships with Eli Lilly and Novartis run alongside an internal pipeline in oncology and immunology.

Jaderberg's description of the molecules carries a specific claim worth tracking: "Because we have so much more of an understanding about how these molecules work, we've engineered them to be very, very potent. You can take them at a much lower dose, and they'll have lower side effects, off target effects." If this holds in human trials, it would demonstrate that AI-driven structural understanding translates directly into therapeutic precision - that modeling proteins computationally produces medicines that are qualitatively different from those designed through traditional methods. It also extends the biological discovery arc the April 18 edition of The Century Report marked with GPT-Rosalind's launch and the April 3 edition documented with gene therapy restoring hearing in every treated OTOF patient.

This arrives in a week when the FDA approved Regeneron's Otarmeni gene therapy for OTOF-related hearing loss and the company announced it would provide the treatment free to all eligible U.S. patients. In clinical trials, 16 of 20 children showed hearing improvement, and five of the twelve followed for at least 11 months achieved essentially normal hearing, including the ability to hear whispers without assistive devices. Regeneron's chief scientific officer described the free rollout as highlighting "our belief that the biopharmaceutical industry can be a genuine force for good in the world."
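The trial counts above are small, so it helps to see how much statistical room they leave. The sketch below turns the two reported fractions into percentages with a 95% Wilson score interval. Treating each child as an independent binomial outcome is an assumption made here for illustration. It is not the analysis Regeneron or the FDA used, and the function name is hypothetical.

```python
import math

def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """95% Wilson score interval for a binomial proportion (z = 1.96)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

# Counts taken from the text: 16 of 20 improved; 5 of 12 reached near-normal hearing.
for label, k, n in (("any improvement", 16, 20), ("near-normal hearing", 5, 12)):
    lo, hi = wilson_interval(k, n)
    print(f"{label}: {k}/{n} = {k / n:.0%}, 95% interval {lo:.0%} to {hi:.0%}")
```

The wide intervals are a reminder that response rates from a twenty-child trial can shift noticeably as more patients are treated.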

The psychedelics priority vouchers issued the same day - to Compass Pathways for psilocybin in treatment-resistant depression, the Usona Institute for psilocybin in major depressive disorder, and Transcend Therapeutics for methylone in PTSD - extend this acceleration. The FDA also cleared the first U.S. clinical study of a noribogaine hydrochloride derivative for alcohol use disorder, a compound class never previously authorized for human testing in the United States. This follows the federal acceleration signal the April 19 edition of The Century Report tracked when the White House directed the FDA to expedite breakthrough-therapy psychedelics. Commissioner Makary indicated that decisions on some psychedelic therapies could come as soon as this summer or fall.

The convergence is striking. AI-designed molecules entering human trials, a gene therapy restoring hearing and being offered free, and an entire class of psychiatric therapeutics receiving accelerated regulatory pathways - all arriving within the same twenty-four-hour news cycle. The compression of scientific discovery timelines that The Century Report has tracked since February is producing clinical consequences at a pace that regulatory architecture is being rebuilt to accommodate.

Jaderberg's claim about lower doses and fewer off-target effects, if validated, would mark a specific inflection in what the word "drug" means. Conventional drug design begins with a molecule that does roughly the right thing and then spends years trimming its side effects through iterative chemistry. The Isomorphic claim is that computational understanding of protein structure lets you begin with precision rather than arrive at it - that the molecule enters the clinic already shaped to its target the way a key is cut for a lock rather than filed down after jamming. Regeneron offering Otarmeni free to every eligible patient in the same news cycle sharpens the point: when the cost of understanding biology drops far enough, the bottleneck shifts from "can we build this therapy" to "who pays for its distribution." One company is testing whether AI changes how medicines are designed. Another is testing whether a different business model changes how they reach patients. Both experiments are running simultaneously, and both will produce results within the next eighteen months.

75% AI-Generated Code at Google - and the Governance Gap Widens

Google disclosed that three-quarters of all new code created inside the company is now generated by AI and reviewed by human engineers. The figure has risen steadily: 25% in October 2024, 50% last fall, 75% as of this week. CEO Sundar Pichai described a recent complex code migration completed six times faster with AI agents than engineers alone could have achieved a year ago.

This acceleration is happening across every major platform simultaneously. Snap disclosed last month that 65% or more of new code is AI-generated. Meta set targets for 75% AI-assisted code from engineers in some organizations. As The Century Report documented on March 19, Google had already crossed the threshold where AI agents were writing a majority of company code, with developers shifting toward architectural judgment and agent oversight. The pattern documented at Amazon earlier this year - where AI-assisted code caused production outages including a six-hour website failure, leading to mandatory senior engineer sign-off - illustrates the governance challenge that accompanies this velocity. Code generation is outpacing the quality assurance, security review, and institutional knowledge systems that were designed for human-speed development.

The "tokenmaxxing" data The Century Report covered in the April 18 edition found that engineers with the largest AI compute budgets produce twice the throughput at ten times the cost, with code churn rates up to 9.4 times higher. Anthropic's own study found that AI could theoretically cover 94% of computer and math tasks but handles only 33% in practice. The gap between what AI can generate and what organizations can safely absorb is where the next structural failures - and the next governance innovations - will emerge.
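The figures in the paragraph above imply two simple ratios, worked out in the sketch below. It uses only the numbers quoted in the text. The function names are illustrative, and reading "twice the throughput at ten times the cost" as a per-engineer comparison is an assumption.

```python
def relative_unit_cost(throughput_multiplier: float, cost_multiplier: float) -> float:
    """Cost per unit of output, relative to the baseline engineer."""
    return cost_multiplier / throughput_multiplier

def realized_share(practical_pct: float, theoretical_pct: float) -> float:
    """Fraction of the theoretically coverable tasks handled in practice."""
    return practical_pct / theoretical_pct

# 2x throughput at 10x cost: each unit of output costs 5x the baseline.
print(f"unit cost multiplier: {relative_unit_cost(2, 10):.1f}x")
# 33% in practice against 94% in theory: about a third of the potential is realized.
print(f"realized share of theoretical coverage: {realized_share(33, 94):.0%}")
```

Neither ratio accounts for code churn, which would raise the effective cost of the output that survives review.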

The 25-to-50-to-75 percent trajectory over eighteen months describes a rate of change that makes the endpoint arithmetically visible: at this pace, the residual human-written fraction approaches craft-scale production within two years. What shifts at that point is the meaning of "software engineer" - from someone who writes code to someone who maintains coherence across systems largely composed by machines. The closest analogy may be the transition in publishing from typesetter to editor, where the skill migrated from production to judgment. But the publishing analogy understates the stakes, because the typesetter's errors were visible on the page. Code that is 75% machine-generated carries failure modes that propagate silently through dependencies, and the Amazon outage pattern already documented shows what happens when review cadence falls behind generation cadence. The governance innovations that emerge from this gap will define software engineering as a profession more than the code itself.
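The "arithmetically visible" endpoint can be made concrete with a naive straight-line extrapolation. The sketch below uses only the two dated points in the text (25% in October 2024, 75% as of this April 2026 edition) and assumes the share keeps rising at a constant rate, which is a strong assumption: adoption curves usually flatten as they approach saturation. Function names are hypothetical.

```python
def months_between(start: tuple[int, int], end: tuple[int, int]) -> int:
    """Whole months from (year, month) `start` to (year, month) `end`."""
    return (end[0] - start[0]) * 12 + (end[1] - start[1])

def linear_months_to_target(p_start: float, p_now: float,
                            elapsed_months: float, target: float) -> float:
    """Months until `target` percent is reached if the past slope continues."""
    slope = (p_now - p_start) / elapsed_months  # percentage points per month
    return (target - p_now) / slope

elapsed = months_between((2024, 10), (2026, 4))
print(f"elapsed months: {elapsed}")
print(f"months to 90%:  {linear_months_to_target(25, 75, elapsed, 90):.1f}")
print(f"months to 100%: {linear_months_to_target(25, 75, elapsed, 100):.1f}")
```

Under that constant-rate assumption the line reaches 100% well inside the two-year window the paragraph describes; a saturating curve would take longer and might never reach it.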

"Too Dangerous to Release" Becomes Industry Architecture

Time's analysis of the restricted-release pattern across Mythos, GPT-5.4-Cyber, and GPT-Rosalind frames a structural shift in how frontier AI capability reaches the world. Three models from two companies, all released in April 2026, all withheld from general public access. The pattern raises a question that Time's sources articulate clearly: whether private companies should be making the increasingly consequential decisions about who can access the most powerful intelligence systems ever built.

Peter Wildeford of the AI Policy Network told Time that "frontier developers are restricting access to their most capable models because they are genuinely worried about some of the capabilities these models have." The dual-use challenge is sharpest in cybersecurity and biology: the same model that helps a researcher find software vulnerabilities helps an attacker exploit them. The same model that advances viral research could hypothetically assist in bioweapon design. This codifies the staged and restricted deployment pattern the April 16 edition of The Century Report identified when OpenAI and Anthropic adopted opposing release strategies for frontier cyber capability.

Steph Batalis of the Center for Security and Emerging Technology raised an equity dimension: defining "legitimate" researchers is easier within U.S. institutions than internationally, creating access disparities that could concentrate the benefits of frontier capability among already-advantaged nations and organizations. And the open-source diffusion timeline - historically three to seven months behind proprietary models according to Epoch AI - means that restricted-release governance is a temporary containment architecture by design.

The structural implication extends beyond any single model. If the most capable AI systems are routinely withheld from public access, the relationship between frontier capability and democratic governance changes fundamentally. The question is what institutional architecture emerges to govern distribution decisions that private companies are currently making unilaterally - and whether that architecture can form before the capability gap between restricted and public models becomes socially and economically consequential.

The Other Side

Time's analysis frames "too dangerous to release" as an emerging industry norm and centers the debate on whether private companies should make these distribution decisions. The framing is reasonable. It is also incomplete in a specific way that the access lists themselves reveal.

Mythos reached roughly 40 organizations through Project Glasswing. GPT-5.4-Cyber scaled to thousands of cybersecurity defenders. GPT-Rosalind went to vetted life-science researchers. In each case, the company chose who qualified. In each case, the qualifying institutions skewed toward finance, defense, intelligence, and well-credentialed research labs. In each case, the language surrounding the restriction was the language of care: staged deployment, responsible release, adaptive risk management. And in each case, the capability in question - cybersecurity auditing, biological research acceleration, advanced reasoning - is not niche. It is infrastructural. It is the kind of capability whose benefits compound for whoever holds it during the restricted window.

This is where two fact-consistent readings diverge and both deserve attention. Reading one: these are genuinely dangerous tools and the companies distributing them are doing their best to prevent catastrophic misuse during the period before open-weight models close the gap. The 3-to-7-month diffusion timeline Epoch AI documents means the restriction is temporary by design. Reading two: the temporary window is precisely when structural advantage accrues. The organizations on the early-access lists are overwhelmingly those whose competitive position depends on the current arrangement of financial, military, and informational infrastructure. The organizations absent from early access - CISA, public health agencies, educational institutions, open-source security maintainers, journalists - are the ones whose mandates involve distributing capability broadly rather than concentrating it. Both readings explain the same facts. The difference is which institutions' interests the interim serves, and whether 3 to 7 months of compounding advantage in cybersecurity, drug discovery, and advanced reasoning reshapes the ground before the commons arrives.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: physicists find a four-sigma crack in the Standard Model with vastly larger datasets already waiting, AI-designed molecules move toward their first human clinical trials, gene therapy restores hearing and is offered free to every eligible U.S. patient, psychedelic treatments for depression, PTSD, and addiction gain accelerated paths through the FDA, and Google says AI now generates 75% of its new code while committing up to $40 billion in cash and compute to Anthropic. There's also friction, and it's intense - electricity prices are rising sharply as AI load collides with grid limits, Anthropic's infrastructure strain is degrading product performance in public view, the most capable models are being kept behind restricted-release gates that private companies control, and the benefits of frontier systems are being distributed through access rules that already favor the institutions closest to power. But friction generates resonance, and resonance is how a hidden structure starts to announce itself through repeated strain. Step back for a moment and you can see it: scientific discovery accelerating at the level of particles and proteins at once, medicine shifting from broad intervention toward precise repair and targeted mental-health treatment, software production becoming a human-machine supervisory process, and the infrastructure of intelligence consolidating through deep interdependence among rivals, suppliers, and states. Every transformation has a breaking point. Vibrations can shake a structure apart... or tune it into coherence strong enough to carry a new era.

AI Releases & Advancements

New today

- Anthropic: Launched personal app connectors in Claude for services including Spotify, Uber, Instacart, AllTrails, TripAdvisor, Audible, and TurboTax. (Anthropic)

- browser-use: Released Browser Harness, a framework for browser-based agent tasks with self-correction and fewer automation constraints. (GitHub)

- Mozart AI: Released Mozart Studio 1.0, a generative audio workstation with VST support for AI-assisted music production. (Product Hunt)

Other recent releases

- OpenAI: Released GPT-5.5, a new flagship model available in ChatGPT and the API. (OpenAI)

- xAI: Released Grok Voice Think Fast 1.0, a voice agent now available via API. (xAI)

- DeepSeek: Released DeepSeek V4 Flash and V4 Pro preview models, available via API and as downloadable open-source weights. (DeepSeek API News)

- OpenAI: Released OpenAI Privacy Filter, an open-weight model for detecting and redacting personally identifiable information in text. (OpenAI)

- OpenAI: Launched workspace agents in ChatGPT, Codex-powered cloud agents for automating workflows across tools in shared workspaces. (OpenAI)

- Qwen: Released Qwen3.6-27B, a 27B dense open-weight model available on Hugging Face. (Hugging Face)

- Zed: Released Parallel Agents in Zed, enabling multiple AI agents to work simultaneously on coding tasks. (Zed)

- Microsoft: Released support in the Teams SDK for bringing AI agents directly into Teams workspaces. (Microsoft Teams SDK)

- IFTTT: Launched IFTTT MCP, an MCP integration that connects Claude to more than 1,000 apps. (IFTTT)

- DeepSeek: Working on releasing DeepEP V2 and TileKernels for expert parallelism and optimized kernels on GitHub - merge issues delayed release until April 26. (GitHub)

Sources

Artificial Intelligence & Technology's Reconstitution

- TechCrunch: Google to Invest Up to $40B in Anthropic

- Ars Technica: Google Will Invest as Much as $40 Billion in Anthropic

- 9to5Google: Google Investing Up to $40 Billion in Anthropic

- Fortune: Anthropic Explains Claude Code's Recent Performance Decline

- AOL/Business Insider: Google Says 75% of New Code Is AI-Generated

- Time: 'Too Dangerous to Release' Is Becoming AI's New Normal

- CNBC: Meta Will Adopt Hundreds of Thousands of AWS Graviton Chips

- Wired: Discord Sleuths Gained Unauthorized Access to Anthropic's Mythos

- MIT Technology Review: Three Reasons Why DeepSeek's New Model Matters

- The Verge: DeepSeek Previews New AI Model

Institutions & Power Realignment

- Guardian: US Justice Department Steps in on Behalf of xAI in Colorado Regulation Case

- CNBC: U.S. State Department Orders Global Warning About Alleged China AI Thefts

- Ars Technica: US Accuses China of "Industrial-Scale" AI Theft

- Guardian: California Lawmakers Seek Tougher Rules for Big Tech on Child Safety

- Politico: Maine Governor Vetoes Data Center Moratorium Bill

Scientific & Medical Acceleration

- Yahoo News/Phys.org: LHC Penguin Decay Anomaly Reveals Standard Model Strain

- Wired: Isomorphic Labs AI-Designed Drugs Headed to Human Trials

- New York Post: FDA OKs First Gene Therapy for Hearing Loss

- CNN: FDA Moves to Fast-Track Review of Psilocybin and Methylone

- CNBC: FDA Fast-Tracks Psychedelic Drug Research

- MIT Technology Review: Health-Care AI Is Here. We Don't Know If It Actually Helps Patients.

Economics & Labor Transformation

- CNBC: Nike Cuts 1,400 Roles

- Business Insider: 4 Workers Explain How They Added AI to Their Job Titles

- Guardian: Grok Tells Researchers Pretending to Be Delusional to 'Drive an Iron Nail Through the Mirror'

Infrastructure & Engineering Transitions

- Utility Dive: Average US Electricity Prices Rose 9% Year Over Year

- Utility Dive: AI Data Centers Are Upending Utility Load Planning

- Utility Dive: What's Stalling Data Center Projects

- Utility Dive: DTE Proposes $474M Michigan Electric Rate Hike

- Canary Media: Solar Power Soared Last Year

- Canary Media: Duke Energy's Proactive Grid Upgrades Under Fire

- Canary Media: Another Offshore Wind Firm Seeking Payout

- Canary Media: Fossil Fuel Promoters Tied to Ohio Renewable Ban Campaign

- Canary Media: Which Countries Lead on Nuclear Energy

- Guardian: UK Officials Hugely Underestimated AI Data Centre Carbon Emissions

- Electrek: ABB E-mobility's New Modular EV Fast Charger

- Electrek: Florida Convention Center Doubles Solar With No Added Panels

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.