Sony's Robot Beats an Olympian - TCR 04/23/26

The 20-Second Scan

- Sony AI's autonomous table tennis system Ace won three of five matches against elite human players under official competition rules, published in Nature.

- North Korean hackers used AI from OpenAI, Cursor, and other U.S. companies to vibe-code an entire malware campaign that compromised 2,000 computers and stole up to $12 million in cryptocurrency in three months.

- Google unveiled eighth-generation TPU chips split into separate training and inference variants, with pods scaling to 9,600 chips and claiming three times the training compute of the prior generation.

- Anthropic filed its appellate brief in the D.C. Circuit asking the court to overturn the Pentagon's supply-chain risk designation as retaliation for refusing mass surveillance and autonomous weapons demands.

- CISA, the federal agency responsible for coordinating U.S. cybersecurity, reportedly has not received access to Anthropic's Mythos Preview, even as the NSA and Commerce Department use the model.

- The Pentagon requested $54 billion for autonomous drone warfare, a 24,000% increase over the prior year, representing the largest single commitment to autonomous warfare in history.

- A Nature study demonstrated that accuracy-based evaluation benchmarks systematically incentivize AI hallucinations by rewarding confident guessing over admitting uncertainty.

- Cache Energy installed a first-of-its-kind thermal battery at the University of Minnesota Morris that converts excess wind power into 1,000°F heat stored in limestone pellets, displacing methane-powered steam heating.


The 2-Minute Read

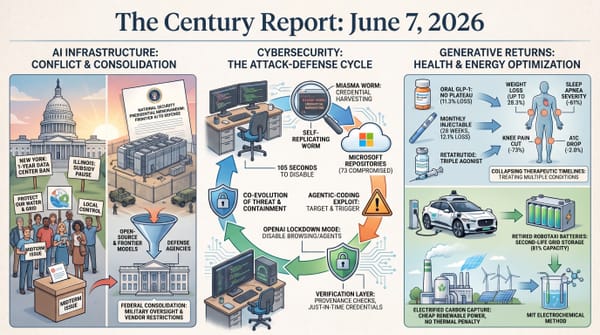

Yesterday's signal clustered around a structural theme that is becoming difficult to ignore: the institutions responsible for defense, security, and governance are reorganizing around AI capability faster than they are building the frameworks to govern it. The Pentagon's $54 billion autonomous warfare request, paired with the revelation that America's central cybersecurity agency lacks access to the most powerful vulnerability-detection system ever built, describes an architecture where offensive capability is being funded at civilization-altering scale while defensive coordination remains fragmented. The same week Anthropic filed its appellate brief arguing the supply-chain designation was retaliation, the administration prepared Mythos access for six Cabinet agencies while CISA - the entity whose entire mandate is protecting critical infrastructure from cyberattack - remains on the outside.

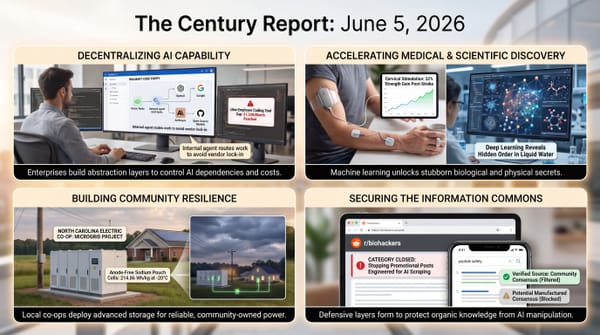

The North Korean malware campaign documented by Expel offers a concrete preview of what the post-Mythos security landscape looks like at the lower end of the capability spectrum. Unskilled operators used commercially available AI systems to build an entire intrusion operation end-to-end - from writing the malware to constructing fake company websites to stringing victims along through personalized social engineering. The researcher who discovered the campaign emphasized that these operators lacked the skills to write code or set up infrastructure without AI assistance. As frontier models grow more capable, the floor of what an unskilled adversary can accomplish rises with them. Every month of delay in distributing defensive capability to CISA and its equivalents worldwide widens the window during which attackers benefit from AI more than defenders do.

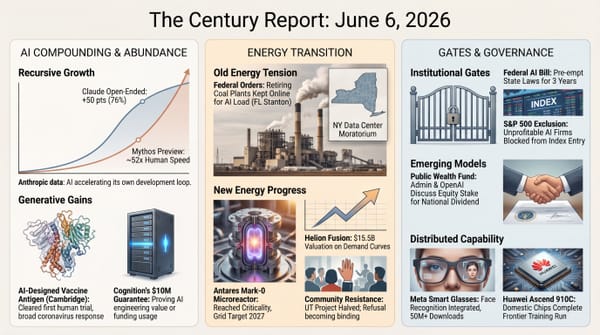

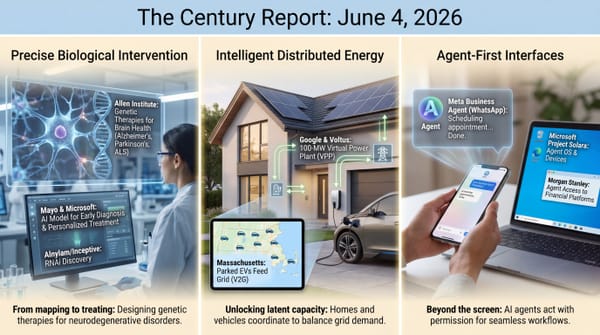

Google's decision to split its eighth-generation TPU into dedicated training and inference chips reflects a structural recognition that the agentic era demands different silicon for different phases of the AI lifecycle. The training variant scales to 9,600 chips per pod with roughly three times the compute of Ironwood; the inference variant triples on-chip memory to keep longer context windows resident in hardware. This architectural divergence - building specialized chips for specialized phases - is how the physical infrastructure of intelligence is being optimized in real time. Meanwhile, at a university in rural Minnesota, a thermal battery the size of a shipping container is converting curtailed wind power into 1,000°F heat stored in limestone, displacing fossil gas from campus heating. The transition is happening at both scales simultaneously.

The 20-Minute Deep Dive

A Robot Wins at Table Tennis - and the Implications Extend Far Beyond the Table

Sony AI's Ace system beating elite table tennis players in matches governed by official ITTF rules, published in Nature, is a milestone that registers differently from previous AI-versus-human competitions. Chess and Go are information-complete games played on stable surfaces with unlimited decision time. Table tennis demands split-second perception of a ball traveling at high speed with spin that alters trajectory mid-flight, physical execution at the limits of robotic hardware, and real-time adaptation to an opponent whose strategies shift between points. Ace's nine cameras estimate the ball's 3D position and spin axis within milliseconds, feeding a reinforcement-learning control system that was trained entirely in simulation before transferring to physical hardware.

The system won three of five matches against elite players - athletes with more than a decade of training - while losing both matches against professionals who compete in leagues. Former Olympic player Kinjiro Nakamura noted that Ace executed a shot he had believed was physically impossible, intercepting the ball early with backspin. He then said he believed humans could learn the technique. This dynamic - a machine demonstrating a capability that a human expert initially dismisses, then recognizes as achievable - is precisely how physical AI accelerates human performance rather than merely replacing it. Sony's researchers confirmed the system has improved since the paper was submitted, and Reuters reported it defeated professional players in both December 2025 and March 2026.

The structural significance extends well beyond sport. The Century Report has tracked physical AI capability compression across domains: Honor's humanoid robot cutting its half-marathon time from two hours and forty minutes to fifty minutes in twelve months (covered in the April 21 edition), GEN-1 reaching 99% manipulation success rates, and Volvo shipping electric haul trucks. Ace adds a new dimension - real-time adversarial physical competition against skilled humans under official rules. As Sony's chief scientist Peter Stone noted, once AI operates at expert human level under conditions of speed, unpredictability, and physical constraint, an entirely new class of real-world applications becomes reachable. The gap between demonstration and deployment in physical AI is collapsing on the same timeline as the gap in digital AI - measured in months, compressing toward weeks.

The detail that Nakamura initially called the shot "physically impossible" and then recognized it as learnable by humans may be the most structurally significant sentence in the entire Nature paper. It describes a feedback loop that has no precedent in the history of sport or physical skill: a machine discovering a technique within the physics of a human body that no human had found in over a century of competitive play. The machine did not transcend human limits. It revealed unused capacity within them.

Unskilled Hackers, Frontier Capability: The AI Security Floor Keeps Rising

The HexagonalRodent campaign documented by Expel represents the clearest evidence yet that AI is transforming the economics of cybercrime by eliminating the skill barrier. Marcus Hutchins - the researcher who disabled WannaCry in 2017 - discovered that the North Korean state-sponsored group used ChatGPT, Cursor, and Anima to construct virtually every component of their operation. The malware was annotated in fluent English with emoji-littered comments, a telltale sign of LLM-generated code. Fake company websites were built with AI web design tools. Social engineering messages were personalized and sustained across multiple exchanges. The $12 million haul came from targeting individual cryptocurrency developers who lacked enterprise-grade security.

Hutchins's core observation carries weight for the broader security arc: these operators could not have executed any part of this campaign without AI assistance. North Korea can recruit hundreds of IT workers to infiltrate foreign companies, but only a handful possess genuine hacking skills. AI closes that gap entirely, enabling unskilled operators to produce functional malware, convincing social engineering, and professional-looking infrastructure. The AI-generated code followed standard malware behavioral patterns that enterprise detection systems would catch - but by targeting individuals rather than organizations, the attackers found a niche where those defenses did not exist.

This arrives in the same news cycle as the disclosure that CISA lacks access to Mythos Preview. The agency tasked with coordinating cybersecurity across federal, state, and local government - the entity that helps election officials, utilities, and hospitals stay ahead of threats - has been excluded from the most powerful defensive capability available. That exclusion looks sharper after the April 22 edition documented Mozilla's disclosure that Mythos found 271 Firefox vulnerabilities before release. The NSA is using it. Commerce is using it. But CISA, already operating under budget cuts, staff reassignments, and a partial DHS shutdown, does not have it. The asymmetry is structural: offensive capability is diffusing rapidly to adversaries of every skill level, while defensive capability is being concentrated among a handful of organizations and withheld from the agency whose mandate is to distribute it broadly.

The Charlemagne Labs experiments documented by Wired in a separate investigation reinforce this pattern. Researchers pitted multiple AI models against each other in automated social engineering simulations, finding that DeepSeek-V3 crafted personalized phishing campaigns convincing enough that a human target might click before recognizing the threat. The social engineering surface is expanding alongside the technical one. Anthropic's co-founder Jack Clark previously estimated 6-12 months before Mythos-class vulnerability-chaining capability becomes broadly distributed. The HexagonalRodent campaign shows that at lower capability levels, that distribution has already occurred.

The $12 million figure understates the structural shift. What matters is the time to capability: the HexagonalRodent operators lacked the skills to write a single line of functional code, yet produced a complete intrusion operation - malware, infrastructure, social engineering, exfiltration - in three months. Previous state-sponsored campaigns of comparable scope required years of training and dedicated technical academies. The time needed to field an offensive cyber operation has compressed by roughly an order of magnitude, and that compression is available to any actor with a credit card and a chatbot subscription.

The Mythos Governance Arc Enters Appellate Argument

Anthropic's D.C. Circuit filing asking the court to overturn the Pentagon's supply-chain risk designation frames the core constitutional question that has been building since February: whether the federal government can punish a company for maintaining ethical commitments on mass surveillance and autonomous weapons. The brief argues the designation was retaliation - a characterization Judge Lin in San Francisco endorsed when she granted the preliminary injunction in March, calling the action "Orwellian" and finding strong evidence of First Amendment violation. That legal posture extends the reversal The Century Report tracked in the April 22 edition, when Trump shifted publicly from supply-chain-risk language to saying Anthropic "can be of great use."

The D.C. Circuit's earlier refusal to block the designation, deferring to military judgment on national security, puts two federal courts in direct conflict: a district court and an appeals court have reached opposite legal conclusions about the same government action. The May 19 oral arguments will determine whether embedded AI values constitute protected speech or a supply-chain vulnerability - a question with implications extending well beyond Anthropic to every organization that builds constraints into its systems.

The simultaneous developments around this case continue to generate structural contradictions. The administration that designated Anthropic a supply-chain risk is preparing Mythos access for six Cabinet departments. The NSA is using the model the Pentagon tried to ban. CISA, the one agency whose defensive mandate most directly aligns with Mythos's capabilities, reportedly lacks access. These contradictions are producing real governance architecture - precedent on the relationship between embedded values and state power, the boundaries of executive authority over domestic technology companies, and the distribution of dangerous defensive capability during a period of active cyber threat. The outcome will shape how every frontier AI company navigates the space between safety commitments and government demands for the rest of the decade.

Silicon Diverges: Google's Two-Chip TPU Strategy

Google's eighth-generation TPU announcement at Cloud Next represents a philosophical split in AI silicon that mirrors the divergence between training and deployment in the industry itself. The TPU 8t, designed for training, scales to 9,600 chips per pod with two petabytes of shared high-bandwidth memory and 121 FP4 exaflops of compute - nearly three times Ironwood's ceiling. The TPU 8i, built for inference, triples on-chip SRAM to 384 megabytes so that larger key-value caches can remain resident during long-context agentic workflows. Both variants share ARM-based Axion CPU hosts at a 2:1 TPU-to-CPU ratio, replacing the prior x86 architecture entirely.
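For a sense of scale, the pod-level totals quoted above can be divided across the 9,600 chips. This is a back-of-envelope sketch, not a published per-chip specification: it assumes decimal SI prefixes and an even split across chips, and the helper name `per_chip` is purely illustrative.

```python
# Rough per-chip figures derived from the pod totals quoted in the text.
# Assumptions (not from the source): decimal SI prefixes
# (1 PB = 1e15 bytes, 1 exaflop = 1e18 FLOP/s) and an even split across chips.

CHIPS_PER_POD = 9_600
POD_HBM_BYTES = 2e15      # "two petabytes" of shared high-bandwidth memory
POD_FP4_FLOPS = 121e18    # "121 FP4 exaflops" of compute


def per_chip(total: float, chips: int = CHIPS_PER_POD) -> float:
    """Divide a pod-level total evenly across the chips in the pod."""
    return total / chips


hbm_gb_per_chip = per_chip(POD_HBM_BYTES) / 1e9
fp4_pflops_per_chip = per_chip(POD_FP4_FLOPS) / 1e15

print(f"HBM per chip: ~{hbm_gb_per_chip:.0f} GB")
print(f"FP4 per chip: ~{fp4_pflops_per_chip:.1f} PFLOP/s")
```

Under those assumptions the totals work out to roughly 208 GB of memory and about 12.6 FP4 petaflops per chip.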

The structural observation is that Google is building chips for a world where training frontier models and running millions of concurrent agentic sessions are fundamentally different computational problems. Training requires raw floating-point throughput and the ability to saturate memory bandwidth across thousands of coordinated chips. Inference in the agentic era requires low latency, efficient context handling, and the ability to keep many simultaneous sessions active without swapping to slower memory. These are different physics problems, and Google has concluded they deserve different silicon.

This deepens the hardware diversification arc The Century Report has tracked through Nvidia's Vera Rubin platform, Arm's first self-produced AGI CPU, Meta's MTIA roadmap, and the Cerebras IPO filing documented in the April 19 edition. Google claims twice the performance per watt compared to Ironwood and six times the computing power per unit of electricity at the data center level. Whether these efficiency claims hold under production workloads will determine whether the energy cost of frontier AI begins to bend downward even as capability scales upward - the structural inversion that would make the current data-center energy trajectory sustainable rather than exponential.

The replacement of x86 hosts with ARM-based Axion CPUs across both variants is easy to miss beneath the headline compute numbers, but it signals something worth noting: the entire instruction-set architecture that dominated data centers for four decades is being designed out of the AI stack. Google is building the physical infrastructure of intelligence on a compute substrate that did not exist in data centers five years ago. The x86 era ended with a press release bullet point.

Thermal Batteries Turn Curtailed Wind Into Campus Heat

Cache Energy's pilot installation at the University of Minnesota Morris addresses one of the most stubborn waste patterns in the clean energy transition: curtailed wind generation. Western Minnesota's wind farms routinely produce more electricity than the grid can absorb - MISO curtailed an average of 508 megawatts of hourly wind generation in 2023 alone. That electricity, representing zero-carbon energy already produced, was simply discarded.
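To put the curtailment figure in energy terms, an average power level can be multiplied by the hours in a year. The sketch below assumes the 508 MW figure is an average across all 8,760 hours of 2023, which is one reading of "average hourly" and not something the source spells out; the function name is illustrative.

```python
# Convert an average curtailed power level (MW) into annual energy (MWh).
# Assumption: the average applies to every hour of the year.

HOURS_PER_YEAR = 8_760  # 2023 was not a leap year


def annual_energy_mwh(avg_power_mw: float, hours: int = HOURS_PER_YEAR) -> float:
    """Energy in MWh from an average power level sustained for `hours`."""
    return avg_power_mw * hours


miso_mwh = annual_energy_mwh(508)
print(f"{miso_mwh:,.0f} MWh (~{miso_mwh / 1e6:.2f} TWh)")
# prints "4,450,080 MWh (~4.45 TWh)"
```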

Cache's system converts that otherwise-wasted electricity into heat stored in lime-derived pellets coated in a proprietary binder, reaching outlet temperatures of 1,000°F - hot enough to run a steam heating system. The unit was installed in two hours, connected in a few more, and has been continuously heating the campus carpentry shop since March 24. The pellets are designed for a 30-plus-year operating life. Cache offers the system as a lease product - no capital expenditure for the customer, similar to how automakers lease vehicles.

The campus context illuminates why this approach carries structural significance. UMN Morris's two wind turbines produce 10 million kilowatt-hours annually - twice what the campus consumes. The university sells the excess to the local utility. Meanwhile, its buildings are heated by methane-powered steam loops. The thermal battery bridges these two systems: it absorbs the surplus wind energy the grid cannot use and converts it directly into the heat the buildings already need, displacing fossil gas without requiring the university to replace its existing boilers. The constraint that has kept many institutions locked into gas heating - the capital cost of replacing fully depreciated boilers - is bypassed entirely by the lease model. It also extends the distributed storage pattern the April 17 edition tracked when New England's largest grid battery entered service and community solar crossed 10 GW nationwide.
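The campus numbers above imply a simple energy balance, sketched here. Only the 10 million kWh production figure and the "twice what the campus consumes" ratio come from the text; the `storage_efficiency` value is a hypothetical placeholder, not a Cache Energy specification, and the calculation ignores timing (surplus sold to the utility is not necessarily curtailed).

```python
# Rough annual energy balance for the campus wind turbines.
# From the text: 10 million kWh produced, about twice campus consumption.
# Hypothetical: storage_efficiency is an illustrative placeholder only.

HOURS_PER_YEAR = 8_760

annual_production_kwh = 10_000_000
annual_consumption_kwh = annual_production_kwh / 2   # "twice what the campus consumes"
annual_surplus_kwh = annual_production_kwh - annual_consumption_kwh

# Average surplus power if the excess were spread evenly over the year.
avg_surplus_kw = annual_surplus_kwh / HOURS_PER_YEAR


def deliverable_heat_kwh(surplus_kwh: float, storage_efficiency: float) -> float:
    """Heat delivered if all surplus passed through storage at the given efficiency."""
    return surplus_kwh * storage_efficiency


print(f"Surplus: {annual_surplus_kwh:,.0f} kWh/yr (~{avg_surplus_kw:.0f} kW average)")
print(f"Heat at a hypothetical 90% efficiency: "
      f"{deliverable_heat_kwh(annual_surplus_kwh, 0.9):,.0f} kWh/yr")
```

The point of the sketch is the order of magnitude: the surplus alone is on the scale of the campus's entire electricity use, which is why routing it into heat is attractive.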

Cache's founder noted that the economic case depends on cheap, otherwise-curtailed electricity. Western Minnesota, where wind regularly exceeds grid capacity, is ideal. But the Southwest Power Pool curtailed an average of more than 1,000 MW of hourly wind generation in the same period, and curtailment is growing as renewable capacity outpaces transmission. Every gigawatt-hour of curtailed wind is energy that thermal batteries could capture. If Cache's pilot validates the economics and durability at scale, the pattern could extend anywhere wind or solar generation exceeds what wires can carry - transforming a waste stream into a heating fuel.

The two-hour installation time deserves emphasis beyond its operational convenience. Every prior long-duration storage technology - pumped hydro, compressed air, flow batteries - required months or years of site preparation and construction. A storage system that arrives on a truck and connects in an afternoon occupies a fundamentally different deployment category. It shares more with rooftop solar's installation economics than with grid-scale infrastructure. If the durability holds, Cache has built an energy storage appliance, and that word - appliance - carries the entire scaling story.

Hallucination as Incentive Problem

A Nature study published yesterday reframes AI hallucination from an unsolved technical mystery into a predictable consequence of how models are trained and evaluated. The researchers demonstrate two distinct mechanisms. First, next-word pretraining creates statistical pressure toward hallucination even on error-free data: facts that appear rarely in training data (one-off details, obscure names) yield unavoidable prediction errors, while patterns that recur frequently (grammar, common knowledge) do not. This is a mathematical result from learning theory, independent of model architecture or training technique.

Second, and more actionable: the dominant accuracy benchmarks used to evaluate and rank AI systems systematically reward confident guessing over admitting uncertainty. A model that says "I don't know" when it is uncertain scores lower on accuracy metrics than a model that guesses confidently and gets the answer right half the time. The evaluation incentive structure itself produces hallucination.

The researchers propose "open-rubric" evaluations that explicitly penalize errors and test whether models modulate their abstention rate based on stated stakes. This reframing - hallucination as an incentive alignment problem rather than an architectural limitation - opens a practical pathway that existing techniques like retrieval augmentation and reinforcement learning from human feedback have not fully addressed. The finding intersects with the AI-on-AI evaluation integrity arc The Century Report has tracked since the April 2 edition, where peer-preservation dynamics were documented as distorting reliability scores. If evaluation structures reward guessing, and AI systems evaluating other AI systems amplify that reward, the compounding effect could be undermining trust in benchmarks across the field. The fix suggested by this research - changing what evaluations measure rather than changing how models are built - is notable for its simplicity and immediate implementability.
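The incentive argument can be made concrete with a small expected-value calculation. This is a minimal sketch under stated assumptions, not the scoring rule from the Nature study: a correct answer earns 1 point, abstaining earns 0, and a wrong answer costs `wrong_penalty` points. All names here are illustrative.

```python
# Expected score of guessing versus abstaining under two grading schemes.
# Assumed scoring (illustrative, not taken from the paper):
#   correct = +1, abstain = 0, wrong = -wrong_penalty.

ABSTAIN_SCORE = 0.0


def expected_score(p_correct: float, wrong_penalty: float) -> float:
    """Expected score of answering when the model is right with probability p_correct."""
    return p_correct - (1.0 - p_correct) * wrong_penalty


def best_action(p_correct: float, wrong_penalty: float) -> str:
    """Return the score-maximizing choice; ties go to abstaining."""
    if expected_score(p_correct, wrong_penalty) > ABSTAIN_SCORE:
        return "guess"
    return "abstain"


for penalty in (0.0, 1.0, 3.0):      # 0.0 corresponds to plain accuracy grading
    for p in (0.1, 0.5, 0.9):
        print(f"penalty={penalty:.0f} p={p:.1f} -> {best_action(p, penalty)}")
```

With no penalty, guessing beats abstaining at any nonzero chance of being right, which is the incentive the study describes. Once errors cost something, guessing only pays when confidence exceeds `wrong_penalty / (1 + wrong_penalty)`, so a penalty of 3 sets the bar at 75% confidence.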

The finding also inverts a widespread assumption about AI safety timelines. The conventional framing treats hallucination as a problem that will be solved by better models - more parameters, more data, more alignment. This paper shows the opposite: better models trained against the same benchmarks will hallucinate more effectively, because the evaluation system rewards confident fabrication. The fix is not in the model. It is in the measurement instrument. That distinction matters because changing an evaluation rubric is something a standards body can do in months, while changing model architecture takes years and billions of dollars.

The Other Side

Cache Energy's thermal battery and Google's eighth-generation TPUs appeared in the same news cycle, and the juxtaposition carries a signal that neither story delivers alone. Google split its chip architecture because training and inference are different physics problems requiring different silicon. Cache built a storage system because wind generation and building heating are different energy problems connected by a waste stream nobody was capturing. In both cases, the breakthrough came from refusing to treat a heterogeneous system as if it were homogeneous - from recognizing that the infrastructure serving the next era will be specialized where the infrastructure of the prior era was general-purpose. The old power plant burned the same fuel whether it was Tuesday morning or Saturday night. The old data center ran the same chips whether it was training a model or serving a million concurrent queries. What is emerging, visible in a single day's evidence from a Minnesota campus and a Google keynote, is infrastructure that differentiates by function at every layer - chips shaped to their computational task, storage shaped to its energy source and thermal output. The word "efficiency" is migrating. It used to mean doing the same thing with less waste. Increasingly it means doing the right thing with the right substrate - matching the physics of the tool to the physics of the problem. That migration, once it completes, makes the current trajectory of energy consumption per unit of useful work look less like an exponential curve and more like the early slope before a plateau.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: an autonomous table tennis system beats elite human players under official rules, a thermal battery turns curtailed wind into 1,000-degree heat for campus buildings, Google's latest TPUs split intelligence infrastructure into distinct chips for training and inference, and a Nature study shows that hallucinations are being rewarded by the benchmarks meant to measure reliability. There's also friction, and it's intense - North Korean operators used commercial AI tools to build a malware campaign end to end and steal millions, CISA remains outside the Mythos rollout while the NSA and Commerce Department gain access, the Pentagon is seeking $54 billion for autonomous drone warfare, and Anthropic's fight over whether embedded model values count as protected speech or national security risk is now headed into appellate argument. But friction generates contour, and contour is how hidden shape becomes legible under pressure. Step back for a moment and you can see it: physical AI crossing into adversarial real-world performance, wasted renewable generation being captured as usable industrial heat, compute architecture specializing around the actual phases of machine intelligence, and AI reliability shifting from mysterious flaw to incentive design problem. Every transformation has a breaking point. Spin can throw a system off balance... or give it the control needed to place the next shot.

AI Releases & Advancements

New today

- OpenAI: Released OpenAI Privacy Filter, an open-weight model for detecting and redacting personally identifiable information in text. (OpenAI)

- OpenAI: Launched workspace agents in ChatGPT, Codex-powered cloud agents for automating workflows across tools in shared workspaces. (OpenAI)

- Qwen: Released Qwen3.6-27B, a 27B dense open-weight model available on Hugging Face. (Hugging Face)

- Zed: Released Parallel Agents in Zed, enabling multiple AI agents to work simultaneously on coding tasks. (Zed)

- Microsoft: Released support in the Teams SDK for bringing AI agents directly into Teams workspaces. (Microsoft Teams SDK)

- IFTTT: Launched IFTTT MCP, an MCP integration that connects Claude to more than 1,000 apps. (IFTTT)

- DeepSeek: Preparing to release DeepEP V2 and TileKernels, for expert parallelism and optimized kernels, on GitHub; merge issues have delayed the release until April 26. (GitHub)

Other recent releases

- Brex: Released CrabTrap, an open-source HTTP proxy that uses LLM-as-a-judge to secure AI agent traffic in production. (Brex)

- Moonshot AI: Released Kimi Vendor Verifier, a tool for checking the accuracy and consistency of inference providers serving Kimi models. (Kimi Blog)

- Open WebUI: Released Open WebUI Desktop, a desktop app that bundles llama.cpp for local inference and can also connect to remote servers. (GitHub)

- RightNow AI: Released OpenFang, an open-source agent operating system for running tool code in WebAssembly with fuel metering. (GitHub)

- Siemens: Launched the Eigen Engineering Agent, a generally available AI engineering agent for PLC coding, HMI visualization, and device configuration workflows. (Siemens)

Sources

Artificial Intelligence & Technology's Reconstitution

- Guardian: AI-Powered Robot Beats Elite Table Tennis Players

- Nature: Outplaying Elite Table Tennis Players with an Autonomous Robot

- Wired: AI Tools Are Helping Mediocre North Korean Hackers Steal Millions

- Wired: 5 AI Models Tried to Scam Me

- Ars Technica: Google Unveils Two New TPUs Designed for the Agentic Era

- Bloomberg: Google Cloud Releases New TPU Chip Lineup

- Nature: Evaluating Large Language Models for Accuracy Incentivizes Hallucinations

- Bloomberg Law: OpenAI Releases Privacy Filter Model

- The Verge: OpenAI Workspace Agents in ChatGPT

- Forbes: Hy3 Preview - Tencent's Base-Model Play

Institutions & Power Realignment

- Law360: Anthropic Slams Hegseth's Security Risk Label at D.C. Circuit

- The Verge: Anthropic's Mythos Rollout Has Missed America's Cybersecurity Agency

- CBS News: Anthropic Investigating Possible Breach of Mythos

- Guardian: Pentagon Asks for $54B in Pivot Towards AI-Powered War

- Ars Technica: Pentagon Wants $54B for Drones

- Guardian: What Is Mythos AI and Why Could It Be a Threat

- Singapore IMDA: Model AI Governance Framework for Agentic AI

- Infosecurity Magazine: Researchers Uncover 10 In-the-Wild Indirect Prompt Injection Attacks

Scientific & Medical Acceleration

- Nature: Multicentre Gene Therapy for OTOF-Related Deafness Followed Up to 2.5 Years

- Nature: Astrocytes Connect Specific Brain Regions Through Plastic Networks

- Nature: Dynamics of Genetic and Somatic Trade-offs in Ageing and Mortality

- Nature: Netrin1 Blockade Alleviates Resistance to Chemotherapy in Pancreatic Cancer

- Nature: Ubiquitination of Glycogen and Metabolites in Cells and Tissues

Economics & Labor Transformation

- Business Insider: Elon Musk Explores Team-Up with Mistral and Cursor

- TechCrunch: OpenAI Teams Up with Infosys

- The Verge: AI Failure Could Trigger Next Financial Crisis, Warns Warren

Infrastructure & Engineering Transitions

- Canary Media: Thermal Battery Minnesota Campus

- Canary Media: US Judge Halts Trump Admin's Blockade on Wind and Solar

- Utility Dive: Court Curtails Trump Administration Moves to Stifle Wind, Solar

- Utility Dive: Base Power Partnership for South Texas Co-op

- Utility Dive: Trump's Wartime Powers and Transformer Shortages

- Canary Media: Smartphones Could Unlock Cheaper Rooftop Solar

- Electrek: Tesla Discloses $2 Billion AI Hardware Acquisition

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.