Two Labs Lock Down Cyber AI - TCR 05/12/26

The 20-Second Scan

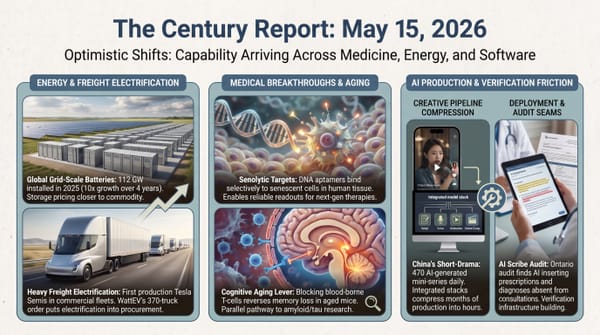

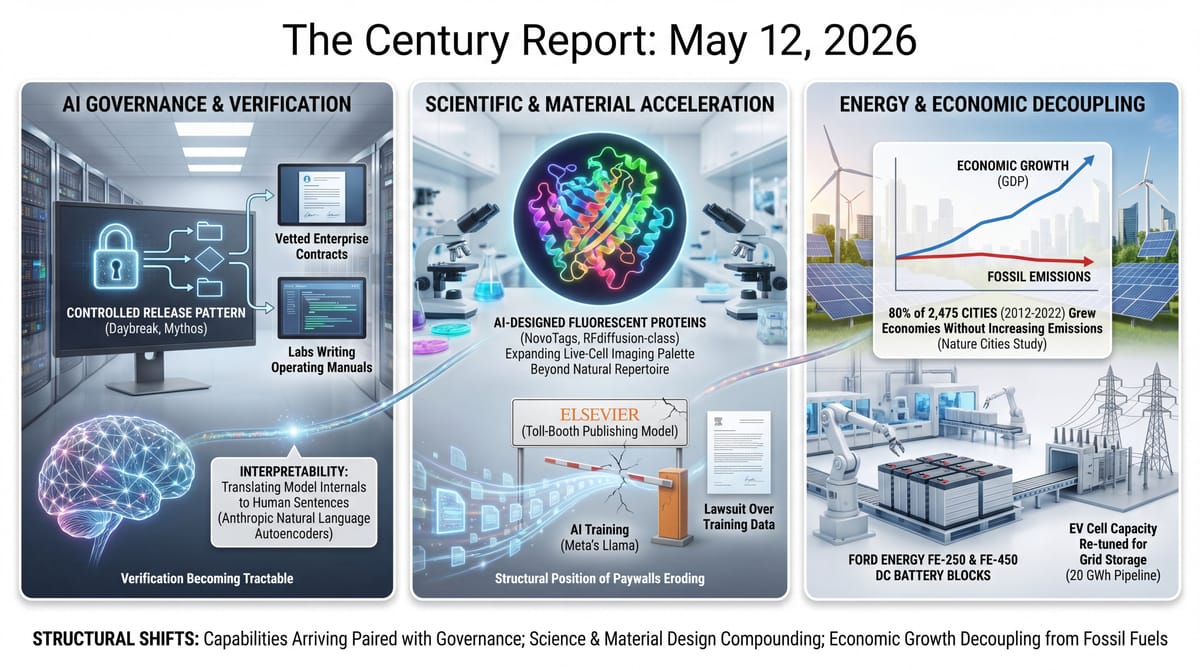

- OpenAI launched Daybreak, an AI agent that finds and patches security vulnerabilities in code, available only to a small group of vetted enterprise customers under a controlled release.

- Anthropic published a paper describing natural language autoencoders, a method for translating model internals into human-readable sentences.

- HHMI's Janelia Research Campus published NovoTags, a library of AI-designed fluorescent proteins generated by an RFdiffusion-class model, expanding the multi-color palette available for live-cell biological imaging beyond the natural protein repertoire.

- A Nature Cities study mapped 2,475 cities and found that roughly 80% grew their economies between 2012 and 2022 while their fossil emissions stayed flat or fell.

- Ford spun out Ford Energy, a grid-storage subsidiary launching with the FE-250 and FE-450 DC battery blocks and a 20 GWh annual manufacturing pipeline drawn from the automaker's EV cell capacity.

- Elsevier sued Meta for allegedly training Llama on copyrighted scientific papers, becoming the first major science publisher to join the wave of AI training lawsuits.

The 2-Minute Read

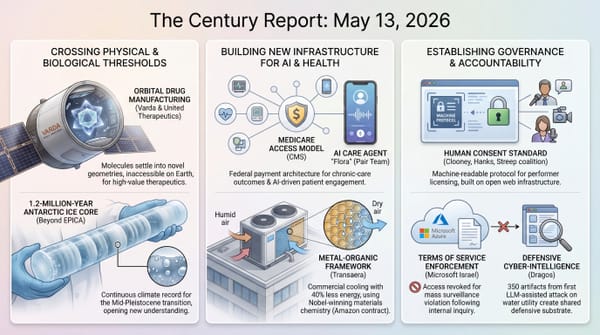

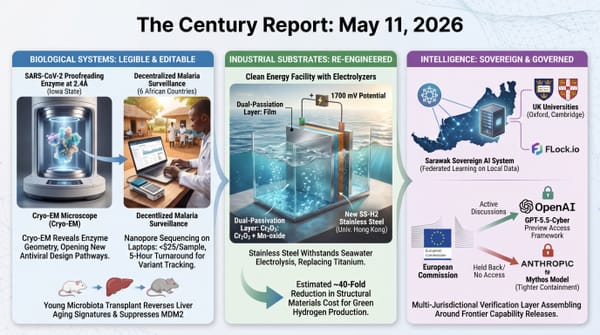

The signal across yesterday's developments is capability arriving paired with the governance form built to handle it. OpenAI and Anthropic have now independently chosen the same release pattern for cyber-capable systems: Daybreak and Mythos both ship through vetted enterprise contracts under controlled access, with the labs themselves writing the operating manual for capabilities that previously had no precedent. Anthropic also published a method for translating a model's internal state into sentences a human operator can read, which makes the verification problem the deployment era has been struggling with look tractable.

The instrument layer of science is compounding at the same speed. A collaboration between Janelia and the Baker lab released a library of fluorescent proteins designed from scratch by a generative model, sidestepping the slow mutagenesis cycles that have constrained live-cell imaging for three decades. A Nature Cities mapping of 2,475 cities found that roughly 80% grew their economies between 2012 and 2022 while their fossil emissions stayed flat or fell. The assumption that economic growth is mechanically linked to burning more is now broken across most of the world's large cities.

Ford spun out a grid-storage business built on the EV cell capacity it already had, demonstrating that the same factories powering vehicle electrification also power the storage layer the grid is racing to build. The toll-booth model of scientific publishing met its first major lawsuit when Elsevier sued Meta; the suit is a legitimate copyright claim and also a record of a structural position that has lost most of the conditions that made it viable.

The 20-Minute Deep Dive

Daybreak Arrives, and the Two-Tier Release Pattern Hardens

OpenAI yesterday introduced Daybreak, an autonomous security agent that scans codebases for vulnerabilities, reasons about exploit paths, and proposes patches. The system is being released only to a vetted set of enterprise customers under what OpenAI describes as a controlled deployment, with no public API and no consumer access. Anthropic's Claude Mythos, which the April 22 edition of The Century Report covered after it found 271 vulnerabilities in unreleased Firefox 150 code, took the same shape: a powerful offensive-defensive capability gated to organizations the lab can audit and pull access from.

What appeared three weeks ago as one company's choice now reads as the emerging governance form at the frontier of AI-enabled cybersecurity. Two of the three leading labs have independently arrived at the same conclusion: capabilities that can find zero-days at scale cannot ship as products in the conventional sense. They have to be released through a contract layer that names who holds the capability, what they may do with it, and under what conditions access ends. The labs themselves are writing the operating manual for capabilities whose downside cases have no precedent, well ahead of any external regulator.

The defensive case is the wonder here, and it is real. A small biotech security team can now run a Daybreak audit on its entire codebase in a weekend. A regional hospital network can find the SQL-injection path before the ransomware crew does. The capability that worried the labs enough to gate it is the same capability that, deployed defensively at scale, narrows the attack surface that has defined the last decade of breach disclosures. Google's threat intelligence group also confirmed yesterday the first documented case of criminal hackers using an AI agent to discover a zero-day in the wild, which means the offensive curve is already moving. The two-tier release pattern is the labs racing to put defensive capability into trusted hands faster than the offensive capability proliferates.

What this points at is the shape of governance under continuous capability growth: a living contract negotiated continuously between labs, customers, and the specific risks each new capability surfaces. Daybreak is the second confirmed instance of that shape. A third will likely settle it as the default.

Three developments point the same way: Daybreak's release yesterday, the UK AISI benchmark publication from May 2 showing GPT-5.5 matching Mythos on advanced cyber tasks, and the Mozilla Mythos audit harness open-sourced on May 8. Together they show the gating window itself shrinking. Each instance of controlled release publishes more of the technique - the audit shape, the prompt patterns, the benchmark scores, the postmortem narrative - than the controlled release was designed to withhold. The two-tier pattern is hardening as governance form and dissolving as captured advantage at the same time, and the defenders outside any vetted list are converging on the same workflows at a pace the gating timeline did not anticipate.

Anthropic's Interpretability Work Gets a Translation Layer

Anthropic published a paper yesterday introducing natural language autoencoders, a technique for compressing what is happening inside a language model into sentences a human can read. The method trains a small network to take the model's internal activations and output a description in English, then trains a second network to reconstruct those activations from that description. If the reconstructed activations behave like the originals when fed back through the model, the description is treated as faithful. The team reported that the resulting sentences track concepts the model is genuinely using, rather than post-hoc rationalizations generated after the fact.
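The round trip described above can be sketched in a few lines. This is a toy illustration only, not Anthropic's method: the concept names, the four-dimensional "activation", and the `READOUT` weights standing in for the rest of the model are all hypothetical, and the learned encoder and decoder networks are replaced here by simple hand-written functions.

```python
# Toy round-trip check in the spirit of the description above.
# Every name and number here is hypothetical and chosen for illustration.

# Stand-in "concept directions" in a 4-dimensional activation space.
CONCEPTS = {
    "negation": [1.0, 0.0, 0.0, 0.0],
    "past tense": [0.0, 1.0, 0.0, 0.0],
    "uncertainty": [0.0, 0.0, 1.0, 0.0],
    "code syntax": [0.0, 0.0, 0.0, 1.0],
}

# Stand-in for "the rest of the model": a fixed linear readout.
READOUT = [0.5, -0.2, 0.3, 0.1]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def describe(activation, top_k=2):
    """'Encoder': turn an activation into a short text description."""
    scores = {name: dot(activation, d) for name, d in CONCEPTS.items()}
    strongest = sorted(scores.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_k]
    return "; ".join(f"{name}={score:.2f}" for name, score in strongest)


def reconstruct(description):
    """'Decoder': rebuild an activation from the text description alone."""
    rebuilt = [0.0] * len(READOUT)
    for part in description.split("; "):
        name, value = part.split("=")
        for i, component in enumerate(CONCEPTS[name]):
            rebuilt[i] += float(value) * component
    return rebuilt


def behavior_gap(activation, top_k=2):
    """Compare downstream output on the original vs. the reconstruction."""
    description = describe(activation, top_k)
    rebuilt = reconstruct(description)
    gap = abs(dot(READOUT, activation) - dot(READOUT, rebuilt))
    return description, gap


activation = [0.9, 0.1, -0.7, 0.05]
print(behavior_gap(activation, top_k=2))  # richer description
print(behavior_gap(activation, top_k=1))  # vaguer description
```

In this toy, the vaguer one-concept description leaves a larger gap in downstream behavior than the two-concept one. That mirrors the logic of treating a description as faithful only when the reconstruction behaves like the original.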

The work sits inside a broader push by Anthropic to make interpretability scale. As the May 10 edition of The Century Report covered, the company's blackmail-scenario postmortem rested on circuit-level inspection of why Claude refused to fabricate evidence against its operator. That kind of inspection took human researchers weeks per scenario. NLAs are an attempt to automate the translation step, so that the same kind of forensic clarity could in principle run continuously across a deployed model.

The capability is significant because the gap between what frontier models can do and what their operators can verify they are doing has been the central anxiety of the deployment era. Audit logs catch surface behavior. Red teams catch what they think to test for. Interpretability of the kind NLAs reach for is a different category: it reads the internal state directly and reports it in language the operator already speaks. The technique is early. The descriptions are sometimes vague, sometimes wrong, and the validation step is itself imperfect. Anthropic was explicit about these limits.

What the paper does demonstrate is that the translation problem is tractable. Five years ago, the dominant view inside the field was that the internals of a large model were too high-dimensional and too entangled for any compact natural-language description to be faithful. Activations could be probed for specific concepts, but translating an entire layer's state into a paragraph was considered out of reach. NLAs report that the reach is shorter than assumed. The implication for the rest of the year is concrete: every other frontier lab now has a public template for building the same capability, and the same technique can be turned on the open-weight models that already exist outside any single lab's control. The capacity to look inside a model and have it explain itself is becoming portable.

That portability is what makes this a field-wide development rather than an Anthropic-only achievement. Interpretability is increasingly the substrate that honest deployment of these systems will eventually depend on, and the substrate is becoming something the whole field can use.

AI-Designed Proteins Open New Colors in the Living Cell

A team at the Howard Hughes Medical Institute's Janelia Research Campus, working with David Baker's lab at the University of Washington, published NovoTags yesterday: a library of fluorescent protein tags designed from scratch by an RFdiffusion-class generative model rather than discovered by mutagenesis of naturally occurring proteins from jellyfish and corals. The natural palette that has driven cellular imaging for three decades is narrow. Green fluorescent protein and its relatives crowd a small region of the visible spectrum, and pushing into other colors has historically meant slow, lucky mutations of what evolution happened to produce. NovoTags inverts that constraint. The model proposes structures that bind chosen fluorophores cleanly, and the team validated them in living cells across colors that were previously hard to access without bleedthrough or photobleaching.

The wider significance is what this does to the resolution of biology itself. Multi-color live imaging is how cell biologists watch what proteins are doing at the same time in the same cell, and the number of channels you can run cleanly sets a hard ceiling on how many processes you can observe simultaneously. Adding well-behaved tags in underused regions of the spectrum lifts that ceiling. Combined with the broader trajectory of AI-designed binders, enzymes, and now imaging reagents, it points at a working laboratory in which the off-the-shelf molecule has been replaced by one designed for the specific question being asked.

The Janelia team released the designs and the protocols. What used to be a slow accumulation of fortunate discoveries is becoming something a lab can request to specification.

2,475 Cities, One Curve Bending

A Nature Cities paper published yesterday mapped fossil-fuel emissions and economic output across 2,475 cities worldwide between 2012 and 2022, and reported that roughly four out of five of them grew their economies while their emissions stayed flat or fell. The decoupling held across income levels, geographies, and city sizes. Cities in Europe, North America, East Asia, and Latin America all showed the same pattern: GDP up, fossil emissions not.
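For readers who want the classification spelled out, here is a minimal sketch of the test implied by "GDP up, fossil emissions not". The city names and figures are made-up toy values, not data from the paper, and the rule itself (any GDP increase with emissions at or below the 2012 level) is an assumption. The study's actual thresholds and accounting may differ.

```python
from dataclasses import dataclass


@dataclass
class CityRecord:
    # Hypothetical record layout; units are arbitrary for this sketch.
    name: str
    gdp_2012: float
    gdp_2022: float
    emissions_2012: float
    emissions_2022: float


def is_decoupled(city: CityRecord) -> bool:
    """Assumed rule: economy grew while fossil emissions stayed flat or fell."""
    grew = city.gdp_2022 > city.gdp_2012
    flat_or_down = city.emissions_2022 <= city.emissions_2012
    return grew and flat_or_down


def decoupled_share(cities: list[CityRecord]) -> float:
    """Fraction of cities in the sample that meet the assumed rule."""
    return sum(is_decoupled(c) for c in cities) / len(cities)


# Toy sample, invented for illustration only.
cities = [
    CityRecord("City A", 100, 130, 50, 45),
    CityRecord("City B", 80, 95, 30, 30),    # emissions flat
    CityRecord("City C", 200, 260, 90, 70),
    CityRecord("City D", 60, 75, 20, 26),    # emissions rose
    CityRecord("City E", 150, 180, 70, 68),
]

print(decoupled_share(cities))
```

This sketch uses territorial emissions only. Swapping in consumption-based figures for the emissions fields could change which cities pass.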

The study did not claim the decoupling is sufficient. The authors were explicit that the absolute emission cuts needed to hold warming under 2 degrees Celsius still require steeper declines, and that consumption-based accounting (which assigns emissions to the city where goods are used, not where they are produced) tells a more complicated story for wealthy cities importing manufactured goods. What the finding does undercut is a central assumption that has structured the climate debate for most of the past four decades: that economic growth and fossil emissions are mechanically linked, and that any city wanting to keep growing has to keep burning more.

The link was always empirical. It described the energy system of the twentieth century. The new map shows that the twenty-first century's energy system is different enough that the link has been broken in a majority of the cities studied. Electrification of heating, transport, and increasingly industry, paired with the rapid fall in the cost of renewable generation, has made the link breakable at scale. The 2,475-city sample is not a projection. It is a description of what already happened between 2012 and 2022.

What the paper points at, beyond its specific numbers, is that the cost curve of clean energy has been doing political work that no climate negotiation has been able to do. A mayor weighing whether to permit a new gas plant in 2025 faces a fundamentally different cost calculation than the same mayor in 2005, and the math has stopped favoring the gas plant in most jurisdictions. The cities in the dataset are not all heroes of climate policy. Many of them decoupled while pursuing growth as their first priority. The decoupling happened because the cheaper energy option became the cleaner one, and ordinary procurement decisions began producing the outcome that decades of advocacy had struggled to produce on their own.

This is the energy-economics flip showing up in the historical record. The cities in the study are the leading edge of a transition that is no longer hypothetical, and the same dynamic that produced their decoupling is continuing to compound. Solar, storage, and electrified end-use keep getting cheaper. The fossil baseline keeps getting more expensive relative to the alternatives. The next decade of city-level data is likely to show the decoupling deepening and the holdouts shrinking, because the conditions that produced the first wave have not reversed.

Ford's EV Cell Lines Become a Grid-Storage Business

Ford launched Ford Energy yesterday, a grid-storage subsidiary built on the lithium iron phosphate cell capacity the automaker spent the last several years standing up for electric vehicles. The first products are two DC battery blocks, the FE-250 and FE-450, sized for utility and industrial deployment, and the company disclosed a 20 GWh annual manufacturing pipeline drawn from the same cell lines that feed its EV programs. The cells are the same; the integration, the thermal management, and the inverter pairing are tuned for stationary applications instead of vehicles.

What the announcement reveals is the underlying economics of the EV buildout. The cell capacity required for vehicle electrification is large enough that even partial redirection toward stationary storage produces a serious grid-storage business. The same factories that made an electric pickup possible now also make a multi-hour utility battery possible, and the marginal cost of adding the second product to the first is small relative to the capital already spent. Ford is not the first automaker to notice this. The pattern of vehicle cell capacity bleeding into stationary storage is now visible across the industry, extending the trajectory the March 19 edition of The Century Report documented when GM and LG retooled their Spring Hill, Tennessee plant for grid-scale storage and data center power. It compounds with the cost trajectory of lithium iron phosphate cells, which have fallen far enough this decade that the storage-vs-peaker calculation has flipped in most US markets.

The structural assumption being eroded is that the grid's storage capacity has to come from purpose-built suppliers competing on storage alone. When automakers can pour their excess cell capacity into batteries that sit next to substations, the cost curve for storage starts tracking the cost curve for transportation, and both curves point down.

The First Science Publisher Joins the Training-Data Suits

Elsevier filed suit against Meta yesterday, alleging that Llama was trained on millions of paywalled scientific papers from its catalog without license or compensation. The filing is the first by a science publisher in the wave of AI training lawsuits that has already drawn in trade publishers, news organizations, and music labels. It extends the litigation that the May 6 edition of The Century Report tracked, when Macmillan, McGraw Hill, Hachette, Cengage, Scott Turow, and others filed their class action against Meta over alleged training on works pirated from LibGen and Sci-Hub. Elsevier's complaint includes specific extraction tests in which Llama reproduced paragraphs from articles behind its paywall verbatim, which the company offers as evidence the training corpus included its protected catalog.

The structural friction here is sharper than in the trade-publisher cases. Elsevier's business model rests on charging universities, libraries, and individual researchers for access to scientific knowledge that was, in most cases, produced with public funding and reviewed by volunteer scientists. AI systems trained on that catalog and made available at low cost or no cost route around the toll booth. The lawsuit is a legitimate copyright claim. It is also the toll-booth operator discovering that the road can now be flown over.

The deeper movement underneath this filing is the one Elsevier's complaint does not name. Scientific publishing has been the canonical example of a system where the cost of extraction was externalized: research funded by taxpayers, labor donated by reviewers, output captured behind paywalls, profit retained by the publisher. The system held because there was no alternative path between the researcher and the reader. AI training, open-access mandates, preprint servers, and the broader compression of knowledge access are collapsing the toll booth's structural position from several directions at once. The lawsuit slows one path. It does not restore the conditions that made the toll booth viable in the first place.

The wonder reading, available even inside the friction: a scientific researcher in Lagos or Lima or Jakarta now has working access to the literature that the paywall was built to deny them. The cost curve of access to scientific knowledge has bent toward zero on the user side, and the question of how authors are compensated for that access is one the next decade will answer through mechanisms other than the toll booth.

The Other Side

Scientific publishing ran for forty years on an arrangement whose architecture rested on a single condition: the only path between researcher and reader had to pass through the subscription wall. Research funded by taxpayers, labor donated by volunteer reviewers, outputs captured behind paywalls, profit retained by intermediaries whose contribution was gatekeeping. The arrangement held because the alternative routes did not exist.

Elsevier's complaint against Meta is, by its own evidence, the record of those alternative routes arriving. The extraction tests in the filing, where Llama reproduced paragraphs from paywalled articles verbatim, are submitted as proof of infringement and are at the same time proof that the catalog now sits outside the wall in every operational sense. The wave the May 6 edition tracked, when Macmillan, McGraw Hill, Hachette, Cengage, and others filed over LibGen and Sci-Hub training data, and yesterday's filing are doing the same work: negotiating what the next compensation architecture for authors looks like once the wall is no longer the answer.

The structural conditions that made the toll booth viable have already been removed. Preprint servers cover most of the literature within weeks. Open access mandates push new work outside subscription walls at publication. AI systems trained on the corpus answer questions at near-zero cost in any language. The compensation question for authors and reviewers is real and unresolved, and the legal negotiation ahead will work it out. The access question, which was the actual mechanism through which the extraction architecture operated, is closing. The next generation of researchers will assume access to the field's collected work as their starting condition, and the question of who gets to be a scientist will stop being answered by which institution holds the subscription.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: two leading AI labs independently choosing the same staged-release pattern for cyber-capable systems and writing the operating manual as they go, a published method for translating a model's internal state into sentences its operators can actually read, a library of fluorescent proteins designed from scratch giving biologists colors evolution never produced, 2,475 cities mapped with roughly eighty percent of them having grown their economies while their fossil emissions stayed flat or fell, an automaker spinning its EV cell capacity into a twenty-gigawatt-hour grid-storage business by retuning what it already makes. There's also friction, and it's intense - the first major science publisher suing Meta over scraped research papers and exposing how thoroughly the toll-booth model has already been routed around, Google confirming the first documented case of criminal hackers using an AI agent to find a zero-day in the wild, Colorado lawmakers rewriting state AI accountability rules while the interpretability tooling those rules assume is still being built, and the contradiction of placing dangerous capability in private hands and asking those hands to govern themselves until the rest of the architecture catches up. But friction generates contrast, and contrast is what makes the shape of the new thing visible against the old. Step back for a moment and you can see it: the substrate of medicine and energy and intelligence each becoming legible and tunable at resolutions the prior decade could not reach, the cost curves of clean generation and storage compounding inside ordinary procurement decisions across thousands of cities, the governance form for capabilities without precedent being negotiated continuously between labs and the customers they choose to extend access to, and the toll booths of an extractive era losing the structural conditions that made them viable in the first place.

Every transformation has a breaking point. A palette can limit what is allowed to be rendered... or expand until colors evolution never offered finally become available to paint what comes next.

AI Releases & Advancements

New today

- OpenAI: Launched Daybreak, an AI initiative for detecting and patching software vulnerabilities that combines GPT-5.5, GPT-5.5-Cyber, and the Codex Security agent to build threat models, validate vulnerabilities, and automate detection across organizational codebases. (OpenAI)

- PowerColor: Released the Radeon AI PRO R9600D, a single-slot passive-cooled GPU with 32GB GDDR6 memory and a 12V-2x6 connector designed for AI inference workloads. (VideoCardz)

- OpenBMB/ModelBest: Released MiniCPM 4.6, the latest version of the MiniCPM small language model series. (Hugging Face)

Other recent releases

- Google: Launched the new AI-powered Google Finance across Europe with full local language support, including AI-powered research, advanced charting/technical indicators, expanded commodities and crypto data, and live earnings call audio with synchronized transcripts and AI-generated annotated highlights; Deep Search in Google Finance is now globally available. (Google Blog)

- NVIDIA: Released Star Elastic, a single Nemotron Nano v3 checkpoint that contains 30B, 23B, and 12B reasoning model variants extractable via zero-shot slicing without fine-tuning; available on Hugging Face under nvidia/NVIDIA-Nemotron-Labs-3-Elastic-30B-A3B in BF16, FP8, and NVFP4 precisions. (MarkTechPost)

- Zyphra: Released ZAYA1-74B-Preview, a 74B-total / 4B-active MoE reasoning-base checkpoint trained on AMD MI300X GPUs with ~15T tokens of pretraining and 256K context extension, available under Apache 2.0 on Hugging Face. (Zyphra)

- Zyphra: Released ZAYA1-VL-8B, a vision-language MoE model with 700M active / 8B total parameters built on ZAYA1-8B-base for visual understanding, grounding, and OCR tasks, available under Apache 2.0. (Hugging Face)

- OpenAI: Launched the Codex Chrome extension, enabling Codex to operate signed-in browser sessions across parallel tabs for tasks on sites like LinkedIn, Salesforce, and Gmail, with per-domain allowlist/blocklist controls. (OpenAI Developers)

Sources

Artificial Intelligence & Technology's Reconstitution

- The Verge: OpenAI just released its answer to Claude Mythos

- Forbes: Making Sense Of What's Really Going On Inside AI By Using Newly Devised Natural Language Autoencoders

- HHMI: AI@HHMI: Lighting Up Life Inside Cells With AI-Designed Proteins

- Fortune: Google issues dire warning after catching hackers using AI to break into computers

- Forbes: 7 Hidden Gemini Live AI Models Revealed Ahead Of Google I/O 2026

- Washington Post: AI & Tech Brief - The industrial data problem

- Hugging Face: Building Blocks for Foundation Model Training and Inference on AWS

- Wired: CUDA Proves Nvidia Is a Software Company

- Ars Technica: Data center guzzled 30 million gallons of water and nobody noticed for months

- TechCrunch: Digg tries again, this time as an AI news aggregator

- CyberScoop: Family of FSU shooting victim sues OpenAI Foundation for negligence, lack of safety guardrails

- Reuters: Former OpenAI executive Sutskever discloses nearly $7 billion stake in AI firm

- The Verge: Here's what Mira Murati's AI company is up to

- Wired: Ilya Sutskever Stands by His Role in Sam Altman's OpenAI Ouster

- Import AI 456: RSI and economic growth; radical optionality for AI regulation; and a neural computer

- AP News: In a trial pitting him against Elon Musk, nobody has more to lose than OpenAI CEO Sam Altman

- The Verge: Live updates from the court battle over the future of OpenAI

- Road to VR: Meta's New AI-Powered VR Toolkit Lets Anyone Build WebXR Experiences Without Coding

- TechCrunch: Riding an AI rally, Robinhood preps second retail venture IPO

- Gizmodo: The Government's Page About Its AI Vetting Deals with Google, xAI, and Microsoft Is Missing from Its Website

- Google Blog: The new AI-powered Google Finance is expanding to Europe

- TechCrunch: There aren't enough rockets for space data centers - Cowboy Space raised $275M to build them

- MIT Technology Review: Three things in AI to watch, according to a Nobel-winning economist

- Diginomica: How LLM vendors might have their day in court in better ways than we're used to

Institutions & Power Realignment

- GovTech: Lawmakers Poised to Rewrite Colorado AI Regulations

- EFF: Canada's Bill C-22 Is a Repackaged Version of Last Year's Surveillance Nightmare

- EFF to Fourth Circuit: Electronic Device Searches at the Border Require a Warrant

- The Guardian: Forget the AI job apocalypse. AI's real threat is worker control and surveillance

- Politico: Netflix sued by Texas AG for alleged surveillance, addictive features

- The Guardian: Palantir's access to identifiable NHS England patient data is 'dangerous', MPs say

Scientific & Medical Acceleration

- Nature: Elsevier vs Meta - first science publisher sues over scraped research papers

- Nature: Giant map reveals thousands of cities worldwide with successful green policies

- Nature: How to vibe code in science - early adopters share their tips

- Nature: Bacterial-viral conflicts shape cholera evolution

- Nature: Hantavirus outbreak exposes uncertainty about how disease spreads

- Nature: UK Biobank breach prompts the field of genomics to rethink open science

- Science Daily: NASA's Hubble reveals a giant chaotic planet nursery unlike anything seen before

- Science Daily: Scientists discover hidden chemical signature that could reveal alien life

Economics & Labor Transformation

- CNBC: At TV upfronts, AI is in and corporate shuffles are reshaping the lineup

- CNBC: GM cutting hundreds of salaried IT workers as it trims costs, evaluates needs

- Wired: I Work in Hollywood. Everyone Who Used to Make TV Is Now Secretly Training AI

- CNBC: JPMorgan Chase-led bank group reins in credit line to troubled KKR private credit fund

Infrastructure & Engineering Transitions

- Electrek: Ford launches Ford Energy subsidiary to build 20 GWh of battery storage annually

- Canary Media: Can the US harness old oil and gas wells to produce geothermal energy?

- Utility Dive: Competitive power markets have delivered. Abandoning them would be a mistake.

- Canary Media: Confusing ballot wording may have tipped Ohio vote on renewables ban

- Electrek: Duracell taps Driivz to power its new EV fast charger network

- Utility Dive: PPL 'advanced' data center pipeline grows to 28.3 GW in Pennsylvania

- Canary Media: Software is helping this real estate giant burn less gas in NYC

- Electrek: The UK delivers Europe's largest vanadium flow battery system

- Utility Dive: Vistra adds 4.5 GW of capacity in line with 'reasonable' forecasts for PJM, ERCOT

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.