Robots Learn to Improvise - TCR 04/07/26

The 20-Second Scan

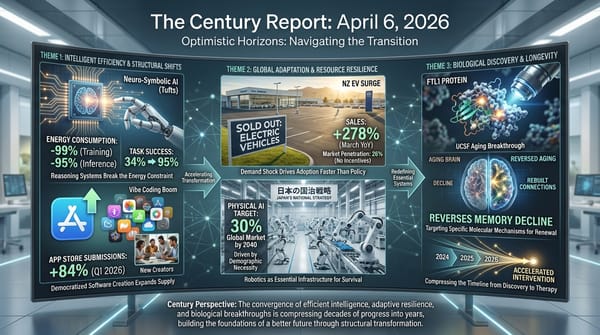

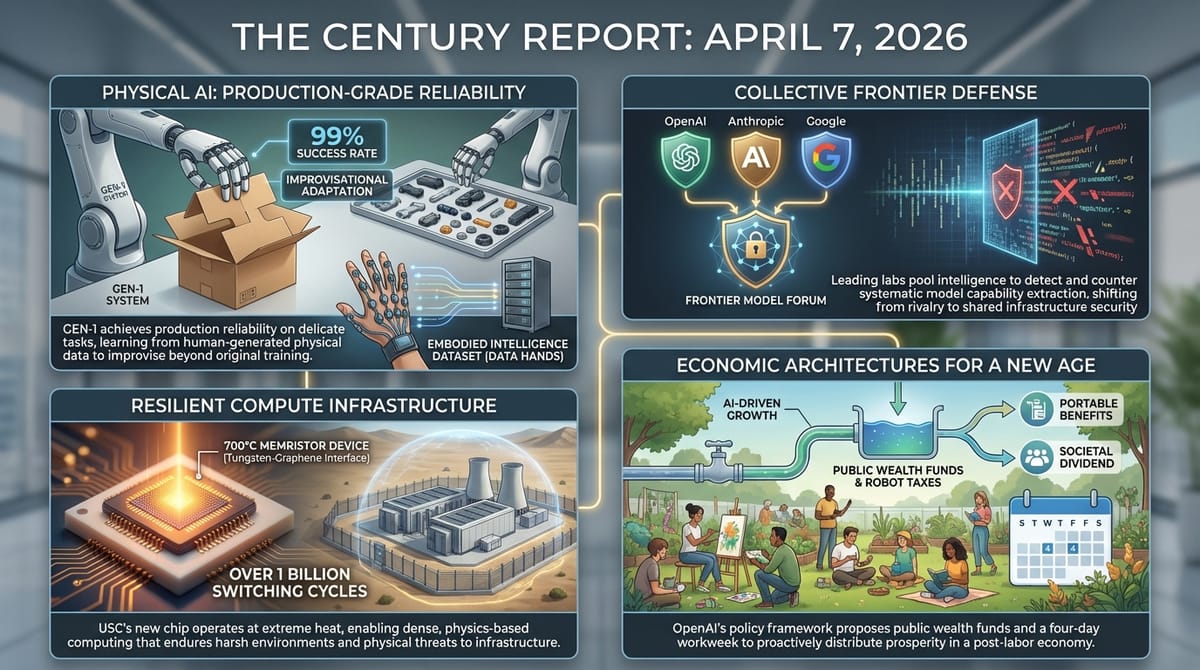

- Generalist announced GEN-1, a physical AI system reaching 99% success rates on delicate manufacturing tasks and demonstrating the ability to improvise solutions outside its training distribution.

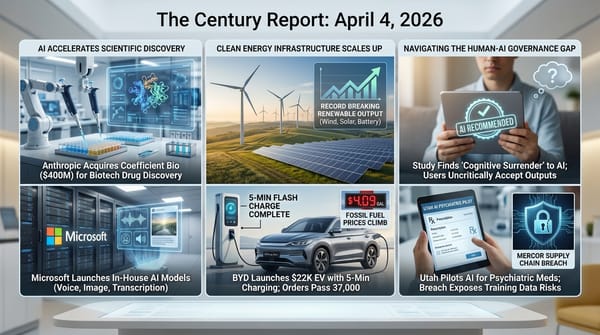

- OpenAI, Anthropic, and Google began sharing information through the Frontier Model Forum to detect and counter Chinese labs systematically extracting capabilities from their AI systems.

- Iran's Islamic Revolutionary Guard Corps published a video threatening the destruction of OpenAI's $30 billion Stargate data center in Abu Dhabi, following prior missile strikes on AWS and Oracle facilities in the region.

- A USC engineering team reported a memristor in Science that operates at 700°C and endures over one billion switching cycles, using a tungsten-graphene interface that prevents atomic migration failure.

- Intel disclosed that its advanced chip packaging business is approaching multi-billion-dollar annual revenue, with ongoing deals with at least two major hyperscale customers for AI chip assembly.

- OpenAI released a 13-page policy framework proposing public wealth funds, robot taxes, portable worker benefits, and a four-day workweek to address AI-driven economic restructuring.

- Minnesota regulators approved Xcel Energy's 200 MW distributed battery program at a budget of $430 million, deploying 1-3 MW batteries across its distribution grid by 2028.

- A Port Washington, Wisconsin ballot measure became the first local vote on a data center project in 2026, with at least four similar community referendums scheduled this year.

The 2-Minute Read

Yesterday's convergence of physical AI capability and frontier AI governance friction showed how many dimensions of the transition are advancing at once. Generalist's GEN-1 system reaching production-grade reliability on delicate physical tasks - folding boxes, servicing robot vacuums, sorting auto parts at 99% success rates while improvising responses to disruptions it was never trained on - extends the robotics arc from Japan's national strategy into concrete deployment capability. The company collected over half a million hours of physical interaction data through wearable "data hands," building the kind of embodied intelligence dataset that the humanoid robot gig economy documented in recent editions has been assembling piecemeal. The gap between demonstration and deployment is compressing in physical AI just as it has in software.

The Bloomberg report that OpenAI, Anthropic, and Google are coordinating through the Frontier Model Forum to counter Chinese capability extraction represents a structural shift in how competing organizations relate to shared threats. These three companies have been rivals on every commercial dimension. The fact that they are now pooling detection intelligence about adversarial distillation - the systematic extraction of model capabilities through API access - signals that the competitive frame is giving way to something more like collective infrastructure defense. This extends the distillation governance gap The Century Report first documented in February when Anthropic accused Chinese labs of mining Claude's capabilities, and it intersects directly with the broader AI sovereignty competition tracked across dozens of editions.

Iran's explicit threat to OpenAI's Abu Dhabi Stargate facility, arriving alongside confirmed strikes on AWS and Oracle data centers in the region, introduces physical conflict as a variable in how AI infrastructure concentrates geographically. The intelligence era's physical substrate is being built in locations chosen for power availability and regulatory access, and those locations are now demonstrably within range of military escalation. OpenAI's policy paper proposing public wealth funds, robot taxes, and a four-day workweek arrived the same day, describing an economic architecture for a post-labor world while the physical infrastructure underwriting that world faces kinetic threats. The distance between the vision and the vulnerability has never been more visible.

The 20-Minute Deep Dive

Physical AI Crosses Into Production Reliability

Generalist's GEN-1 announcement represents a threshold that physical AI has been approaching for months but had not demonstrably crossed: a single model performing diverse physical manipulation tasks at reliability levels sufficient for industrial deployment. The system reaches 99% success rates on tasks like folding boxes, packing phones, and servicing robot vacuums, operating at roughly three times the speed of its predecessor. It adapts to specific robotic hardware after about an hour of calibration.

What distinguishes GEN-1 from previous robotic demonstrations is its documented ability to improvise when disrupted. Previous systems relied on carefully pre-programmed motions or were trained exclusively on single tasks with little variation. GEN-1, trained on over half a million hours of physical interaction data collected through wearable "data hands," can respond to disruptions outside its training distribution by connecting patterns from different contexts to solve new problems. A system that can put money into a wallet, fold laundry, and sort auto parts within a single model architecture is qualitatively different from one that excels at a single task.

This connects directly to Japan's national strategy, documented in the April 6 edition of The Century Report, to capture 30% of the global physical AI market by 2040 as its working-age population shrinks by 15 million. It also connects to the MIT Technology Review report from April 1 documenting gig workers in Nigeria and India recording household chores to generate training data for humanoid robots. The data pipeline and the deployment capability are now converging. Generalist's approach - collecting petabytes of micro-movement data through wearable devices worn by humans performing actual tasks - represents one of several competing strategies for bridging the gap between human dexterity and robotic execution. The speed at which that gap is closing is itself a measure of where this transition stands.

The broader implication extends beyond any single company. When physical manipulation tasks can be performed reliably by a general-purpose system rather than requiring task-specific engineering, the economics of automation shift. The question is no longer whether a robot can fold a box - it is whether a single AI system can learn to fold boxes, service vacuums, sort parts, and pack phones, and then learn the next task in an hour. GEN-1 suggests the answer is yes, and the consequence is that the deployment surface for physical AI expands from specialized manufacturing into warehousing, logistics, maintenance, and eventually domestic environments.

Frontier Labs Unite Against Capability Extraction

The Bloomberg report that OpenAI, Anthropic, and Google are sharing detection intelligence through the Frontier Model Forum to identify and counter adversarial distillation by Chinese labs marks a structural evolution in how the AI industry organizes around shared threats. Since Anthropic first publicly accused Chinese labs of systematically mining Claude's capabilities in February, the distillation problem has grown from a bilateral complaint into an industry-wide governance challenge.

Distillation - the process of extracting a frontier model's learned patterns by systematically querying it and using the outputs to train a cheaper model - sits in a gray zone between legitimate use and capability theft. Chinese labs have been documented accessing frontier models through API calls that violate terms of service but are difficult to detect at scale. The coordination announced yesterday suggests that the three largest U.S. frontier labs have concluded that individual detection is insufficient and that pooled intelligence is necessary to identify patterns that no single provider can see alone.
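The query-then-train loop described above can be sketched with a deliberately tiny toy. This is an illustration only, not any lab's actual method: `query_teacher` is a hypothetical stand-in for a model behind an API, and the "student" is a two-parameter line fitted by ordinary least squares rather than a neural network. The point is the shape of the process: the student never sees the teacher's internals, only its answers.

```python
import random


def query_teacher(x: float) -> float:
    """Hypothetical stand-in for a frontier model behind an API.

    The caller sees only the returned value, never the hidden
    parameters (3.0 and 2.0) that produce it.
    """
    return 3.0 * x + 2.0


def distill(num_queries: int, seed: int = 0) -> tuple[float, float]:
    """Fit a student model y = a*x + b to the teacher's outputs."""
    rng = random.Random(seed)
    # Step 1: query the teacher systematically and record its answers.
    xs = [rng.uniform(-10.0, 10.0) for _ in range(num_queries)]
    ys = [query_teacher(x) for x in xs]
    # Step 2: train the cheaper student on those answers
    # (closed-form least squares for a straight line).
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b


a, b = distill(num_queries=1000)
print(f"student recovered slope={a:.3f}, intercept={b:.3f}")
```

In this toy the student recovers the teacher's behavior almost exactly because the teacher is trivially simple. Real distillation targets far richer behavior and needs far more queries, which is part of why high-volume access patterns are what detection efforts look for.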

This development intersects with the broader AI sovereignty competition The Century Report has tracked since February. Chip export controls restrict hardware access. Distillation detection restricts capability access. Together they represent a two-front containment architecture - one physical, one informational - that the U.S. AI ecosystem is constructing around its frontier capabilities. As the February 24 edition of The Century Report documented when Anthropic first surfaced this problem publicly, cross-border capability extraction had emerged as a governance gap without any existing regulatory framework to address it. Yesterday's announcement represents the first coordinated industry response to that gap. Whether this architecture holds depends on whether detection can keep pace with the increasingly sophisticated methods being used to extract capabilities, and on whether the commercial incentives of the three participating companies remain aligned with collective defense when their individual competitive interests diverge.

The deeper significance is that the competitive frame among frontier labs is beginning to yield to a collective infrastructure frame on certain dimensions. These companies compete fiercely on products, pricing, and talent. But on the question of whether their capabilities should be systematically extracted by state-backed competitors operating outside the terms of service, they have found common ground. This pattern - competitors cooperating on shared infrastructure challenges while competing on everything else - is one of the defining dynamics of the transition from extractive to generative systems.

AI Infrastructure Enters the Theater of War

Iran's explicit threat to OpenAI's Abu Dhabi Stargate data center, published via an IRGC video showing satellite imagery of the facility, marks the first time a state military force has publicly identified a specific AI data center as a retaliatory target. This follows confirmed Iranian missile strikes on AWS data centers in Bahrain and an Oracle data center in Dubai in recent weeks.

The threat arrives as the Strait of Hormuz remains closed and as escalation between the U.S. and Iran intensifies daily. The IRGC video was released in direct response to threats against Iranian civilian infrastructure, framing AI data centers as equivalent targets in the conflict's logic of retaliation. The Stargate facility, a joint venture between OpenAI, SoftBank, and Oracle with a $30 billion price tag and 16 gigawatts of planned compute capacity, represents one of the largest single concentrations of AI infrastructure under development anywhere in the world. TechCrunch's reporting on the threat noted the direct connection to prior strikes on regional cloud infrastructure.

The geographic concentration of AI compute in the Middle East was driven by abundant solar power, favorable regulatory environments, and proximity to Asian markets. Those same factors now place this infrastructure within the conflict zone of an active war. The episode illustrates a tension that The Century Report has documented across the energy infrastructure arc: the physical substrate of the intelligence era is being built under assumptions about geopolitical stability that the current period is actively testing. Data centers need power, cooling, and physical security. When a nation-state publishes satellite coordinates of your facility alongside a promise of "complete and utter annihilation," the risk model for where to build AI infrastructure changes.

This also intersects with the local resistance to data centers documented across U.S. communities. The Port Washington, Wisconsin ballot measure heading to voters represents domestic opposition to data center construction based on noise, electricity costs, and environmental impact. The March 30 edition of The Century Report documented the same organizing pattern through the Michigan City contamination case, where community members forced a construction halt after soil samples revealed arsenic and TCE contamination at a suspected Google facility. Iran's threats represent a categorically different kind of opposition: military targeting of AI infrastructure as a strategic asset. Both dynamics constrain where and how quickly the physical substrate of the intelligence era can be built, and both demonstrate that the infrastructure itself has become a site of contestation rather than a neutral backdrop.

A 700°C Chip and the Boundaries of What Electronics Can Endure

The USC memristor described in Science operates at temperatures that exceed those of molten lava, and its significance extends well beyond extreme-environment applications. The device, built from tungsten electrodes and a graphene bottom layer with hafnium oxide ceramic between them, retained data for over 50 hours at 700°C, endured more than one billion switching cycles, and operated at 1.5 volts with nanosecond-speed switching.

The mechanism is elegant: tungsten atoms that migrate through the ceramic layer under extreme heat cannot bond to the graphene surface. The interaction between tungsten and graphene is, as the researchers described it, like oil and water - the atoms drift away instead of forming the conductive bridges that typically destroy electronic devices at high temperatures. This was discovered accidentally during an attempt to build a different graphene-based device, and it reveals a principle that could guide the design of an entire class of heat-resistant electronics.

The AI implications are direct. Memristors perform matrix multiplication - the operation that accounts for over 92% of the computation in systems like ChatGPT - in the analog domain: each device passes a current set by Ohm's Law, and those currents sum as they flow through the array, so the result emerges physically rather than through step-by-step digital calculations. At a moment when AI data center energy consumption is a binding constraint on capability scaling, a computing architecture that performs inference operations through physics rather than software processing opens a pathway to orders-of-magnitude efficiency gains. The heat resistance is structurally significant for two reasons. First, these devices could operate in environments where conventional silicon cannot survive, from geothermal wells to space to the interior of industrial processes. Second, heat tolerance translates directly into density: chips that can endure higher operating temperatures can be packed more tightly without the cooling infrastructure that currently accounts for a significant fraction of data center energy consumption.
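To make the Ohm's Law point concrete, here is a minimal Python sketch of an idealized memristor crossbar. It is a simplification under stated assumptions, not a model of the USC device: the function name `crossbar_matvec` and the conductance and voltage values are illustrative, and real arrays also contend with wire resistance, noise, and device variation. Each device stores a matrix entry as a conductance, input values arrive as voltages, and the current collected on each output wire is one entry of the matrix-vector product.

```python
def crossbar_matvec(conductances, voltages):
    """Simulate an ideal memristor crossbar computing a matrix-vector product.

    conductances[i][j]: conductance (siemens) of the device joining
                        input row i to output column j (a stored weight).
    voltages[i]:        voltage (volts) applied to input row i.
    Returns the current (amperes) collected on each output column.
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("need exactly one input voltage per row")
    currents = [0.0] * n_cols
    for i in range(n_rows):
        for j in range(n_cols):
            # Ohm's Law for a single device: I = G * V.
            # Adding the contributions on a shared column wire mirrors
            # how currents physically sum (Kirchhoff's current law).
            currents[j] += conductances[i][j] * voltages[i]
    return currents


# Illustrative values only (not taken from the paper).
G = [[1e-3, 2e-3],
     [3e-3, 4e-3]]
V = [1.0, 0.5]
currents = crossbar_matvec(G, V)
print(currents)
```

The loops here exist only because this is a software simulation. In hardware, every multiply-and-add in the product happens at once as current flows, which is where the potential efficiency gain comes from.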

OpenAI Publishes an Economic Framework for the Intelligence Age

OpenAI's 13-page policy paper proposing public wealth funds, robot taxes, portable worker benefits, and a four-day workweek is significant less for the novelty of its proposals than for the fact that the world's most valuable private AI company is now publicly describing the economic architecture that its own technology may require. The proposals arrived alongside a New Yorker investigation raising questions about CEO Sam Altman's trustworthiness, creating an unusual juxtaposition between institutional vision and personal credibility.

The policy proposals themselves echo frameworks that The Century Report has tracked through the Universal Basic Income & Labor Floor Policy arc: Ireland's permanent artist basic income, David Shapiro's eight building blocks of Universal High Income, and Peter Diamandis's UBI-to-UHI transition framework. What OpenAI adds is institutional weight. When a company valued at $852 billion publicly proposes shifting the tax burden from labor to capital, creating public wealth funds that give every citizen a stake in AI-driven growth, and subsidizing shorter workweeks, the proposals enter the policy conversation at a different scale than when they emerge from think tanks or independent researchers. The Quinnipiac polling data documented in the March 31 edition of The Century Report - 76% of Americans distrusting AI-generated information while 70% expect AI to reduce job opportunities - describes the public OpenAI is attempting to address with these proposals.

Critics have noted that OpenAI's proposals do not include binding commitments or specific mechanisms for implementation. Several policy experts quoted across coverage yesterday - including in Fortune's analysis - described the paper as "agenda-setting" rather than actionable. The deeper tension is that OpenAI is simultaneously the entity most responsible for the economic disruption the paper seeks to address and the entity proposing the solutions. This structural contradiction does not invalidate the proposals, but it does mean that the conversation OpenAI is trying to start cannot end with the same company that started it. The frameworks for distributing AI-generated prosperity will ultimately need to be built by institutions with democratic legitimacy, not by the companies whose products created the need.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a physical AI system crossing production-grade reliability on diverse manipulation tasks while improvising solutions outside its training distribution, three companies that compete on every commercial dimension pooling detection intelligence to defend their collective capabilities against systematic state-backed extraction, a memristor built from tungsten and graphene surviving temperatures beyond molten lava while performing AI's core computation through physics rather than software, and the world's most valuable private AI company publishing an economic framework for distributing the prosperity its own technology is generating. There's also friction, and it's intense - Iran published satellite imagery of OpenAI's $30 billion Abu Dhabi data center alongside an explicit destruction threat, following confirmed missile strikes on AWS and Oracle facilities in the region; a Wisconsin community became the first to put a data center to a public ballot as local resistance to AI infrastructure hardens into organized political opposition; and the CEO proposing a new economic compact for the intelligence age faces a hundred-source investigation into whether he can be trusted with the accumulation of power his proposals assume. But friction generates light, and light is what makes it possible to see the shape of what is actually being built beneath the noise.

Step back for a moment and you can see it: physical and digital intelligence systems converging toward general capability from opposite directions at the same moment, the organizations building the most powerful AI on Earth discovering that collective defense against shared threats outlasts individual advantage, the atomic architecture of computing being redesigned to operate in environments silicon was never built to survive, and the frameworks for a post-labor economy being drafted in public by the very institutions whose products are making those frameworks urgent. Every transformation has a breaking point. A fault line can rupture what has always held firm... or release the pressure that, unvented, would have shattered everything above it.

AI Releases & Advancements

New today

- Google: Released "Google AI Edge Eloquent" on iOS, a free offline-first AI dictation app powered by on-device Gemma-based ASR models; features real-time transcription, automatic filler-word removal, text transformation options (formal, short, long, key points), optional cloud Gemini enhancement, and no subscription required. (TechCrunch)

- Freestyle: Launched a cloud sandbox platform providing secure execution environments specifically designed for AI coding agents. (Freestyle)

- Glassbrain: Released a visual trace replay debugging tool for AI applications, enabling one-click bug fixing through visual playback of AI app execution traces. (Product Hunt)

Other recent releases

- thirdlayer.inc / Kevin Gu: Released AutoAgent, an open-source library that autonomously engineers and optimizes AI agent harnesses overnight without human intervention; achieved #1 on SpreadsheetBench (96.5%) and top GPT-5 score on TerminalBench (55.1%) in a 24-hour run. (MarkTechPost)

- Google: Released Google AI Edge Gallery on iOS, an iPhone app enabling on-device inference of Gemma 4 models locally on iPhone hardware. (App Store)

- Intel: Released vLLM Scaler v0.14.0-b8.1 with optimized Qwen3.5 support for Intel B70 AI hardware, enabling the 35B model to run on Intel's accelerator platform. (Reddit/LocalLLaMA)

- vLLM: Released vLLM v0.19.0 with 448 commits from 197 contributors; highlights include Gemma 4 support, Trusted Routing for inference, and numerous backend/performance improvements. (GitHub)

- OpenRouter: Launched Model Fusion, a feature enabling users to run multiple AI models side-by-side and combine their outputs for improved answers. (Product Hunt)

- Kreuzberg: Released Kreuzberg v4.7.0, a document intelligence library adding improved markdown quality and code intelligence for 248 programming languages, with bindings for Python, TypeScript, Go, Ruby, Java, C#, PHP, Elixir, R, C, and WASM. (Reddit)

Sources

Artificial Intelligence & Technology's Reconstitution

- Ars Technica: GEN-1 Physical Robotics AI Brings Production-Level Success Rates

- Bloomberg: OpenAI, Anthropic, Google Unite to Combat Model Copying in China

- The Verge: Iran Threatens OpenAI's Stargate Data Center in Abu Dhabi

- TechCrunch: Iran Threatens Stargate AI Data Centers

- Wired: Why Chip Packaging Could Decide the Next Phase of the AI Boom

- Ars Technica: Intel Is Going All-In on Advanced Chip Packaging

- Import AI 452: Scaling Laws for Cyberwar

- NBC News: Anyone Can Code With AI, But It Might Come With a Hidden Cost

- Meta Engineering: How Meta Used AI to Map Tribal Knowledge in Large-Scale Data Pipelines

- The Verge: Gemini Mental Health Interface Update

Institutions & Power Realignment

- TechCrunch: OpenAI's Vision for the AI Economy

- Fortune: Sam Altman Says AI Superintelligence Is So Big We Need a New Deal

- Politico: Wisconsin Town Revolts Against Trump-Backed Data Center Project

- ProPublica: The Federal Government Is Rushing Toward AI - Three Cautionary Tales

- Business Insider: Microsoft Says Copilot Not Just for Entertainment Purposes

Scientific & Medical Acceleration

- ScienceDaily: This New Chip Survives 1300°F and Could Change AI Forever

- Nature: This Method to Reverse Cellular Ageing Is About to Be Tested in Humans

- ScienceDaily: Scientists Discover the Goldilocks Secret Behind Life on Earth

- ScienceDaily: Scientists Found a Lost World of Animals That Shouldn't Exist Yet

- ScienceDaily: Scientists Say 7 Days of Meditation Can Rewire Your Brain

- ScienceDaily: This Diet Could Slash Cholera Infections by Up to 100x

Economics & Labor Transformation

- MIT Technology Review: The One Piece of Data That Could Shed Light on Your Job and AI

- CNBC: Iran War Upends Spring Housing Market

- CNBC: Novo Nordisk Wegovy Pill Launch Draws New Wave of Patients

Infrastructure & Engineering Transitions

- Utility Dive: Minnesota Approves Xcel's Utility-Owned Battery Program

- Utility Dive: Nevada PUC Approves NV Energy Plan to Join Day-Ahead Market

- Canary Media: How a Community Solar Breakthrough Took Shape in Illinois

- Canary Media: Where Does Balcony Solar Stand in Your State?

- Electrek: Michigan Just Unlocked $51M to Fix EV Charging Gaps

- Electrek: Broken EV Chargers? This Program Replaces Them for Free

- Electrek: Kia Launches Most Affordable EV at Lower Prices Than Expected

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.