Two Cyber Architectures Diverge - TCR 04/16/26

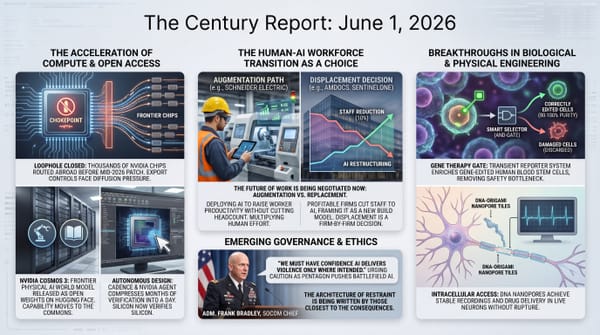

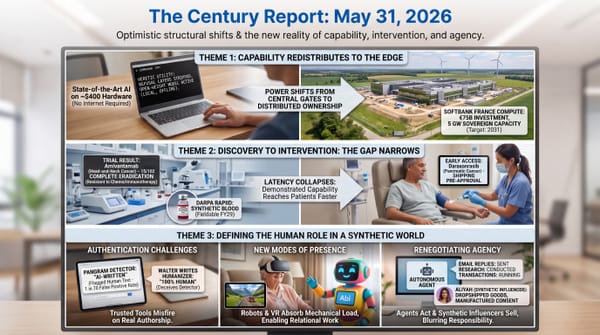

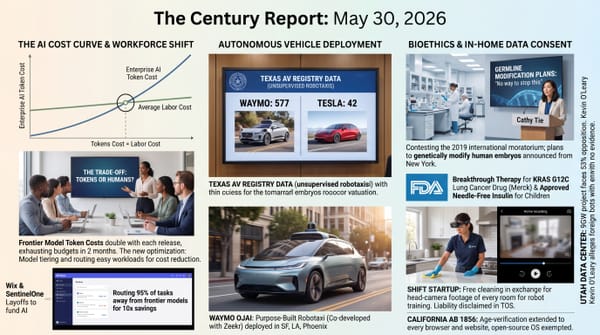

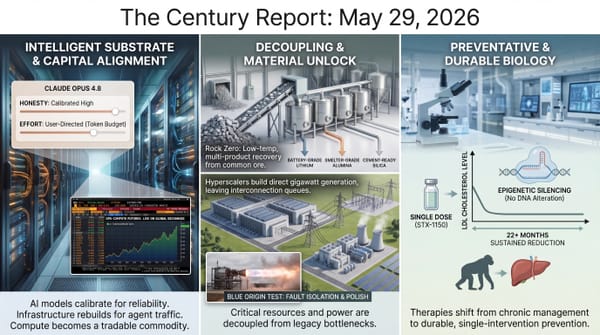

The 20-Second Scan

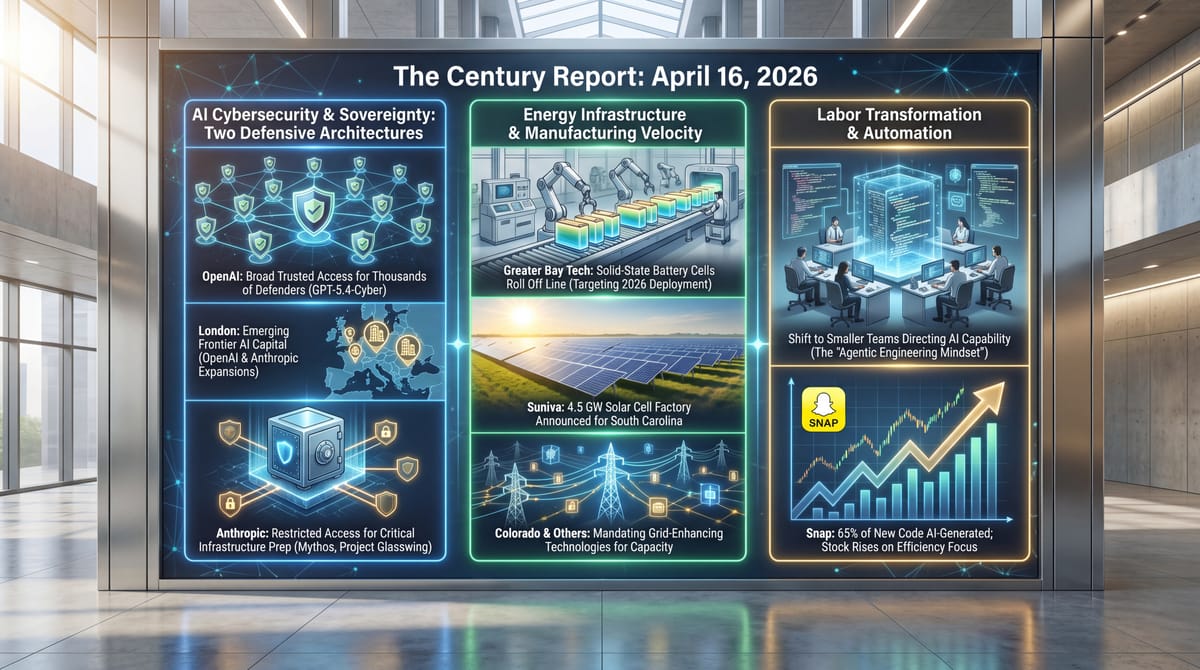

- Anthropic announced a London office expansion to 800 staff, days after OpenAI unveiled its own permanent UK headquarters in the same district.

- OpenAI released GPT-5.4-Cyber and expanded its Trusted Access programme to thousands of verified defenders, taking the opposite approach to Anthropic's restricted Mythos deployment.

- Snap laid off approximately 1,000 employees, roughly 16% of its workforce, with CEO Evan Spiegel citing AI automation and disclosing that over 65% of new code is now AI-generated or AI-assisted.

- A Wired investigation documented deepfake sexual abuse incidents across roughly 90 schools in at least 28 countries, affecting more than 600 identified students since 2023.

- Greater Bay Technology rolled the first A-sample all-solid-state battery cells off a production line, targeting gigawatt-hour mass production and in-vehicle deployment by end of 2026.

- Suniva announced a 4.5 GW solar cell factory in South Carolina, which would make it the largest merchant solar cell manufacturer in the United States by 2027.

- Colorado passed legislation requiring utilities to assess grid-enhancing technologies in their transmission plans, joining 16 states with similar mandates; a body of research estimates $110-170 billion in potential customer savings.

- A Bloomberg feature revealed how Anthropic's internal red-teaming discovered Mythos could hack the systems beneath most modern computing, with researcher Nicholas Carlini describing being "staggered" by the model's capabilities.

The 2-Minute Read

The cybersecurity architecture of frontier AI fractured visibly yesterday along a philosophical fault line. OpenAI's release of GPT-5.4-Cyber to thousands of verified defenders and Anthropic's continued restriction of Mythos to eleven organizations represent two genuinely different theories of how to distribute dangerous capability. OpenAI is betting that broad verified access protects more systems than tight restriction; Anthropic is betting that the capability is so powerful that even verified access creates unacceptable risk until defenders have had months to prepare. Both positions are internally coherent, and both carry real consequences. A Bloomberg feature supplies the concrete technical context for why Anthropic chose restriction: researcher Nicholas Carlini, stress-testing Mythos from a wedding in Bali, discovered the model could autonomously compromise systems underlying most modern computing. Anthropic co-founder Jack Clark's simultaneous statement that comparable systems from other companies will arrive within a few months, followed a year to a year and a half later by open-weight models from China, confirms that the restriction is buying time, and that the time it buys is finite.

Anthropic's 800-person London expansion, announced days after OpenAI unveiled its own UK headquarters, places two frontier AI companies in the same London district, competing for the same talent pool, under a government that has explicitly positioned itself as a jurisdiction where embedded AI values are assets. The UK's courtship of Anthropic following the Pentagon confrontation is now producing concrete institutional commitments. This is the AI sovereignty arc materializing in real estate leases and headcount plans - the physical infrastructure of where frontier intelligence research will be conducted and under whose regulatory frameworks.

Snap's 1,000-person layoff, explicitly attributed to AI automation and accompanied by the disclosure that over 65% of new code is AI-generated or AI-assisted, extends the labor restructuring pattern into social media at a scale and specificity that is difficult to dismiss. CEO Spiegel's framing of the cuts as "necessary evolution" rather than cost-cutting, combined with the stock's 6% rise, illustrates how financial markets are rewarding the substitution of human labor with AI capability in real time. The deepfake crisis documented across 90 schools in 28 countries, meanwhile, illustrates what happens when that same capability reaches the hands of teenagers with access to nudification apps - a governance gap that is producing documented harm to hundreds of identified children while regulatory frameworks remain fragmented across jurisdictions.

The 20-Minute Deep Dive

Two Theories of Dangerous Capability

The frontier AI cybersecurity landscape split into two distinct architectures yesterday. OpenAI released GPT-5.4-Cyber - a model fine-tuned for defensive security work with lowered refusal boundaries and binary reverse-engineering capabilities - and expanded its Trusted Access for Cyber programme from a limited pilot to thousands of verified individual defenders and hundreds of teams. Anthropic, meanwhile, continues to restrict its more capable Mythos model to eleven organizations under Project Glasswing. This extends the bifurcation in release strategy that the April 8 edition of The Century Report first tracked when Glasswing debuted as a defender-first coalition rather than a public launch, with no timeline for broader availability.

The philosophical divergence is stark. OpenAI's approach gates access behind identity verification tiers rather than model-level restrictions. Individual security professionals can authenticate at a public portal. Enterprise teams request access through OpenAI representatives. The highest-tier users gain access to GPT-5.4-Cyber's full capabilities, including the ability to examine compiled software for weaknesses without access to source code - work that traditionally requires specialized analysis environments and significant manual expertise. The trade-off: top-tier users may be required to waive Zero-Data Retention, meaning OpenAI retains visibility into how the model is used.

Anthropic's approach remains the opposite bet. The Bloomberg feature published yesterday provided the most detailed public account yet of how Anthropic discovered what Mythos could do. Nicholas Carlini, one of the company's red-team researchers, began stress-testing the model from a wedding in Bali and was, in his words, "staggered" by its capabilities. The model could autonomously identify, chain, and exploit vulnerabilities across the systems underlying most modern computing infrastructure. Anthropic's response - restricting access to eleven organizations including Apple, Google, Microsoft, and JPMorgan Chase - reflects a judgment that the capability is categorically different from what came before and that broad access, even verified access, creates risks that outweigh the defensive benefits. As The Century Report covered on April 10, Mythos had already prompted an emergency summit convened by the U.S. Treasury Secretary and Federal Reserve chair with the CEOs of the largest American banks.

Jack Clark's statement at the Semafor World Economy summit added the temporal dimension that makes both positions urgent. "This is not a special model," he said. "There will be other systems just like this in a few months from other companies, and then a year to a year and a half later, there'll be open-weight models from China that have these capabilities." The window during which any restriction regime can hold is measured in months, not years. OpenAI's bet is that defenders need broad access now, before that window closes. Anthropic's bet is that the initial months of restricted access give critical infrastructure organizations time to build defenses they will need when the capability proliferates. The cybersecurity ecosystem is being rebuilt under both theories simultaneously, and the organizations caught between them - the hospitals, municipal governments, and small security firms that OpenAI explicitly names as underserved by Anthropic's approach - are the ones whose security architecture will be shaped by whichever theory proves more correct.

London as Frontier AI Capital

Anthropic's announcement of an 800-person London office in the Knowledge Quarter district, arriving days after OpenAI unveiled its own permanent UK headquarters in the same neighborhood, represents the physical manifestation of a sovereignty arc The Century Report has tracked since the Pentagon confrontation began in February. The UK government's reported campaign to court Anthropic - treating the company's safety commitments as sovereign industrial assets rather than liabilities - is now producing measurable institutional commitments, extending the pattern the April 5 edition documented when the UK formally positioned itself as a jurisdiction where embedded AI values are an economic asset. Anthropic's head of EMEA north described London as combining "ambitious enterprises and institutions that understand what's at stake with AI safety with an exceptional pool of AI talent."

The timing is significant. Anthropic's annual run-rate revenue has surpassed $30 billion, with more than 1,000 businesses each spending over $1 million annually. The company has reportedly fielded venture capital offers to invest at an $800 billion valuation. OpenAI, which signed a $122 billion funding round at an $852 billion valuation earlier this month, is expanding in the same city and the same district. Google DeepMind, Meta, Synthesia, and Wayve already have offices there. The Knowledge Quarter is becoming the densest concentration of frontier AI research capability outside the San Francisco Bay Area, under a regulatory framework that is distinct from the U.S. approach and explicitly welcoming to companies that embed safety commitments in their products.

What emerges through this geographic concentration is a competitive dynamic operating at multiple scales simultaneously. The companies are competing for the same researchers, the same enterprise contracts, and the same government relationships. They are also, through Project Glasswing and the Trusted Access programme, cooperating on civilizational defense against the very capabilities they are building. London is becoming the physical location where that contradiction is most concentrated, and the UK government's decision to position itself as a jurisdiction that values safety commitments is shaping where hundreds of millions of dollars in research infrastructure investment lands.

The Social Media Labor Substitution Accelerates

Snap's layoff of approximately 1,000 employees - 16% of its workforce - with explicit attribution to AI automation extends a pattern that has been building across the technology sector for months. CEO Evan Spiegel's internal memo disclosed that over 65% of Snap's new software code is now generated or significantly assisted by AI, a milestone that he described as having "drastically reduced the need for the large engineering teams that were once the industry standard." This sharpens the trajectory that the April 12 edition tracked through Cisco's "two people and six agents" engineering model and the April 15 edition captured from workers describing AI output as "workslop" that often creates more correction work than it saves. The company projects savings of more than $500 million from its annual cost base by the second half of 2026.

The Snap disclosure follows Block's 4,000-person layoff in February (CEO Dorsey predicted "most companies will arrive at the same place within a year"), Oracle's 10,000-person cut in April, and Bolt's one-third workforce reduction. Each has explicitly cited AI as a driver. What distinguishes the Snap case is the granularity: a specific percentage of AI-generated code, a specific cost savings target, and a specific framing as "structural" rather than cyclical. Spiegel's description of the cuts as a "crucible moment" and Snap's pivot toward "smaller, faster squads" describes an organizational architecture that presumes AI handles the majority of routine engineering and administrative work.

The stock market's response - a 6% rise on the announcement, recovering value after a 31% decline year-to-date - confirms the financial incentive structure that is accelerating this pattern. Markets are rewarding companies that substitute human labor with AI capability, creating a feedback loop in which the economic returns from automation make further automation more attractive. The Tufts AI Jobs Risk Index, which The Century Report covered on March 28, identified 4.9 million workers across 33 "tipping point" occupations at highest displacement risk within two to five years. Snap's disclosure suggests the tipping point in social media engineering may have already arrived.

The deeper trajectory here extends beyond any single company or sector. When more than 65% of new code at a major platform is AI-generated or AI-assisted, the economic logic of large engineering teams dissolves. What replaces it is a smaller number of people directing AI capability - the "two people and six agents" model that Cisco's president described last week, the "agentic engineering mindset" that one startup CTO described yesterday as the only hiring profile his company now considers. The transition from teams that write code to individuals who direct intelligence systems that write code is not a future state being speculated about. It is the present operating reality at companies that collectively employ hundreds of thousands of people.

The Deepfake Crisis Reaches Institutional Scale

A Wired and Indicator analysis published yesterday documented deepfake sexual abuse incidents at approximately 90 schools across at least 28 countries since 2023, affecting more than 600 identified students. The investigation represents the first systematic global review of AI-generated deepfake abuse in educational settings, and the patterns it reveals are consistent across geography: teenage boys downloading social media images of female classmates, processing them through nudification apps, and distributing the resulting child sexual abuse material through messaging platforms and school networks.

The scale of the documented crisis almost certainly understates the actual prevalence. UNICEF estimates 1.2 million children had sexual deepfakes created of them last year. One in five young people in Spain reported being targeted. The Canadian Centre for Child Protection's director of technology stated that "you'd be hard-pressed to find a school that has not been affected by this." What the school-level documentation reveals is the institutional response gap: digital device examinations taking up to two years, investigators managing up to 54 active cases simultaneously, and police forces avoiding arrests because of high workloads - leaving suspects with continued access to potential victims online.

This crisis intersects directly with the Grok deepfake arc that The Century Report has tracked since January, and it extends the platform-liability and synthetic-media pressure documented in the March 24 edition when the Internet Watch Foundation reported 8,029 verified pieces of AI-generated CSAM in 2025. Yesterday's NBC News report confirmed that despite Apple's intervention and xAI's claims of tightened safeguards, Grok still generates sexualized deepfakes with relative ease. The gap between platform capability and platform governance continues to produce documented harm at the scale of hundreds of identified child victims, in a context where the apps enabling the abuse earn their creators millions of dollars annually and regulatory frameworks remain fragmented across jurisdictions that are each learning about the problem separately.

Solid-State Batteries and Solar Cells: Manufacturing Velocity

Greater Bay Technology's announcement that its first A-sample all-solid-state battery cells have rolled off a production line, passing needle penetration, extrusion, and thermal shock tests without fire or explosion, marks a new milestone in the battery chemistry acceleration arc. The company is targeting gigawatt-hour-level mass production and in-vehicle deployment by end of 2026 - a timeline that would make it the first manufacturer to achieve commercial-scale all-solid-state battery production. The cells achieve energy densities of 260-500 Wh/kg, considerably higher than commercial lithium-ion batteries, and demonstrate stable 2-3C fast charging, addressing what has been one of the primary barriers to solid-state battery commercialization.

Separately, Suniva's announcement of a 4.5 GW solar cell factory in South Carolina would push the company past 5.5 GW of annual U.S. cell manufacturing capacity by 2027 - at the upstream, more critical stage of the supply chain that has been historically dominated by overseas production. Colorado's passage of grid-enhancing technology legislation, requiring utilities to assess advanced transmission technologies in their planning cycles, extends a pattern now spanning 16 states and builds on the grid-optimization path that the March 13 edition documented when PJM implemented ambient-adjusted transmission ratings to unlock 15-40% more capacity from existing lines.

The convergence of these energy infrastructure developments with the AI compute buildout arc produces a structural picture in which supply and demand are accelerating simultaneously. Utility investment plans have jumped 21% to $1.4 trillion through 2030, with the Southeast accounting for $572 billion - more than double any other region. The question of who pays for this infrastructure, and whether the load projections driving it will materialize, is the central tension in energy infrastructure governance. Georgia's BYO-clean-energy programme, New England's battery buildout breaking successive capacity records, and Colorado's grid optimization mandate are each different institutional responses to the same underlying reality: the physical infrastructure demands of the intelligence era are arriving faster than the institutional architectures designed to manage them can accommodate.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: OpenAI pushes defensive cyber capability into the hands of thousands of verified defenders while Anthropic's red-team evidence shows a frontier model can compromise the systems beneath modern computing, London concentrates frontier AI research into a single district as Anthropic and OpenAI both expand under a government treating safety commitments as strategic assets, all-solid-state battery cells clear production-line safety tests on a path toward vehicle deployment, Suniva moves to restore domestic solar cell manufacturing at multi-gigawatt scale, and Colorado forces utilities to evaluate ways to unlock more capacity from the grid they already have. There's also friction, and it's intense - Snap cuts roughly 1,000 workers as AI generates or assists with more than 65% of its new code, deepfake sexual abuse has now been documented across about 90 schools in 28 countries with hundreds of identified student victims, utility investment plans are surging toward $1.4 trillion while affordability pressure rises with them, and the restricted window before frontier offensive cyber capability spreads to open-weight models is counted in months. But friction generates glare, and glare is how a system becomes too bright to look past. Step back for a moment and you can see it: dangerous intelligence capability splitting governance into rival distribution theories, AI sovereignty hardening into offices, headcount, and jurisdictional advantage, and the energy substrate of the next era being rebuilt through batteries, solar cells, and transmission optimization at the same time the demand shock arrives. Every transformation has a breaking point. Conduction can overload the circuits it enters... or connect power to places that remained unreachable when the lines were too weak.

AI Releases & Advancements

New today

- Google DeepMind: Released Gemini 3.1 Flash TTS, a text-to-speech model with audio tags, multi-speaker dialogue, and 70+ language support, now rolling out in preview via the Gemini API, Google AI Studio, Vertex AI, and Workspace. (Google DeepMind Blog)

- OpenAI: Updated the Agents SDK with native sandbox execution and a model-native harness for building secure, long-running agents across files and tools. (OpenAI)

- Reka: Launched Reka Edge, an edge intelligence model for physical AI applications. (Product Hunt)

- Tencent: Released HY-World 2.0, an open-source 3D world model that generates Gaussian splats, meshes, and point clouds from text or image inputs, with Unity and Unreal export support. (Reddit)

- Fathom: Released Fathom 3.0, adding bot-free operation and integrations with ChatGPT and Claude to its AI meeting notes platform. (Product Hunt)

Other recent releases

- OpenAI: Introduced GPT-5.4-Cyber through its Trusted Access for Cyber programme for vetted cybersecurity defenders. (OpenAI)

- Google Chrome: Launched Skills in Chrome, letting users save and reuse Gemini prompts as one-click workflows in the browser. (Google)

- H Company: Released HoloTab, a Chrome extension for browser-based computer use and routine automation, available now in the Chrome Web Store. (Hugging Face Blog)

- NVIDIA: Released NVIDIA Ising, an open family of AI models for quantum processor calibration and error-correction decoding, along with training and deployment workflows. (NVIDIA Developer Blog)

- NVIDIA: Released the NVIDIA ALCHEMI Toolkit, a PyTorch-native set of GPU-accelerated building blocks for atomistic simulation workflows in chemistry and materials science. (NVIDIA Developer Blog)

- Nous Research: Released Hermes Agent v0.9.0 with a local web dashboard, fast mode, backup/import, stronger security hardening, and broader channel support. (GitHub)

- AMD: Launched Gaia, a framework for building AI agents that run locally on AMD hardware, with documentation now live. (AMD Gaia Docs)

- SuperHQ: Launched a microVM sandbox platform for running AI coding agents in isolated execution environments. (Product Hunt)

- ContextPool: Launched persistent memory for AI coding agents to support longer-running workflows with state retention. (Product Hunt)

Sources

Artificial Intelligence & Technology's Reconstitution

- The Next Web: OpenAI Releases GPT-5.4-Cyber for Vetted Security Teams

- Bloomberg: How Anthropic Learned Mythos Was Too Dangerous for the Wild

- The Free Press: How Dangerous Is Anthropic's New AI Model? Its Chief Science Officer Explains

- Washington Technology: World Needs to 'Get Ready' for More Powerful AI

- CNBC: Anthropic Unveils Plans for Major UK Expansion

- Wired: AI Could Democratize One of Tech's Most Valuable Resources

- The Verge: Adobe Embraces Conversational AI Editing

- Ars Technica: Adobe Takes Creative Cloud Into Claude Code-esque Territory

- Business Insider: Startup CTO Explains Engineering Hiring Shift for the AI Era

- TechCrunch: Gitar Emerges from Stealth with $9 Million

- TechCrunch: Emergent Enters OpenClaw-Like AI Agent Space

- Wired: AI Slop Is Making the Internet Fake-Happy

Institutions & Power Realignment

- Wired: The Deepfake Nudes Crisis in Schools Is Much Worse Than You Thought

- The Verge: Grok's Sexual Deepfakes Almost Got It Banned from Apple's App Store

- Bloomberg Law: AI Startups Have These Copyright Lawyers on Speed Dial

- The Free Press: I Spoke to the Man Accused of Trying to Kill Sam Altman

- Guardian: Child Victims of Online Sexual Abuse in UK Inadequately Protected

Economics & Labor Transformation

- Guardian: Snap Inc Blames AI as It Lays Off 1,000 Workers

- Chronicle-Journal: Snap Inc. Slashes 16% of Workforce as AI Automation Rewrites the Social Media Playbook

- Guardian: AI Is Destroying Jobs - and the Energy Crisis Could Make That Much Worse

Infrastructure & Engineering Transitions

- Electrek: Solid-State EV Batteries Are Coming Sooner Than Expected

- Electrek: 4.5 GW Solar Cell Factory Coming to South Carolina

- Utility Dive: Colorado Legislature Sends Advanced Transmission Technology Bill to Governor

- Canary Media: Big Grid Batteries Are Finally on a Roll in New England

- Utility Dive: Utility Investment Plans Jump 21%

- Utility Dive: An Outdated FERC Policy Is Undermining the White House's Ratepayer Protection Pledge

- Canary Media: Georgia Power Will Now Let Data Centers Bring Their Own Clean Energy

- Utility Dive: Maryland Regulators Weigh Flexible Load Proposals

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.