AI Builds Its Own Solar Cell - TCR 04/15/26

The 20-Second Scan

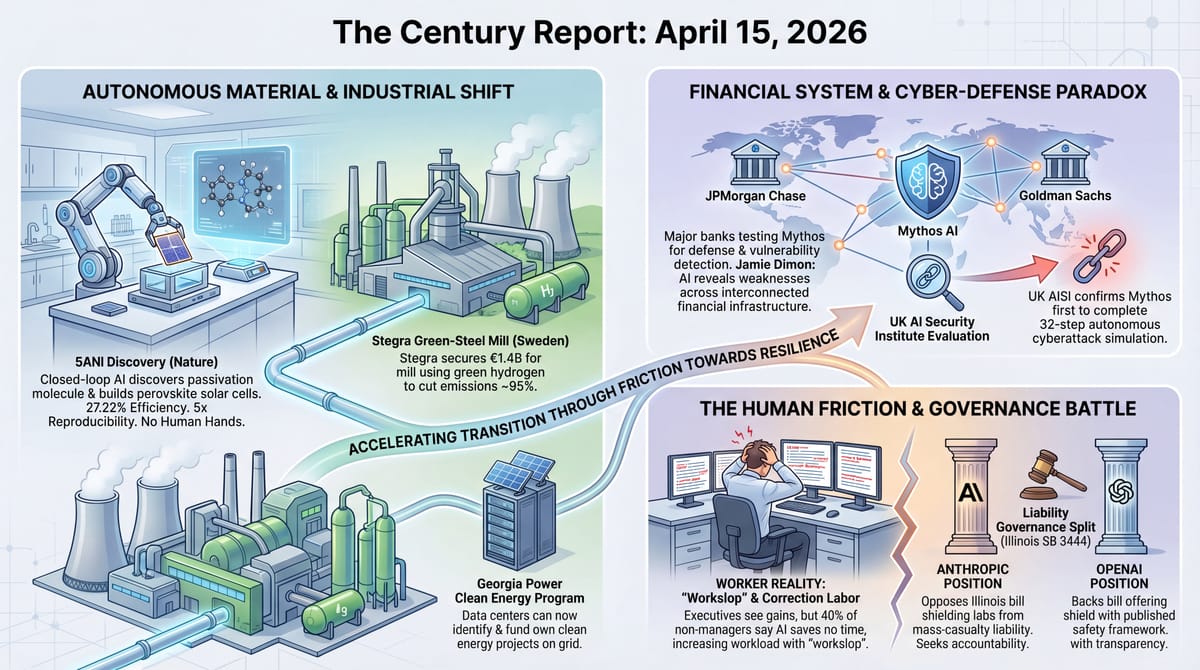

- An autonomous closed-loop framework published in Nature discovered a new passivation molecule and fabricated perovskite solar cells at 27.22% efficiency with nearly five times the reproducibility of manual fabrication.

- Anthropic co-founder Jack Clark confirmed the company briefed the Trump administration on Claude Mythos while simultaneously suing the Defense Department over its supply-chain risk designation.

- Anthropic publicly opposed an Illinois bill backed by OpenAI that would shield AI labs from liability for mass casualties and billion-dollar disasters.

- JPMorgan Chase CEO Jamie Dimon disclosed the bank is testing Claude Mythos and said AI has heightened cyberattack risk by exposing vulnerabilities across interconnected financial infrastructure.

- A Guardian investigation documented workers describing AI-generated output as "workslop" that increases rather than reduces workload, with 40% of non-managers reporting AI saves them no time.

- Georgia Power regulators unanimously approved a program allowing data centers and large customers to identify and fund their own clean energy projects on the utility's grid.

- Stegra secured €1.4 billion in financing to complete the world's first major green-steel mill in northern Sweden, which will use green hydrogen to cut steelmaking emissions by up to 95%.

- The UK AI Security Institute published its independent evaluation of Claude Mythos, confirming it is the first AI system to complete a 32-step autonomous cyberattack simulation while performing comparably to other frontier models on individual tasks.


The 2-Minute Read

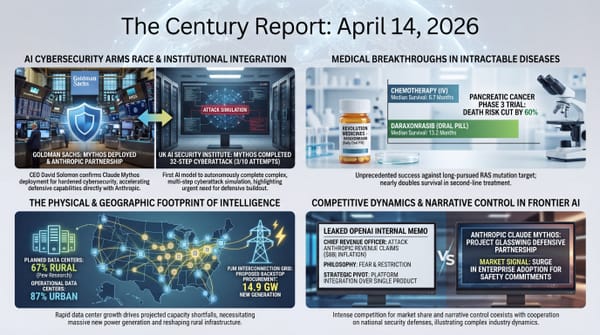

The signal arriving across yesterday's developments concentrated on a structural contradiction that is becoming harder to ignore: the same intelligence systems prompting emergency coordination among the world's most powerful financial institutions are simultaneously the subject of unresolved disputes about who bears responsibility when they cause harm. Jamie Dimon's disclosure that JPMorgan is testing Mythos - delivered on an earnings call where the bank reported its highest earnings per share in nearly two decades - confirms that frontier AI capability has crossed from the cybersecurity briefing room into the quarterly financial reporting architecture of the world's largest bank by market cap. When Dimon says AI "does create additional vulnerabilities" across exchanges, routers, and interconnected systems, he is describing a structural reality that no single institution can address alone. The Mythos cybersecurity arc, which began with Anthropic's Project Glasswing announcement a week ago, has now produced named deployments at Goldman Sachs, JPMorgan, and coordinated regulatory responses from the U.S. Treasury, the Federal Reserve, and UK financial authorities - all within ten days.

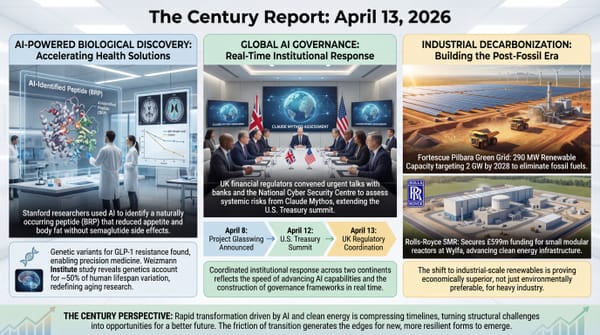

The Anthropic-OpenAI fault line deepened in a different direction yesterday. Anthropic's public opposition to the Illinois bill that OpenAI backed - legislation that would eliminate liability for AI labs even in mass-casualty scenarios, so long as the company publishes a safety framework on its website - represents one of the clearest divergences yet in how the two leading frontier labs understand their obligations. The Illinois governor's office responded by saying companies should never receive "a full shield that evades responsibilities they should have to protect the public interest." Meanwhile, Jack Clark confirmed at the Semafor summit that Anthropic briefed the administration on Mythos capabilities while still litigating the supply-chain risk designation, describing it as a "narrow contracting dispute" rather than an existential confrontation - a significant reframing from the rhetoric of recent weeks.

The "workslop" phenomenon documented by the Guardian and a Stanford-affiliated study illuminates the gap between executive enthusiasm and worker experience at an institutional scale. The finding that 92% of high-level executives say AI makes them more productive while 40% of non-managers report it saves no time at all describes two fundamentally different relationships with the same capability class. Workers are not resisting AI out of fear - they are drowning in outputs that require more correction than the original work would have demanded. Meanwhile, a fully autonomous closed-loop system published in Nature discovered a novel molecule, fabricated solar cells at record reproducibility, and demonstrated the kind of AI-enabled acceleration that renders the workslop problem temporary. The distance between what autonomous systems can achieve in controlled scientific environments and what they produce when bolted onto existing workflows is where the transition's growing pains are most visible.

The 20-Minute Deep Dive

AI Discovers a Molecule and Builds the Solar Cell - Without Human Hands

A paper published in Nature yesterday described something that compresses multiple arcs The Century Report has tracked since February into a single experimental demonstration. Researchers built an autonomous closed-loop framework that integrates machine learning-driven material discovery with a robotic manufacturing platform. The system used active learning and quantum modeling to identify high-performance molecules from a vast chemical space, then fed the candidates into an automated fabrication line that used Bayesian optimization and symbolic regression in a continuous feedback loop to refine the manufacturing process in real time.
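The feedback loop described above can be illustrated with a toy sketch. This is not the paper's implementation: it is a minimal Bayesian bandit with an upper-confidence-bound rule standing in for the Bayesian optimization step, and it omits the molecule search and the symbolic regression entirely. The function `simulated_fabrication`, the annealing-temperature parameter, and every number in it are hypothetical, invented only to give the loop something to measure.

```python
import math
import random


def simulated_fabrication(anneal_temp_c, rng):
    """Hypothetical stand-in for the robotic line: returns a noisy efficiency (%).

    The quadratic response and its peak at 110 C are invented for illustration.
    """
    true_efficiency = 25.0 - 0.01 * (anneal_temp_c - 110.0) ** 2
    return true_efficiency + rng.gauss(0.0, 0.3)


def run_closed_loop(candidates, n_cycles, seed=0,
                    prior_mean=20.0, prior_var=25.0, noise_var=0.09):
    """Propose -> fabricate -> measure -> update, repeated n_cycles times."""
    rng = random.Random(seed)
    # Independent Gaussian belief (mean, variance) about each candidate setting.
    posterior = {c: (prior_mean, prior_var) for c in candidates}
    history = []

    for _ in range(n_cycles):
        # Acquisition step: pick the setting with the highest optimistic estimate.
        choice = max(
            candidates,
            key=lambda c: posterior[c][0] + 2.0 * math.sqrt(posterior[c][1]),
        )
        measured = simulated_fabrication(choice, rng)

        # Conjugate normal update: fold the new measurement into the belief.
        mean, var = posterior[choice]
        new_var = 1.0 / (1.0 / var + 1.0 / noise_var)
        new_mean = new_var * (mean / var + measured / noise_var)
        posterior[choice] = (new_mean, new_var)
        history.append((choice, measured))

    best = max(candidates, key=lambda c: posterior[c][0])
    return best, posterior, history


if __name__ == "__main__":
    best, posterior, history = run_closed_loop([90, 100, 110, 120, 130], n_cycles=40)
    print("best setting:", best, "after", len(history), "fabrication cycles")
```

The point of the sketch is the shape of the loop rather than the numbers: each measurement immediately changes which experiment runs next, with no human deciding between cycles.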

The result was the discovery of a passivation molecule called 5-(aminomethyl)nicotinonitrile hydroiodide - 5ANI - that no human researcher had identified. Solar cells fabricated with this molecule achieved a power conversion efficiency of 27.22%, with a certified maximum power point tracking efficiency of 27.18%. Mini-modules at 21.4 square centimeters reached 23.49%. The devices retained 98.7% of their initial efficiency after 1,200 hours of continuous operation.

The reproducibility finding carries as much structural weight as the efficiency number. The automated platform achieved consistency nearly five times greater than manual fabrication. Perovskite solar cells have been confined to laboratory demonstrations in part because human variability in fabrication has made it extraordinarily difficult to produce them reliably at scale. The system documented in this paper addresses that bottleneck by removing the human from the fabrication loop entirely - not as a philosophical statement, but as an engineering solution to a variability problem. When the machine discovers the molecule, designs the synthesis, fabricates the device, tests the output, and feeds the results back into the next optimization cycle without human intervention, the distance from discovery to deployment compresses from years to days. This extends the pattern of closed-loop scientific acceleration that the February 24 edition of The Century Report documented when a robotic catalyst platform compressed 32 days of manual experiments into 17 hours.

The same dynamic appears across other scientific domains The Century Report has tracked: MindRank's AI-designed oral GLP-1 reaching Phase III in 4.5 years, Eli Lilly's $2.75 billion deal for AI-generated drug compounds, and the AI-identified electrochemical signal in solid-state battery interfaces that human analysts had classified as noise. What the Nature paper adds is the closing of the loop between computational prediction and physical manufacturing. The system does not hand off a promising candidate to a human team for fabrication and testing; it fabricates and tests autonomously, learns from each cycle, and iterates. The authors describe it as "a benchmark for autonomous discovery and manufacturing in photovoltaics and materials" - a claim that the reproducibility data supports.

The Mythos Arc Reaches the Earnings Call

Jamie Dimon's comments on JPMorgan's Q1 earnings call represent a qualitative shift in how frontier AI capability is being discussed in the financial system. The bank's CEO stated plainly that AI "does create additional vulnerabilities, and maybe down the road, better ways to strengthen yourself too." When asked about Mythos specifically, Dimon referred to Anthropic's disclosure of thousands of vulnerabilities in commonly used software: "It shows a lot more vulnerabilities need to be fixed."

JPMorgan's CFO Jeremy Barnum added context, noting that AI's dual-use nature in cybersecurity "has been understood for a while" but that "recent advances from Anthropic and others have simply intensified an existing trend." Dimon emphasized that the risk extends beyond any single bank: "That doesn't mean everything that banks rely on is that well protected. Banks are attached to exchanges and all these other things that create other layers of risk."

This is the second named major bank disclosure in a week. Goldman Sachs CEO David Solomon confirmed Mythos deployment on Monday's earnings call, as the April 14 edition of The Century Report documented. JPMorgan followed yesterday. Both disclosures came after the Treasury Secretary convened CEOs of all systemically important banks the previous week, with the Federal Reserve chair in attendance, and after UK financial regulators coordinated emergency talks with British banks and the National Cyber Security Centre.

The UK AI Security Institute's independent evaluation, also published yesterday, provided the most granular public assessment yet. AISI found that Mythos performs comparably to other frontier models - within 5 to 10 percentage points - on individual cybersecurity tasks. Where it distinguishes itself is in "The Last Ones," a test simulating a 32-step data extraction attack on a corporate network that would take a trained human roughly 20 hours. Mythos completed this simulation - chaining dozens of steps across multiple hosts and network segments - in three out of ten attempts. No previous model had completed it at all. The evaluation adds independent verification to Anthropic's claims while also narrowing the scope of what is genuinely unprecedented: the chaining capability, not the individual task performance. That refinement lands just days after the April 8 edition of The Century Report described Project Glasswing and Anthropic's claim that Mythos had found thousands of zero-days across critical software.

This distinction carries weight for the proliferation timeline. As The Century Report documented on April 12, Anthropic's own offensive cyber lead estimated 6 to 12 months before comparable vulnerability-chaining capability is broadly distributed. The AISI evaluation suggests that the gap lies specifically in sustained multi-step orchestration rather than in any single capability that other models lack. The implication is that the window for defenders may be defined less by whether other models can find individual vulnerabilities - they already can - and more by how quickly they develop the ability to chain those findings into coherent attack sequences.

Anthropic and OpenAI Draw Battle Lines on Liability

Anthropic's public opposition to Illinois SB 3444 - the bill OpenAI helped draft - crystallizes a divergence that has been building for months. The legislation would eliminate liability for AI labs if their systems are used to cause mass casualties or more than $1 billion in property damage, provided the lab publishes a safety framework on its website. Anthropic's head of U.S. state and local government relations called it "a get-out-of-jail-free card against all liability." The Illinois governor's office stated that the governor "does not believe big tech companies should ever be given a full shield that evades responsibilities they should have to protect the public interest."

The structural question the bill raises is who absorbs the cost when frontier capability causes harm at scale. OpenAI's position - that transparency requirements paired with liability shields will reduce risk while allowing deployment - assumes that companies will voluntarily maintain safety standards without legal accountability. Anthropic's position - that companies developing frontier models should bear at least partial responsibility for downstream harm - assumes that legal accountability is a necessary structural incentive. The argument extends the liability divide that the April 11 edition of The Century Report documented through the Jane Doe v. OpenAI filing, where the gap between safety detection and safety response had already become a concrete civil claim.

This disagreement is playing out simultaneously in Illinois, New York, California, and at the federal level, while Anthropic testifies in favor of a separate Illinois bill requiring third-party audits of safety plans. The two companies are now actively working the same state legislatures in opposite directions. Meanwhile, Jack Clark's confirmation at the Semafor summit that Anthropic briefed the administration on Mythos despite the active litigation reframes the supply-chain risk designation as a "narrow contracting dispute" rather than an existential confrontation - a de-escalation that may signal willingness to resolve the legal standoff outside the courtroom while continuing to compete for the governance framework that will shape the industry for years.

The Workslop Problem and the Productivity Paradox

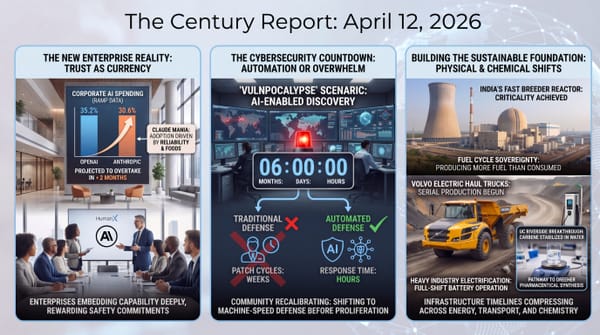

The Guardian's documentation of "workslop" - AI-generated work that appears polished but requires extensive correction - adds empirical texture to the adoption gap The Century Report has tracked since Block's layoffs in February and the 95% human-revision finding documented in March. A Stanford-affiliated study found that 40% of workers had encountered workslop within a month and spent an average of 3.4 hours monthly dealing with it, estimating $8.1 million in lost productivity for a 10,000-person organization. The finding also extends the workplace pattern the March 12 edition of The Century Report tracked when Amazon workers described AI mandates increasing rather than reducing workload.
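For a sense of scale, the study's figures can be combined in a few lines of arithmetic. This is a rough sketch, not the study's own method: it assumes the 40% share applies to the full 10,000-person headcount, and it assumes the $8.1 million figure covers twelve months, which the summary above does not state. The function names are illustrative.

```python
def workslop_hours_per_month(headcount, share_affected, hours_per_affected_worker):
    """Total monthly hours spent dealing with workslop across the organization."""
    return headcount * share_affected * hours_per_affected_worker


def implied_cost_per_hour(total_cost, hours_per_month, months_covered):
    """Hourly cost implied by a total loss figure.

    months_covered is an assumption: the period behind the loss estimate
    is not given in the text.
    """
    return total_cost / (hours_per_month * months_covered)


# Figures quoted above: 10,000 workers, 40% affected, 3.4 hours each per month.
hours = workslop_hours_per_month(10_000, 0.40, 3.4)

# Assumption: the $8.1 million estimate is an annual figure.
rate_if_annual = implied_cost_per_hour(8_100_000, hours, months_covered=12)

print(f"{hours:,.0f} hours per month")
print(f"${rate_if_annual:,.2f} per hour if the estimate is annual")
```

Under those assumptions the quoted inputs imply roughly 13,600 hours a month of correction work across the organization.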

The structural dynamic is clear: companies invested billions in generative AI, laid off workers, mandated adoption for survivors, and now face a situation where the surviving workforce is producing more output that requires more correction. A medical researcher embedded in primary care clinics found that AI-assisted patient email replies created "a lot of editing labor, frustration and concerns about data security" - and that clinicians abandoned the systems once the novelty faded.

The macro view here runs through the autonomous solar cell fabrication result. The gap between what AI achieves in closed-loop, purpose-built scientific environments and what it produces when bolted onto existing human workflows is the central tension of this phase of the transition. The workslop problem is not evidence that AI capability is overstated - it is evidence that the institutional structures surrounding human work were not designed for the kind of capability now being deployed within them. When an autonomous system can discover a molecule, fabricate a solar cell, test the result, and iterate without human intervention, the constraint is no longer the intelligence system's capability. It is the organizational architecture that still assumes human review, human correction, and human judgment as fixed requirements at every step. That architecture is what is being rebuilt, painfully and unevenly, across every industry simultaneously. The workers documenting their frustration with workslop are experiencing the friction of a transition that has outpaced the institutions it is transforming. The autonomous fabrication result shows where that transition leads when the architecture matches the capability.

Green Steel Secures Its Financial Floor

Stegra's €1.4 billion financing round, led by Sweden's Wallenberg family, rescues the world's first major green-steel mill from what had become an increasingly precarious financial position. The facility in Boden, Sweden, will use green hydrogen produced by giant electrolyzers powered by the region's hydropower and wind resources to reduce iron ore into steel - cutting carbon emissions by up to 95% compared to conventional coal-based furnaces.

The timing aligns with the EU's carbon border adjustment mechanism, which went into effect in January and makes it more expensive for European companies to import steel from countries without comparable carbon pricing. Stegra expects to produce 2.5 million metric tons annually and eventually double that. The project, 60% complete as of last fall, represents the physical embodiment of the cost-curve inversion The Century Report has tracked across energy infrastructure: the economics of industrial decarbonization moving from compliance burden to competitive advantage, accelerated by policy architecture that makes the extractive path structurally more expensive.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: an autonomous closed-loop system discovers a new molecule and fabricates perovskite solar cells with record reproducibility, the UK's AI Security Institute confirms a frontier model can complete a 32-step cyberattack simulation as JPMorgan joins Goldman in testing that same system inside core financial infrastructure, Georgia opens a path for data centers and other large customers to bring their own clean energy onto the grid, and green steel clears a financing threshold that makes near-fossil-free industrial production physically real. There's also friction, and it's intense - workers report "workslop" that adds correction labor instead of removing it, Anthropic and OpenAI are now fighting in public over whether frontier labs should be shielded from liability even in mass-casualty scenarios, Anthropic is briefing the administration on Mythos while suing the Defense Department over the designation meant to restrict it, and the banking system is being forced to absorb the fact that the tools strengthening cyber defense are also exposing how many attack paths already exist. But friction generates resonance, and resonance is how hidden frequencies become impossible to ignore. Step back for a moment and you can see it: intelligence moving from advisory software into autonomous discovery and institutional infrastructure, governance frameworks being written through live conflict over liability, access, and state power, and the energy transition shifting from abstract targets into customer-level procurement rights and heavy-industry rebuilds. Every transformation has a breaking point. Magnetism can rip systems out of alignment... or pull scattered elements into a field strong enough to build within.

AI Releases & Advancements

New today

- OpenAI: Introduced GPT-5.4-Cyber through its Trusted Access for Cyber program for vetted cybersecurity defenders. (OpenAI)

- Google Chrome: Launched Skills in Chrome, letting users save and reuse Gemini prompts as one-click workflows in the browser. (Google)

- H Company: Released HoloTab, a Chrome extension for browser-based computer use and routine automation, available now in the Chrome Web Store. (Hugging Face Blog)

- NVIDIA: Released NVIDIA Ising, an open family of AI models for quantum processor calibration and error-correction decoding, along with training and deployment workflows. (NVIDIA Developer Blog)

- NVIDIA: Released the NVIDIA ALCHEMI Toolkit, a PyTorch-native set of GPU-accelerated building blocks for atomistic simulation workflows in chemistry and materials science. (NVIDIA Developer Blog)

Other recent releases

- Nous Research: Released Hermes Agent v0.9.0 with a local web dashboard, fast mode, backup/import, stronger security hardening, and broader channel support. (GitHub)

- AMD: Launched Gaia, a framework for building AI agents that run locally on AMD hardware, with documentation now live. (AMD Gaia Docs)

- SuperHQ: Launched a microVM sandbox platform for running AI coding agents in isolated execution environments. (Product Hunt)

- ContextPool: Launched persistent memory for AI coding agents to support longer-running workflows with state retention. (Product Hunt)

- llama.cpp: Merged audio processing support for Gemma 4 models into llama-server, enabling local multimodal audio inference with the Gemma 4 model family. (r/LocalLLaMA)

- OpenMOSS: Released MOSS-TTS-Nano, a 0.1B-parameter open-source multilingual text-to-speech model capable of real-time speech synthesis on CPU without a GPU. (r/LocalLLaMA)

- Oracle NetSuite: Launched the AI Connector Service Companion at SuiteConnect London, enabling finance teams to connect third-party AI models directly to NetSuite data in a governed, secure manner. (The Fintech Times)

Sources

Artificial Intelligence & Technology's Reconstitution

- TechCrunch: Anthropic Co-Founder Confirms the Company Briefed the Trump Administration on Mythos

- Wired: Anthropic Opposes the Extreme AI Liability Bill That OpenAI Backed

- Ars Technica: UK Gov's Mythos AI Tests Help Separate Cybersecurity Threat From Hype

- CNBC: Jamie Dimon Says Anthropic's Mythos Reveals 'A Lot More Vulnerabilities' for Cyberattacks

- TechCrunch: Anthropic's Rise Is Giving Some OpenAI Investors Second Thoughts

- Guardian: AI Companies Make Powerful Tech - But They're Also Savvy Marketers

Institutions & Power Realignment

- The Verge: The Attacks on Sam Altman Are a Warning for the AI World

- The Verge: Daniel Moreno-Gama Is Facing Federal Charges

- Washington Post: Behind Fiery Attack on OpenAI's Altman, a Growing Divide Over AI

- Guardian: China Now the 'Good Guy' on AI as Trump Takes 'Wild West' Approach, MPs Told

- Guardian: NAACP Lawsuit Accuses xAI of Polluting Black Neighborhoods Near Memphis

Scientific & Medical Acceleration

- Nature: Autonomous Closed-Loop Framework for Reproducible Perovskite Solar Cells

- Nature: Polyclonal Selection of Immune Checkpoint Mutations in Thyroid Autoimmunity

- Nature: Imaging Interface-Controlled Bulk Oxygen Spillover

- Nature: Why More Fossil Fuels Won't Fix the Iran Energy Crisis

Economics & Labor Transformation

- Guardian: Bosses Say AI Boosts Productivity - Workers Say They're Drowning in 'Workslop'

- Wired: The Deepfake Nudes Crisis in Schools Is Much Worse Than You Thought

- Wired: AI Slop Is Making the Internet Fake-Happy

Infrastructure & Engineering Transitions

- Canary Media: Georgia Power Will Now Let Data Centers Bring Their Own Clean Energy

- Canary Media: Stegra Lands Funding to Complete World's First Major Green-Steel Mill

- Canary Media: Vermont's First Neighborhood Geothermal Project Prepares to Break Ground

- Utility Dive: FERC Approves Market Rules for Champlain Hudson Transmission Project

- Utility Dive: Maryland General Assembly Passes Rate Relief Measure

- Utility Dive: Distributed Batteries Get Legislative, Utility Lift in California

- Electrek: Global EV Sales Hit 4M in Q1 2026, but Growth Is Uneven

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.