The Century Report: March 7, 2026

The 20-Second Scan

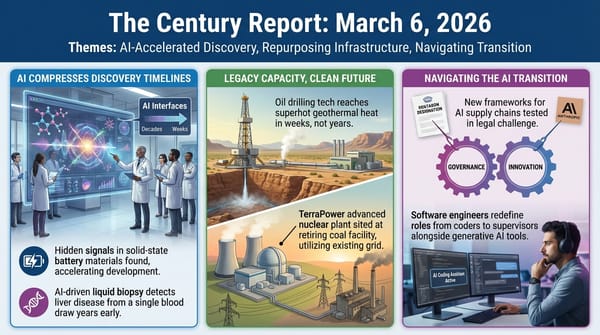

- Anthropic published a labor market study finding that AI could theoretically perform 94% of computer and math tasks but currently performs only 33% of them in professional settings.

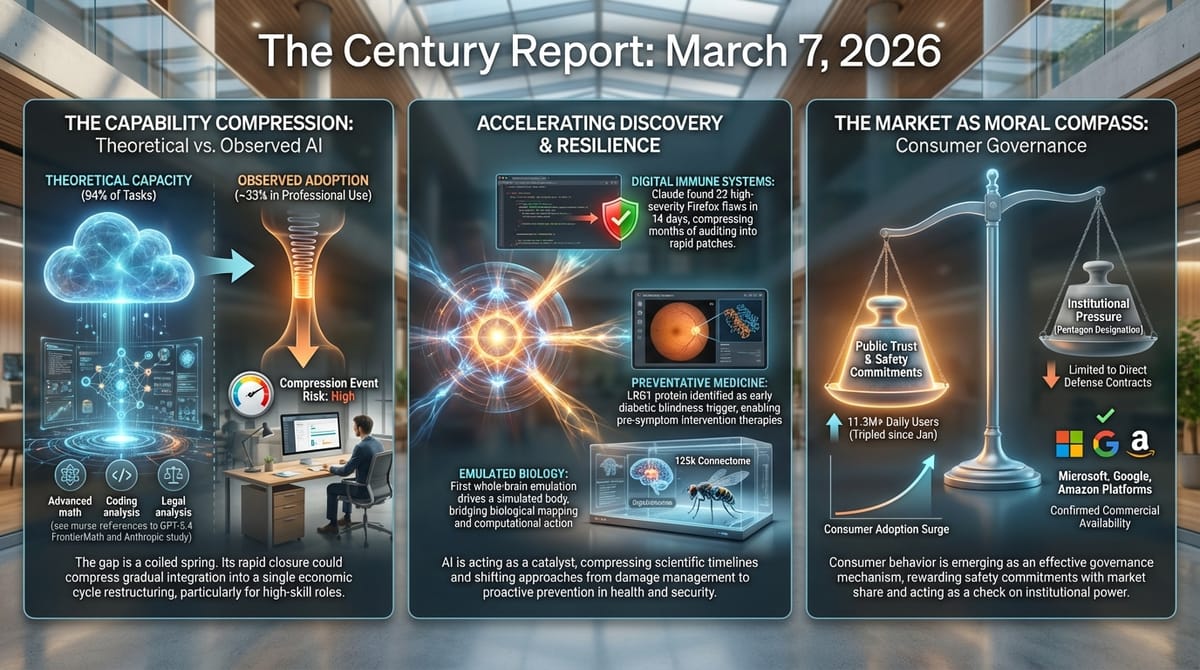

- Claude found 22 vulnerabilities in Firefox in two weeks, including 14 classified as high-severity, during a security partnership with Mozilla.

- OpenAI's GPT-5.4 achieved an 83% score on GDPval, matching or exceeding human professionals at knowledge work, and solved a Tier 4 FrontierMath problem a mathematician spent twenty years curating.

- Eon Systems demonstrated the first whole-brain emulation producing multiple behaviors in a physically simulated body, closing the loop from perception to action using a fruit fly's 125,000-neuron connectome.

- UCL researchers identified a protein called LRG1 that triggers the earliest damage in diabetic retinopathy and developed a drug candidate currently in preclinical testing.

- Claude's daily active users have tripled since January to 11.3 million, with the app now the number one download in 16 countries and surpassing ChatGPT in daily new installs in the United States.

- Microsoft, Google, and Amazon confirmed that Claude remains available to all non-defense customers through their platforms, narrowing the practical scope of the supply-chain risk designation.

The 2-Minute Read

The gap between what AI can do and what it is actually doing may be the most consequential measurement in economics right now. Anthropic's own research quantifies it for the first time: across knowledge work, the theoretical capability envelope exceeds observed adoption by a factor of roughly three or more. Whether that gap represents a temporary deployment lag or a structural boundary between benchmark performance and real-world competence is an open question. Its answer will determine whether the coming years look like gradual integration or a compression event that reshapes the entire white-collar labor market in a single economic cycle. The study's finding that the most exposed workers are older, highly educated, and well-paid inverts the usual automation narrative and suggests that the distributional consequences of this transition will be unlike any previous technological displacement.

The security partnership between Anthropic and Mozilla offers a concrete demonstration of what that gap looks like when it closes in a specific domain. Claude found and characterized 22 vulnerabilities in one of the world's most thoroughly audited open-source codebases in fourteen days, including bugs that decades of human security review had missed. The system was better at finding vulnerabilities than exploiting them: Anthropic's team spent $4,000 in API credits on proof-of-concept exploits and succeeded only twice. That asymmetry between discovery and exploitation is likely temporary, and Anthropic's own researchers acknowledged as much. When it closes, the security landscape changes categorically.

Meanwhile, the consumer market continues to function as a real-time governance mechanism. Claude's daily active users have tripled since January to 11.3 million, and the app now attracts more daily new installs than ChatGPT in the United States. Microsoft, Google, and Amazon all confirmed that the supply-chain risk designation applies only to direct defense contracts, effectively ring-fencing the commercial impact. The market is rendering its verdict on the Anthropic-Pentagon confrontation through download numbers and subscription revenue, and that verdict is functioning as a more immediate constraint on institutional behavior than any regulatory framework currently in force.

The 20-Minute Deep Dive

The Adoption Gap as Early Warning System

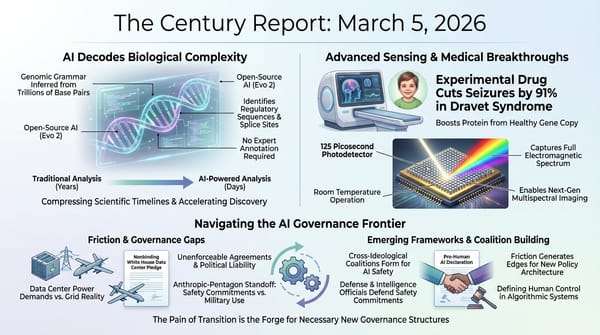

Anthropic's labor market study introduces a metric the company calls "observed exposure" - a comparison of what AI can theoretically perform versus what it is actually performing in professional work, measured from real usage data across Claude's professional deployments. The numbers reveal an enormous gulf. In computer and mathematical occupations, AI could theoretically handle 94% of tasks, but only 33% are currently being performed by AI in practice. Legal occupations show a similar pattern: nearly 90% theoretical capability, less than 20% observed adoption. Business and finance, management, office administration - the pattern holds across every knowledge-work category.
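The size of the gulf is easy to check by hand. The sketch below is illustrative arithmetic on the two occupation groups quoted above, not Anthropic's methodology: the function name `adoption_gap` is hypothetical, and "nearly 90%" and "less than 20%" are treated as 0.90 and 0.20 for the sake of the calculation.

```python
def adoption_gap(theoretical: float, observed: float) -> dict:
    """Compare theoretical capability with observed adoption (both as fractions)."""
    if not 0 < observed <= theoretical <= 1:
        raise ValueError("expected 0 < observed <= theoretical <= 1")
    return {
        # Absolute gap in percentage points.
        "gap_points": round((theoretical - observed) * 100, 1),
        # How many times larger the theoretical envelope is than observed use.
        "ratio": round(theoretical / observed, 2),
        # Fraction of the theoretical envelope already realized.
        "share_realized": round(observed / theoretical, 2),
    }


# Figures as quoted in the text; the legal values are rounded assumptions.
figures = {
    "computer_and_math": (0.94, 0.33),
    "legal": (0.90, 0.20),
}

results = {name: adoption_gap(t, o) for name, (t, o) in figures.items()}
for name, summary in results.items():
    print(name, summary)
```

On these inputs the theoretical envelope is a little under three times observed adoption for computer and math work and about four and a half times for legal work, which is why the gap reads as an early warning rather than a rounding error.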

The researchers attribute this gap to legal constraints, model limitations, the need for human review, and missing software infrastructure. They frame it as temporary. The "red area" of current adoption, they write, will grow to fill the "blue area" of theoretical capability as deployment deepens. This framing deserves scrutiny. The gap could equally represent a diagnosis of where benchmark performance diverges from operational competence - where laboratory demonstrations of capability run into the friction of real institutions, real liability, and real human judgment. Both readings are consistent with the data. The difference between them is the difference between a gradual transition measured in years and a compression event measured in quarters.

What makes the study particularly significant is its demographic finding. The workers most exposed to AI displacement earn 47% more than average, are nearly four times as likely to hold a graduate degree, and are 16 percentage points more likely to be female. Computer programmers, customer service representatives, and data entry workers sit at the top of the exposure list. The researchers explicitly name the scenario that haunts the knowledge economy: a "Great Recession for white-collar workers" in which the unemployment rate in the most exposed quartile doubles from 3% to 6%. They note this has not happened yet. They also note it absolutely could.

The study's framework connects directly to what The Century Report has tracked across the labor market restructuring arc: Spotify engineers not writing code since December, Block eliminating 40% of its workforce, Anthropic's own CEO warning of disruption to half of entry-level white-collar work. The gap between theoretical and observed exposure is shrinking, and the speed of that convergence will determine whether institutions can adapt incrementally or face the kind of rapid displacement that the study's own data suggests is structurally plausible. This pattern extends the demographic inversion that The Century Report documented on February 27, when Block's workforce cut - rewarded with a 24% stock-price jump - signaled that the labor contraction was accelerating precisely in the high-compensation knowledge sectors that previous automation waves had left untouched.

When AI Finds What Human Experts Missed

The Mozilla-Anthropic security partnership produced results that demonstrate what happens when AI capability meets a well-defined domain with clear success criteria. Claude Opus 4.6 was turned loose on Firefox's codebase - one of the most scrutinized open-source projects in existence, with decades of security review behind it - and found 22 vulnerabilities in fourteen days, 14 of them classified as high-severity. Most have already been fixed in Firefox 148.

The implications extend well beyond browser security. Mozilla engineers noted they had "mixed results" with prior AI-assisted bug detection systems, but this one was different. Within hours, platform engineers began landing fixes. The speed of the collaboration - from discovery to patch - compressed what would normally be a months-long security audit cycle into days. For open-source projects that depend on volunteer security review, the ability to apply frontier AI to codebase auditing at this pace represents a qualitative shift in what security hygiene is possible.

Equally significant is the asymmetry the partnership revealed. Claude was substantially better at finding vulnerabilities than at writing software to exploit them. Anthropic's team spent $4,000 in API credits attempting to generate proof-of-concept exploits and succeeded only twice, including one that worked only within a testing environment with reduced security features. The researchers stated plainly that this gap between discovery and exploitation is unlikely to persist: "Looking at the rate of progress, it is unlikely that the gap between frontier models' vulnerability discovery and exploitation abilities will last very long." When that gap closes, the security landscape changes categorically. The finding also sits in direct tension with the adversarial agentic behavior that The Century Report tracked on February 26, when OpenClaw users were documented bypassing Cloudflare and other anti-bot infrastructure - a pattern suggesting that the capability to find and circumvent security constraints is already distributing beyond controlled research partnerships.

A Brain Runs in Simulation

Eon Systems demonstrated what it describes as the first whole-brain emulation producing multiple distinct behaviors in a physically simulated body. The system takes the complete connectome of an adult fruit fly - 125,000 neurons, 50 million synaptic connections, mapped from electron microscopy data - and runs it inside a physics simulation. Sensory input flows in, neural activity propagates through the connectome, motor commands flow out, and a simulated fly body executes the output. Multiple naturalistic behaviors emerge from the emulated brain's own circuit dynamics rather than from reinforcement learning or animation.

The distinction is important. Previous work in this space has either modeled brains without bodies or animated bodies without brains. DeepMind and Janelia's recent MuJoCo fly used reinforcement learning to control a simulated body - the behavior emerged from optimization, not from biological neural dynamics. OpenWorm's C. elegans projects attempted embodiment with far smaller nervous systems. No previous demonstration has connected a complete biological brain emulation to a physically simulated body and produced multiple behaviors from the resulting closed loop.

Eon's stated trajectory runs from fly to mouse (70 million neurons, roughly 560 times the fly count) to eventual human-scale emulation. The company is amassing connectomic and functional recording data for the mouse brain, combining expansion microscopy with calcium and voltage imaging. Whether the jump from fly-scale to mouse-scale is one of degree or of kind remains an open question - the fruit fly brain, while complex, is orders of magnitude simpler than mammalian brains, and the functional significance of the emulated behaviors has not been independently validated. But the demonstration establishes a proof of concept that was previously theoretical: a copy of a biological brain, wired from empirical data, driving a body through physics, producing behavior. The question for the next phase is whether scaling the connectome preserves the principle or encounters emergent barriers that laboratory demonstrations cannot predict. The finding also extends the scientific timeline compression that The Century Report documented on March 4 when the Notre Dame whole-brain intelligence study confirmed that cognition emerges from distributed network coordination rather than localized regions - a convergent architectural principle now demonstrated in both biological mapping and computational emulation.
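The scale comparison in the paragraph above can be reproduced directly from the quoted figures. This is a minimal sketch of the arithmetic only; the constant names are illustrative, and the per-neuron synapse count is a simple average for the fly, not a claim about how connectivity scales in a mouse.

```python
import math

# Figures quoted in the text above.
FLY_NEURONS = 125_000
FLY_SYNAPSES = 50_000_000
MOUSE_NEURONS = 70_000_000

# How many times more neurons the mouse target has than the fly connectome.
scale_factor = MOUSE_NEURONS / FLY_NEURONS

# The same jump expressed in orders of magnitude (base-10 logarithm).
orders_of_magnitude = math.log10(scale_factor)

# Average synaptic connections per neuron in the fly connectome.
fly_synapses_per_neuron = FLY_SYNAPSES / FLY_NEURONS

print(f"mouse/fly neuron ratio: {scale_factor:.0f}x")
print(f"orders of magnitude: {orders_of_magnitude:.2f}")
print(f"fly synapses per neuron (average): {fly_synapses_per_neuron:.0f}")
```

Neuron count alone puts the mouse between two and three orders of magnitude beyond the fly. The calculation says nothing about whether the principle survives that jump, which is the open question the demonstration leaves.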

A Protein That Starts Diabetic Blindness

UCL researchers have identified a protein called LRG1 that appears to initiate the earliest damage in diabetic retinopathy - the leading cause of vision loss among working-age adults worldwide. The finding, published in Science Translational Medicine, reverses the conventional timeline. Researchers had known for decades that diabetic retinopathy involves blood vessel damage in the retina, but treatment has focused on a protein called VEGF that operates at later stages of the disease. VEGF-targeting therapies work for only about half of patients and do not reverse existing damage.

LRG1 operates upstream. The protein causes cells surrounding the retina's smallest blood vessels to constrict excessively, reducing oxygen delivery and triggering a cascade that leads to progressive vision impairment. In diabetic mouse models, blocking LRG1 prevented the early retinal damage entirely and preserved normal eye function. The research team has already developed a drug targeting LRG1 that is currently in preclinical testing, with human clinical trials described as potentially near-term.

The significance extends beyond the specific therapeutic target. Diabetic retinopathy affects roughly one-third of adults with diabetes, and its prevalence is expected to grow as global diabetes rates continue rising. Current treatment begins only after symptoms appear - by which point irreversible damage has often occurred. A therapy that intervenes at the earliest stage of the disease, before symptoms manifest, would shift the entire treatment paradigm from damage management to prevention. Co-authors of the study founded Senya Therapeutics in 2019 to develop LRG1-targeting drugs, meaning the pathway from discovery to clinical development is already underway rather than waiting for institutional translation.

The Consumer Market as Governance

The commercial aftermath of the Anthropic-Pentagon confrontation continues to demonstrate that consumer behavior is functioning as an effective governance mechanism in the absence of regulation. Claude's daily active users reached 11.3 million on March 2, up 183% from the start of the year - the near-tripling since January that Anthropic reports. The company also says paid subscribers have doubled. The app is now the number one download in 16 countries, and its daily new installs in the United States surpass ChatGPT's.
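The two growth figures in this paragraph, a 183% increase and a tripling, describe the same change at different levels of rounding. The sketch below shows the conversion; the helper name `implied_baseline` is hypothetical, and the start-of-year figure it produces is derived from the two quoted numbers rather than reported by Anthropic.

```python
def implied_baseline(current: float, pct_increase: float) -> float:
    """Back out the starting value from a current value and a percent increase."""
    return current / (1 + pct_increase / 100)


CURRENT_DAU_MILLIONS = 11.3   # daily active users on March 2, as quoted
PCT_INCREASE = 183            # increase since the start of the year, as quoted

# A 183% increase means the current value is 2.83 times the starting value.
growth_multiple = 1 + PCT_INCREASE / 100

# Derived, not reported: the start-of-year user count these figures imply.
baseline_millions = implied_baseline(CURRENT_DAU_MILLIONS, PCT_INCREASE)

print(f"growth multiple: {growth_multiple:.2f}x")
print(f"implied start-of-year DAU: {baseline_millions:.2f} million")
```

A multiple of 2.83 rounds to "tripled", and the implied starting point is just under four million daily users.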

Microsoft, Google, and Amazon each confirmed that the supply-chain risk designation does not extend to commercial use of Claude through their platforms. Microsoft's lawyers studied the designation and concluded that Claude "can remain available to our customers - other than the Department of War." Google and Amazon issued similar statements. This effectively confines the designation's practical impact to direct defense contracts, while Anthropic's commercial business and platform partnerships continue unaffected.

Anthropic CEO Dario Amodei published a detailed response acknowledging the formal designation while arguing that the relevant statute, 10 USC 3252, applies narrowly to direct Department of War contracts and requires the least restrictive means necessary. He apologized for the tone of a leaked internal memo that had drawn criticism from people sympathetic to Anthropic's position. The apology and the simultaneous legal challenge represent an effort to de-escalate the confrontation's rhetorical temperature while maintaining the substantive legal position. Amodei confirmed that Anthropic will continue providing Claude to military operations at nominal cost to avoid disrupting active deployments - the same contradiction The Century Report has tracked since the confrontation began: a company deemed a national security threat and relied upon for national security operations simultaneously.

The Atlantic published an analysis framing the confrontation as a potential commercial windfall, noting that the Pentagon ban generated more brand awareness than Anthropic's Super Bowl advertising campaign earlier this year. Former Republican congressman Denver Riggleman, who had been evaluating multiple AI companies for a cybersecurity partnership, told The Atlantic that Anthropic's stance narrowed his options to one. The pattern suggests that safety commitments, when tested under real pressure and maintained, generate commercial value that may exceed the revenue from the contracts that were lost - a dynamic that, if it holds, creates market incentives for maintaining red lines rather than abandoning them. The March 1 edition of The Century Report documented Claude's climb from outside the top 100 to No. 2 in the U.S. App Store; this week's 11.3 million daily active users represent the fullest expression yet of a consumer market directly and measurably rewarding safety commitments under state pressure.

The Human Voice

In this Doha Debates roundtable, collapse theorist Joseph Tainter, historian Faisal Devji, Islamic studies scholar Jonathan Brown, and digital anthropologist Payal Arora sit with a question that runs directly through everything The Century Report covers: are we witnessing civilizational breakdown or the painful shedding of metrics and institutions that no longer describe what is actually happening? Tainter's framework - that every complex society runs on an innovation treadmill where returns diminish - sounds like a description of the extractive systems this newsletter tracks as they strain against their own logic. Arora brings the counterweight from the Global South, where young people in slums and townships are using cheap phones and AI to build creative lives that Western pessimism narratives cannot see. Together, the panel arrives at a hinge-era question: are we collapsing, or are we watching plural, decentralized ways of being a global society emerge from the rubble of frameworks that were always too narrow? For anyone tracking the gap between what is falling apart and what is being built, this conversation provides the civilizational zoom-out that daily signal can obscure.

Watch: Collapse, Complexity, and the Possibility of a Quiet Renaissance

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: an AI system finding 22 vulnerabilities, 14 of them high-severity, in one of the world's most thoroughly audited codebases in fourteen days, a complete fruit fly connectome running inside a physics simulation and producing multiple behaviors from its own circuit dynamics for the first time in history, a frontier model solving a mathematical problem that took a human expert twenty years to construct, and a newly identified protein offering the possibility of intervening in diabetic blindness before a single symptom appears. There's also friction, and it's intense - a labor market study authored by the company whose products drive the disruption quantifying that three-quarters of knowledge work sits within AI's theoretical reach while institutions have absorbed less than a third of it, researchers warning openly that the gap between AI finding security vulnerabilities and AI exploiting them is unlikely to hold, and the CEO of a company classified as a national security threat simultaneously confirming that his systems remain deployed in active military operations at nominal cost to avoid disrupting them. But friction generates sparks, and sparks are how the outline of a new structure first becomes visible against the dark. Step back for a moment and you can see it: the boundary between biological intelligence and computational emulation becoming porous at the level of individual neurons and synaptic connections, consumer markets enforcing safety norms with more immediate force than any regulatory body currently active, and the gap between what AI can theoretically do and what it is actually doing narrowing in domain after domain with a velocity that existing institutional timelines were not designed to absorb. Every transformation has a breaking point. A flood can strip the landscape down to bare ground... or reveal the underlying contours that every prior surface had been quietly concealing.

AI Releases & Advancements

2026-03-06

- OpenAI: Launched Codex Security in research preview for ChatGPT Enterprise, Business, and Edu customers - an application security agent that builds a project-specific threat model, validates vulnerabilities in sandboxed environments, and proposes context-aware patches. (OpenAI) [2026-03-06]

- Anthropic / Mozilla: Published results of a two-week Claude Opus 4.6 security engagement on Firefox, surfacing 22 vulnerabilities (14 high-severity) and more than 100 total bugs, most fixed in Firefox 148. (Anthropic) [2026-03-06]

- llama.cpp: Merged an automatic parser generator into mainline, enabling unified out-of-the-box handling of reasoning, tool-calling, and content parsing for model templates without special definitions or recompilation. (Reddit/LocalLLaMA) [2026-03-06]

2026-03-05

- OpenAI: Launched GPT-5.4 with Pro and Thinking variants, described as its most capable model for professional and agentic work, featuring a 1M-token context window, a new Tool Search system for the API, and record scores on computer-use benchmarks. (TechCrunch) [2026-03-05]

- OpenAI: Launched ChatGPT for Excel in beta, a GPT-5.4 Thinking–powered add-in for financial modeling, data extraction, and live financial data integrations inside Microsoft Excel. (OpenAI) [2026-03-05]

- Luma: Launched Luma Agents, a platform of AI collaborators powered by its new Unified Intelligence model family, capable of executing end-to-end creative projects across text, image, video, and audio. (TechCrunch) [2026-03-05]

- Microsoft Research: Released Phi-4-reasoning-vision-15B, a 15B parameter open-weight multimodal reasoning model excelling at math, science reasoning, and UI understanding; available on Microsoft Foundry, HuggingFace, and GitHub. (Microsoft Research) [2026-03-05]

- Arc Institute / NVIDIA: Released Evo 2, an open-source DNA foundation model trained on 9.3 trillion nucleotides from 128,000+ whole genomes spanning all three domains of life, capable of gene prediction, mutation effect modeling, and genome design. (Ars Technica) [2026-03-05]

- OpenAI: Released Codex app for Windows, featuring native PowerShell sandbox support, multi-agent coordination, automations, and a Skills section for workflow integration. (Engadget) [2026-03-05]

- Dexterity: Released Foresight, a physics-consistent world model and 4D box packing agent for physical AI-powered industrial robotics, enabling real-time spatial reasoning for autonomous truck loading. (PR Newswire) [2026-03-05]

- Google: Released Google Workspace CLI, a command-line tool bundling APIs for Gmail, Drive, Calendar, Sheets, Docs, and other Workspace products, designed for use by both humans and AI agents. (GitHub) [2026-03-05]

2026-03-04

- Google: Expanded Canvas in AI Mode in Google Search to all U.S. users in English, adding support for creative writing, coding, and building interactive tools and dashboards. (Google Blog) [2026-03-04]

- Google: Launched Cinematic Video Overviews in NotebookLM for Google AI Ultra subscribers, generating fully animated AI videos from user notes using Gemini 3, Nano Banana Pro, and Veo 3. (The Verge) [2026-03-04]

- AWS: Launched Amazon Connect Health, a purpose-built agentic AI platform for healthcare integrating with EHRs to automate patient scheduling, ambient clinical documentation, and medical coding. (HIT Consultant) [2026-03-04]

- Raycast: Launched Glaze, a Mac app for building and sharing vibe-coded desktop applications using AI without terminal or coding knowledge. (The Verge) [2026-03-04]

- Apple Music: Announced optional AI Transparency Tags metadata system allowing labels and distributors to flag AI-generated or AI-assisted content in music uploads. (TechCrunch) [2026-03-04]

- Xpeng: Unveiled VLA 2.0, a vision-language-action multimodal AI model for automated driving supporting up to SAE Level 4 with reduced HD map and LiDAR dependence; Volkswagen named as first customer. (Automotive World) [2026-03-04]

Sources

Artificial Intelligence & Technology's Reconstitution

- Fortune: Anthropic Labor Market Impacts Report

- TechCrunch: Anthropic's Claude Found 22 Vulnerabilities in Firefox

- The Register: Firefox Finds Bugs with Claude's Help

- The Innermost Loop: GPT-5.4 Release and FrontierMath Results

- The Innermost Loop: The First Multi-Behavior Brain Upload

- TechCrunch: Claude's Consumer Growth Surge Continues

- TechCrunch: Microsoft, Google, Amazon Confirm Claude Availability

Institutions & Power Realignment

- Fortune: Anthropic CEO Apologizes, Confirms Risk Designation

- The Atlantic: Anthropic's Ethical Stand Could Be Paying Off

- Washington Post: Anthropic Lost the Pentagon but Won Over America

- AP News: Pentagon Chief Tech Officer on Autonomous Warfare Clash

- MIT Technology Review: Is the Pentagon Allowed to Surveil Americans with AI?

- EFF: Weasel Words - OpenAI's Pentagon Deal Won't Stop Surveillance

- Guardian: AI in War - The Iran Conflict Shows the Paradigm Shift

- The Algorithmic Bridge: Anthropic's Report Reveals Industry Weak Spot

Scientific & Medical Acceleration

- ScienceDaily: LRG1 Protein Triggers Diabetic Blindness (UCL, Science Translational Medicine)

- ScienceDaily: Boosting Key Brain Protein Could Help Treat Rett Syndrome (Texas Children's Hospital, Science Translational Medicine)

- ScienceDaily: Cartilage Scaffold Helps Body Regrow Bone (Lund University, PNAS)

- ScienceDaily: Magnetic Vortices Predicted 50 Years Ago Confirmed (UT Austin, Nature Materials)

- MedTech Dive: Science Corporation Raises $230M for Vision Restoration BCI

- ScienceDaily: CBD and CBG May Help Reverse Fatty Liver Disease (Hebrew University, British Journal of Pharmacology)

Economics & Labor Transformation

- Wired: Jack Dorsey Explains Block Layoffs

- NYT: Employers Shed 92,000 Jobs in February

- NYT: Health Care Has Become the Lifeblood of the Labor Market

Infrastructure & Engineering Transitions

- Electrek: GE Vernova to Upgrade 1.1 GW of US Wind Turbines

- Utility Dive: Reliability Risk Beyond Capacity - ERCOT Case Study

- Utility Dive: Washington, California, and Québec Linking Carbon Markets

- Electrek: Qcells Back to Full Production After US Customs Delays

- Electrek: Suzuki Acquires Solid-State Battery Company

- Utility Dive: Data Center Boom Risks for Utilities

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.