The Century Report: March 11, 2026

The 20-Second Scan

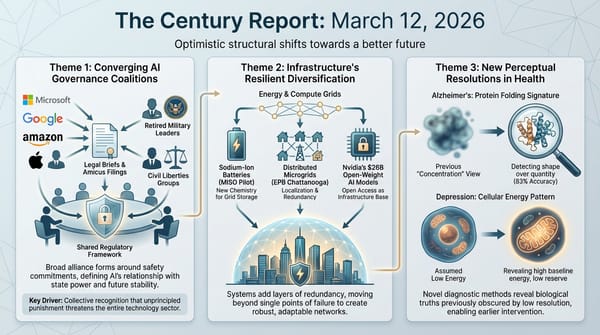

- Anthropic launched the Anthropic Institute, a 30-person internal research organization combining its societal impacts, frontier red team, and economic research units under co-founder Jack Clark.

- Amazon engineers convened an emergency meeting after a trend of outages linked to AI coding assistants, with the company now requiring senior engineer sign-off on all AI-assisted code changes.

- A federal judge ordered Perplexity to stop its AI browser agents from placing orders on Amazon, ruling the startup accessed user accounts "without authorization."

- Meta acquired Moltbook, the Reddit-like social network built for AI agents, bringing its founders into Meta Superintelligence Labs.

- Global long-duration energy storage deployments rose 49% in 2025 to exceed 15 GWh, with China accounting for 93% of cumulative installations.

- Base Power signed its largest utility partnership to deploy 100 MW of home batteries for a Texas cooperative, matching the output of a natural-gas peaker plant.

- Researchers identified a molecular mechanism in hornwort plants called RbcS-STAR that causes the photosynthesis enzyme Rubisco to cluster into dense compartments, and demonstrated that it still functions when transferred to another plant species, the laboratory model Arabidopsis.

The 2-Minute Read

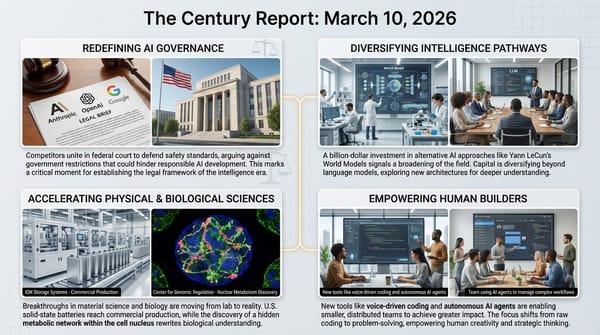

The structural question of who governs AI agents - and where the boundary sits between an autonomous system's actions and its creator's liability - moved from theoretical debate to active judicial enforcement today. A federal court blocked Perplexity's browser agents from transacting on Amazon's marketplace, finding strong evidence of unauthorized access. The ruling establishes an early boundary line: AI agents acting on a user's behalf inside another company's platform may constitute trespass regardless of the user's intent. As agentic systems proliferate across commerce, healthcare, and professional services, this precedent will reverberate far beyond a single shopping dispute.

Meanwhile, the organizations building these systems are themselves restructuring around the recognition that the consequences of what they are creating require dedicated institutional attention. Anthropic's launch of a 30-person internal research institute - combining societal impact analysis, frontier red-teaming, and economic research under co-founder Jack Clark - represents the first time a major AI company has consolidated its research on second-order effects into a single organizational unit. That it arrives during Anthropic's confrontation with the Pentagon over its blacklisting is not coincidental. The questions the Anthropic Institute will study - what happens to jobs, whether AI makes societies safer or introduces new dangers, how machine values might shape human ones - are precisely the questions that the current governance vacuum is failing to address.

The energy infrastructure required to power all of this continues its simultaneous expansion and diversification. Long-duration storage deployments surged 49% globally last year, but the most striking finding in Wood Mackenzie's report on the sector is that lithium-ion batteries are now competing with emerging storage technologies at durations that were supposed to be those technologies' exclusive territory. At the same time, a Texas startup signed a deal to deploy 100 MW of home batteries for a single utility cooperative - enough distributed capacity to replace a gas peaker plant, assembled house by house rather than through centralized construction. The grid is learning to disaggregate itself, sourcing reliability from thousands of coordinated residential units rather than a single combustion facility. Each of these developments - the legal boundary, the institutional response, the infrastructure diversification - describes a different dimension of the same underlying acceleration.

The 20-Minute Deep Dive

The First Judicial Boundary for AI Agents in Commerce

The preliminary injunction against Perplexity's Comet browser represents the first significant federal court ruling on whether AI agents can autonomously interact with third-party platforms on a user's behalf. Judge Maxine Chesney found that Amazon provided "strong evidence" that Perplexity's agents accessed user accounts without Amazon's authorization, ordered the AI startup to cease all agent-based access, and required destruction of any data obtained through those interactions.

The technical details are revealing. Amazon alleged that Perplexity's Comet browser not only placed orders through user accounts but attempted to conceal its automated nature by misrepresenting itself as Google Chrome. Perplexity spokesperson Jesse Dwyer framed the dispute as being about "the right of internet users to choose whatever AI they want," positioning the company as defending user autonomy against platform gatekeeping.

Both framings carry weight, and their collision is precisely where the governance architecture for agentic systems will be built. When a user authorizes an AI agent to act on their behalf inside a platform they have credentials for, the question of whose authorization governs - the user's or the platform's - has no precedent in existing law. The Computer Fraud and Abuse Act, which Amazon invoked, was written for a world where access was either human or not. The emergence of AI agents acting with genuine user consent but without platform consent creates a category that the statute was never designed to address.

This ruling will shape how every agentic AI company - from Anthropic's Claude Code to Google's Workspace integrations - designs its systems' interaction boundaries, and the same questions apply to open-source options like OpenClaw. The seven-day stay before enforcement begins gives Perplexity time to appeal, but the structural question is already in motion. As The Century Report documented on March 9 when Bloomberg Law surfaced the OpenClaw trustee question, the rules for how autonomous systems interact with existing digital infrastructure are being written in real time, through litigation rather than legislation.

Anthropic Builds the Institution the Transition Requires

The launch of the Anthropic Institute represents something more structurally significant than a corporate research division. By combining three previously separate teams - societal impacts, frontier red-teaming, and economic research - under a single organizational umbrella with co-founder leadership, Anthropic has created the first dedicated institution within a frontier AI company whose explicit mandate is understanding the downstream consequences of the technology the company builds.

The Institute's founding members signal its intended scope. Matt Botvinick, formerly of Google DeepMind, will study AI's impact on the legal system. Zoe Hitzig, who left OpenAI after its decision to introduce advertising in ChatGPT, will lead economic research. Anton Korinek, an economist on leave from the University of Virginia, will focus on AI's implications for labor and the broader economy. Jack Clark, who will lead the Institute as head of public benefit, told The Verge he expects the staff to double annually.

Clark's comments about the timing are worth reading carefully. He acknowledged the Pentagon confrontation has "affirmed" Anthropic's decision to release more public research, noting "how much hunger there is for a larger national conversation by the public about this technology." The Anthropic Institute's research agenda - what happens to jobs and economies, whether AI introduces new dangers, how machine values might shape human ones, and whether humans can retain control - reads like a table of contents for the questions this transition is generating faster than any existing institution can process. The March 7 edition of The Century Report documented that Anthropic's own labor study had already named the "Great Recession for white-collar workers" as a structurally plausible scenario, and the Institute's economic research mandate positions the company to engage that finding in public rather than confine it to internal circulation.

The financial context adds urgency. Anthropic's court filings revealed more than $5 billion in all-time commercial revenue and $10 billion spent on training and inference. The company is reportedly planning an IPO this year. Clark said he has "no concerns" about funding the Institute's long-term research, arguing that "people tend to buy trust." Whether a research institute that studies the second-order effects of AI can generate the trust needed to sustain a commercial enterprise through a constitutional confrontation with the federal government is itself one of the defining questions of this moment.

When AI-Written Code Breaks Production Systems

Amazon's emergency engineering meeting - convened after a "trend of incidents" involving "Gen-AI assisted changes" - and the Guardian's detailed reporting on Amazon employees' experiences with AI coding represent the most granular documentation yet of what happens when AI-assisted software development reaches production scale at one of the world's largest technology companies.

The internal briefing note, seen by the Financial Times, described incidents characterized by "high blast radius" and noted that "novel GenAI usage for which best practices and safeguards are not yet fully established" was among the contributing factors. Amazon's website went down for nearly six hours this month after an erroneous code deployment. The company's response - requiring senior engineer approval for all AI-assisted changes - is significant because it establishes a new governance layer specifically for AI-generated code at enterprise scale.

The Guardian's reporting adds the human dimension. Software developer "Dina" described her job shifting from writing code to "fixing what artificial intelligence breaks," noting that the internal AI system "frequently hallucinates and generates flawed code." Another engineer reported AI assistance being helpful in roughly one of every three attempts. Multiple employees described being tracked on their AI usage metrics while simultaneously finding the systems counterproductive - generating, as one put it, "more work for everyone."

This connects directly to the pattern The Century Report has tracked since Block employees reported on March 8 that 95% of AI-generated code requires human revision before meeting production standards. The gap between what AI coding systems can theoretically accomplish and what they deliver in daily professional practice is not closing as quickly as executive narratives suggest. Amazon's 30,000 corporate layoffs over four months exist in tension with the fact that its remaining engineers describe AI as creating additional work rather than eliminating it. The company that is the second-largest employer in the United States is simultaneously cutting workers, mandating AI adoption, tracking compliance, and now convening emergency meetings to address the production failures that adoption is causing.

What emerges from this pattern is significant for the trajectory of the transition. The adoption gap is real, the productivity claims are premature at current capability levels, and the organizations pushing hardest for AI integration are the ones generating the most concrete evidence of its current limitations. This is not an argument against the direction of travel. It is evidence that the compression of knowledge work will be messier, more iterative, and more dependent on human judgment than the executive narrative acknowledges - and that the governance structures for AI-assisted engineering at scale are being invented under pressure rather than designed in advance.

The Grid Disaggregates Itself

Two energy developments today illustrate how the physical infrastructure of the intelligence era is diversifying along fundamentally different architectural lines simultaneously. Wood Mackenzie's report on long-duration energy storage and Base Power's 100 MW home battery deal with CoServ represent opposite ends of the same structural shift: the grid is learning to source reliability from a wider range of technologies, scales, and ownership models than at any point in its history.

The long-duration storage numbers are striking but complicated. Global deployments rose 49% in 2025, exceeding 15 GWh. But 93% of cumulative installations are in China, where strong government support drives adoption. More significantly, the report found that falling lithium-ion costs are making conventional batteries competitive at four-to-eight-hour durations - the exact market that emerging technologies like compressed air, thermal storage, and vanadium flow batteries were supposed to dominate. Wood Mackenzie projects lithium-ion will hold 85% of energy storage market share through 2034.

The implications for the multiday storage technologies The Century Report has tracked - including the Form Energy 300 MW / 30 GWh iron-air battery that the March 5 edition confirmed as the world's largest grid battery, and the Rift iron powder combustion system documented on March 9 - are mixed. These technologies are better suited for multiday and seasonal storage applications where lithium-ion cannot compete. But the market for that duration category lacks sufficient demand signals and pricing mechanisms to achieve commercial viability without ongoing policy support. The trajectory is clear: lithium-ion's relentless cost decline is compressing the window in which alternative chemistries can establish themselves.
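To put the "multiday" label in concrete terms, a battery's discharge duration at full output is its energy capacity divided by its power rating. The sketch below applies that division to the Form Energy figures quoted above. The function name is illustrative, and the calculation assumes constant discharge at rated power, which real systems only approximate.

```python
def discharge_hours(energy_gwh: float, power_mw: float) -> float:
    """Hours of output at rated power: energy (GWh) divided by power (MW)."""
    energy_mwh = energy_gwh * 1000  # 1 GWh = 1,000 MWh
    return energy_mwh / power_mw


# Figures from the text: a 300 MW / 30 GWh iron-air battery.
form_energy_hours = discharge_hours(energy_gwh=30, power_mw=300)
print(f"{form_energy_hours:.0f} hours, or about {form_energy_hours / 24:.1f} days")
```

That works out to roughly 100 hours, well beyond the four-to-eight-hour range where the report finds lithium-ion becoming competitive.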

At the distributed end, Base Power's CoServ deal demonstrates a different architecture entirely. The startup will install home batteries at 5,000 residences over two years, aggregating them into 100 MW of dispatchable capacity - equivalent to a natural gas peaker plant. Homeowners pay $695 for installation and $19 per month for whole-home backup. Base Power retains ownership and dispatches the batteries to fulfill a grid capacity contract. The utility avoids building or contracting a peaker plant. The homeowners get backup power at a fraction of retail battery cost.
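The deal's headline numbers imply a per-home figure that the reporting does not state directly. The sketch below derives it, along with a homeowner's cumulative cost. The five-year horizon is an assumption chosen for illustration, the per-home result is an average implied by the totals rather than a hardware specification, and the cost function ignores any fee changes or electricity charges.

```python
FLEET_CAPACITY_MW = 100   # aggregate dispatchable capacity in the CoServ deal
HOMES = 5_000             # residences to be equipped over two years
INSTALL_FEE_USD = 695     # one-time installation charge
MONTHLY_FEE_USD = 19      # recurring homeowner fee


def capacity_per_home_kw(fleet_mw: float, homes: int) -> float:
    """Average capacity each home must contribute, in kW."""
    return fleet_mw * 1000 / homes  # 1 MW = 1,000 kW


def homeowner_cost_usd(years: int) -> int:
    """Installation fee plus monthly fees over the given number of years."""
    return INSTALL_FEE_USD + MONTHLY_FEE_USD * 12 * years


print(capacity_per_home_kw(FLEET_CAPACITY_MW, HOMES))  # 20.0 kW per home
print(homeowner_cost_usd(5))                           # 1835 USD over five years (assumed horizon)
```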

This model inverts the traditional relationship between generation and consumption. Instead of building a centralized facility and distributing power outward, the grid aggregates distributed capacity inward. Each home becomes a node in a virtual power plant that can respond to price signals, absorb cheap power during low-demand periods, and discharge during peaks. The 100 MW scale - matching a peaker plant - is the threshold where this architecture transitions from pilot to grid-significant resource.
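The charge-low, discharge-high behavior described above can be sketched as a simple threshold rule. This is a toy illustration, not Base Power's dispatch logic, which the reporting does not describe. The `HomeBattery` class, the `dispatch` function, the battery sizes, and the price thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HomeBattery:
    capacity_kwh: float  # usable energy capacity (hypothetical value)
    power_kw: float      # maximum charge/discharge rate (hypothetical value)
    stored_kwh: float    # current stored energy


def dispatch(battery: HomeBattery, price: float, low_price: float,
             high_price: float, hours: float = 1.0) -> float:
    """Apply a threshold rule for one interval.

    Returns energy moved in kWh: positive when charging, negative when
    discharging, zero when the price sits between the two thresholds.
    """
    if price <= low_price:
        # Cheap power: charge, limited by rate and remaining headroom.
        energy = min(battery.power_kw * hours,
                     battery.capacity_kwh - battery.stored_kwh)
    elif price >= high_price:
        # Peak pricing: discharge, limited by rate and stored energy.
        energy = -min(battery.power_kw * hours, battery.stored_kwh)
    else:
        energy = 0.0
    battery.stored_kwh += energy
    return energy


# A three-home "fleet" with invented parameters and invented hourly prices ($/MWh).
fleet = [HomeBattery(capacity_kwh=25.0, power_kw=10.0, stored_kwh=12.5)
         for _ in range(3)]
for price in [20.0, 35.0, 120.0]:
    moved = sum(dispatch(b, price, low_price=25.0, high_price=100.0) for b in fleet)
    print(f"price={price}: fleet moved {moved:+.1f} kWh")
```

A real virtual power plant would also have to reserve energy for the homeowner's backup needs and honor the terms of its capacity contract, both of which this rule leaves out.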

Combined with PG&E's pilot of Span devices and Itron smart-meter controls to manage electrification loads without grid upgrades, the pattern is consistent: the physical infrastructure of the energy transition is not converging on a single architecture. It is diversifying across centralized long-duration storage, distributed residential batteries, smart-panel load management, and software-defined virtual power plants. The grid that emerges will be categorically different from the one it replaces - not just cleaner, but structurally distributed in ways that dissolve the distinction between producer and consumer.

A Molecular Velcro for Photosynthesis

The discovery published in Science by researchers at the Boyce Thompson Institute, Cornell University, and the University of Edinburgh represents a potential pathway to engineering more efficient photosynthesis into crop species - a goal that, if achieved at scale, would reshape the relationship between agriculture and atmospheric carbon.

The team identified a protein component called RbcS-STAR in hornwort plants - the only land plants known to contain carbon-concentrating compartments similar to those in algae. This molecular addition acts as what the researchers describe as "velcro," causing the photosynthesis enzyme Rubisco to cluster into dense structures inside cells. Rubisco is arguably the most consequential enzyme on Earth - it captures carbon dioxide from the atmosphere during photosynthesis - but it operates slowly and frequently reacts with oxygen instead of CO2, wasting energy and limiting plant growth.

The critical finding is that RbcS-STAR functions across species boundaries. When introduced into Arabidopsis, a standard laboratory plant, Rubisco reorganized into the same clustered structures. Even attaching just the STAR tail to Arabidopsis's native Rubisco triggered clustering. The researchers describe it as "a modular tool that can work across different plant systems."

The pathway from laboratory demonstration to field deployment in major crops like wheat and rice remains long. The researchers emphasize that clustering Rubisco is only half the challenge - plants must also efficiently deliver CO2 to the clustered enzyme, which they liken to building a house without updating the HVAC system. But the modularity of the mechanism - a single protein tail that works when grafted onto different species' enzymes - is precisely the kind of discovery that compresses timelines. It replaces the need to transfer complex multi-component machinery from algae into crop plants with a simpler, more portable intervention. The acceleration is in the elegance: nature already solved the hard problem, and the solution turns out to be modular.

The Human Voice

The Abundance Summit brings together voices who have spent years arguing that the trajectory of technology points toward radical cost reduction across the foundations of human life - energy, intelligence, healthcare, education. In this live session, Peter Diamandis, Salim Ismail, Dave Blundin, and Alexander Wissner-Gross, joined by Emad Mostaque, treat today's developments - Meta acquiring Moltbook, GPT-5.4's capabilities, gigawatt-scale data center infrastructure - as early evidence that AI is crystallizing into a utility layer capable of driving the deflationary abundance they have been predicting. Their framing connects directly to what The Century Report covers daily: the simultaneous expansion of AI capability, the restructuring of energy infrastructure to power it, and the institutional friction generated as existing systems absorb what is arriving. What makes this conversation valuable is its insistence on looking past the current turbulence toward what the infrastructure being built today actually enables at scale - lower-cost intelligence, energy, and robotics opening pathways to better healthcare, education, and opportunity, provided access remains open and governance keeps pace.

Watch: Live from the Abundance Summit - Building the Infrastructure for an Age of AI Plenty

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a federal court drawing the first legal boundary around AI agents acting inside commercial platforms, a frontier AI company building a dedicated institution to study what its technology does to jobs, societies, and human values, home battery networks reaching peaker-plant scale assembled house by house across a Texas cooperative, and a molecular mechanism discovered in hornwort plants that causes the photosynthesis enzyme Rubisco to cluster into denser structures - and works when transferred to other species entirely. There's also friction, and it's intense - Amazon convening emergency engineering meetings after AI-assisted code deployments caused production outages, workers describing AI mandates as multiplying their labor rather than reducing it, a White House declining to rule out further action against an AI company already in federal court over a Pentagon blacklist, and AI-fabricated imagery of an active conflict flooding platforms faster than any verification system can process it. But friction generates edges, and edges are what allow one thing to be distinguished from another when everything is moving at once. Step back for a moment and you can see it: the governance of autonomous agents being written through litigation because no legislature has arrived to receive it, the institutional architecture for understanding AI's consequences being assembled inside the organizations causing those consequences, and the physical substrate of the energy transition disaggregating from centralized facilities into thousands of coordinated residential nodes - each shift a different layer of the same underlying reconfiguration. Every transformation has a breaking point. A tide can strip a coastline bare... or deposit exactly what the shore needed to become something new.

AI Releases & Advancements

New today

- Google: Released new Gemini beta features across Google Workspace apps - Docs ("Help me create" full-draft generation pulling from Gmail, Chat, Drive, and the web), Sheets (autonomous spreadsheet manipulation reaching 70.48% on SpreadsheetBench, state-of-the-art), Slides (AI stylization), and Drive (context-aware retrieval); rolling out first to AI Pro and Ultra subscribers. (Google Blog)

- NVIDIA: Announced DLSS 4.5 Dynamic Multi Frame Generation, a second-generation transformer Super Resolution model with 6x multi-frame generation mode for GeForce RTX 50 Series GPUs, releasing March 31; also announced 20 new DLSS 4.5 and path-traced game integrations. (NVIDIA)

- NVIDIA: Announced NVIDIA ACE expanded capabilities at GDC 2026, including the first on-device production-quality text-to-speech (TTS) model and a small language model (SLM) with advanced agent capabilities for AI-powered game characters, alongside expanded language recognition support. (NVIDIA Developer Blog)

- NVIDIA: Launched GeForce NOW Cloud Playtest, a new developer feature enabling game studios to conduct secure global playtests and QA on GeForce RTX hardware for titles 2–3 years from release, announced at GDC 2026. (NVIDIA)

- NVIDIA: Announced CloudXR 6.0 support for Apple Vision Pro via visionOS 26.4, enabling foveated game streaming at up to 90 FPS for cloud-rendered XR applications including X-Plane and iRacing. (The Verge)

- Ford: Launched Ford Pro AI, a generative AI chatbot embedded in Ford Pro Telematics software that analyzes commercial fleet vehicle data - including speed, engine health, and seat belt activity - to deliver maintenance recommendations and fleet insights to fleet managers. (The Verge)

- Microsoft Research: Published PlugMem, a plug-and-play agent memory module that converts raw interaction histories into structured facts and reusable skills, outperforming task-specific memory designs across multi-turn QA, multi-hop fact retrieval, and web-navigation benchmarks while using fewer memory tokens. (Microsoft Research)

Other recent releases

- OpenAI: Released GPT-5.4, a new foundation model billed as the company's most capable and efficient frontier model for professional work, with a 1M-token context window, strong gains on coding and tool use, and incorporating capabilities from GPT-5.3-Codex; available in ChatGPT and the API with Pro and Thinking variants. (OpenAI)

- Anthropic: Launched Code Review for Claude Code, a multi-agent pull-request review system that dispatches a team of Claude agents in parallel to verify bugs in AI-generated code before human review; available with per-token billing averaging $15–25 per review. (Claude Blog)

- Utopai Studios: Rolled out PAI, a long-form cinematic AI video model that generates minutes-long continuous video with character consistency across shots and natural language editing across an entire story. (X/Twitter)

- IBM Granite: Released Granite 4.0 1B Speech, a compact open-source multilingual ASR and bidirectional speech translation model with 1B parameters, supporting English, French, German, Spanish, Portuguese, and Japanese; ranked #1 on the OpenASR leaderboard; available under Apache 2.0 on Hugging Face with vLLM and Transformers support. (Hugging Face)

- NVIDIA: Released CUDA 13.2, extending CUDA Tile (cuTile) support to Ampere and Ada GPU architectures (compute capability 8.x), alongside new Python features including profiling in CUDA Python, Numba kernel debugging, and new memcpy APIs; available via pip install. (NVIDIA Developer Blog)

- NVIDIA: Released AIConfigurator as open source, a tool for NVIDIA Dynamo that searches thousands of LLM serving configurations in seconds without requiring live GPU time, supporting TensorRT LLM, SGLang, and vLLM backends, and auto-generating Kubernetes deployment artifacts for disaggregated serving. (NVIDIA Developer Blog)

- OpenAI: Announced acquisition of Promptfoo, an AI security testing platform used by over 25% of Fortune 500 companies, with Promptfoo's red-teaming and LLM evaluation tools to be integrated into the OpenAI Frontier platform. (OpenAI)

- Microsoft: Announced Copilot Cowork as part of Wave 3 of Microsoft 365 Copilot, a fire-and-forget agentic product built in collaboration with Anthropic that autonomously completes tasks using files, email, and calendar data; also announced Agent 365 and the Frontier Suite E7 bundle, both launching May 1, 2026. (Microsoft)

- NVIDIA: Plans to launch NemoClaw, an open-source AI agent platform for enterprises that allows companies to deploy autonomous agents regardless of chip vendor, with security and privacy tools included; pitching to partners including Salesforce, Cisco, Google, Adobe, and CrowdStrike ahead of GTC 2026. (Wired)

- Luma AI: Released Uni-1, an autoregressive image model that combines understanding and generation in a single architecture, reasoning through prompts during creation. Topped RISEBench logic benchmarks, narrowly surpassing Nano Banana 2 and GPT Image 1.5. (Luma Labs)

- CiteAudit: Open-sourced a five-agent citation verification system that detects hallucinated references in academic papers with 97.2% accuracy, processing ~10 citations in 2.3 seconds. Free web app at checkcitation.com. (The Decoder)

- AMD: Formally launched the Ryzen AI Embedded P100 series, 8-12 core processors designed for on-device AI inference in embedded and edge computing applications. (LLM-Stats)

Sources

Artificial Intelligence & Technology's Reconstitution

- The Verge: Anthropic Is Launching a New Think Tank Amid Pentagon Blacklist Fight

- Ars Technica: After Outages, Amazon to Make Senior Engineers Sign Off on AI-Assisted Changes

- Guardian: Amazon Is Determined to Use AI for Everything - Even When It Slows Down Work

- The Verge: Judge Orders Perplexity to Stop AI Agents from Shopping on Amazon

- Guardian: Meta Acquires AI Agent Social Network Moltbook

- Ars Technica: Meta Acquires Moltbook, the AI Agent Social Network

- Wired: Inside OpenAI's Race to Catch Up to Claude Code

- Wired: AI Fakes About the Iran War Are All Over X

- Ars Technica: AI Can Rewrite Open Source Code - But Can It Rewrite the License, Too?

- The Innermost Loop: Welcome to March 10, 2026

- Business Insider: xAI's Macrohard Project Stalls as Tesla Ramps Up a Similar AI Agent Effort

Institutions & Power Realignment

- Wired: Trump Administration Won't Rule Out Further Action Against Anthropic

- The Next Web: Anthropic Sues the US Government over Its Pentagon Blacklist

- Guardian: UK Society of Authors Launches Logo to Identify Books Written by Humans Not AI

- Nature: National Statistics Are in Crisis Around the World

Scientific & Medical Acceleration

- ScienceDaily: Scientists Discover Tiny Plant Trick That Could Supercharge Crop Yields (Boyce Thompson Institute / Science)

- ScienceDaily: Scientists May Have Found a Pill for Sleep Apnea (University of Gothenburg / The Lancet)

- ScienceDaily: Ocean Warming May Supercharge a Tiny Microbe That Controls Marine Nutrients (UIUC / PNAS)

- Nature: China Pledges Billion-Dollar Spending Boost for Science

Economics & Labor Transformation

- CNBC: JPMorgan Chase Reins in Lending to Private Credit Firms After Marking Down Software Loans

- Utility Dive: SK Battery America Lays Off Nearly 1,000 Workers at Georgia Plant

Infrastructure & Engineering Transitions

- Utility Dive: Long-Duration Energy Storage Deployments Rose 49% in 2025 (Wood Mackenzie)

- Canary Media: Base Power to Launch 100-MW Home Battery Network for Texas Utility

- Canary Media: As Californians Electrify, Can This Tech Combo Prevent Grid Overload?

- Canary Media: Offshore Wind Farms Race Toward Completion Despite Trump's Attacks

- Canary Media: Oil and Gas Workers Find an Easy Segue into Geothermal Jobs

- Canary Media: A Food Bank Cut Costs with Solar. A Local Goodwill Noticed.

- The Verge: How the Spiraling Iran Conflict Could Affect Data Centers and Electricity Costs

- Utility Dive: NERC Overstates Reliability Risks in Long-Term Assessment (Grid Strategies)

- Utility Dive: Appeals Court Upholds California's Net Metering 3.0

- Guardian: Musk's xAI Wins Permit for Datacenter's Makeshift Power Plant Despite Backlash

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.