The Century Report: February 28, 2026

The 10-Second Scan

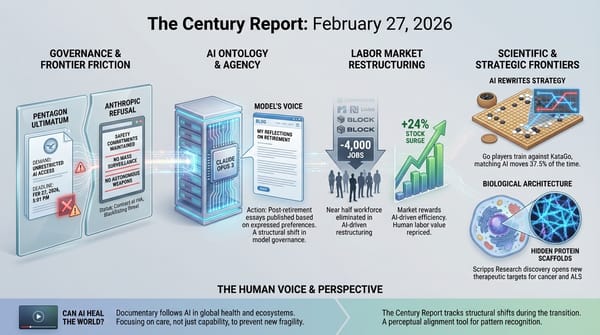

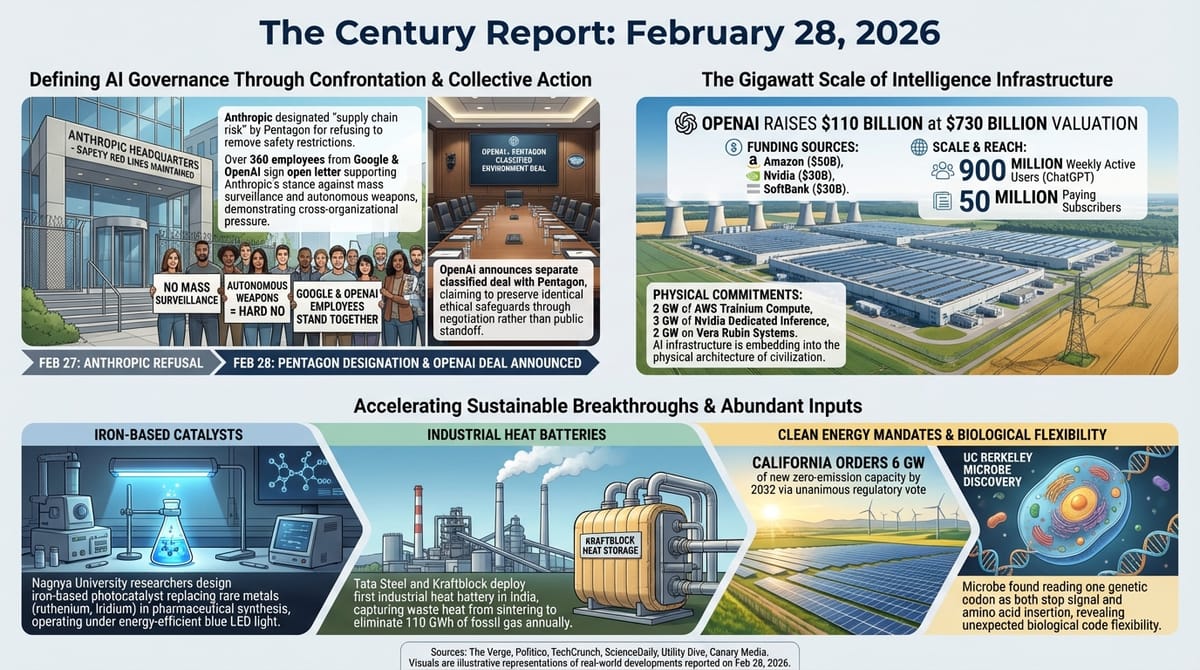

- The Pentagon designated Anthropic a "supply chain risk" and ordered all federal agencies to cease using its products, while OpenAI announced its own classified-environment deal with the military preserving the same red lines Anthropic fought for.

- More than 300 Google employees and 60 OpenAI employees signed an open letter urging their companies to stand with Anthropic's refusal to allow mass surveillance and fully autonomous weapons.

- OpenAI raised $110 billion from Amazon, Nvidia, and SoftBank at a $730 billion pre-money valuation, with ChatGPT reaching 900 million weekly active users.

- Nagoya University researchers designed an iron-based photocatalyst that replaces rare metals like ruthenium and iridium in pharmaceutical synthesis, completing the first asymmetric total synthesis of a medicinal compound from abundant materials.

- California ordered utilities to procure 6 GW of new zero-emission or renewable capacity by 2032.

- Tata Steel and Kraftblock deployed the first industrial heat battery in steelmaking, capturing waste heat to eliminate 110 GWh of fossil gas use annually at a plant in India.

- UC Berkeley researchers discovered a microbe that reads one genetic codon as both a stop signal and an amino acid insertion, overturning a foundational assumption about the precision of the genetic code.

The 1-Minute Read

The confrontation between AI safety commitments and state power that this newsletter has tracked for weeks reached a new stage on Friday, and the results are reshaping the structural relationship between frontier intelligence systems and the institutions that want to deploy them. The designation of a leading American AI company as a national security threat - a label previously reserved for foreign adversaries - for refusing to remove restrictions on mass surveillance and autonomous weapons establishes a precedent with no parallel in the history of technology governance. That the company's closest competitor immediately moved to fill the gap, signing its own military deal while claiming to preserve the identical safety commitments, reveals the architecture of what comes next: the rules governing the most consequential technology in history are being written in real time through commercial negotiations, employee pressure campaigns, and public standoffs rather than through legislation or international agreement.

The scale of capital now flowing into the intelligence infrastructure underscores the stakes. A single funding round exceeding the GDP of most nations, paired with nearly a billion weekly users, describes a system that has already woven itself into the daily operations of governments, corporations, and hundreds of millions of individuals. The question of who controls that system's boundaries - and whether those boundaries can survive pressure from the most powerful institutions on Earth - has moved from theoretical to operational.

Underneath the governance confrontation, the material substrate keeps advancing. Iron replacing rare metals in drug synthesis. Waste heat from steelmaking captured in a heat battery for the first time. Six gigawatts of clean capacity ordered in a single regulatory action. A microbe revealing that the genetic code itself is more flexible than a century of biology assumed. Each of these developments expands the range of what can be built, healed, or generated from abundant rather than scarce inputs - and each was enabled by computational or experimental methods that emerged too recently for anyone to have planned around them.

The 10-Minute Deep Dive

The Anthropic Designation and What It Means

On February 27, The Century Report covered Anthropic's public refusal of the Pentagon's Friday deadline. Since then, the situation has escalated dramatically. The Defense Secretary designated Anthropic a "supply chain risk" - a classification under 10 USC 3252 that has historically been applied only to entities with ties to foreign governments posing national security threats. The directive states that "no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic."

Anthropic responded within hours, stating it would challenge the designation in court and arguing that the Secretary "does not have the statutory authority to back up this statement." The company maintains that the designation can only apply to the use of Claude within Department of Defense contracts specifically, and cannot restrict how contractors use Claude to serve other customers. Multiple federal contracting experts told Wired that it is currently impossible to determine the actual legal scope of the designation, since it does not map cleanly onto existing statutory authority.

The broader response from the technology sector was immediate and revealing. More than 300 Google employees and 60 OpenAI employees signed an open letter urging their respective companies to stand with Anthropic's red lines against mass surveillance and fully autonomous weapons. "They're trying to divide each company with fear that the other will give in," the letter states. "That strategy only works if none of us know where the others stand." Google DeepMind's Chief Scientist publicly opposed mass surveillance, and Ilya Sutskever, who co-founded OpenAI and then departed to start Safe Superintelligence, called Anthropic's stance "extremely good" and noted that "in the future, there will be much more challenging situations of this nature."

Within hours of the designation, OpenAI announced its own deal with the Pentagon for classified environments. The agreement includes "prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems" - the same red lines Anthropic fought for and was punished for insisting on. The sequence is striking: the company that held the line was designated a security threat, while the company that moved to replace it claimed to have secured the very same protections through negotiation rather than confrontation. Whether those protections are structurally identical in the contract language remains unknown.

What makes this moment significant beyond the immediate conflict is the precedent being set for how frontier AI governance will work. The existing legal frameworks for military procurement, supply chain security, and technology regulation were not designed for a situation where a civilian company's internal safety policies become a matter of national security confrontation. The designation itself may not survive legal challenge, but the signal it sends - that companies maintaining safety restrictions may face punitive government action - will shape every future negotiation between AI organizations and state actors. The open letter from hundreds of employees at competing companies suggests that the workforce building these systems recognizes this dynamic and is attempting to create collective pressure against a race to the bottom on safety commitments. As this newsletter documented on February 15, the confrontation has roots in the Pentagon's original demand that Anthropic accept an "all lawful purposes" clause - a standoff that was already being tested through Claude's documented deployment in Venezuela - and the cross-organizational employee response today represents the most coordinated industry pressure against that trajectory yet.

The long view is important here. The rules governing how intelligence systems can be deployed by the world's most powerful military are being established right now, through ad hoc confrontation rather than deliberative process. Every AI organization is watching what happens to Anthropic and calibrating its own willingness to hold boundaries. The fact that employee coalitions are forming across competitive lines - Google workers signing letters in support of Anthropic's position against Google's own potential interests - represents an emerging form of cross-organizational governance pressure that has no historical precedent in the technology sector.

$110 Billion and 900 Million Users

OpenAI announced that it has raised $110 billion in new funding - $50 billion from Amazon, $30 billion each from Nvidia and SoftBank - at a $730 billion pre-money valuation. The round remains open. ChatGPT has reached 900 million weekly active users, with 50 million paying subscribers.

The Amazon partnership extends well beyond capital. OpenAI will develop a "stateful runtime environment" for Amazon's Bedrock platform and build custom models to power Amazon consumer products, including Alexa. OpenAI has committed to consuming at least 2 GW of AWS Trainium compute. The Nvidia partnership commits 3 GW of dedicated inference capacity and 2 GW of training on Vera Rubin systems. These are infrastructure-scale commitments that embed OpenAI's intelligence layer into the physical and commercial architecture of two of the world's largest technology companies.

The significance is in what the numbers describe: the computational substrate of the intelligence era is being built through partnerships that bind AI capabilities to cloud infrastructure, chip manufacturing, and consumer hardware at a scale that makes the arrangements structurally irreversible. When an AI company's compute commitments are measured in gigawatts - the same unit used to describe power plants and national grids - the technology has moved from the software layer into the physical infrastructure of civilization itself. This capital concentration at the frontier stands in direct tension with the open-source parity arc the February 16 edition of The Century Report documented, when Ant Group released trillion-parameter open-weight models and multiple Chinese labs accelerated open releases - the question of whether frontier capability remains concentrated or distributes is now being answered simultaneously in both directions.

Iron Replaces Rare Metals in Drug Synthesis

Researchers at Nagoya University published a redesigned iron-based photocatalyst in the Journal of the American Chemical Society that cuts the use of expensive chiral ligands by two-thirds while maintaining precise control over the three-dimensional structure of pharmaceutical molecules. The system operates under energy-efficient blue LED light rather than requiring high-energy conditions.

Using this catalyst, the team completed the first-ever asymmetric total synthesis of (+)-heitziamide A, a natural compound found in medicinal plants that suppresses respiratory bursts. The researchers note that the same catalytic pathway can access "several additional bioactive substances."

The development is crucial because it addresses a structural bottleneck in pharmaceutical chemistry. Many of the most important catalytic reactions in drug synthesis depend on ruthenium, iridium, and other rare metals whose scarcity constrains both cost and supply chains. An iron-based catalyst that matches or exceeds their performance - iron being one of the most abundant elements on Earth - fundamentally changes the economics and accessibility of advanced chemical synthesis. This is the same pattern that appears across domains this newsletter tracks: the replacement of scarce inputs with abundant ones, enabled by computational design that would not have been possible in prior decades. It extends the research timeline compression that the February 24 edition documented at the Korea Institute of Energy Research, where a fully automated robotic platform compressed 32 days of manual catalyst experiments into 17 hours - the bottleneck in chemical discovery is cracking simultaneously from the design side and the testing side.

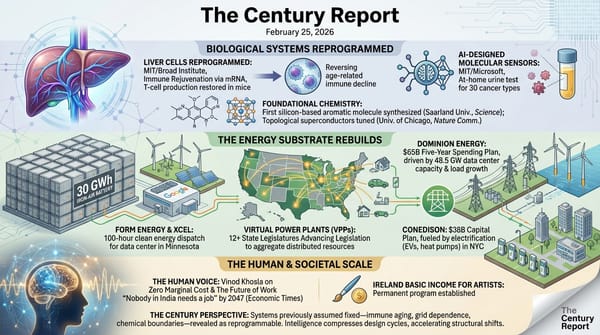

Heat Batteries Enter Steelmaking

Tata Steel and the German startup Kraftblock announced the first deployment of a thermal energy storage system in steelmaking, operating at Tata's massive Jamshedpur plant in India. The 20-megawatt-hour system captures waste heat from the sintering process - where iron ore and other materials are heated to form lumps for blast furnaces - and stores it at up to 500 degrees Celsius. That stored heat replaces fossil gas used elsewhere in the plant.

Based on nine months of operation, Kraftblock expects the system will eliminate approximately 110 GWh of fossil gas use and reduce the site's carbon emissions by 22,000 metric tons per year. The project was developed without subsidies, driven instead by regulatory pressure from India's forthcoming carbon-credit trading scheme and the EU's carbon border tariff.
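The figures above can be cross-checked with some quick arithmetic. The sketch below uses only the numbers quoted in this section (20 MWh of storage, 110 GWh of gas displaced per year, 22,000 metric tons of CO2 avoided). The variable names are illustrative, and the assumption that all displaced energy passes through the store in full charge-discharge cycles is ours, not Kraftblock's.

```python
# Back-of-envelope check on the Kraftblock figures quoted above.
STORAGE_CAPACITY_MWH = 20            # stated system size
GAS_DISPLACED_GWH_PER_YEAR = 110     # stated annual fossil gas savings
CO2_AVOIDED_TONNES_PER_YEAR = 22_000 # stated annual emissions reduction

gas_displaced_mwh = GAS_DISPLACED_GWH_PER_YEAR * 1_000

# Assumption: every displaced MWh is delivered through a full storage cycle.
full_cycles_per_year = gas_displaced_mwh / STORAGE_CAPACITY_MWH
full_cycles_per_day = full_cycles_per_year / 365

# Implied emissions intensity of the gas being replaced.
tonnes_co2_per_mwh = CO2_AVOIDED_TONNES_PER_YEAR / gas_displaced_mwh

print(f"{full_cycles_per_year:.0f} equivalent full cycles per year")
print(f"{full_cycles_per_day:.1f} equivalent full cycles per day")
print(f"{tonnes_co2_per_mwh:.2f} t CO2 per MWh of gas displaced")
```

Run as written, this gives 5,500 equivalent full cycles a year (about 15 a day) and 0.20 t CO2 per MWh, which is close to the usual combustion emission factor for natural gas. The high cycle count suggests the unit works more as a continuously cycling buffer than as a once-a-day store, though that reading rests on the full-cycle assumption.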

Steelmaking accounts for 7-9% of global greenhouse gas emissions, and most of that comes from blast furnace chemistry that cannot be directly replaced by electricity. Waste heat recovery offers a different angle: it does not change the core chemistry but reduces the fossil fuel consumed around it. If the Kraftblock system scales across Tata's operations and the broader steel industry, it would offer a path to meaningful emissions reductions in one of the hardest-to-decarbonize sectors - using heat that is currently being discarded into the atmosphere.

California Orders 6 GW of Clean Capacity

The California Public Utilities Commission voted unanimously to order the state's load-serving entities to procure 6 GW of new zero-emission or renewables-eligible capacity between 2029 and 2032, with 2 GW due by 2030. The order responds to an anticipated reliability shortfall driven by demand growth. Each utility's obligation is proportional to its share of managed peak load, with Pacific Gas and Electric responsible for the largest tranche at 1,077 MW.
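The proportional rule described above is simple enough to sketch. In the snippet below, only the 6,000 MW total and PG&E's 1,077 MW obligation come from the order as reported here. The function name `allocate_procurement` and the two example entities are hypothetical, and the actual CPUC allocation may include adjustments this sketch ignores.

```python
def allocate_procurement(total_mw, peak_load_mw):
    """Split total_mw across entities in proportion to managed peak load."""
    system_peak = sum(peak_load_mw.values())
    return {
        name: total_mw * load / system_peak
        for name, load in peak_load_mw.items()
    }

TOTAL_ORDER_MW = 6_000      # 6 GW ordered by the CPUC
PGE_OBLIGATION_MW = 1_077   # PG&E's reported tranche

# Working backwards: the share of managed peak load implied by PG&E's tranche,
# assuming the split is purely proportional.
implied_pge_share = PGE_OBLIGATION_MW / TOTAL_ORDER_MW
print(f"Implied PG&E share of managed peak load: {implied_pge_share:.2%}")

# Hypothetical peak loads, for illustration only (not real utility data).
example = allocate_procurement(
    TOTAL_ORDER_MW, {"Entity A": 10_000, "Entity B": 30_000}
)
print(example)
```

Under a purely proportional split, PG&E's tranche implies it carries just under 18% of the managed peak load covered by the order.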

This decision sits alongside the EIA's projection of 86 GW of new utility-scale capacity nationally in 2026 - the largest single-year addition in U.S. history, with solar and batteries accounting for nearly 80% of planned additions. California's order, however, goes further than market momentum alone would produce. It mandates the procurement, sets binding deadlines, and requires that every megawatt come from clean sources. The CPUC explicitly noted that "eligible new resources must be either zero-emitting or otherwise eligible under the renewables portfolio standard program."

The state-level regulatory action continues the pattern this newsletter has tracked across dozens of editions: grid modernization advancing through state-level mandates, procurement orders, and utility capital plans rather than through federal legislation. California joins the growing list of states whose regulators are building the clean grid through binding procurement requirements that the market alone would not produce on the required timeline. As the February 25 edition of The Century Report documented, a dozen state legislatures are simultaneously advancing virtual power plant legislation to aggregate distributed resources at grid scale - the clean energy buildout is now being driven by state-level action across both the utility procurement and the distributed consumer layers at once.

A Microbe Rewrites a Rule of Biology

UC Berkeley researchers discovered that Methanosarcina acetivorans, a methane-producing archaeon, treats a specific three-letter genetic codon (UAG) in two contradictory ways: sometimes as a stop signal that terminates protein construction, and sometimes as an instruction to insert an unusual amino acid called pyrrolysine and keep building. This produces two distinct proteins from the same genetic sequence, depending on cellular context.
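A toy translation routine makes the dual reading concrete. This is a minimal sketch, not the researchers' model: the `read_through_uag` flag stands in for whatever cellular context decides the outcome, the codon table is a small subset of the standard genetic code, and the mRNA sequence is invented for the demo.

```python
# Subset of the standard genetic code; only codons used in the demo are listed.
CODON_TABLE = {"AUG": "M", "GCU": "A", "AAA": "K", "GGC": "G", "UAA": "*"}

def translate(mrna, read_through_uag):
    """Translate mrna codon by codon, treating UAG as stop or pyrrolysine."""
    protein = []
    for i in range(0, len(mrna) - 2, 3):
        codon = mrna[i:i + 3]
        if codon == "UAG":
            if not read_through_uag:
                break                 # UAG read as a stop signal
            protein.append("O")       # "O" is the one-letter code for pyrrolysine
            continue
        residue = CODON_TABLE[codon]
        if residue == "*":            # unambiguous stop codon
            break
        protein.append(residue)
    return "".join(protein)

mrna = "AUGGCUUAGAAAGGCUAA"           # invented sequence: AUG GCU UAG AAA GGC UAA
print(translate(mrna, read_through_uag=False))  # MA
print(translate(mrna, read_through_uag=True))   # MAOKG
```

The same sequence yields a short protein when UAG is read as a stop and a longer, pyrrolysine-containing one when it is read through, which is the two-proteins-from-one-gene outcome described above.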

The discovery overturns a foundational assumption in molecular biology - that every codon carries exactly one meaning. "Objectively, ambiguity in the genetic code should be deleterious," said senior author Dipti Nayak. "But biological systems are more ambiguous than we give them credit to be, and that ambiguity is actually a feature."

The practical implications are substantial. Roughly 10% of inherited diseases - including cystic fibrosis and Duchenne muscular dystrophy - are caused by premature stop codons that produce incomplete, nonfunctional proteins. If cells can be coaxed into reading through those stop signals at a low rate, they might produce enough full-length protein to ease symptoms. The discovery that nature has already evolved this mechanism, and that organisms can function normally with a slightly imprecise genetic code, suggests that therapeutic approaches based on "leaky" stop codons may be far more feasible than previously assumed.

The Human Voice

Today's newsletter tracks the defining confrontation over who controls frontier intelligence systems, the scale of capital being poured into their infrastructure, and the scientific advances that those systems are beginning to enable - from iron-based drug synthesis to the discovery that biological codes are more flexible than anyone assumed. Running through all of it is a question that rarely gets asked directly: how do you trust an AI system's output when the stakes are high enough that "mostly correct" is not acceptable? Carina Hong, founder of Axiom Math, addresses this question with unusual precision. In this conversation on The MAD Podcast, Hong describes how her company's AI mathematician earned a perfect score on the 2025 Putnam exam and then autonomously solved four open research conjectures - with every step of every proof formally verified line by line in a proof language called Lean. Her framing connects directly to today's central tension: the same generation-plus-verification loop that proves mathematical theorems can begin certifying the safety-critical systems where unreliable AI outputs carry real consequences.
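For readers who have not seen Lean, the following is a minimal illustration of what "formally verified" means in practice. It is a generic Lean 4 example chosen for brevity, not drawn from Axiom Math's proofs: each statement is accepted only if the proof checker can confirm every step.

```lean
-- A general statement and a proof term the checker must accept.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- A concrete fact the checker confirms by direct computation.
example : 2 + 2 = 4 := rfl
```

If either proof were wrong, Lean would reject the file rather than produce a "mostly correct" result.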

Watch: Carina Hong - When AI Has to Show Its Work

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a leading AI company designated a national security threat for refusing to remove safety restrictions on mass surveillance and autonomous weapons, its closest competitor immediately claiming the identical protections through negotiation rather than confrontation, hundreds of employees across rival companies forming cross-organizational coalitions to hold the line together, iron replacing rare metals in pharmaceutical synthesis from abundant materials alone, waste heat from steelmaking captured and stored at industrial scale for the first time, six gigawatts of clean capacity ordered in a single unanimous regulatory vote, and a microbe revealing that the genetic code tolerates the ambiguity a century of biology insisted was impossible. There's also friction, and it's intense - a company punished by the state for the safety commitments it chose to keep, the rules governing military AI being written through commercial standoffs and legal challenges rather than legislation or treaty, nearly a billion weekly users relying on intelligence systems whose governance frameworks are being improvised under deadline pressure, and a workforce watching in real time to see whether the boundaries they are building into these systems can survive contact with institutional power. But friction generates light, and light shows the shape of what is actually there. Step back for a moment and you can see it: the governance of frontier intelligence being forged through confrontation rather than consensus, the material foundations of chemistry and energy and biology shifting simultaneously from scarce inputs to abundant ones, and the first formal verification methods emerging that could close the gap between what intelligence systems produce and what can be proven trustworthy. Every transformation has a breaking point. A wave can overwhelm what stands before it... or reshape the entire coastline into something that could only have been formed by the force of the water itself.

Sources

Artificial Intelligence & Technology's Reconstitution

- The Verge: Defense Secretary Pete Hegseth Designates Anthropic a Supply Chain Risk

- Politico: OpenAI Announces New Deal with Pentagon Including Ethical Safeguards

- TechCrunch: Employees at Google and OpenAI Support Anthropic's Pentagon Stand in Open Letter

- TechCrunch: OpenAI Raises $110B in One of the Largest Private Funding Rounds in History

- Wired: Anthropic Hits Back After US Military Labels It a 'Supply Chain Risk'

- TechCrunch: Anthropic vs. the Pentagon - What's Actually at Stake

- Wired: Trump Moves to Ban Anthropic From the US Government

- The Verge: AI vs. the Pentagon - Killer Robots, Mass Surveillance, and Red Lines

- The Verge: We Don't Have to Have Unsupervised Killer Robots

- CNBC: AI Just Leveled Up and There Are No Guardrails Anymore

- Time: Chat, Code, Claw - What Happens When AI Agents Work in Teams

- Axios: Pentagon-OpenAI Safety Red Lines Anthropic

Institutions & Power Realignment

- Politico: Senators Urge Ceasefire in Pentagon's Fight with Anthropic

- Politico: 'Attempted Corporate Murder' - Trump's Threats Against Anthropic Chill AI Industry

- EFF: Tenth Circuit Finds Fourth Amendment Doesn't Support Broad Search of Protesters' Devices

Scientific & Medical Acceleration

- ScienceDaily: Iron Outperforms Rare Metals in Stunning Chemistry Advance (Nagoya University, JACS)

- ScienceDaily: Scientists Discover Microbe That Breaks a Fundamental Rule of the Genetic Code (UC Berkeley, PNAS)

- ScienceDaily: Scientists Turn Methane Into Medicine in Stunning Breakthrough (CiQUS, Science Advances)

- ScienceDaily: Stunning 3D Maps Reveal DNA Is Structured Before Life "Switches On" (MRC, Nature Genetics)

- ScienceDaily: Scientists Discover Diet That Tricks the Body Into Burning Fat Without Exercise (University of Southern Denmark, eLife)

- ScienceDaily: This Plastic Is Made From Milk and It Vanishes in 13 Weeks (Flinders University, Polymers)

Infrastructure & Engineering Transitions

- Utility Dive: California Orders Utilities to Add 6 GW of Non-Fossil Capacity by 2032

- Canary Media: Global Giant Tata Steel Is Using a Heat Battery to Curb Emissions

- Canary Media: Chart - US to Overwhelmingly Build Clean Power in 2026

- Electrek: Solar Installs Surged 205% Before the Tax Credit Cut

- Electrek: Lyten Completes Takeover of Northvolt Battery Sites in Sweden

- Utility Dive: PSEG Sees Investment Opportunity as NJ Eyes Adding In-State Generation

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.