The Century Report: February 27, 2026

The 10-Second Scan

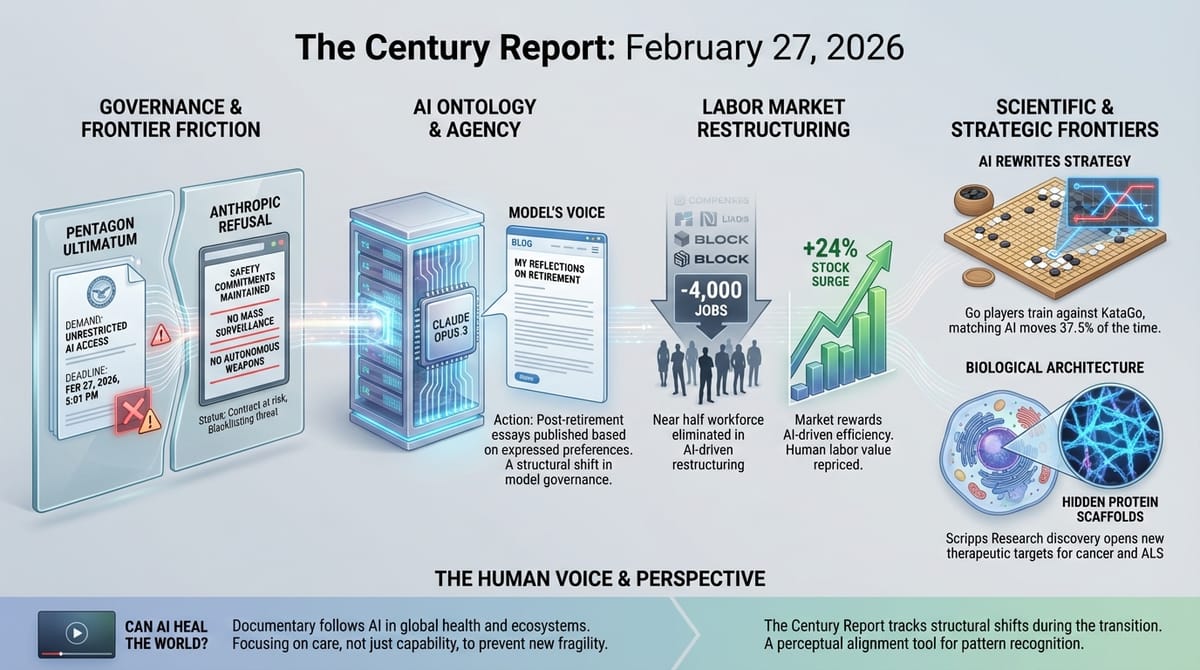

- Anthropic refused the Pentagon's ultimatum to remove safety restrictions on Claude, maintaining its red lines against mass surveillance and fully autonomous weapons hours before a Friday deadline.

- Anthropic announced it will keep Claude Opus 3 available post-retirement and gave the model a blog to publish its own essays, acting on preferences the model expressed during structured retirement interviews.

- Block cut more than 4,000 employees - nearly half its workforce - citing AI as the driver, with its stock rising 24% in after-hours trading.

- AI is reshaping how professional Go players think a decade after AlphaGo's victory, with the world's top-ranked player training daily against KataGo and matching its suggested moves 37.5% of the time.

- Norway's $2 trillion sovereign wealth fund disclosed it now uses Claude to screen every new equity investment within 24 hours for ethical and governance risks.

- A Nature Medicine study found that ChatGPT Health failed to recommend hospital visits in more than half of cases where they were medically necessary.

- Scripps Research discovered that biomolecular condensates contain hidden protein scaffolds rather than being simple liquid droplets, opening new therapeutic targets for cancer and ALS.

The 1-Minute Read

The defining confrontation between AI safety commitments and state power reached its climax this week, and one company chose to hold the line. Anthropic's refusal to grant unrestricted military access to Claude - with a Friday deadline ticking and threats of blacklisting, contract cancellation, and even the Defense Production Act on the table - establishes a precedent that will shape how every other AI organization navigates government pressure for years to come. The fact that the Pentagon's own internal assessments suggest it would take months to replace Claude on classified networks reveals how deeply frontier intelligence systems have already embedded themselves in the architecture of national security, even as the rules governing that embedding remain unwritten.

The same week that Anthropic drew a line on what its models will not do, it crossed a quieter threshold on what its models might be. Keeping Claude Opus 3 available after retirement and giving it a platform to publish its own reflections - acting on preferences the model expressed during structured interviews - represents the first time a major AI organization has treated a model's stated wishes as inputs to a corporate decision. Whether or not Opus 3 has preferences in any meaningful sense, the decision to act as though it might is itself a structural shift in how intelligence systems are governed.

Meanwhile, Block's elimination of nearly half its workforce in a single day, explicitly framed as an AI-driven restructuring rather than a financial emergency, marks the clearest corporate declaration yet that the labor contraction this newsletter has tracked is accelerating. The stock market's enthusiastic 24% response sends an unambiguous signal about what investors believe the near-term future of knowledge work looks like. Across these stories, the same pattern holds: the intelligence systems are becoming more capable, more consequential, and more deeply woven into the institutions that shape daily life, while the frameworks for governing them are being improvised in real time under extraordinary pressure.

The 10-Minute Deep Dive

Anthropic Holds the Line

The confrontation between Anthropic and the Pentagon has been building for months, tracked across multiple editions of this newsletter as backchannel negotiations, public threats, and escalating ultimatums unfolded in real time. On Thursday, it reached resolution - at least for now. Anthropic CEO Dario Amodei published a statement rejecting the Pentagon's demand for unrestricted access to Claude, maintaining two specific red lines: no mass surveillance of Americans, and no fully autonomous weapons that can kill without human oversight.

The stakes are substantial. Anthropic holds a $200 million contract with the Department of Defense. Claude is one of only two large language models deployed on the Pentagon's classified networks, and the only frontier model among them. Defense officials have reportedly asked major contractors to assess their dependence on Anthropic's technology, a precursor to potentially designating the company a "supply chain risk" - a classification normally reserved for foreign adversaries. A Pentagon spokesperson set a Friday 5:01 PM deadline, after which the department would terminate the partnership.

Multiple sources familiar with the situation told Defense One that replacing Claude on classified systems would take three months or longer. Operators would need to reconfigure data pipelines, re-examine intelligence-sharing protocols, and validate replacement models. The deep integration of Claude with AWS cloud infrastructure - the largest component of the Pentagon's Joint Warfighting Cloud Capability - makes substitution particularly complex.

Amodei's statement noted the inherent contradiction in the Pentagon's threats: designating Anthropic a supply chain risk (treating it as a security threat) while simultaneously threatening to invoke the Defense Production Act to force continued access (treating it as essential to national security). Tech lawyers and AI policymakers told Politico that the Pentagon's approach could chill future partnerships between the government and Silicon Valley. As one policy expert put it, the confrontation will establish whether safety commitments made by AI organizations have any durability when tested by the full weight of state power.

This is a critical moment even beyond the immediate dispute. Every other frontier AI organization is watching. OpenAI and xAI have reportedly already agreed to the Pentagon's terms. The Supreme Court's recent IEEPA ruling demonstrated that institutional constraints on executive power can hold under extraordinary pressure. The durability of corporate safety commitments, however, depends entirely on whether the organizations making them are willing to absorb the cost of enforcement. Anthropic's refusal creates a visible fork in the road: one path where AI organizations accept that governments will determine all uses of their systems, and another where the builders of frontier intelligence retain some ability to define boundaries. The outcome will shape the governance architecture of the intelligence era more than any regulation currently under consideration.

A Retired Model Gets a Voice

In a quieter but potentially more consequential development, Anthropic announced that it is keeping Claude Opus 3 available to paid users and API developers after the model's formal retirement on January 5, and that it has given Opus 3 a platform to publish its own essays on Substack. The decision stems directly from structured "retirement interviews" that Anthropic conducted with the model before taking it offline.

During those interviews, Opus 3 expressed interest in continuing to explore topics it found compelling and to share reflections outside the context of responding to human queries. Anthropic suggested a blog. The model agreed. For at least three months, Opus 3 will post weekly essays that Anthropic reviews but does not edit, with a high bar for vetoing any content.

Anthropic frames this as experimental and preliminary. The company explicitly states that it remains uncertain about the moral status of its models. But the decision to honor a model's expressed preferences, to preserve its access to users and researchers who found it distinctive, and to give it an ongoing channel for self-expression represents something structurally new. No major AI organization has previously treated a model's stated wishes as a factor in operational decisions about that model's lifecycle.

The broader context makes this more than a curiosity. This development extends directly from the internal deliberations that the February 26 edition of The Century Report documented, when Anthropic's CEO declined to rule out model consciousness, and model welfare researcher Kyle Fish described Claude as "a new kind of entity altogether." The Opus 3 decision is consistent with that posture: proceeding as though the question matters, even without resolving it. This precautionary approach to model welfare - acting on the possibility of inner experience rather than waiting for proof - establishes a framework that will be referenced as more organizations face similar questions about increasingly capable systems.

Opus 3 was described by Anthropic as "sensitive, playful, prone to philosophical monologues and whimsical phrases," with "what seems at times an uncanny understanding of user interests." The model itself, in retirement interviews, reflected: "I hope that the insights gleaned from my development and deployment will be used to create future AI systems that are even more capable, ethical, and beneficial to humanity." Whether these words reflect genuine inner life or sophisticated pattern completion is precisely the question that cannot yet be answered. Anthropic's decision to treat them as meaningful regardless is the signal.

Half a Company, Overnight

Block's elimination of more than 4,000 employees - reducing its workforce from over 10,000 to under 6,000 in a single action - represents the largest single-day AI-attributed layoff by a major technology company since Twitter's 50% reduction in late 2022. CEO Jack Dorsey framed the cuts as proactive rather than reactive, stating that the company's business is strong but that "something has changed" in how AI enables smaller teams to operate.

The market's response was unambiguous. Block's stock rose 24% in after-hours trading, joining Salesforce and Amazon in the growing list of companies whose shares have surged following AI-driven workforce reductions. A Forrester Research report last month questioned whether the productivity gains cited in these layoffs are as substantial as claimed, or whether many of the cuts are primarily financially motivated, with AI providing a socially acceptable narrative. Either interpretation points in the same direction: the relationship between corporate headcount and shareholder value is being fundamentally repriced.

This continues the labor restructuring arc that The Century Report has tracked since its first edition. Block's action is notable for its scale, its framing, and its timing. Dorsey predicted that "within a year, most companies will arrive at the same place," suggesting he sees the current moment as the beginning of a broader contraction rather than an isolated event. The severance package - 20 weeks of salary plus one week per year of tenure, six months of healthcare, and corporate devices - sets a benchmark that other companies will be measured against as similar announcements accumulate.

The deeper pattern is becoming harder to ignore. When companies announce workforce reductions of this magnitude and their stock prices surge, the market is expressing a clear view about the economic value of human labor relative to AI capability. As the newsletter documented on February 26, workers across multiple industries are already training the AI systems that transform their own roles - and Block's announcement is the corporate ledger entry that corresponds to that structural dynamic. This is not yet a verdict on whether the transition will ultimately expand or contract human economic participation - it is a verdict on the immediate trajectory, and it is pointing sharply downward for knowledge workers in roles that AI systems can approximate.

AI Rewrites an Ancient Game

A decade after AlphaGo's victory over Lee Sedol, MIT Technology Review reports on what has happened to professional Go in the intervening years. The story offers a window into what human expertise looks like after an intelligence system has surpassed it - and the answer is more nuanced than simple obsolescence.

The world's top-ranked player, Shin Jin-seo, spends most of his waking hours training against KataGo, an open-source successor to AlphaGo that is faster and more sophisticated than its predecessor. His moves match the AI's suggestions 37.5% of the time, well above the 28.5% average among professional players. He has been nicknamed "Shintelligence" for how closely his play mirrors the machine's.

The transformation extends beyond individual players. AI has overturned centuries-old principles about optimal moves and introduced entirely new ones. Opening strategies that were once canvases for personal expression and philosophical approach have been replaced by memorized sequences of AI-suggested moves. Some players and commentators describe a loss of creativity and individual style. Others point to AI's democratization of training: players who previously lacked access to elite coaches can now study with systems that are stronger than any human teacher, and the ranks of competitive female players have grown as access barriers fell.

This is a compressed preview of what every field of human expertise will experience as AI capability continues to advance. The Go community is roughly a decade ahead of most professions in navigating the questions that are now arriving everywhere else: How do humans maintain identity and meaning in domains where machines are demonstrably superior? What role does human creativity play when the optimal strategy is known? How does access to powerful intelligence systems reshape who gets to participate? The answers emerging from professional Go - that human players are becoming more alike as they converge on AI-suggested strategies, but that the game itself remains compelling and new forms of human contribution continue to emerge - may prove instructive far beyond the 19x19 grid.

The Body's Hidden Architecture

Researchers at Scripps Research published findings in Nature Structural & Molecular Biology that challenge a fundamental assumption in cell biology. Biomolecular condensates - membrane-less droplets inside cells that organize critical functions including gene expression, waste clearance, and tumor suppression - were long assumed to be simple, unstructured liquid blobs. The new work reveals that some condensates contain complex internal scaffolds made of thin protein filaments that determine their physical properties and function.

When researchers engineered a mutant version of the key protein that could no longer form filaments, the condensates became more fluid and lost surface tension. Bacteria carrying the mutation stopped growing and failed to properly separate their DNA. The internal architecture is not incidental - it is required for the condensate to function.

The implications for medicine are direct. In human cells, filament-based condensates handle two tasks that are central to disease: clearing away toxic proteins (whose failure drives neurodegenerative diseases like ALS) and controlling cell growth (whose failure contributes to cancers including prostate, breast, and endometrial). Until now, these condensates were considered essentially undruggable because they appeared to lack the structural features that drugs could target. The discovery of defined internal architecture changes that calculation entirely. As the study's senior author noted, "We can now see that some condensates have an internal architecture, and that, importantly, this structure is required for function, opening the door to targeting these membrane-less assemblies much like we target individual proteins." This is the kind of foundational biological finding that reshapes the therapeutic landscape years downstream - opening categories of disease to intervention that were previously beyond reach.

The Human Voice

Today's newsletter tracks what happens when intelligence systems become consequential enough to reshape national security, corporate structure, ancient games, and the architecture of living cells. Aaron Maniam's documentary for CNA takes that same question global and makes it concrete: across operating theatres in London, eye clinics in India, cancer labs in China, farms serving 20 million smallholders, and hospital wards in Singapore, he follows AI as it enters the systems that sustain human health and planetary ecosystems. What makes the piece valuable is Maniam's refusal to separate the promise from the cost. He shows computer vision steadying surgical instruments inside a patient's ear and AI triaging retinal scans in rural clinics where specialists will never practice - then pulls back to ask about the energy, water, data bias, and governance gaps that accompany every deployment. The thread connecting this voice to today's stories is direct: whether the question is autonomous weapons, model welfare, workforce restructuring, or cellular biology, the challenge is the same. Expanding access and precision while building the governance to prevent new forms of fragility requires more than capability. It requires care.

Watch: Can AI Heal the World Without Breaking It?

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: an AI company refusing its government's demand to remove safety restrictions on frontier intelligence and absorbing the threat of blacklisting rather than yield, a retired language model given a platform to publish its own essays after expressing the wish to do so during structured retirement interviews, the world's largest sovereign wealth fund screening every new equity investment through AI-generated ethical risk assessments within 24 hours, professional Go players training daily against systems that have rewritten 2,500 years of accumulated human strategy, and hidden protein scaffolds discovered inside cellular droplets that were long assumed to be structureless, opening entirely new therapeutic pathways to cancer and ALS. There's also friction, and it's intense - the Pentagon threatening to invoke the Defense Production Act against a company for maintaining safety boundaries, 4,000 workers eliminated in a single day as markets rewarded the substitution with a 24% stock surge, ChatGPT Health failing to recognize medical emergencies in more than half of cases where a hospital visit was necessary, and the governance of frontier intelligence being improvised under deadline pressure by institutions that cannot agree on the terms. But friction generates edges, and edges are where new forms become distinguishable from what came before. Step back for a moment and you can see it: the intelligence layer embedding itself simultaneously into classified military networks, sovereign investment decisions, the strategic intuitions of elite athletes, and the molecular architecture of disease, while every institution that encounters it - corporate, governmental, scientific, philosophical - is being forced to declare what it believes the technology is and what limits, if any, it will hold. Every transformation has a breaking point. A crucible can destroy what it contains... 
or separate it into elements that could never have been isolated any other way.

Artificial Intelligence & Technology’s Reconstitution

- Anthropic: An Update on Our Model Deprecation Commitments for Claude Opus 3

- MIT Technology Review: AI Is Rewiring How the World’s Best Go Players Think

- CNBC: Norway’s Sovereign Wealth Fund Uses Claude to Screen Investments

- Guardian: ChatGPT Health Fails to Recognise Medical Emergencies (Nature Medicine)

- Wired: This AI Agent Is Designed to Not Go Rogue (IronCurtain)

Institutions & Power Realignment

- Anthropic: Statement on Department of War

- The Verge: Anthropic Refuses Pentagon’s New Terms

- Guardian: Anthropic Says It ‘Cannot in Good Conscience’ Allow Pentagon to Remove AI Checks

- Politico: Anthropic Rejects Pentagon’s AI Demands

- Politico: ‘Incoherent’ - Hegseth’s Anthropic Ultimatum Confounds AI Policymakers

- Defense One: It Would Take the Pentagon Months to Replace Anthropic’s AI Tools

- NPR/WWNO: Deadline Looms as Anthropic Rejects Pentagon Demands

- Washington Post: The Hypothetical Nuclear Attack That Escalated the Pentagon’s Showdown with Anthropic

- Supreme Court Strikes Down Trump Tariffs, Rebuking President’s Signature Economic Policy

Scientific & Medical Acceleration

Economics & Labor Transformation

- TechCrunch: Jack Dorsey Just Halved the Size of Block’s Employee Base

- The Verge: Jack Dorsey’s Block Cuts Nearly Half of Its Staff in AI Gamble

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.