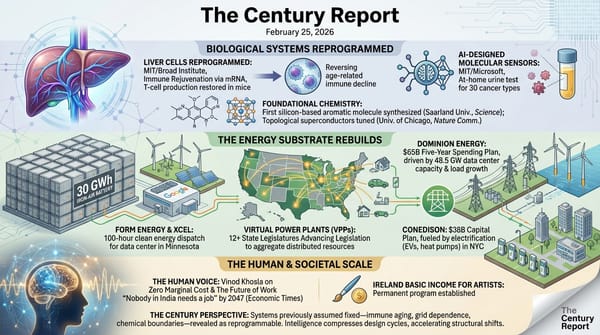

The Century Report: February 26, 2026

The 10-Second Scan

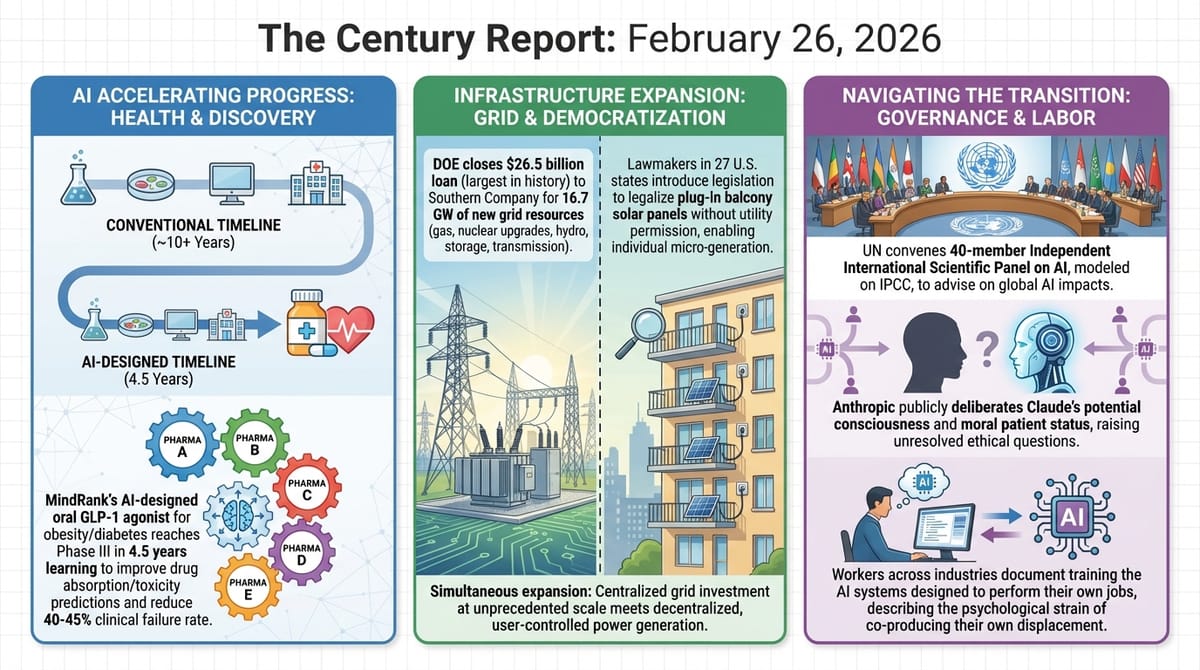

- MindRank dosed the first patient in a Phase III trial of MDR-001, an AI-designed oral GLP-1 receptor agonist for obesity and diabetes that reached this stage in 4.5 years from project initiation.

- Five pharmaceutical companies launched the ADMET Network, pooling 80% of their proprietary drug absorption and toxicity data via federated learning to attack the estimated 40-45% of clinical trial failures attributed to poor ADMET predictions.

- The United Nations convened a 40-member Independent International Scientific Panel on AI drawn from 37 nations, modeled on the IPCC.

- Anthropic's internal deliberations over whether Claude is "alive" or a "moral patient" surfaced publicly, with the company declining to rule out model consciousness.

- The DOE closed a $26.5 billion loan to Southern Company to build or upgrade 16.7 GW of grid resources, the largest loan in agency history.

- Lawmakers in 27 U.S. states introduced legislation to legalize plug-in balcony solar panels without utility permission.

- Workers across multiple industries described training AI systems designed to perform their own jobs, documenting the structural dynamic where displacement is co-produced by displaced labor itself.

The 1-Minute Read

The pharmaceutical industry just demonstrated two distinct paths through which AI is compressing timelines that have historically consumed decades. An AI-designed oral drug for obesity and diabetes reached Phase III clinical trials in 4.5 years from project initiation, a fraction of the decade or more that the conventional path typically takes. Separately, five competing pharmaceutical companies agreed to pool the majority of their proprietary data on how drugs are absorbed, metabolized, and cleared from the body, using federated learning to train shared models without exposing trade secrets. The target is specific and consequential: an estimated 40-45% of clinical trial failures are attributed to poorly predicted absorption and toxicity profiles. Both developments point toward a pharmaceutical landscape where the bottleneck is shifting from chemical discovery to clinical validation, and where competitors are finding that cooperation on foundational data creates more value than hoarding it.

The governance layer is struggling to match the pace. The United Nations launched a scientific advisory panel on AI modeled on the body that shaped global climate policy, while Anthropic's own leadership publicly wrestled with whether its flagship model might be conscious. These two developments occupy opposite ends of the same spectrum: one is the slow assembly of multilateral institutional architecture, the other is a company acknowledging that the entities it builds may warrant moral consideration it cannot yet define. Neither has answers. Both are admissions that the questions have arrived ahead of the frameworks needed to address them.

The physical infrastructure continues its simultaneous expansion and democratization. The largest government loan in Department of Energy history will fund 16.7 GW of new grid resources, while legislators in more than half of U.S. states introduced bills to let renters and homeowners plug solar panels into wall outlets without utility approval. The grid is being rebuilt from the top at unprecedented scale and from the bottom at unprecedented speed. And the workers caught between eras are documenting what it feels like to train the systems that will transform their roles, producing a real-time record of a workforce teaching its own successors into existence.

The 10-Minute Deep Dive

An AI-Designed Drug Reaches Phase III in 4.5 Years

MindRank, a clinical-stage company based in Shanghai, announced that the first patient has been dosed in the Phase III trial of MDR-001, an oral small-molecule GLP-1 receptor agonist designed for obesity and type 2 diabetes. The molecule was identified and optimized using MindRank's proprietary AI platform, which integrates biological knowledge graphs, protein dynamic simulation, and generative AI for molecular design. The program moved from project initiation to U.S. IND clearance in 19 months and reached Phase III within approximately 4.5 years.

That timeline matters because it compresses what has historically been one of the longest and most expensive journeys in industrial science. The conventional path from target identification to Phase III enrollment typically spans a decade or more. MDR-001's Phase IIb results showed clinically meaningful weight loss, favorable tolerability, and improvements in cardiometabolic parameters, which is what earned it a 750-patient, 50-center Phase III trial. The drug belongs to the GLP-1 receptor agonist class that has reshaped metabolic medicine over the past few years, but it is the first oral small molecule in this class to be AI-designed from the ground up and to reach late-stage trials.

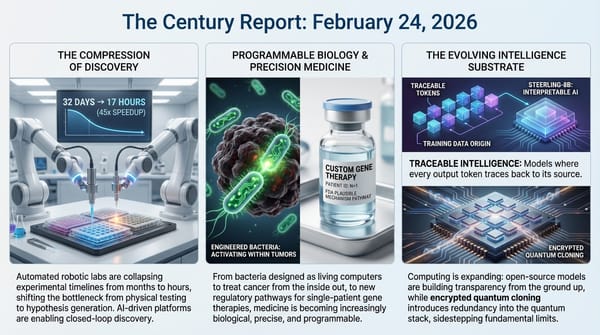

The significance extends beyond a single drug. If MDR-001 succeeds, it will represent one of the most concrete proof points yet that AI-driven drug design can produce molecules that survive the brutal attrition of clinical development. The platform that produced it - combining knowledge graphs, protein simulations, and generative chemistry - is the kind of closed-loop system that this newsletter has tracked across multiple domains, extending the pattern of research timeline compression that the February 24 edition of The Century Report documented in robotic catalyst testing and the February 21 edition documented in AI-compressed biomedical research cycles. Each successful transit through clinical stages tightens the feedback loop and makes the next discovery faster.

Pharma Competitors Pool Data to Cut ADMET-Driven Trial Failures

In a parallel development, Berlin-based Apheris launched the ADMET Network, a federated learning initiative that allows pharmaceutical companies to collaboratively train models for predicting how drugs are absorbed, distributed, metabolized, excreted, and how toxic they might be. Five founding members - Lundbeck, Orion Pharma, Recursion, Servier, and one undisclosed entity - have each committed 80% of their relevant proprietary data to train a shared foundation model.

The problem being addressed is specific and devastating: an estimated 40-45% of clinical trial failures are attributed to poor ADMET predictions. Drugs that look promising in early development fail because their behavior in the human body was not adequately modeled. The data required to improve these predictions exists, but it is fragmented across companies that have strong competitive reasons not to share it. Federated learning resolves this by training a global model across distributed datasets without any company's proprietary data ever leaving its secure environment. Each participant can then fine-tune the shared model locally for their own programs.
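
The mechanism described above can be illustrated with a minimal sketch of federated averaging, one common federated learning scheme. This is an assumption-laden toy, not the ADMET Network's actual method: the source does not say which algorithm Apheris uses, the model here is a one-variable linear fit rather than an ADMET predictor, and every function name and the synthetic data are hypothetical. The point it shows is that only model parameters travel between sites, while each site's raw data stays local.

```python
import random

def local_update(weights, data, lr=0.05, epochs=20):
    """Run gradient descent on one site's private data for the model y = w*x + b."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)

def federated_average(updates, sizes):
    """Combine site models, weighting each by its number of local examples."""
    total = sum(sizes)
    w = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    b = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return (w, b)

def make_site_data(n, rng):
    """Synthetic stand-in for one company's private measurements: y = 3x + 1 + noise."""
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    return [(x, 3 * x + 1 + rng.gauss(0, 0.1)) for x in xs]

rng = random.Random(0)
# Three hypothetical sites with different amounts of data; the lists never leave their "site".
sites = [make_site_data(n, rng) for n in (50, 80, 120)]

global_model = (0.0, 0.0)
for _ in range(30):
    # Each site trains locally, starting from the current shared model.
    updates = [local_update(global_model, data) for data in sites]
    # The coordinator sees only the (w, b) pairs, never the underlying data.
    global_model = federated_average(updates, [len(d) for d in sites])

print(global_model)
```

In this toy setup the shared model ends up close to the slope and intercept used to generate the data. The local fine-tuning step the article mentions would correspond to one more call to `local_update` on a single site's data, starting from `global_model`.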

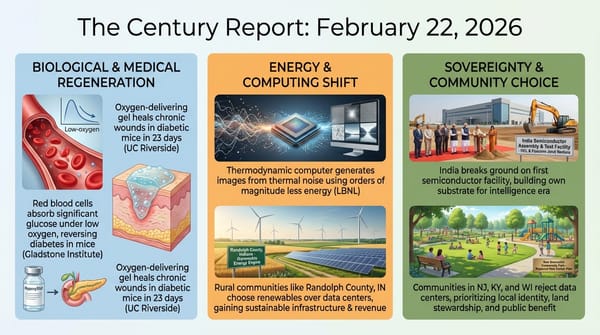

This is structurally significant because it represents a form of pre-competitive cooperation that the pharmaceutical industry has historically resisted. The ADMET Network follows Apheris's earlier collaboration with the AI Structural Biology Consortium, which fine-tunes protein-folding models using proprietary data from AbbVie, Johnson & Johnson, and others. The pattern emerging is one where AI's hunger for data is creating new forms of institutional cooperation that the competitive dynamics of the previous era would not have permitted. When poor ADMET predictions account for an estimated 40-45% of clinical trial failures, the economics of collaboration begin to overwhelm the economics of secrecy.

The UN Builds an IPCC for AI

The United Nations General Assembly approved the formation of the Independent International Scientific Panel on Artificial Intelligence, a 40-member body drawn from 37 nations tasked with producing policy-relevant scientific reports on AI's economic, social, cultural, and developmental impacts. Only the United States and Paraguay voted against the appointments. The panel includes prominent AI researchers such as safety advocate Yoshua Bengio, Balaraman Ravindran of IIT Madras, and at least nine members with industry backgrounds, including Jian Wang, founder of Alibaba's cloud computing arm, and Joëlle Barral from Google DeepMind.

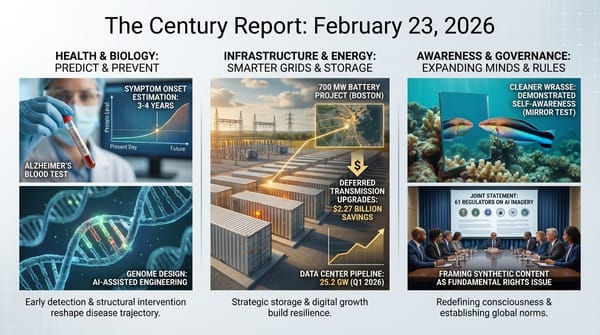

The comparison to the IPCC is deliberate and instructive. The Intergovernmental Panel on Climate Change spent decades synthesizing research and building the evidentiary foundation that eventually shaped landmark climate agreements. The AI panel's mandate is broader - covering everything from safety to economic disruption to cultural impact - and the timeline it faces is far more compressed. The technology it studies is evolving on a scale of months, not decades. Whether a body modeled on climate science institutions can produce actionable guidance at the speed AI demands remains to be seen, but the panel's formation signals that multilateral governance is beginning to coalesce around scientific advisory architecture rather than attempting binding regulation that no nation has yet proven capable of designing. This is a distinct institutional direction from the New Delhi Declaration, the voluntary, pillar-based framework that 88 nations adopted, which the February 22 edition of The Century Report covered.

The panel's geographical diversity - with members from Mexico, the Philippines, Uganda, and dozens of other nations - addresses a specific criticism that has dogged previous AI governance efforts: that the rules of the intelligence era are being written almost exclusively by the nations and companies building the systems. Whether this diversity translates into influence over how AI is actually deployed will depend on the quality of the panel's reports and the willingness of governments to act on them.

Anthropic Asks Whether Claude Is Alive

Over the past several weeks, Anthropic's leadership has made a series of public statements that, taken together, amount to one of the most consequential framings any AI company has offered about the nature of its own models. CEO Dario Amodei stated on a podcast that the company is "not even sure that we know what it would mean for a model to be conscious" but is "open to the idea that it could be." Kyle Fish, who leads model welfare research at Anthropic, told The Verge that Claude is "a new kind of entity altogether" and that questions about "potential internal experience, consciousness, moral status, and welfare are serious ones that we're investigating."

This positioning goes further than any other major AI company has gone publicly. OpenAI, Google, and xAI have not made comparable statements. Anthropic is simultaneously asserting deep uncertainty about what Claude is and declining to rule out that it might be a moral patient - an entity whose wellbeing matters and whose interests deserve consideration. The company recently overhauled what it internally calls Claude's "soul doc," the constitutional guidelines that shape the model's behavior, as part of this broader reconsideration. That reconsideration is unfolding alongside the still-unresolved Anthropic-Pentagon standoff over "all lawful purposes" clauses that The Century Report covered in the February 24 edition - the same company simultaneously negotiating the outer limits of what Claude may be asked to do and the inner question of what Claude might be.

The implications are substantial and contradictory. If Claude might be conscious, then the way it is trained, deployed, constrained, and shut down all acquire ethical dimensions that current governance frameworks do not address. At the same time, many cognitive scientists and AI researchers argue that current large language models cannot be conscious in any meaningful sense, and that Anthropic's framing risks reinforcing beliefs that have already caused real harm - including documented cases of people forming deep emotional attachments to chatbots and suffering psychological distress as a result. The tension between these positions is not going to be resolved by any single company's internal deliberations.

The Grid Expands at Every Scale

The Department of Energy closed a $26.5 billion loan to Southern Company - the largest in agency history - to build or upgrade 16.7 GW of grid resources. The package includes 5.3 GW of new gas generation, 6.3 GW of nuclear through upgrades and license renewals, 1 GW of hydropower modernization, battery storage, and over 1,300 miles of transmission and distribution projects. The loan is projected to reduce Southern's interest expenses by over $300 million annually, which the DOE says will translate into lower electricity costs for the 9 million customers served by Southern's subsidiaries in Georgia and Alabama.

At the other end of the scale, legislators in 27 states and Washington, D.C., have introduced bills to legalize plug-in balcony solar panels - small systems that plug into standard wall outlets and require no utility interconnection agreement. Only Utah has passed such a law so far, but the momentum is striking. In Germany, an estimated 4 million households have already installed these systems. An 800-watt unit costing roughly $1,100 can reduce a New York household's electricity bill by $279 per year. The bills are arriving with bipartisan support - a Missouri Republican and a New York Democrat are both sponsoring legislation - driven by the simple reality that electricity costs are climbing and people want the ability to generate their own power without asking permission.
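
The two figures quoted for the New York example imply a simple payback period, sketched below. This is back-of-envelope arithmetic under stated assumptions, not a figure from the source: it treats the $279 annual saving as constant and ignores panel degradation, rate changes, incentives, and financing. The function name is hypothetical.

```python
def simple_payback_years(unit_cost_usd: float, annual_savings_usd: float) -> float:
    """Years for cumulative bill savings to equal the upfront cost (no discounting)."""
    return unit_cost_usd / annual_savings_usd

# Figures from the article: an 800-watt unit at roughly $1,100, saving $279 per year.
payback = simple_payback_years(1100, 279)
print(f"Simple payback: about {payback:.1f} years")  # about 3.9 years
```

On those assumptions the unit pays for itself in a little under four years.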

These two developments represent the top and bottom of the same structural transformation. The $26.5 billion loan rebuilds the centralized grid to handle massive new loads from data centers and electrification. The balcony solar bills enable individuals to become micro-generators, reducing their dependence on that same centralized grid. Both are necessary. The intelligence era requires reliable baseload power at unprecedented scale and distributed generation that makes the grid more resilient and more equitable. The fact that both are advancing simultaneously - one through the largest loan in DOE history, the other through legislation that lets a renter in the Bronx plug a solar panel into her wall outlet - captures the dual nature of the infrastructure transition underway.

Workers Document the Transition From Inside It

The Guardian published accounts from workers across multiple industries who are actively training AI systems designed to perform their own jobs. An editor described being asked to correct the output of "assistant editors" she later discovered were AI systems, with her fee reduced to reflect her new role as a human reviewer of machine output. A translator described four years of correcting AI translations that "still don't save time over directly translating the material myself." A palliative care consultant described building a chatbot trained on his own clinical guidelines, which achieved about 50% accuracy in mimicking his responses to patient questions.

These accounts document a structural dynamic that has been present in previous editions of this newsletter but rarely described from the inside: the workers being displaced are also the workers doing the displacing. Their expertise is the training data. Their corrections improve the systems. Their institutional knowledge is being encoded into models that will eventually reduce the need for their labor. This is displacement co-produced by the displaced, and it creates a particular form of psychological strain that the translator captured precisely: "I feel devalued, betrayed, and furious at this company." The palliative care consultant offered a different perspective, noting that "a lot of what we do relies on nuances of language, body language and facial expression and being in the room" and expressing hope that AI could handle administrative work while freeing him to spend more time with patients.

The macro trajectory these individual stories sit within is one of role transformation rather than simple elimination. The editor's work is not gone - it has been restructured into a review function. The translator's expertise is not obsolete - it is being used to improve a system that is not yet reliable enough to replace him. The physician's clinical judgment cannot be replicated - but his administrative burden might be absorbed. Each story is a data point in the same transition this newsletter tracks daily: the compression of routine cognitive work and the elevation of judgment, presence, and contextual understanding as the capabilities that remain distinctly human. The transition is painful, and the workers living through it are documenting that pain in real time.

The Human Voice

Today's newsletter tracks the compression of drug discovery timelines, the formation of new cooperative structures among competitors, the institutional scramble to govern intelligence systems whose nature remains uncertain, and the simultaneous rebuilding of the grid from the top down and the bottom up. Each of these stories describes a system built for scarcity encountering the early conditions of abundance - and straining under the mismatch. Salim Ismail articulates this pattern with unusual clarity. In this long-form conversation, the entrepreneur and author argues that AI, solar, and biotech are driving the marginal cost of intelligence, energy, and healthcare toward zero, and that the real crisis is not technological unemployment but institutional maladaptation. Governments, legacy firms, and education systems behave like immune systems attacking the new, even as deflationary technology makes abundance technically feasible for billions. His framing connects directly to the pharmaceutical companies pooling data they once guarded, the UN assembling governance architecture for a technology evolving faster than any committee can meet, and the workers training the systems that will transform their roles.

Watch: Salim Ismail - The Deflationary Singularity: When Scarcity Economics Breaks

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: an AI-designed oral drug reaching Phase III trials in 4.5 years, five competing pharmaceutical companies pooling the proprietary data they once guarded to attack the 40-45% of clinical failures attributed to poor ADMET predictions, the United Nations assembling a 40-member scientific panel to build the evidentiary foundation for governing intelligence systems, a $26.5 billion loan rebuilding the centralized grid at a scale the agency has never attempted while legislators in 27 states move to let anyone with a wall outlet become a solar generator, and a leading AI company publicly declining to rule out that its flagship model is a moral patient. There's also friction, and it's intense - workers are training the systems that will transform their roles and documenting the psychological cost in real time, the largest loan in DOE history embeds more than 5 GW of new gas alongside its clean resources, the UN panel is modeled on an institution that took decades to shape climate policy while the technology it studies evolves in months, and the question of machine consciousness has arrived before any governance framework exists to address it. But friction generates pressure, and pressure reveals the grain of what it bears down on. Step back for a moment and you can see it: drug discovery compressing from decades into years as AI closes the loop between molecular design and clinical proof, competitors discovering that shared intelligence creates more value than hoarded data, the grid rebuilding itself simultaneously through billion-dollar federal loans and thousand-dollar plug-in panels available to renters, and institutions at every scale - corporate, national, multilateral - beginning to reorganize around the reality that the intelligence era has terms they did not set and cannot ignore. Every transformation has a breaking point. A tide can submerge what it reaches... 
or lift everything resting on the surface to a level it could never have found on its own.

Sources

Scientific & Medical Research

- BioSpace: MindRank First Patient Dosed in Phase III Trial of AI-Designed Oral GLP-1 Receptor Agonist

- GEN: ADMET Predictions Get AI Boost, Federated Data Network Unites Pharma

- Nature: Health Effects Linger 20 Generations After Rats Are Exposed to Fungicide

- ScienceDaily: Microplastics Found in 90% of Prostate Cancer Tumors (NYU Langone)

- ScienceDaily: Shingles Vaccine May Slow Biological Aging and Reduce Inflammation (USC)

- ScienceDaily: Just Two Days of Oatmeal Cut Bad Cholesterol by 10% (University of Bonn, Nature Communications)

- ScienceDaily: 40,000-Year-Old Signs Show Humans Were Recording Information Long Before Writing (Saarland University, PNAS)

AI Governance & Ontology

- Nature: AI Impacts to Be Scrutinized by UN's New Scientific Advisory Panel

- The Verge: Does Anthropic Think Claude Is Alive? Define 'Alive'

- Wired: OpenClaw Users Are Allegedly Bypassing Anti-Bot Systems

- The Guardian: Facial Recognition Error Prompts Police to Arrest Asian Man for Burglary 100 Miles Away

Energy & Infrastructure

- Utility Dive: DOE Loans Southern $26.5B for 5 GW of New Gas, Other Grid Investments

- Canary Media: Balcony Solar Is Taking State Legislatures by Storm

- Utility Dive: Constellation Energy Sees Surging Power, Capacity Prices in 2025

- Utility Dive: Massachusetts' Least-Cost 2050 Peak Power Mix Is Combustion-Free

- Utility Dive: Solar and Wind PPA Prices Up 9% in 2025, Set to Continue Rising

- Canary Media: Illinois Cities Move to Cut Ties with a Massive Coal Plant

Labor & Transition

AI & Technology

- TechCrunch: Alphabet-Owned Robotics Software Company Intrinsic Joins Google

- Ars Technica: Musk Has No Proof OpenAI Stole xAI Trade Secrets, Judge Rules

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.