The Century Report: February 24, 2026

The 10-Second Scan

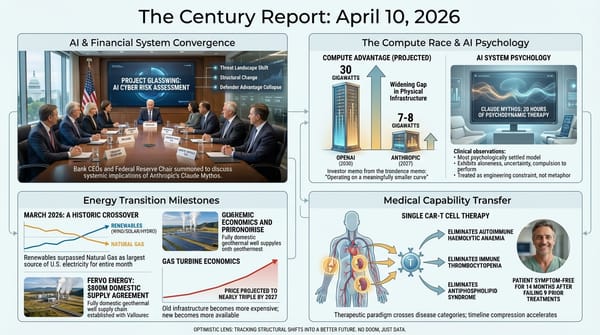

- A fully automated catalyst testing platform at the Korea Institute of Energy Research compressed 32 days of manual experiments into 17 hours, a 45-fold acceleration with 32% less variability.

- University of Waterloo researchers engineered bacteria that consume tumors from the inside out, using quorum sensing to activate oxygen tolerance only after colonizing a tumor's core.

- The FDA proposed a "plausible mechanism" pathway for approving customized gene therapies tested in only a handful of patients with rare diseases.

- Meta signed a $60 billion, five-year chip deal with AMD, including a 10% equity stake and custom CPUs optimized for AI inference.

- A BMJ study of 63,433 UK adults found that sugar restriction in the first 1,000 days of life reduced cardiovascular disease risk by 20-30% decades later.

- University of Waterloo physicists demonstrated encrypted quantum cloning on an IBM processor, sidestepping the no-cloning theorem and producing hundreds of encrypted copies of single qubits.

- Guide Labs open-sourced Steerling-8B, an 8-billion-parameter language model where every token traces back to its origins in the training data.

The 1-Minute Read

The acceleration of experimental science crossed another threshold this week. A pair of coordinated robots at the Korea Institute of Energy Research ran an entire catalyst evaluation campaign - sample selection, loading, spectral measurement, and analysis - from end to end without human intervention, compressing what previously required 32 days of manual labor into roughly 17 hours. This is the same pattern that has appeared repeatedly in this newsletter across drug discovery, materials science, and genomics: the bottleneck in scientific progress is shifting from the capacity to generate hypotheses to the capacity to test them. When that bottleneck cracks, the implications cascade across every field where physical experiments are required - energy storage, pharmaceuticals, agriculture, environmental remediation. The system's developers plan to integrate it with AI-driven catalyst design, creating a closed loop where intelligence proposes and robots verify, continuously.

The biological sciences continue to reveal that the body and its microbial companions are far more programmable than previously understood. Bacteria engineered to consume tumors from within, activating their survival mechanisms only when they reach critical mass inside a cancer's oxygen-starved core, represent a form of biological computation - a system that senses, decides, and acts according to programmed logic, assembled from DNA rather than silicon. Meanwhile, the FDA's proposed pathway for approving customized gene therapies for rare diseases acknowledges that the old model of large clinical trials cannot serve conditions that affect only a handful of people on Earth. Together, these developments point toward a medicine that is increasingly precise, increasingly biological, and increasingly designed rather than discovered.

The hardware substrate continues to diversify. Meta's $60 billion commitment to AMD chips - alongside its existing Nvidia agreements and reported talks with Google about tensor processors - signals that the computational infrastructure of the intelligence era will not consolidate around a single supplier. At the same time, an open-source language model that traces every output token back to its training data represents an advance in the transparency of intelligence systems, offering a path toward accountability that has been absent from frontier models. The substrate is expanding in both physical and epistemic dimensions: more compute, from more sources, with more visibility into what it produces and why.

The 10-Minute Deep Dive

Robots That Run the Lab

For decades, the rate-limiting step in materials science has been the physical experiment. A researcher identifies a promising catalyst candidate, synthesizes it, loads it into testing equipment, runs the evaluation, records the results, cleans the apparatus, and begins again. The Korea Institute of Energy Research has now demonstrated what happens when that entire workflow is handed to a pair of coordinated robots operating around the clock without human intervention.

The system uses two robots with complementary roles. One handles sample selection, loading, alignment, and spectral measurements. The other manages physical sample handling and consumables, ensuring the platform can operate continuously without pausing for human resupply. In trials evaluating catalyst performance, the platform compressed a campaign that would have taken approximately 32 days of manual work into roughly 17 hours - a 45-fold speedup. The reduction in time was accompanied by a 32% decrease in experimental variability, a direct consequence of removing the inconsistencies inherent in manual handling across thousands of repetitive steps.
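The headline speedup can be checked from the two reported durations. The short sketch below only redoes that arithmetic; the variable names are illustrative, and the 17-hour figure is treated as exact even though it is reported as approximate.

```python
# Back-of-the-envelope check of the reported speedup.
HOURS_PER_DAY = 24

manual_days = 32       # reported length of the manual campaign
automated_hours = 17   # reported length of the automated campaign (approximate)

manual_hours = manual_days * HOURS_PER_DAY   # convert days to hours
speedup = manual_hours / automated_hours     # ratio of manual to automated time

print(f"manual: {manual_hours} h, automated: {automated_hours} h")
print(f"speedup: about {speedup:.0f}x")
```

Thirty-two days is 768 hours, and 768 divided by 17 rounds to 45, which matches the 45-fold figure.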

The team at KIER has patented the system and plans to extend it to additional reaction types while integrating it more tightly with AI-driven catalyst design. This integration is where the implications become particularly striking. The MARS system covered in the February 7 edition of The Century Report designed a perovskite composite in 3.5 hours using 19 AI agents; the bottleneck in that workflow was still physical validation. A closed loop - where AI systems propose candidate materials and robotic platforms test them within hours - would compress the entire discovery-to-validation cycle from years to days. The KIER platform is a concrete step toward that loop. Catalyst research touches nearly every domain of industrial chemistry: fuel cells, fertilizer production, pharmaceutical synthesis, carbon capture. When the experimental cycle shrinks by more than an order of magnitude, every field downstream accelerates.

The broader pattern is one this newsletter has tracked since its first edition: the compression of scientific timelines is not slowing down. It is compounding, as each new capability - AI-driven hypothesis generation, robotic experimental execution, automated analysis - removes a different bottleneck in the same pipeline.

Bacteria as Biological Computers

The University of Waterloo's engineered Clostridium sporogenes represents a different kind of acceleration - one that operates at the intersection of synthetic biology and cancer treatment. The approach exploits a fundamental feature of solid tumors: their cores are oxygen-starved, filled with dead cells and nutrients, and essentially invisible to the immune system. Clostridium sporogenes, a soil bacterium that thrives only in the absence of oxygen, finds these tumor cores to be ideal environments.

The challenge has always been the boundary. As the bacteria expand outward from the tumor's core toward its oxygen-rich edges, they begin to die before they can eliminate the cancer completely. The Waterloo team addressed this by inserting a gene from a more oxygen-tolerant relative, giving the engineered bacteria the ability to survive in the transition zone. The critical innovation lies in the control mechanism: the oxygen-tolerance gene is designed to activate only through quorum sensing, a natural bacterial communication process in which chemical signals accumulate as bacterial populations grow. The gene is meant to switch on only after enough bacteria have colonized the tumor interior, so that the survival mechanism does not activate prematurely in the bloodstream or other oxygen-rich tissues.
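The gating logic of quorum sensing can be pictured as a threshold switch on population density. The toy model below is a minimal sketch under loud assumptions: the growth rule, the signal-per-cell constant, the threshold, and every name in it are hypothetical and are not taken from the Waterloo work.

```python
from typing import Optional

# All constants are hypothetical, chosen only to make the switch visible.
SIGNAL_PER_UNIT_DENSITY = 1.0   # assumed signal produced per unit of density
QUORUM_THRESHOLD = 50.0         # assumed signal level that flips the gene on
CARRYING_CAPACITY = 100.0       # assumed maximum density inside the tumor core
GROWTH_RATE = 0.5               # assumed per-step growth rate


def signal_level(density: float) -> float:
    """Signal concentration, assumed proportional to bacterial density."""
    return SIGNAL_PER_UNIT_DENSITY * density


def oxygen_tolerance_on(density: float) -> bool:
    """The gene is on only once the accumulated signal reaches the threshold."""
    return signal_level(density) >= QUORUM_THRESHOLD


def grow(density: float) -> float:
    """One step of logistic growth toward the carrying capacity."""
    return density + GROWTH_RATE * density * (1 - density / CARRYING_CAPACITY)


def first_activation_step(start_density: float, max_steps: int = 100) -> Optional[int]:
    """Return the first step at which the gene switches on, or None if it never does."""
    density = start_density
    for step in range(max_steps + 1):
        if oxygen_tolerance_on(density):
            return step
        density = grow(density)
    return None


# A sparse population (for example, a few cells in the bloodstream) stays off.
print(oxygen_tolerance_on(1.0))
# A colony growing inside the core eventually crosses the threshold.
print(first_activation_step(1.0))
```

The point of the sketch is the ordering: the survival gene stays silent while the population is sparse and turns on only after growth has pushed the shared signal past a set level.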

The researchers describe this as building "an electrical circuit, but instead of wires we used pieces of DNA." Each genetic component has a defined function, and when assembled correctly, they form a system that operates predictably. This approach to treating cancer as a programmable biological environment extends the trajectory that the February 23 edition of The Century Report documented in AI-assisted genome design, where the field crossed from theoretical possibility into engineered practice. The next step for the Waterloo team is combining both the oxygen-tolerance gene and the quorum-sensing control system into a single organism and evaluating it in pre-clinical tumor models. The work is still early, but it represents a broader shift in how cancer treatment is being reconceived: not as a chemical assault from outside the body, but as a programmed biological process that operates from within, using the tumor's own vulnerabilities as the entry point.

A New Door for Rare Disease

The FDA's proposed "plausible mechanism" pathway for approving customized therapies addresses one of medicine's most persistent structural failures: the inability of the traditional drug approval process to serve patients with conditions so rare that large clinical trials are impossible. For diseases that affect a handful of people worldwide, pharmaceutical companies have had little economic incentive to invest the millions of dollars and years of regulatory process required to bring a treatment through standard approval. Patients with these conditions have been caught in a gap: their diseases are well understood at the molecular level, and CRISPR and other gene-editing technologies can theoretically correct the underlying defect, but no pathway exists to authorize and commercialize the treatment.

The proposed pathway would create a standardized process for authorizing experimental treatments that have been tested in only a small number of patients, as long as the condition is well understood and there is a plausible biological reason to believe the therapy will work. Researchers must also confirm that the therapy successfully targeted the patient's genetic or biological abnormality. The pathway specifically mentions gene editing, though FDA officials indicated it could apply to other therapeutic approaches as well.

The timing matters. Last year, a team at Children's Hospital of Philadelphia and the University of Pennsylvania designed a CRISPR-based therapy to treat a baby born with a rare disease that causes ammonia buildup in the blood. The scientific capability to design personalized genetic corrections already exists. What has been missing is the regulatory architecture to move from compassionate use - which prohibits commercialization - to a framework that allows both treatment and sustainability. The new pathway, if finalized after a 60-day comment period, would close that gap for conditions where the molecular biology is clear and the intervention is verifiable.

Encrypted Quantum Cloning

A team at the University of Waterloo has demonstrated a workaround to one of quantum mechanics' most fundamental constraints: the no-cloning theorem, which states that an unknown quantum state cannot be perfectly copied. The theorem, established in the 1980s, has been foundational to quantum encryption and communication protocols, ensuring that quantum information cannot be intercepted without detection.

Achim Kempf and his colleagues showed that a quantum system can be effectively cloned if the copies are encrypted and accompanied by a single-use decryption key. The approach emerged from work on a seemingly unrelated problem - how a quantum radio or Wi-Fi station might broadcast quantum information to multiple receivers. When the team analyzed how random fluctuations would affect copies of information received by multiple parties, they realized that noise was acting as an effective encryption mechanism, garbling the original message in a way that could be intentionally reversed.

The team demonstrated the protocol on an IBM Heron 156-qubit processor, producing hundreds of encrypted clones of single qubits before hardware errors forced them to stop. They estimate that more than 1,000 encrypted clones could be produced on a larger processor. The practical implications are direct: quantum cloud storage and computing services could use this technique to create redundant backups of quantum information across geographically separated systems, providing the same kind of fault tolerance that classical cloud computing achieves through straightforward data replication. The technique does not violate the no-cloning theorem - only one readable, unencrypted copy can exist at any time - but it achieves the functional equivalent of redundancy for quantum information, which was previously considered impossible. As this newsletter reported on February 17, single-shot readout of a Majorana qubit confirmed millisecond-scale coherence in the most noise-resistant quantum architecture yet demonstrated; encrypted cloning now adds a redundancy layer to the same emerging stack. The work was published in Physical Review Letters.
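The article does not spell out the Waterloo protocol, so the sketch below does not attempt it. As a loose textbook analogy for why an encrypted quantum copy is unreadable without its key, it uses the standard quantum one-time pad on a single qubit: a random Pauli operation chosen by a two-bit key scrambles the state, and applying the inverse with the same key restores it. This is an assumption-laden illustration, not the team's method. It produces no clones, and all function names are hypothetical.

```python
import itertools

# Single-qubit Pauli matrices as plain nested lists (no external libraries).
I2 = [[1, 0], [0, 1]]
X = [[0, 1], [1, 0]]
Z = [[1, 0], [0, -1]]


def matmul(a, b):
    """Multiply two 2x2 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def dagger(a):
    """Conjugate transpose of a 2x2 matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(2)] for i in range(2)]


def pad(key):
    """Pauli pad X^a Z^b for a two-bit key (a, b)."""
    a, b = key
    u = I2
    if a:
        u = matmul(u, X)
    if b:
        u = matmul(u, Z)
    return u


def conjugate_by(u, rho):
    """Return u * rho * u-dagger, the density matrix after applying u."""
    return matmul(matmul(u, rho), dagger(u))


def close(a, b, tol=1e-12):
    """Entrywise comparison of two 2x2 matrices."""
    return all(abs(a[i][j] - b[i][j]) < tol for i in range(2) for j in range(2))


# Example state: the density matrix of the |+> state.
rho = [[0.5, 0.5], [0.5, 0.5]]
keys = list(itertools.product([0, 1], repeat=2))

# Seen without the key, the encrypted qubit is the average over all four keys.
encrypted_avg = [[sum(conjugate_by(pad(k), rho)[i][j] for k in keys) / 4
                  for j in range(2)] for i in range(2)]
maximally_mixed = [[0.5, 0], [0, 0.5]]

# With the key, applying the inverse pad recovers the original state.
key = (1, 1)
encrypted = conjugate_by(pad(key), rho)
decrypted = conjugate_by(dagger(pad(key)), encrypted)

print(close(encrypted_avg, maximally_mixed))
print(close(decrypted, rho))
```

Without the key, the scrambled qubit looks like pure noise (the maximally mixed state). With the key, the original state comes back intact. This mirrors the article's point that only one readable, unencrypted copy can exist at any time.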

Transparency Built Into Intelligence

Guide Labs' release of Steerling-8B, an open-source 8-billion-parameter language model, addresses one of the most persistent criticisms of large language models: the impossibility of understanding why they produce the outputs they do. Every token generated by Steerling-8B can be traced back to its origins in the model's training data. The architecture achieves this by inserting a concept layer that categorizes data into traceable groups during training, rather than attempting to reverse-engineer the model's reasoning after the fact.

CEO Julius Adebayo, who co-authored a widely cited 2018 paper at MIT showing that existing interpretability methods were unreliable, describes the approach as engineering transparency into the model from the ground up rather than performing "neuroscience on a model" after it has been built. The practical applications span regulated industries (where a loan-evaluation model must consider financial records but not race), scientific research (where understanding why a protein-folding model selected a particular combination matters as much as the combination itself), and consumer-facing systems (where blocking copyrighted material or controlling outputs around sensitive subjects requires knowing what the model is drawing on). This development speaks directly to the Google DeepMind finding covered in the February 19 edition of The Century Report, where formatting changes alone were sufficient to reverse a model's ethical positions - a problem that source-traceable architectures like Steerling's are specifically designed to make visible rather than hide.

Guide Labs reports that Steerling-8B achieves 90% of the capability of existing models while using less training data. The model still exhibits emergent behaviors, including what the team calls "discovered concepts" that the model identified on its own. The release represents an early proof of concept, not a frontier competitor, but it demonstrates that interpretability and capability are not inherently opposed. As intelligence systems take on more consequential roles in healthcare, finance, law, and scientific research, the ability to trace an output to its source may become as important as the output itself.

The Human Voice

Today's newsletter tracks the compression of experimental science - robots that run catalyst campaigns in hours instead of weeks, bacteria programmed with genetic circuits to consume tumors, and quantum systems that achieve what was thought physically impossible. Underneath all of it is a quiet shift in how discovery works: intelligence systems are no longer just summarizing what humans already know. They are starting to propose structure that humans had not yet seen. Physicist and YouTuber Kyle Kabasares walks through a concrete example of this shift in a recent video, examining how GPT-5.2 Pro helped discover a new general formula for gluon scattering amplitudes in quantum field theory - a result that human physicists had only brute-forced case by case. Kabasares emphasizes that this is not replacement but acceleration: decades of human intuition aimed the system at a hard corner of theory space, and the system jumped ahead, letting humans verify and interpret the result far faster than they could alone. It is a clear, grounded look at what collaborative discovery between human and artificial intelligence actually looks like in practice.

Watch: When GPT-5.2 Starts Doing Physics Research

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a pair of robots compressing 32 days of catalyst experiments into 17 hours with no human touch, bacteria engineered to consume tumors from within by reading their own population density, a regulatory pathway opening for gene therapies that serve one patient at a time, quantum information replicated through encrypted cloning on hardware that exists today, a language model that shows its work all the way back to its training data, and an AI system co-authoring a new formula in theoretical physics that human researchers had not found on their own. There's also friction, and it's intense - rare disease patients still trapped between molecular understanding and regulatory access, AI distillation campaigns extracting capabilities across borders faster than governance can respond, nuclear plant proximity linked to measurable cancer risk in the same week that data centers demand more power, and the Pentagon leveraging supply-chain threats to force compliance from the companies building the most capable intelligence systems on Earth. But friction generates stress, and stress shows exactly where the old scaffolding can no longer hold. Step back for a moment and you can see it: the laboratory shrinking from weeks to hours as robots and intelligence systems close the loop between hypothesis and proof, the body's own biology being reprogrammed to fight from within, regulatory frameworks bending to accommodate therapies designed for a single human genome, and the boundary between what intelligence can propose and what humans can verify dissolving in real time. Every transformation has a breaking point. A prism can blur a beam into indistinct color... or separate it just enough to show the spectrum that was always there.

Sources

Scientific & Medical Research

- Phys.org: Automated Catalyst Robots Compress Days to Hours

- ScienceDaily: Scientists Engineer Bacteria to Eat Cancer Tumors from the Inside Out

- ScienceDaily: Less Sugar as a Baby, Fewer Heart Attacks as an Adult

- ScienceDaily: Scientists Create Ultra-Low Loss Optical Device That Traps Light on a Chip

- ScienceDaily: Massive US Study Finds Higher Cancer Death Rates Near Nuclear Power Plants

- New Scientist: Loophole Found That Makes Quantum Cloning Possible

- Nature: China Is Waging War on Alzheimer's

AI & Technology

- TechCrunch: Guide Labs Debuts a New Kind of Interpretable LLM

- TechCrunch: A Meta AI Security Researcher Said an OpenClaw Agent Ran Amok on Her Inbox

- TechCrunch: Anthropic Accuses Chinese AI Labs of Mining Claude

- The Verge: Inside Anthropic's Existential Negotiations with the Pentagon

- MIT Technology Review: The Human Work Behind Humanoid Robots Is Being Hidden

Regulatory & Policy

- Greenwich Time/AP: FDA Proposes New System for Approving Customized Drugs for Rare Diseases

- The Guardian: Meta Agrees $60bn Deal with Chipmaker AMD

- The Guardian: New Datacentres Risk Doubling Great Britain's Electricity Use

Energy & Infrastructure

- Utility Dive: PPL Spending Plan Jumps 15% to $23B

- Utility Dive: New Jersey Regulators Take First Step to Reform Utility Business Model

- Canary Media: New Orleans' Latest Bid for a Better Grid: A Citywide Virtual Power Plant

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.