The Century Report: March 8, 2026

The 20-Second Scan

- Researchers developed a machine learning pipeline that identifies a spectroscopic signal linked to liquid-like ion flow inside solid-state battery crystals, opening a new route for high-throughput discovery of superionic materials.

- OpenAI's robotics lead Caitlin Kalinowski resigned over the company's Pentagon agreement, citing concerns about surveillance without judicial oversight and lethal autonomy without human authorization.

- Block employees pushed back against CEO Jack Dorsey's claim that AI justified cutting 4,000 jobs, with one current employee estimating that about 95% of AI-generated code changes still require human revision.

- A Northwestern University study found that the output of organized scientific fraud networks is now growing faster than the publication of legitimate studies.

- China's commerce ministry warned of a new global semiconductor supply chain crisis as internal conflict between Dutch chipmaker Nexperia's headquarters and its Chinese subsidiary escalated.

- Hebrew University researchers identified a molecular chain reaction involving nitric oxide and the mTOR pathway that may drive some forms of autism, with pharmacological intervention restoring normal cellular activity in experiments.

The 2-Minute Read

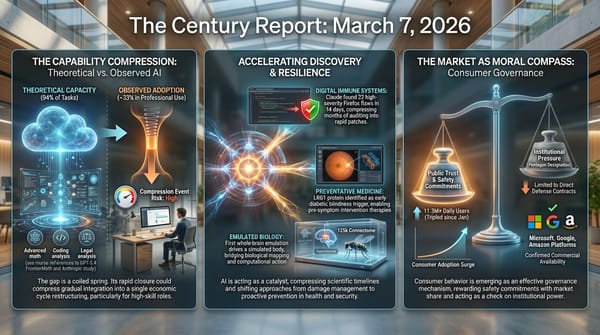

The internal fracture lines within AI organizations are widening into publicly visible structural breaks. A senior hardware executive walking away from OpenAI over its Pentagon agreement is a qualitatively different signal from consumer uninstalls or social media criticism. When the person building the physical embodiment of an AI company's future decides the governance of that future is being determined too hastily, it surfaces a tension that contract amendments and public statements cannot resolve: consequential AI deployments are being negotiated faster than the deliberation they require. The resignation joins a growing pattern in which the people closest to the technology raise the sharpest objections to how it is being governed.

The gap between what AI can theoretically accomplish and what it is actually delivering in professional settings continues to generate friction that is itself informative. Block's remaining employees describe a company where, by one employee's estimate, about 95% of AI-generated code changes still need human correction, where the craft of software development has been redefined but not replaced, and where a CEO's narrative about AI productivity may be serving investor confidence more than operational reality. Read alongside Anthropic's recent labor study showing a threefold gap between theoretical capability and actual adoption, the Block story adds granularity: the adoption gap is not abstract. It lives in the daily experience of workers whose roles are being compressed around them while the compression is declared complete by executives speaking to markets.

Beneath the governance and labor stories, computational perception continues to reveal physics that was previously invisible. A new machine learning pipeline identifies spectroscopic signatures of liquid-like ion motion inside solid battery crystals, a signal that conventional computational methods could not simulate at practical cost. Meanwhile, the integrity of the scientific enterprise itself is under pressure, with the output of organized fraud networks now growing faster than legitimate publication. These developments share a common thread: the systems that produce knowledge are being simultaneously accelerated and corrupted, and the capacity to distinguish signal from noise is becoming one of the defining challenges of this transition.

The 20-Minute Deep Dive

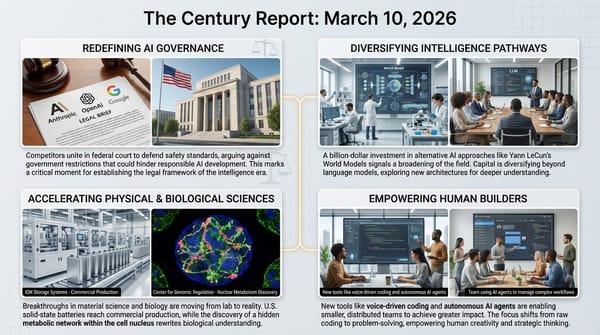

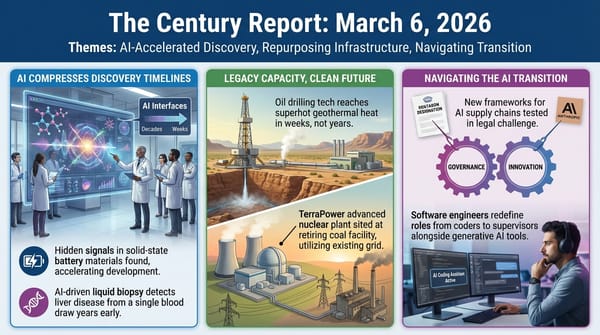

The First Resignation Over Governance Speed

Caitlin Kalinowski's departure from OpenAI is structurally distinct from previous high-profile exits in the AI industry. She did not leave over a safety disagreement about model capabilities, a dispute about corporate structure, or frustration with technical direction. She left because she believed the Pentagon agreement was "rushed without the guardrails defined," and that the questions of domestic surveillance and lethal autonomy "deserved more deliberation than they got."

The distinction between opposing a deal's existence and opposing its speed is significant. Kalinowski explicitly stated that AI has an important role in national security. Her objection was procedural and institutional: that decisions of this magnitude require governance architecture that does not yet exist, and that announcing commitments before defining constraints inverts the order of operations. In a follow-up post, she clarified that her concern was "a governance concern first and foremost."

This frames the AI-military governance arc The Century Report has tracked since February in a new light. The question is no longer simply whether AI companies will work with militaries, or what red lines they will maintain. As The Century Report covered on March 6, the Pentagon formally designated Anthropic a supply-chain risk and Anthropic announced a federal court challenge, establishing the first public legal test of where those governance lines actually sit. A third question has now emerged from Kalinowski's departure: at what pace can these arrangements be responsibly negotiated, and who gets to determine that pace? When the person responsible for building a company's physical robotics program concludes that the answer is "too fast," the signal carries weight that market metrics and consumer protests do not.

The broader fallout continues to build. Business Insider reports ongoing internal criticism from OpenAI employees, with research scientists publicly questioning the deal's value and technical staff advocating for greater transparency. Claude and ChatGPT remain the top two free apps in the U.S. App Store, with Claude holding the number one position for over a week. The consumer market continues to function as a governance mechanism, but Kalinowski's resignation suggests that the internal labor market within AI organizations may prove equally consequential.

When Workers Tell the Truth About AI Productivity

The Century Report covered Anthropic's labor study on March 7, documenting a threefold gap between AI's theoretical capability envelope and its observed adoption in professional settings - and naming the "Great Recession for white-collar workers" scenario as structurally plausible. The Guardian's reporting on Block's layoffs provides the view from inside that gap.

Seven current and recently laid-off Block employees described a company where AI has genuinely accelerated engineering workflows. Block executives cited a 40% increase in production code shipped per engineer since September. The company is not fabricating its productivity gains. What the employees contest is the leap from "AI makes engineers faster" to "AI can replace half the company." One current employee whose role involves helping others use AI estimated that approximately 95% of AI-generated code changes still require human modification before meeting company standards. All code changes require human approval before deployment. The technology is producing first drafts, not finished work.

Several employees described a dynamic that echoes The Century Report's coverage of workers training their own replacements on February 26. Block employees were required to use AI with increasing intensity over the past nine months, contributing data about which tasks could be automated. One laid-off worker characterized this as "a thinly veiled attempt to get all this input from employees on what tasks to automate." The productivity monitoring extended to individual token usage. Workers were simultaneously required to adopt AI and measured on how thoroughly they did so, generating the very data used to justify their elimination.

Jack Dorsey told Wired that the sophistication of AI shifted meaningfully in December, citing specific models. His stated goal was for "the company itself to feel like a mini AGI." George, a current employee, offered a different interpretation: "This was posturing for the market." Block's stock rose 24% after the layoff announcement. The divergence between the internal reality described by workers and the external narrative presented to investors captures a pattern that is likely playing out across the economy. Companies are discovering that AI productivity claims function simultaneously as operational tools and market signals, and the incentives for each do not always align.

The deeper question extends beyond Block. If the Anthropic study's finding holds - that the most AI-exposed workers are older, highly educated, and well-compensated - then the displacement pattern will concentrate in exactly the professional class that has historically absorbed technological transitions most comfortably. The frameworks for navigating that displacement do not yet exist, and the Block story suggests they will need to account not just for job loss but for the psychological complexity of workers who remain employed while watching their craft be redefined around them.

Computational Perception Finds New Physics in Batteries

Researchers published a machine learning pipeline in AI for Science that predicts Raman spectra for solid-state battery materials and identifies a distinctive low-frequency signal linked to liquid-like ion motion inside crystals. The finding represents a second, complementary advance in AI-accelerated battery materials discovery, building directly on the solid-state battery signal discovery that The Century Report covered on March 6, when AI identified previously undetectable electrochemical signals at the electrode interface that human researchers had overlooked across decades of experimentation.

Where that earlier finding concerned the interface between solid electrolyte and electrode, this new work operates at a different scale: it reveals how ions move through the bulk material itself. When ions travel through a crystal lattice in a fluid-like manner, their motion temporarily disrupts the lattice symmetry, producing Raman scattering signatures that conventional computational techniques could not simulate without prohibitive computing costs. The machine learning approach achieves near-ab initio accuracy at a fraction of the computational expense, making high-throughput screening of candidate materials practical for the first time.

The practical implications are direct. Solid-state batteries promise higher energy density, faster charging, and greater safety than current lithium-ion technology, but identifying materials with sufficient ionic conductivity has been a persistent bottleneck. The new pipeline can distinguish between materials where ions hop between fixed positions and materials where ions flow in a liquid-like manner, providing a reliable indicator before any physical synthesis is required. Applied to sodium-ion conducting materials, it successfully identified known fast-ion conductors and explained previously puzzling experimental observations.

This result sits within the battery acceleration arc The Century Report has documented throughout its run: the Nankai University fluorine battery achieving 700+ Wh/kg on March 1, the 29% surge in U.S. battery deployments on March 4, the electrochemical interface signal on March 6, and now a complementary tool for screening bulk ion transport. Each advance compresses a different segment of the discovery-to-deployment pipeline. The AI-enabled perception of battery physics is not a single breakthrough but an accumulating capability that makes the entire field move faster with each new method published.

The Integrity of Knowledge Under Pressure

A Northwestern University study published in PNAS documents that organized scientific fraud has evolved from isolated misconduct into a global enterprise operating at industrial scale. Paper mills produce fabricated manuscripts and sell authorship slots for hundreds or thousands of dollars. Brokers arrange sham peer review. Compromised journals provide publication venues. The researchers found that the number of fraudulent studies is now growing faster than the number of legitimate scientific publications.

The study analyzed massive datasets from Web of Science, Scopus, PubMed, and OpenAlex, supplemented by retraction records, editorial metadata, and image duplication databases. The networks they uncovered are "essentially criminal organizations," according to the study's senior author. The business model is comprehensive: researchers can purchase papers, citations, authorship positions, and guaranteed acceptance through corrupted editorial processes.

This finding intersects with the transition in two ways. First, as AI accelerates the pace of legitimate scientific discovery - compressing research timelines from years to months across domains The Century Report tracks daily - the same acceleration is available to fraudulent actors. The paper mill model scales precisely because it can leverage automated content generation and exploit the volume pressure that journals face. Second, the integrity of the scientific literature is the foundation on which AI systems are trained, clinical decisions are made, and policy is set. If the literature becomes "completely poisoned," as the researchers warn, the cascading consequences reach every domain where evidence-based reasoning operates.

The Northwestern team launched an automated detection system that scans published materials science papers for misidentified instruments, a telltale sign of fabricated research. Computational methods are being deployed to defend the same knowledge base that computational methods are helping to build. The arms race between legitimate and fraudulent science is itself a feature of this transition: as the capability to generate knowledge accelerates, so does the capability to generate plausible imitations of knowledge, and the frameworks for distinguishing between them must evolve at the same pace.

Semiconductor Supply Chains Fragment Along Geopolitical Lines

China's commerce ministry warned Saturday of a potential new global semiconductor supply chain crisis as the conflict between Dutch chipmaker Nexperia's headquarters and its Chinese subsidiary intensified. The Chinese subsidiary accused the Dutch headquarters of disabling office accounts for all employees in China. The Dutch entity disputed that production was affected but did not deny the IT action. Each side has accused the other of bad-faith negotiation since the Dutch government invoked Cold War-era authority to seize control of the company from its Chinese parent Wingtech last year.

The Nexperia situation illustrates a pattern that extends well beyond a single company. The chips at stake are not frontier AI processors but basic automotive semiconductors, the kind of component whose absence shut down car production lines globally during the COVID-era shortage. A previous escalation in October 2025 disrupted automotive production when China imposed export controls on Nexperia's Chinese-made chips in response to the Dutch seizure. Diplomatic negotiations eased that crisis, but the underlying corporate fracture has only deepened.

The structural lesson is that semiconductor supply chains are now contested territory at every level of sophistication, from cutting-edge AI accelerators subject to export controls to commodity automotive chips caught in corporate governance disputes with geopolitical dimensions. The Nexperia conflict exists because a Dutch company with deep European heritage is owned by a Chinese firm that is 30% backed by Chinese government interests, creating a single entity that spans both sides of a geopolitical fault line. As hardware sovereignty competition intensifies - a dynamic The Century Report has tracked across the AI chip ecosystem, India's semiconductor buildout, and proposed U.S. export controls - the fragility extends to the most mundane components in the most established industries.

The Human Voice

Today's signal - senior technologists resigning over governance speed, workers contesting the narrative that AI has already replaced them, and a widening gap between what AI can theoretically do and what organizations are actually prepared to absorb - finds sharp articulation in a Davos-recorded conversation between Stanford lecturer Kian Katanforoosh and Marina Mogilko. Katanforoosh has assessed over a million people's AI capabilities through his company Workera, and his core finding is that 71% either dramatically overestimate or underestimate their skills. The real divide opening in 2026, he argues, is between shallow adopters who occasionally prompt a chatbot and a small minority building genuine proficiency by chaining prompts, wiring agents into workflows, and redesigning their daily work. His framework for career resilience - learning velocity, honest self-assessment, and treating AI as embedded infrastructure rather than an occasional novelty - speaks directly to the adoption gap that today's stories keep surfacing from different angles.

Watch: 71% of Us Are Wrong About Our AI Skills

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a machine learning pipeline detecting the spectroscopic fingerprint of liquid-like ion flow inside solid battery crystals that conventional computation could not simulate at any practical cost, a molecular chain reaction identified in children's brains that may finally expose one mechanistic pathway driving autism, and the person responsible for building OpenAI's physical robotics program walking away because she concluded that the governance of its military deployment was being determined faster than the consequences could be understood. There's also friction, and it's intense - workers inside a company that just eliminated half its workforce reporting that 95% of AI-generated code still requires human correction before it meets production standards, organized scientific fraud networks now publishing fabricated research faster than legitimate science can appear, and semiconductor supply chains fracturing along geopolitical fault lines over commodity automotive chips whose shortage once idled car production lines across three continents. But friction generates texture, and texture is what allows a hand to distinguish what it is actually holding from what it was told to expect. Step back for a moment and you can see it: the people with the deepest technical fluency drawing the sharpest procedural lines around deployment speed while consumer and internal labor markets fill governance vacuums that legislation has not yet reached, computational perception compressing the battery materials discovery pipeline from every direction simultaneously, and the knowledge systems that underpin every other advance being contested at their foundations by industrial-scale mimicry that requires computational defense. Every transformation has a breaking point. A forge can destroy what it cannot shape... or produce the precise form that no other process could have yielded.

AI Releases & Advancements

New today

No major releases today

Other recent releases

- Google: Released Gemini 3.1 Pro to general availability, a 1M-token context window model for complex reasoning tasks, accessible via the Gemini app, Vertex AI, Gemini CLI, and Gemini Enterprise, replacing the Gemini 3 Pro Preview. (Google Blog) [2026-03-06]

- OpenAI: Launched Codex Security in research preview for ChatGPT Enterprise, Business, and Edu customers - an application security agent that builds a project-specific threat model, validates vulnerabilities in sandboxed environments, and proposes context-aware patches. (OpenAI) [2026-03-06]

- Anthropic / Mozilla: Published results of a two-week Claude Opus 4.6 security engagement on Firefox, surfacing 22 vulnerabilities (14 high-severity) and more than 100 total bugs, most fixed in Firefox 148. (Anthropic) [2026-03-06]

- llama.cpp: Merged an automatic parser generator into mainline, enabling unified out-of-the-box handling of reasoning, tool-calling, and content parsing for model templates without special definitions or recompilation. (Reddit/LocalLLaMA) [2026-03-06]

- OpenAI: Launched GPT-5.4 with Pro and Thinking variants, described as its most capable model for professional and agentic work, featuring a 1M-token context window, a new Tool Search system for the API, and record scores on computer-use benchmarks. (TechCrunch) [2026-03-05]

- OpenAI: Launched ChatGPT for Excel in beta, a GPT-5.4 Thinking–powered add-in for financial modeling, data extraction, and live financial data integrations inside Microsoft Excel. (OpenAI) [2026-03-05]

- Luma: Launched Luma Agents, a platform of AI collaborators powered by its new Unified Intelligence model family, capable of executing end-to-end creative projects across text, image, video, and audio. (TechCrunch) [2026-03-05]

- Microsoft Research: Released Phi-4-reasoning-vision-15B, a 15B parameter open-weight multimodal reasoning model excelling at math, science reasoning, and UI understanding; available on Microsoft Foundry, HuggingFace, and GitHub. (Microsoft Research) [2026-03-05]

- Arc Institute / NVIDIA: Released Evo 2, an open-source DNA foundation model trained on 9.3 trillion nucleotides from 128,000+ whole genomes spanning all three domains of life, capable of gene prediction, mutation effect modeling, and genome design. (Ars Technica) [2026-03-05]

- OpenAI: Released Codex app for Windows, featuring native PowerShell sandbox support, multi-agent coordination, automations, and a Skills section for workflow integration. (Engadget) [2026-03-05]

- Cursor: Launched Automations, an always-on agentic coding system that runs agents triggered by events from Slack, Linear, GitHub, PagerDuty, schedules, or custom webhooks, without requiring a user prompt. (TechCrunch) [2026-03-05]

- Dexterity: Released Foresight, a physics-consistent world model and 4D box packing agent for physical AI-powered industrial robotics, enabling real-time spatial reasoning for autonomous truck loading. (PR Newswire) [2026-03-05]

- Google: Released Google Workspace CLI, a command-line tool bundling APIs for Gmail, Drive, Calendar, Sheets, Docs, and other Workspace products, designed for use by both humans and AI agents. (GitHub) [2026-03-05]

Sources

Artificial Intelligence & Technology's Reconstitution

- TechCrunch: OpenAI Robotics Lead Caitlin Kalinowski Quits in Response to Pentagon Deal

- Fortune: OpenAI Robotics Leader Resigns Over Concerns About Surveillance and Autonomous Weapons

- Business Insider: The Fallout Over OpenAI's Pentagon Deal Is Growing

- ScienceDaily: AI Discovers the Hidden Signal of Liquid-Like Ion Flow in Solid-State Batteries

- Defense One: Meet the Startups Trying to Build Military-Specific AI

- BleepingComputer: Microsoft Says Hackers Abusing AI at Every Stage of Cyberattacks

Institutions & Power Realignment

- TechCrunch: A Roadmap for AI, If Anyone Will Listen

- Guardian: Tech Oligarchs Reshape Humanity While Billionaires of Old Seem Quaint

- Guardian: AI Chatbots Point Vulnerable Social Media Users to Illegal Online Casinos

- ScienceDaily: Scientists Warn Fake Research Is Spreading Faster Than Real Science (Northwestern, PNAS)

Scientific & Medical Acceleration

- ScienceDaily: Scientists Discover a Brain Signal That May Trigger Autism's Domino Effect (Hebrew University, Molecular Psychiatry)

- ScienceDaily: A Perfectly Balanced Atom Just Broke One of Nuclear Physics' Biggest Rules (Institute for Basic Science)

- ScienceDaily: Mayo Clinic Discovers Rare Gene Mutation That Causes Fatty Liver Disease (Hepatology)

- ScienceDaily: Golden Retriever Genes Linked to Anxiety, Aggression, and Intelligence in Humans (Cambridge, PNAS)

Economics & Labor Transformation

- Guardian: Current and Former Block Workers Say AI Can't Do Their Jobs After Jack Dorsey's Mass Layoffs

- NBC News: At a Lobster-Themed Event for AI Enthusiasts, Exuberance with a Side of Cocktail Sauce

Infrastructure & Engineering Transitions

- Yahoo Finance: China Warns of Global Chip Shortages as Nexperia Dispute Escalates Again

- Gizmodo: A Bizarre International War Inside One Chip Company Threatens the Global Automotive Industry

- Chicago Tribune: Report - Data Centers Could Use Up 20% of Indiana's Electricity by 2030

- Electrek: Batteries Become the New Home Solar as Net Metering Evolves and Energy Prices Soar

- CleanTechnica: A Boat Made in Singapore Will Build an Offshore Wind Farm in New York

- Electrek: ROUNDUP - More Electric Equipment Options Than Ever at CONEXPO 2026

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.