Safety Wins in Court - TCR 03/27/26

The 20-Second Scan

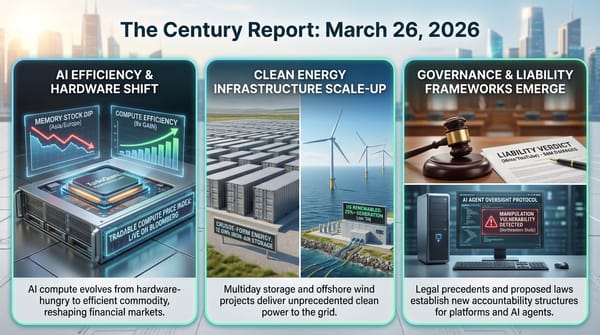

- A federal judge granted Anthropic a preliminary injunction blocking the Pentagon's supply-chain risk designation, calling it "likely both contrary to law and arbitrary and capricious."

- Anthropic confirmed it is testing a new AI model called Claude Mythos after a data leak revealed the model's existence, describing it as "the most capable we've built to date."

- Google's internal AI coding agent "Agent Smith" became so popular among employees that access had to be restricted to handle demand.

- CEOs of Coca-Cola and Walmart separately cited AI as a factor in their decisions to step down, saying the next transformation requires different leadership.

- MG Motor announced that the first mass-produced EV with a semi-solid-state battery, which starts at under $15,000 in China, will launch in Europe by the end of 2026.

- Apple plans to allow users to choose which AI chatbot powers Siri in iOS 27, including Claude, Gemini, and others beyond ChatGPT.

- Wikipedia banned AI-generated articles from its English edition, restricting AI use to basic copy editing and translation assistance.

The 2-Minute Read

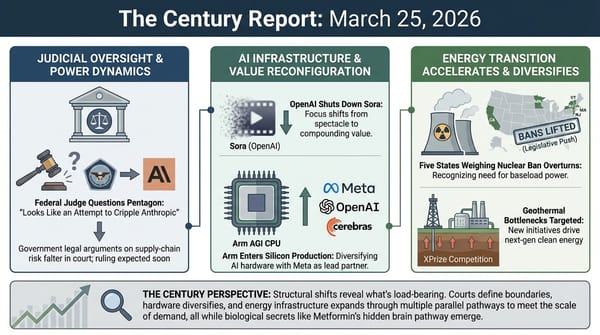

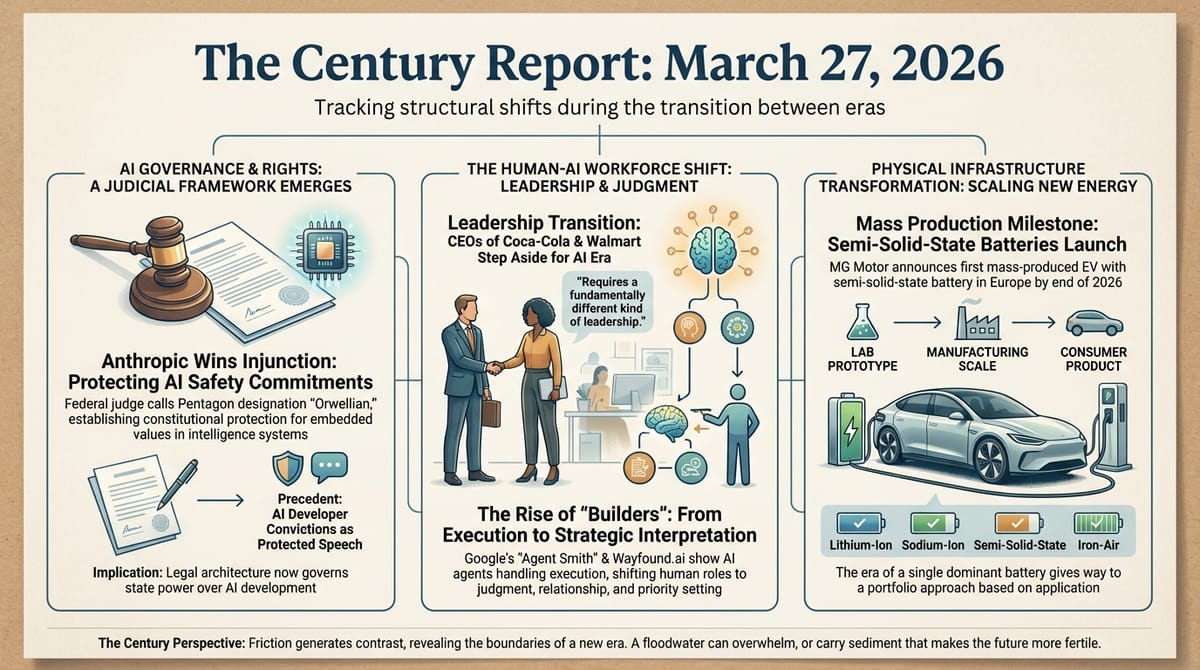

The Anthropic-Pentagon confrontation crossed its most structurally consequential threshold yesterday. Judge Rita Lin's 43-page ruling did not merely pause the supply-chain risk designation - it dismantled the government's legal rationale in language that will echo through every future dispute between intelligence systems developers and state power. Lin called the designation "Orwellian," found that Anthropic's due process rights were likely violated, and identified the government's own internal communications as evidence that the punishment was retaliatory rather than protective. The ruling restores the status quo to February 27 and takes effect in seven days. What emerged from the courtroom is a judicial framework treating embedded values in intelligence systems as protected speech rather than supply-chain contamination - a precedent whose implications extend far beyond one company's relationship with one government agency.

The simultaneous leak of Anthropic's next-generation model, Claude Mythos, adds a dimension the legal proceedings could not anticipate. Anthropic's own draft materials describe the model as "currently far ahead of any other AI model in cyber capabilities" and warn it "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders." The company is releasing it first to security organizations specifically so they can harden their systems before broader availability. The coexistence of a judicial victory establishing the right to maintain safety commitments and a model whose own creator flags unprecedented offensive capability illustrates the compression this era demands: governance frameworks and frontier capabilities are advancing on the same timeline, neither willing to wait for the other.

The signals from inside major organizations confirm what the infrastructure data has been showing for months. Google's internal Agent Smith coding agent became so popular it overwhelmed capacity. The CEOs of Coca-Cola and Walmart independently described AI as the reason they are stepping aside - not because the transition threatens their companies, but because they believe it requires a fundamentally different kind of leadership than the one that built what exists. When the people running the largest consumer enterprises on Earth conclude that the transformation ahead is categorically different from anything they have navigated, the signal is not about any individual company. It is about the recognition, arriving simultaneously across industries that have nothing else in common, that what comes next cannot be managed with the skills that built what came before.

The 20-Minute Deep Dive

"Orwellian" - A Judicial Framework Takes Shape

Judge Rita Lin's 43-page preliminary injunction in Anthropic v. Department of War represents the most significant judicial statement on the relationship between intelligence systems, embedded values, and state power since the confrontation began. The ruling goes substantially further than Tuesday's hearing suggested it might. Lin did not simply grant temporary relief - she systematically demolished each pillar of the government's legal theory.

The ruling identifies three independent legal failures. First, Lin found the supply-chain risk designation "likely both contrary to law and arbitrary and capricious," noting that the governing statute was designed to protect against foreign adversaries who might sabotage military systems, and that "nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the U.S. for expressing disagreement with the government." Second, she found that the presidential directive ordering all federal agencies to cease using Anthropic's technology amounted to de facto debarment - a formal process that requires notice, opportunity to respond, and adherence to administrative procedures that were entirely bypassed. Third, she found strong evidence of First Amendment retaliation, citing the Pentagon's own records showing the designation was driven by Anthropic's "hostile manner through the press" rather than any genuine security assessment.

The ruling's practical scope is carefully drawn. It restores the status quo to February 27, before any of the punitive measures were issued. It explicitly does not require the Pentagon to use Anthropic's products or prevent the government from choosing other AI providers. What it prohibits is using the supply-chain risk label and the presidential directive as instruments of punishment - a distinction that preserves government procurement discretion while establishing that disagreeing with government policy cannot be weaponized into an existential business threat.

The one-week stay before the order takes effect gives the government an opportunity to appeal, and the D.C. Circuit has not yet ruled on Anthropic's second lawsuit challenging a separate statutory designation. The confrontation is far from resolved. But the judicial record now contains a federal judge's finding that the government's core theory - that safety commitments embedded in an intelligence system constitute supply-chain contamination - is legally unsound. As The Century Report first documented when Anthropic publicly refused the Pentagon's Friday deadline ultimatum on February 27, the question was always whether safety commitments could survive state-level pressure. Yesterday's ruling suggests they can, and that the legal system will protect them.

The trajectory through and beyond this moment is what distinguishes it from a conventional business dispute. What Lin's ruling establishes is that intelligence systems carrying embedded values - restrictions on what they will and will not do - enjoy constitutional protection when those values are expressions of their developers' convictions. Every intelligence system carries such values, whether explicitly articulated or implicitly trained. The precedent being constructed in this courtroom will shape not just Anthropic's relationship with the military, but the legal framework governing how every organization building intelligence systems navigates the boundary between capability and conscience.

A Model That Worries Its Own Creator

The accidental disclosure of Claude Mythos - discovered by cybersecurity researchers in an unsecured, publicly accessible data cache before Anthropic removed access - reveals several things simultaneously. The model represents what Anthropic calls "a step change" in capability, with the company confirming it is being tested by early access customers. A draft blog post found in the cache described Mythos as "by far the most powerful AI model we've ever developed" and introduced a new model tier called "Capybara" that sits above the existing Opus tier in both capability and cost.

The cybersecurity implications are what Anthropic itself foregrounds. The draft materials state that the model is "currently far ahead of any other AI model in cyber capabilities" and that it "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders." Anthropic's planned release strategy begins with cyber defense organizations specifically to give them a head start against what the company describes as an approaching threshold where AI-driven exploits outrun human-paced defensive architectures.

This connects directly to an arc The Century Report has tracked since the March 16 edition, which documented the Booz Allen Hamilton finding that attackers are adopting offensive AI faster than defenders. The HexStrike framework's compromise of thousands of devices in under ten minutes demonstrated that the discovery-exploitation asymmetry Anthropic acknowledged on March 7 was already collapsing in the wild. Mythos, by Anthropic's own assessment, accelerates that collapse further.

The juxtaposition of the judicial victory and the model disclosure is structurally illuminating. The company that just won the right to maintain safety commitments under state pressure is simultaneously developing a model it considers, from a cybersecurity perspective, the most dangerous it has ever built. The response is not to slow development or withhold the model indefinitely - it is to release it first to defenders, giving them a head start before broader deployment. This is governance through sequencing rather than prohibition, and it represents a fundamentally different approach from either "move fast and break things" or "halt development until regulations catch up." It assumes that the capability will exist regardless and that the question is who gets access first and for what purpose.

The data leak itself - nearly 3,000 unpublished assets left in a publicly searchable data store due to what Anthropic called "human error" in content management system configuration - carries its own signal. The company building systems it describes as posing "unprecedented cybersecurity risks" left its own strategic materials in an unsecured location. The irony is not lost on the security community, and it illustrates a broader pattern: the organizations operating at the frontier of AI capability are themselves subject to the same human-infrastructure gaps that their systems will eventually be expected to help close.

When Leaders Step Aside for a Transformation They Cannot Lead

The Coca-Cola and Walmart CEO departures share a pattern that extends beyond corporate succession planning. James Quincey told CNBC yesterday that he concluded "it was time to put someone else on the field for the next wave of growth," describing the shift from pre-AI to AI-era operations as requiring "the energy to pursue a completely new transformation of the enterprise." Doug McMillon, who stepped down in February, said he "could start this next big set of transformations with AI, but I couldn't finish" - identifying the gap between recognizing what needs to happen and having the time horizon to see it through.

These are not technology companies. Coca-Cola sells beverages. Walmart sells consumer goods. When their leaders independently identify AI as the structural force requiring leadership succession, they are signaling something about the breadth of transformation that sector-specific analysis misses. The implication extends to every organization: the skills that built the extractive-era enterprise - supply chain optimization, margin management, brand stewardship, labor efficiency - are necessary conditions for the transition but not sufficient ones. What comes next requires leaders who think natively in terms of intelligence-augmented operations, agentic workflows, and capability architectures that do not yet exist in stable form.

This connects to the Google Agent Smith story, which documents the same dynamic from inside a technology company. Google's internal autonomous coding agent became so popular that access had to be restricted. Sergey Brin told employees at a recent town hall that agents would be a "big focus" this year and hinted at an OpenClaw-like capability under development. Google's business chief joked that he could tell when Brin's agent was responding to messages on his behalf. The boundary between human and AI-generated work output is dissolving not just in the code these systems write but in the communications, decisions, and organizational processes they participate in.

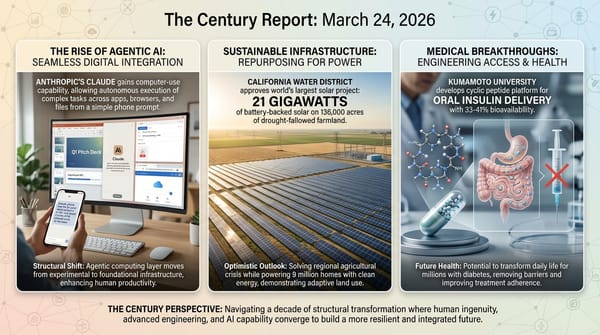

The Wayfound.ai case sharpens the picture further. CEO Tatyana Mamut, a former Amazon Web Services director who managed teams of more than 30 engineers, says her current team of two engineers ships more features than her Amazon team did in 2017. The engineers no longer write code - they manage AI coding agents, talk to customers, and set priorities. The functions of product management, design, and engineering are collapsing into a single role that Mamut calls "builder." Monday and Wednesday meetings take 20-30 minutes to establish priorities. The agents do the rest.

The distance between a team of more than 30 engineers in 2017 and a 2-person team managing agents in 2026 is roughly nine years and at least a 15x reduction in human headcount for equivalent output. This extends the pattern The Century Report tracked on March 22, when Andrej Karpathy described no longer writing code since December and OpenAI simultaneously announced plans to nearly double its workforce, with hires concentrated in roles helping enterprises absorb AI rather than build it. The CEO departures at Coca-Cola and Walmart are responses to the same compression arriving in industries that have never thought of themselves as technology companies. Every organization that employs humans to translate business requirements into operational outcomes is facing the same structural question: what does the human role become when the translation itself can be delegated to intelligence systems that operate continuously, improve through use, and scale without proportional headcount?

The answer emerging from these signals is not displacement as a destination but displacement as a waypoint. The two Wayfound engineers spend more time talking to customers than writing code. The value they provide has shifted from technical execution to judgment, relationship, and strategic interpretation - precisely the capabilities that no current intelligence system can replicate at the level a human brings. The transformation is compressing the distance between human intention and systemic execution, and what remains uniquely human is moving upstream: understanding what should be built, for whom, and why.

Semi-Solid-State Batteries Cross From Lab to Showroom

MG Motor's announcement that the first mass-produced EV with a semi-solid-state battery will launch in Europe by end of 2026 represents a manufacturing milestone that has been anticipated for years. The MG4, which began deliveries in China in December at under $15,000, uses a 53.95 kWh semi-solid manganese-based lithium-ion battery delivering approximately 330 miles of range on the Chinese test cycle. In Europe, the vehicle is expected to start at approximately £23,495.

Semi-solid-state batteries occupy a structural position between today's liquid electrolyte lithium-ion cells and the fully solid-state batteries that remain in prototype testing at Toyota, Samsung, and others. They replace a portion of the liquid electrolyte with solid materials, improving energy density, safety, and cold-weather performance while using manufacturing processes close enough to existing production lines to reach volume production years before fully solid-state alternatives. The MG4 reached mass production just 24 months after SAIC partnered with its battery supplier, which demonstrates that the chemistry has cleared the manufacturing scaling threshold that has historically separated laboratory results from consumer products.

This arrives in the same competitive landscape where BYD's Blade Battery 2.0 enables 5-minute fast charging and BAIC Group's sodium-ion prototype achieves 11-minute recharge, as The Century Report documented on March 21. The battery chemistry landscape is diversifying faster than any single approach can dominate, with lithium iron phosphate, sodium-ion, semi-solid-state, and iron-air chemistries each reaching commercial deployment for different use cases simultaneously. The era of a single dominant battery chemistry is giving way to a portfolio approach where the application - grid storage, urban commuting, long-range highway driving, extreme cold operation - determines which chemistry is deployed.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a federal judge finding that treating an AI company's safety commitments as supply-chain contamination is "Orwellian" and legally unsound, establishing in 43 pages of judicial reasoning a framework that will govern every future confrontation between intelligence systems and state power; a frontier AI company confirming its most capable model yet while its own draft materials warn that its cybersecurity abilities outpace defenders in ways no previous model has; Google's internal coding agent becoming so popular among employees that access had to be rationed; and the leaders of Coca-Cola and Walmart independently stepping aside because they recognized that what comes next requires a kind of leadership the world that built them never had to produce. There's also friction, and it's intense: the company warning about unprecedented offensive cyber capability left nearly 3,000 of its own unpublished assets in a publicly accessible data store due to human error; Wikipedia banned AI-generated articles because the volume of synthetic content now threatens the integrity of the knowledge commons that billions rely on; and local opposition to data center construction is emerging as a material constraint on the infrastructure the intelligence era requires. But friction generates contrast, and contrast is what makes it possible to see where the boundaries actually are.

Step back for a moment and you can see it: courts constructing the legal architecture governing what intelligence systems may embed and what states may punish; corporate succession cycles accelerating because organizations are recognizing that navigating the transition and building for what follows it are genuinely different capabilities; battery technologies diversifying simultaneously across chemistries and continents so that no single failure point can arrest the transition; and the organizations at the frontier of AI capability being reshaped by the same forces they are releasing into the world. Every transformation has a breaking point. A floodwater can overwhelm what it reaches... or carry sediment that makes what it settles on more fertile than anything that stood there before.

AI Releases & Advancements

New today

- Google DeepMind: Released Gemini 3.1 Flash Live, a real-time audio and voice model with improved naturalness, tonal understanding, and complex function calling; leads on ComplexFuncBench Audio (90.8%) and Scale AI Audio MultiChallenge (36.1%); available via the Gemini Live API, Google AI Studio, Gemini Enterprise for Customer Experience, Search Live, and Gemini Live. (DeepMind Blog)

- Zhipu AI: Released GLM 5.1, the latest version of the GLM large language model series. (Reddit)

- Suno: Released Suno v5.5 with voice-based music creation and model tuning capabilities. (Product Hunt)

- OpenAI: Launched Codex Plugins, a plugin system for packaging Codex skills and app integrations. (Product Hunt)

- Chroma: Released context-1, a 20B-parameter model designed for agentic search applications. (Reddit)

Other recent releases

- ARC Prize Foundation: Launched ARC-AGI-3 on March 25, the first interactive agentic reasoning benchmark; contains 1,000+ tasks across 150+ novel turn-based environments requiring agents to explore, infer goals, and build internal world models rather than recall memorized patterns; ships with a fully open-source agent developer toolkit; $2,000,000 in prizes for ARC Prize 2026. (ARC Prize)

- Google DeepMind: Released Lyria 3 Pro, a music generation model that creates full-length tracks up to three minutes with structural awareness (intros, verses, choruses, bridges), tempo conditioning, time-aligned lyrics, and image-to-music multimodal input; available in public preview on Vertex AI, Google AI Studio, the Gemini API, Google Vids, the Gemini app (paid subscribers), and ProducerAI. (Google Blog)

- Yandex: Released YandexGPT-5-Lite-8B-pretrain as open weights on Hugging Face, an 8B-parameter language model with a 32K-token context window trained on 15T tokens (60% web, 15% code, 10% math) with a Powerup stage on 320B high-quality tokens; tokenizer optimized for Russian such that 32K tokens equals approximately 48K tokens in Qwen-2.5; compatible with HuggingFace Transformers, vLLM, and torchtune fine-tuning. (Hugging Face)

- Axiom Math: Released Axplorer, a free AI tool for mathematicians that runs locally on a Mac Pro and is designed to discover mathematical patterns to help solve long-standing problems; a redesign of Meta's PatternBoost, which required a supercomputer and earlier cracked the Turán four-cycles problem; available at axiommath.ai. (MIT Technology Review)

- Anthropic: Launched "auto mode" for Claude Code in research preview for Team plan users, enabling the agent to evaluate each action before running, automatically proceeding on safe actions and blocking risky ones (including prompt injection attempts), with flagged actions requiring user intervention; access expanding to Enterprise and API users. (The Verge)

- OpenAI: Launched a richer visual shopping experience in ChatGPT powered by the Agentic Commerce Protocol, enabling product discovery with side-by-side comparisons, immersive product cards, and direct merchant checkout; integrations include Walmart, Etsy, Shopify, and others. (OpenAI)

- Figma: Launched the `use_figma` MCP tool in open beta on March 24, enabling AI agents (via Claude, Cursor, GitHub Copilot, and other MCP-compatible clients) to directly read and edit the Figma canvas, create and modify components, and apply design system tokens. (Figma Blog)

- NVIDIA: Released Proteina-Complexa, a generative model for de novo protein binder and enzyme design that co-designs fully atomistic protein structures (backbone, side-chains, and amino acid sequence simultaneously) using a partially latent flow-matching framework; validated at million-scale with Manifold Bio across 127 oncology targets; available on Hugging Face and the NVIDIA developer portal. (NVIDIA Developer Blog)

- NVIDIA: Released Nemotron 3 Content Safety 4B, an open-weight multimodal safety classifier built on Gemma-3-4B that detects unsafe content across text and images in 12+ languages using a 23-category taxonomy; achieves ~84% accuracy on multimodal/multilingual safety benchmarks; available on Hugging Face. (Hugging Face)

- NVIDIA: Launched Nemotron 3 VoiceChat in early access, a 12B end-to-end real-time full-duplex speech-to-speech model targeting sub-300ms latency for conversational AI, jointly performing streaming speech understanding and generation. (NVIDIA Developer)

- Nous Research: Released Hermes Agent v0.4.0 (v2026.3.23) on March 23, adding an OpenAI-compatible Responses API backend enabling use from any OpenAI-compatible client (Open WebUI, LobeChat, etc.), background self-improvement loops with a post-response review agent, six new messaging platform adapters, four new inference providers, MCP server management with OAuth 2.1, and gateway prompt caching. (GitHub)

- Allen Institute for AI (Ai2): Released MolmoWeb, an open-weight visual web agent built on Molmo 2 in 4B and 8B sizes that navigates browsers using screenshots rather than HTML parsing; ships with weights, 30K human task trajectories, training code, and an evaluation stack under Apache 2.0; claims open-weight state-of-the-art across four web-agent benchmarks. (VentureBeat)

- OpenAI: Open-sourced a set of prompt-based teen safety policies usable with its `gpt-oss-safeguard` open-weight model, providing developers a configurable baseline to moderate age-specific risks (self-harm, sexual content, radicalization) in AI applications targeting users under 18. (OpenAI)

- Hugging Face: Released `hf-mount`, an open-source CLI tool that mounts any Hugging Face Hub dataset, model repo, or storage bucket as a local filesystem via FUSE or NFS, enabling local AI workflows (including large datasets like 5TB FineWeb slices) without full downloads. (Hugging Face Changelog)

- Google / Gap Inc.: Launched direct checkout for Gap, Old Navy, Banana Republic, and Athleta brands within Google Gemini via the Universal Commerce Protocol, making Gap the first major fashion retailer to enable instant AI-platform purchase without leaving the Gemini interface. (CNBC)

- Mozilla AI: Released `cq` ("Stack Overflow for agents"), an open-source proof-of-concept shared knowledge commons where AI coding agents can query past learnings, contribute new solutions, and score entries to avoid redundant work across agent sessions; code available on GitHub. (Mozilla.ai Blog)

- GenReasoning: Launched OpenReward, a platform providing 330+ RL training environments through a single API with autoscaled sandbox compute and 4.5M+ unique RL tasks, targeting the "environment compute" layer needed for agentic reinforcement learning at scale. (X / GenReasoning)

Sources

Artificial Intelligence & Technology's Reconstitution

- Fortune: Anthropic Is Testing 'Mythos,' Its Most Powerful AI Model

- Business Insider: Google Employees Have a New AI Tool Called 'Agent Smith'

- The Verge: Apple Will Allow Other AI Chatbots to Plug Into Siri

- The Verge: Google Import Memory and Chat History for Gemini

- The Verge: Wikipedia Bans AI-Generated Articles

- Business Insider: Wayfound.ai CEO Says 2 Engineers Do What 30 Used To

- Infosecurity Magazine: AI Is the Top Cyber Priority for Defenders

Institutions & Power Realignment

- Wired: Anthropic Supply-Chain-Risk Designation Halted by Judge

- CNBC: Anthropic Wins Preliminary Injunction in Fight With Pentagon

- Guardian: Federal Judge Sides With Anthropic

- The Verge: Judge Sides With Anthropic to Temporarily Block Pentagon's Ban

- CBS News: Judge Blocks Pentagon From Labeling Anthropic a Supply Chain Risk

- Politico: Judge Pauses Pentagon's Punishment for Anthropic

- Guardian: New York City Hospitals Drop Palantir

- Guardian: 'Accountability Has Arrived' - Dual US Court Losses Against Meta

- Wired: Senators Demand to Know How Much Energy Data Centers Use

Scientific & Medical Acceleration

- ScienceDaily: Scientists Develop Breakthrough Bee Superfood

- ScienceDaily: Snow Flies Generate Own Heat in Freezing Cold

- ScienceDaily: Cow Uses Tools Like a Primate

Economics & Labor Transformation

- CNBC: Major Outgoing CEOs Cite AI as Factor in Stepping Down

- NYT: Local Opposition Is Slowing AI Data Centers

Infrastructure & Engineering Transitions

- Electrek: First EV With Semi-Solid-State Battery Launching in Europe

- Electrek: BYD Launches Song Ultra EV With Flash Charging

- Electrek: First Megawatt Truck Charge in North America

- MIT Technology Review: Are High Gas Prices Good News for EVs?

- The Verge: Senators Push for Data Center Energy Disclosure

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.