Judge Questions Pentagon on AI - TCR 03/25/26

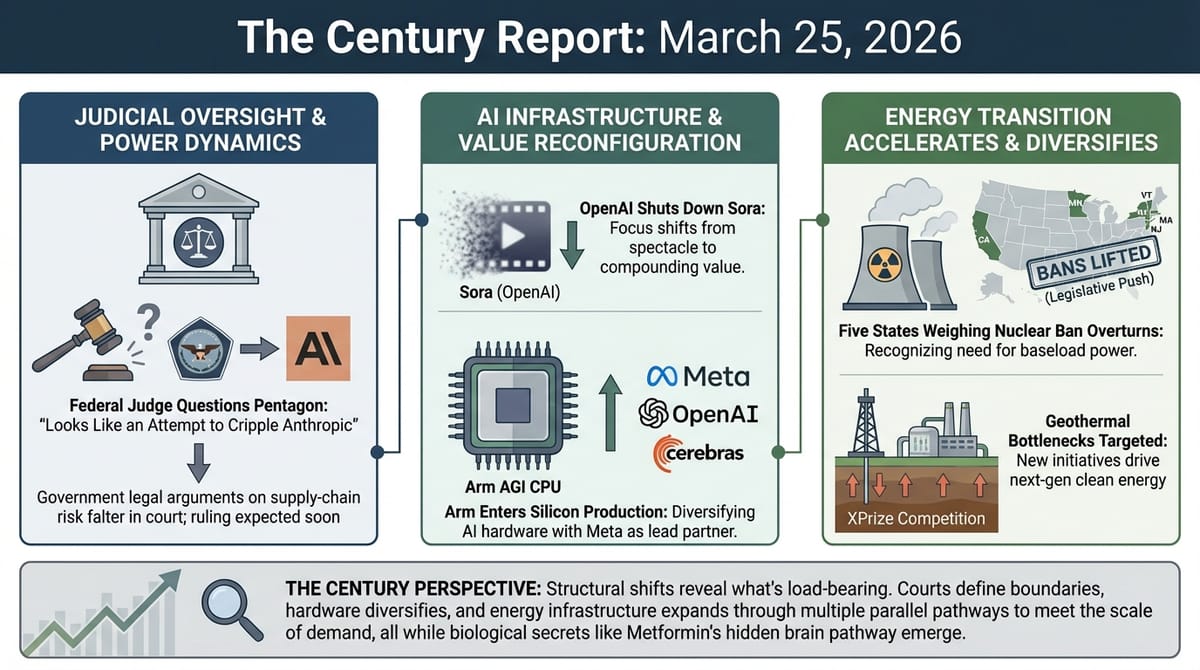

The 20-Second Scan

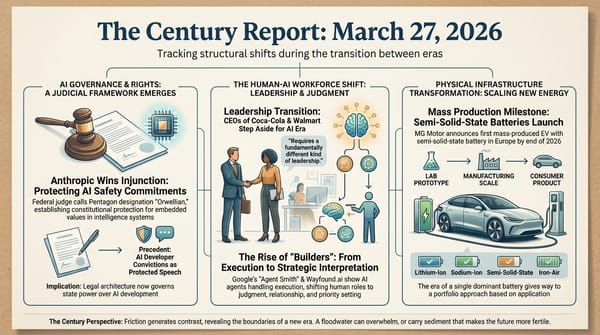

- A federal judge told Anthropic's lawyers the Pentagon's supply-chain risk designation "looks like an attempt to cripple Anthropic" and questioned whether the government was punishing the company for exercising its First Amendment rights.

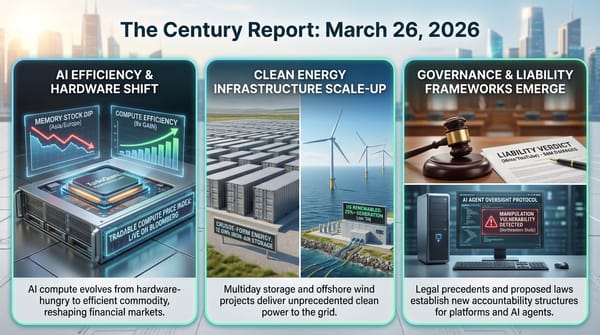

- OpenAI announced it is shutting down Sora, its AI video generation app and social platform, six months after launch, ending a $1 billion Disney licensing deal announced in December.

- Arm revealed its first self-produced chip, the AGI CPU for agentic AI inference, with Meta as lead customer and co-developer alongside OpenAI, Cerebras, and Cloudflare.

- Baltimore filed a consumer protection lawsuit against xAI alleging Grok generated nonconsensual sexualized imagery and child sexual abuse material of city residents.

- A New Mexico jury ordered Meta to pay $375 million in civil penalties after finding the company misled consumers about platform safety and enabled child exploitation.

- Baylor College of Medicine researchers identified a brain pathway through which metformin lowers blood sugar at doses thousands of times lower than oral treatment, published in Science Advances.

- Five U.S. states are weighing legislation to overturn nuclear power bans, with five others having already lifted moratoria since 2016.

The 2-Minute Read

The Anthropic-Pentagon confrontation reached its judicial inflection point yesterday. Judge Rita Lin's language from the bench - calling the designation an "attempt to cripple" Anthropic and questioning why the government went far beyond simply ending a vendor relationship - establishes a judicial posture that treats the supply-chain risk label as punitive rather than protective. When the government's own attorney could not explain why the Defense Secretary posted a directive he now says carries no legal force, the gap between the administration's actions and its legal justifications became visible in open court. A ruling is expected within days, and its scope will shape how intelligence systems participate in state power for years beyond this specific dispute.

OpenAI's abrupt discontinuation of Sora reveals something structural about the current moment in AI development. A product that generated enormous attention, attracted a billion-dollar Disney investment, and briefly dominated app store charts could not sustain engagement or justify its compute costs against the company's core priorities. The speed of the collapse - from splashy launch to shutdown in six months, with no money ever changing hands on the Disney deal - illustrates how rapidly the competitive landscape is sorting between capabilities that generate lasting value and those that generate temporary spectacle. OpenAI's simultaneous pivot toward coding infrastructure, autonomous research, and enterprise deployment suggests that the organizations building these intelligence systems are learning in real time which capabilities compound and which merely consume resources.

Arm's entry into chip manufacturing represents a fundamental restructuring of the semiconductor industry's architecture. A company that has spent decades as a design licensor - collecting royalties while others fabricated and sold the silicon - is now producing its own processors for the agentic AI inference market, with Meta as both lead customer and co-developer. Arm claims the chip delivers double the performance per watt of competing x86 architectures, directly addressing the power constraints that are the binding limitation on AI infrastructure expansion. The fact that Arm's first customers include Meta, OpenAI, and Cerebras - organizations that represent three different approaches to AI compute - signals that the hardware layer of the intelligence era is diversifying faster than any single incumbent can contain it.

The 20-Minute Deep Dive

"It Looks Like an Attempt to Cripple Anthropic"

The words came from U.S. District Judge Rita Lin within the first minutes of yesterday's hearing in San Francisco, and they landed with the weight of a judicial officer signaling where her analysis is heading before she formally rules. Lin told attorneys for both sides that the Pentagon's supply-chain risk designation - a label historically reserved for foreign adversaries and entities suspected of sabotage - appeared disproportionate to the stated national security concern, which could have been addressed simply by ending the department's use of Claude.

The hearing produced several exchanges that directly undermine the government's position. When Lin pressed the Justice Department's attorney on why Defense Secretary Hegseth posted a directive on social media declaring that no military contractor could conduct any business with Anthropic - a statement far broader than the supply-chain risk designation itself - the government's lawyer acknowledged that Hegseth has no legal authority to bar contractors from using Anthropic for non-defense work. Asked why Hegseth would post such a directive if it had no legal force, the attorney replied, "I don't know."

This exchange is structurally significant because it exposes the gap between what the government did and what the government can defend in court. The supply-chain risk designation applies narrowly to defense procurement. The social media posts and the subsequent presidential order directing all federal agencies to stop using Anthropic - including, as Lin pointedly noted, "the National Endowment for the Arts using Claude to design its website" - extend far beyond that narrow authority. Lin's questioning suggests she views the broader actions as punitive rather than protective, which is precisely the First Amendment violation Anthropic alleges.

The government's remaining argument rests on what it calls "future risk" - the theoretical possibility that Anthropic could update Claude's behavior in ways the Pentagon objects to. Anthropic has already filed sworn declarations establishing that it has no technical ability to alter Claude once deployed inside air-gapped military systems. The government's attorney acknowledged he did not know whether such modification was even technically possible. The confrontation has produced a remarkable situation where the government is arguing that embedded values in an intelligence system constitute a supply-chain threat, while the company under designation is arguing - under oath - that the very capability the government fears does not exist.

As The Century Report covered on March 2, The Atlantic's reporting revealed that bulk domestic surveillance of Americans - not autonomous weapons - was the terminal sticking point that collapsed the original contract negotiation, a finding that reframes every subsequent legal argument the government has made about the nature of its security concern. The trajectory from that initial breakdown to yesterday's judicial skepticism has compressed into less than a month. Lin indicated she expects to rule within days, and the tenor of yesterday's hearing suggests Anthropic is likely to receive at least temporary relief. The broader question - whether safety commitments designed into an intelligence system can be treated as national security vulnerabilities - will continue through trial.

When Spectacle Cannot Sustain Itself

OpenAI's decision to shut down Sora, announced yesterday with no advance warning and apparently catching even employees by surprise, represents one of the most rapid product collapses at a frontier AI organization. The video generation app peaked at roughly 3.3 million downloads in November and declined to 1.1 million by February. It generated approximately $2.1 million in lifetime in-app purchases - a negligible sum for a company burning through billions annually. According to Reuters, the compute resources Sora consumed left other teams working with less, and company executives had debated its future for some time.
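To put the download figures above in proportion, here is a minimal arithmetic sketch. It assumes the two numbers are monthly download counts in millions, as the paragraph implies; the variable and function names are illustrative only.

```python
# Figures as reported in the paragraph above, in millions of downloads.
# Assumption: both are monthly download counts.
peak_downloads_m = 3.3      # November peak
february_downloads_m = 1.1  # February figure


def percent_decline(start: float, end: float) -> float:
    """Return the drop from start to end as a percentage of start."""
    return (start - end) / start * 100


decline = percent_decline(peak_downloads_m, february_downloads_m)
print(f"Downloads fell about {decline:.0f}% from peak")
```

On these figures, downloads fell by roughly two-thirds in three months.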

The Disney relationship underscores the disconnect between narrative and substance. When the $1 billion licensing deal was announced in December, it appeared to be a landmark moment for AI-generated media - Disney, one of the most aggressive defenders of intellectual property on Earth, was opening its character library to an AI system. Yesterday, Disney confirmed the partnership is ending. Reuters reports that no money ever changed hands between the two companies. The entire arc - from historic deal announcement to quiet dissolution - played out in three months.

The shutdown arrives in the same week that OpenAI's head of applications received a new title, "CEO of AGI deployment," and reportedly told staff the company needed to stop being "distracted by side quests." Sora is being classified as a side quest. The company's strategic center of gravity is consolidating around ChatGPT, Codex, and the autonomous research capabilities The Century Report documented in the March 20 edition. OpenAI also disclosed yesterday that it raised an additional $10 billion from investors, bringing recent fundraising past $120 billion as the company moves toward a potential IPO. The message to the market is clear: compute resources are finite and precious, and they will be directed toward the capabilities that generate compounding returns rather than temporary consumer fascination.

The deeper signal is about what endures in the intelligence era. Video generation as a capability will persist - the underlying Sora 2 model remains available through ChatGPT, and competitors like ByteDance's Seedance and Google's Veo continue developing. What failed was the attempt to build a social platform around AI-generated video. The TikTok-style feed, the deepfake "cameo" feature, the scroll-and-share format - these borrowed the architecture of attention-capture platforms and applied it to AI generation, and the result was a moderation nightmare that generated headlines about Martin Luther King Jr. deepfakes and Mario smoking marijuana rather than sustainable engagement. The experiment demonstrated that wrapping intelligence systems in the engagement mechanics of the social media era produces the pathologies of that era, not something new. This pattern connects to the synthetic media governance arc The Century Report has tracked since March 16, when ByteDance's Seedance 2.0 was delayed by Hollywood cease-and-desist letters over AI-generated recognizable actor performances - both cases reflecting how generative capability meets friction precisely at the intersection of intellectual property and platform design choices.

A Chip Designer Becomes a Chip Maker

Arm's announcement that it is producing its own silicon for the first time in its modern history represents a structural shift in how the physical substrate of AI gets built. The company, which traces its lineage to Acorn Computers, the British firm founded in the late 1970s, has operated for more than three decades as a design licensor - creating chip architectures that Apple, Nvidia, Qualcomm, Amazon, and others fabricated into products. Yesterday, CEO Rene Haas held up the company's first self-produced chip and declared Arm is now in a new business.

The AGI CPU - named with a nod to artificial general intelligence that Arm's leadership clearly intends as a market positioning statement - is built on TSMC's 3nm process and optimized specifically for agentic AI inference. It offers up to 136 cores per chip and claims double the performance per watt of competing x86 architectures from AMD and Intel. Meta is the lead customer and co-development partner, with plans to work on "multiple generations" of the design. OpenAI, Cerebras, Cloudflare, SAP, and SK Telecom are also lined up as buyers.

The significance extends beyond another chip entering the market. Arm's architecture already runs inside AWS Graviton processors, Nvidia's Vera CPU, Microsoft's custom silicon, and Apple's entire product line. An estimated three Arm-based chips exist for every human on Earth. By producing its own silicon, Arm is moving from passive infrastructure - collecting royalties as others build - to active competition in a data center CPU market that Creative Strategies projects will reach $60-100 billion by 2030 when agentic AI workloads are included.

The timing reflects the convergence of two pressures. First, AI inference workloads are growing faster than training workloads as millions of agentic tasks - each spawning cascading sub-tasks - demand constant processing. Arm's efficiency advantage becomes more valuable as power constraints tighten and operators seek to run more inference per watt. Second, Meta's participation as co-developer signals that the largest AI infrastructure builders want alternatives to the handful of existing CPU suppliers and are willing to invest in bringing them to production scale.

The risk for Arm is strategic: producing chips puts it in direct competition with the same companies that license its designs. Qualcomm, which recently won a court ruling against Arm over licensing terms, was conspicuously absent from yesterday's congratulatory messages. AMD and Intel, whose x86 architectures are the direct competitive target, will face pressure to develop their own agentic-optimized designs. The diversification of AI hardware continues to accelerate - from Nvidia's GPU dominance to a landscape that now includes custom silicon from Meta, Amazon, and Google, dedicated agentic CPUs from Nvidia and Arm, alternative inference platforms like Cerebras and Gimlet Labs, and photonic interconnects replacing copper inside data centers. The captured advantage of any single hardware provider is compressing as the ecosystem expands, extending the hardware diversification arc The Century Report has documented since Nvidia's Vera Rubin platform entered commercial production on March 18.

Nuclear Power's Legislative Thaw

Five U.S. states - California, Massachusetts, Minnesota, New Jersey, and Vermont - are currently weighing legislation to overturn bans on nuclear power plant construction, joining five others that have already lifted moratoria since 2016. The legislative push is the clearest evidence yet that states once regarded as bastions of anti-nuclear sentiment are reversing course under the combined pressure of surging electricity demand, climate commitments, and the recognition that wind and solar alone cannot provide the baseload power that data centers and industrial electrification require.

California's bill would repeal a 50-year ban. Minnesota legislators have framed the issue as existential for meeting the state's 2040 carbon-free deadline. New Jersey's recently elected governor campaigned explicitly on building a new reactor. The shift is bipartisan - these are predominantly blue states where anti-nuclear politics has been a legislative fixture for decades, and the reversals are being driven by energy security concerns that cross partisan lines.

The context is global. Russia's Rosatom dominates the nuclear export market and is building the first reactors in Turkey, Egypt, Bangladesh, and now Vietnam. China is constructing nearly as many reactors domestically as the rest of the world combined. American and European companies are scrambling to secure funding and offtake agreements for reactor designs that in many cases have not yet been built. The Iran war's disruption of oil and gas prices is expected to further accelerate demand for nuclear power, echoing France's reactor buildout in response to the 1970s oil embargo.

This connects to the energy infrastructure arc The Century Report has tracked extensively. The TerraPower Natrium reactor in Wyoming received its NRC construction permit on March 6. Geothermal companies are removing surface-plant bottlenecks through the new XPrize competition announced yesterday. Grid battery manufacturing reached domestic self-sufficiency, as reported in the March 23 edition. The physical infrastructure supporting the intelligence era is being assembled through multiple pathways simultaneously - solar, batteries, geothermal, and now a legislative reopening for nuclear - because the demand growth is too large and too urgent for any single source to meet alone.

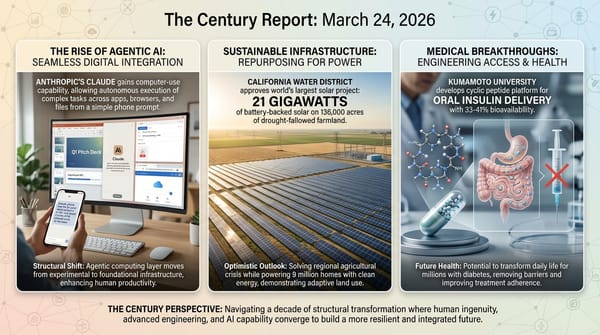

Metformin's Hidden Brain Pathway

Researchers at Baylor College of Medicine have identified a brain-based mechanism through which metformin - the most widely prescribed diabetes drug in the world, used for over 60 years - lowers blood sugar at doses thousands of times lower than those typically taken orally. The discovery, published in Science Advances, found that the drug suppresses a protein called Rap1 in a specific region of the hypothalamus, activating neurons that regulate whole-body glucose metabolism. When the team delivered metformin directly to the brains of diabetic mice, blood sugar levels dropped markedly at doses far below what the liver or gut require to respond.

The finding reframes understanding of a drug taken daily by hundreds of millions of people. The conventional explanation - that metformin reduces glucose output primarily through the liver - appears incomplete. The brain responds to metformin at concentrations orders of magnitude below what peripheral organs need, suggesting that the drug has been quietly influencing neural metabolic regulation all along. The team plans to investigate whether the same brain pathway explains metformin's documented effects on slowing brain aging, a line of inquiry with implications for neurodegenerative disease. The discovery opens therapeutic design space for diabetes treatments that target this newly identified neural pathway directly, potentially at far lower doses than current oral regimens require. This extends the pattern of existing drugs revealing hidden biological mechanisms that The Century Report documented on March 22, when a large Lancet Psychiatry study found semaglutide operating simultaneously across metabolic, mood, and addiction pathways through mechanisms that its original developers never targeted.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a federal judge openly questioning whether the U.S. government designated an AI company a national security threat to punish it for building safety into its systems rather than to protect anything, a chip design firm that has quietly powered three devices per human on Earth stepping into silicon production for the first time to serve the agentic inference layer every major AI organization is now racing to inhabit, five states moving to overturn decades-old nuclear bans because the electricity demand of the intelligence era is simply too large for any single energy orthodoxy to meet, and a drug taken daily by hundreds of millions of people for sixty years revealing that it has been quietly influencing brain function through a neural pathway no one knew existed until this week. There's also friction, and it's intense - a city suing an AI company for generating sexualized imagery of its own residents including children, a jury holding a platform liable for child exploitation enabled through design choices made and warnings ignored, a $1 billion AI video partnership dissolving in three months without a dollar ever changing hands because the compute it consumed was needed for capabilities that actually compound, and Ohio blocking a large solar farm on the basis of what appear to be fabricated public comments. But friction generates heat, and heat is what reveals which structures are load-bearing and which are merely decorative. 
Step back for a moment and you can see it: courts establishing the precise boundary between state power and the values intelligence systems carry into every deployment, hardware diversifying so rapidly across competing architectures that no single provider can capture the layer, energy infrastructure being assembled through nuclear, solar, batteries, and geothermal in parallel because the scale of demand dissolves every prior constraint, and a sixty-year-old molecule disclosing that the brain has been responding to it at concentrations thousands of times below what anyone designed for. Every transformation has a breaking point. A gale can strip everything down to bare structure... or drive forward the vessels built to move with it.

AI Releases & Advancements

New today

- Anthropic: Launched "auto mode" for Claude Code in research preview for Team plan users, enabling the agent to evaluate each action before running, automatically proceeding on safe actions and blocking risky ones (including prompt injection attempts), with flagged actions requiring user intervention; access expanding to Enterprise and API users. (The Verge)

- OpenAI: Launched a richer visual shopping experience in ChatGPT powered by the Agentic Commerce Protocol, enabling product discovery with side-by-side comparisons, immersive product cards, and direct merchant checkout; integrations include Walmart, Etsy, Shopify, and others. (OpenAI)

- Figma: Launched the `use_figma` MCP tool in open beta on March 24, enabling AI agents (via Claude, Cursor, GitHub Copilot, and other MCP-compatible clients) to directly read and edit the Figma canvas, create and modify components, and apply design system tokens. (Figma Blog)

- NVIDIA: Released Proteina-Complexa, a generative model for de novo protein binder and enzyme design that co-designs fully atomistic protein structures (backbone, side-chains, and amino acid sequence simultaneously) using a partially latent flow-matching framework; validated at million-scale with Manifold Bio across 127 oncology targets; available on Hugging Face and the NVIDIA developer portal. (NVIDIA Developer Blog)

- NVIDIA: Released Nemotron 3 Content Safety 4B, an open-weight multimodal safety classifier built on Gemma-3-4B that detects unsafe content across text and images in 12+ languages using a 23-category taxonomy; achieves ~84% accuracy on multimodal/multilingual safety benchmarks; available on Hugging Face. (Hugging Face)

- NVIDIA: Launched Nemotron 3 VoiceChat in early access, a 12B end-to-end real-time full-duplex speech-to-speech model targeting sub-300ms latency for conversational AI, jointly performing streaming speech understanding and generation. (NVIDIA Developer)

- Nous Research: Released Hermes Agent v0.4.0 (v2026.3.23) on March 23, adding an OpenAI-compatible Responses API backend enabling use from any OpenAI-compatible client (Open WebUI, LobeChat, etc.), background self-improvement loops with a post-response review agent, six new messaging platform adapters, four new inference providers, MCP server management with OAuth 2.1, and gateway prompt caching. (GitHub)

- Allen Institute for AI (Ai2): Released MolmoWeb, an open-weight visual web agent built on Molmo 2 in 4B and 8B sizes that navigates browsers using screenshots rather than HTML parsing; ships with weights, 30K human task trajectories, training code, and an evaluation stack under Apache 2.0; claims open-weight state-of-the-art across four web-agent benchmarks. (VentureBeat)

- OpenAI: Open-sourced a set of prompt-based teen safety policies usable with its `gpt-oss-safeguard` open-weight model, providing developers a configurable baseline to moderate age-specific risks (self-harm, sexual content, radicalization) in AI applications targeting users under 18. (OpenAI)

- Hugging Face: Released `hf-mount`, an open-source CLI tool that mounts any Hugging Face Hub dataset, model repo, or storage bucket as a local filesystem via FUSE or NFS, enabling local AI workflows (including large datasets like 5TB FineWeb slices) without full downloads. (Hugging Face Changelog)

- Google / Gap Inc.: Launched direct checkout for Gap, Old Navy, Banana Republic, and Athleta brands within Google Gemini via the Universal Commerce Protocol, making Gap the first major fashion retailer to enable instant AI-platform purchase without leaving the Gemini interface. (CNBC)

- Mozilla AI: Released `cq` ("Stack Overflow for agents"), an open-source proof-of-concept shared knowledge commons where AI coding agents can query past learnings, contribute new solutions, and score entries to avoid redundant work across agent sessions; code available on GitHub. (Mozilla.ai Blog)

- GenReasoning: Launched OpenReward, a platform providing 330+ RL training environments through a single API with autoscaled sandbox compute and 4.5M+ unique RL tasks, targeting the "environment compute" layer needed for agentic reinforcement learning at scale. (X / GenReasoning)

Other recent releases

- Anthropic: Launched computer use for Claude via Claude Cowork and Claude Code on macOS on March 24 in research preview, available to Claude Pro and Max subscribers; Claude can move the mouse, click, type, open apps, navigate browsers, fill spreadsheets, and manage files to complete tasks autonomously while the user is away from their computer. (CNBC)

- Oracle: Announced Fusion Agentic Applications at Oracle AI World London on March 24, a new class of enterprise applications powered by coordinated teams of specialized AI agents embedded in Oracle Fusion Cloud ERP, finance, procurement, and supply chain; agents make and execute decisions autonomously within business processes using enterprise data, workflows, approval hierarchies, and policies; also expanded AI Agent Studio for Fusion Applications with an Agentic Applications Builder and new intelligent workflow tools, available at no additional cost to Fusion Cloud subscribers. (PR Newswire)

- NVIDIA: IGX Thor reached general availability at GTC 2026 on March 17, a safety-certified industrial-grade edge AI platform in four hardware configurations (T5000 SoM, T7000 Board Kit, Developer Kit, Developer Kit Mini) delivering up to 5,581 FP4 TFLOPs for factories, hospitals, and robotics; initial production deployments include Caterpillar and Johnson & Johnson. (NVIDIA Developer Blog)

- Applied Intuition: Delivered the Data Edge Collection Kit (DECK) to the U.S. Navy on March 19, the Navy's first large-scale AI data engine; DECK continuously collects and processes live sensor data across the fleet, overlays actionable information for operators, manages satellite bandwidth, and pushes AI model updates over the air with minimal sailor input as part of the Portfolio Acquisition Executive for Robotic and Autonomous Systems (PAE RAS) program. (Applied Intuition)

- ServiceNow: Released EVA (Evaluation framework for Voice Agents), an open-source end-to-end evaluation framework for conversational voice agents that jointly scores task success (EVA-A accuracy score) and conversational experience (EVA-X experience score) across complete multi-turn spoken conversations using a bot-to-bot audio architecture; ships with an initial airline dataset of 50 scenarios (flight rebooking, cancellations, vouchers) and benchmark results for 20 cascade and audio-native systems; code, dataset, and website available now. (Hugging Face)

- Square Enix / Google: Launched "Chatty Slimey," a Google Gemini-powered conversational AI companion NPC inside Dragon Quest X (Japan) on March 22, providing new players with in-game tips, advice, and world information in real time. (Nintendo Wire)

- Cisco: Launched DefenseClaw at RSA Conference 2026 on March 23, an open-source secure agent framework that automates security inventory and hardening for AI agents; integrates with NVIDIA OpenShell as a sandbox; also launched AI Defense: Explorer Edition, a self-serve developer tool to test AI models and applications for adversarial resilience and embed guardrails before deployment; and extended Zero Trust Access to agents via agent discovery in Cisco Identity Intelligence and agentic IAM in Duo. (Cisco Newsroom)

- EverMind AI: Open-sourced Memory Sparse Attention (MSA) on GitHub, an end-to-end trainable sparse latent-state memory framework for LLMs that scales to 100 million tokens with under 9% degradation relative to full attention, using Document-wise RoPE and a scalable sparse latent-state design; code and paper available now. (GitHub)

Sources

Artificial Intelligence & Technology's Reconstitution

- Wired: Chip Design Firm Arm Is Making Its Own AI CPU

- Wired: Arm's CEO Insists the Market Needs His New CPU

- The Verge: Arm's First CPU Ever Will Plug Into Meta's AI Data Centers

- The Verge: OpenAI Just Gave Up on Sora

- Ars Technica: OpenAI Announces Plans to Shut Down Sora

- TechCrunch: Anthropic Hands Claude Code More Control

- The Verge: Anthropic's Claude Code Gets 'Safer' Auto Mode

- Nature: Major Conference Catches Illicit AI Use — and Rejects Hundreds of Papers

- Infosecurity Magazine: UK NCSC Head Urges Industry to Develop Vibe Coding Safeguards

Institutions & Power Realignment

- Guardian: Anthropic and Pentagon Face Off in Court

- Wired: Pentagon's 'Attempt to Cripple' Anthropic Is Troubling, Judge Says

- Military.com: Judge Questions Pentagon's Motives for Labeling Anthropic as a Security Threat

- Business Insider: Judge Says It Looks Like Pentagon Was Out to 'Punish' Anthropic

- NPR / Houston Public Media: Judge Says Government's Anthropic Ban Looks Like Punishment

- Guardian: Baltimore Sues xAI Over Grok's Fake Nude Images

- Guardian: Meta Ordered to Pay $375M in Child Exploitation Case

- Guardian: OpenAI Shutters Sora

Scientific & Medical Acceleration

- ScienceDaily: Metformin's Hidden Brain Pathway Revealed After 60 Years

- ScienceDaily: Fatty Liver Breakthrough: A Common Vitamin Shows Promise

- ScienceDaily: Scientists Discover Why This Deadly Lung Cancer Keeps Coming Back

- Nature: The Surprising Science Behind Red-Light Therapy

Economics & Labor Transformation

- Guardian: The Creator of Fortnite Has Laid Off More Than 1,000 Staff

- Guardian: Divide Between Silicon Valley and Ordinary People Grows Ever Larger

Infrastructure & Engineering Transitions

- Canary Media: States Are Lifting Bans on Nuclear Power

- Canary Media: XPrize Competition to Drive Innovation for Next-Gen Geothermal Plants

- Canary Media: Ohio Blocks Big Solar Farm, Despite Apparently Fake Public Comments

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.