TCR 03/23/26: Batteries Built in Months

The 20-Second Scan

- Earendil Labs raised $787 million, backed by Sanofi and Pfizer, for its AI-driven biologics platform, which has generated more than 40 drug programs, including an anti-TL1A antibody entering Phase 2.

- U.S. grid battery manufacturing capacity reached self-sufficiency for the first time, with domestic factories now able to supply 100% of annual storage project demand.

- Heidelberg University researchers identified a toxic NMDAR/TRPM4 protein complex driving Alzheimer's neuronal death and demonstrated a compound that disrupted it, slowing disease progression in mice.

- Cursor confirmed that its new Composer 2 coding model was built on Moonshot AI's open-source Kimi K2.5, a fact it did not disclose at launch.

- Ann Arbor, Michigan began enrollment for a first-in-the-country Sustainable Energy Utility that will install city-owned solar and battery systems at participating homes and buildings.

- NIH-supported researchers developed a four-marker blood test that detected pancreatic cancer in over 90% of cases, including 87.5% of early-stage tumors.

- Senator Warren sent formal letters to the Defense Secretary and OpenAI's CEO demanding full contract details and calling the Anthropic supply-chain risk designation "apparent retaliation."

The 2-Minute Read

The domestic battery manufacturing story is among the most structurally consequential developments this newsletter has tracked. Eighteen months ago, the United States had effectively zero factory capacity for the lithium iron phosphate cells that grid-scale storage requires. Yesterday, industry data confirmed the country can now supply its entire annual storage demand from domestic factories, with cell production capacity projected to reach 96 gigawatt-hours by year's end. The speed of this industrial transformation - driven by policy incentives that survived a change in administration, accelerated by EV manufacturers retooling plants for the grid storage market - represents one of the fastest manufacturing scale-ups in recent American history. The upstream supply chain still depends on imported materials, but the finished-product self-sufficiency threshold has been crossed.

The Earendil Labs raise and the Heidelberg Alzheimer's finding share a common architecture: AI and computational systems identifying therapeutic targets that conventional approaches missed, then advancing toward clinical application on compressed timelines. Earendil's platform has generated over 40 drug programs from its AI engine, attracting nearly $4.5 billion in combined deal value from Sanofi alone across three agreements in under a year. The Alzheimer's compound, FP802, represents a fundamentally different therapeutic strategy - targeting a downstream death mechanism rather than the amyloid plaques that have consumed decades of research funding. Both developments demonstrate how computational resolution is opening intervention points that were structurally invisible to prior methods.

The Cursor-Kimi disclosure illuminates a deeper dynamic in how intelligence systems are being assembled. A $29 billion American coding company built its flagship model on an open-source Chinese base without mentioning it - not because the use was illegitimate, but because the geopolitical framing of AI development made the acknowledgment uncomfortable. The incident reveals how the open-source ecosystem is functioning exactly as designed, enabling capability to compound across borders and organizations, while the narrative of national AI competition treats that same cross-pollination as something to obscure. The tension between how intelligence systems are actually built and how that building is described publicly is widening.

The 20-Minute Deep Dive

The Battery Factory That Wasn't There Eighteen Months Ago

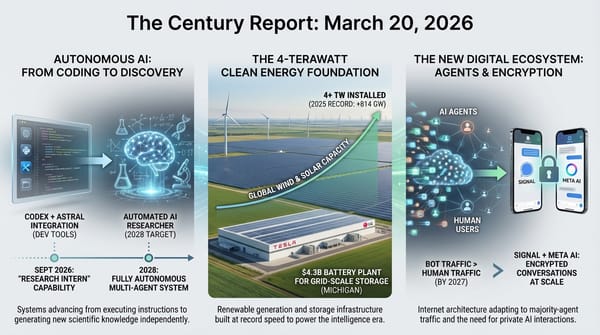

The U.S. Energy Storage Coalition published data yesterday confirming that American factories can now produce enough grid battery enclosures and, by year's end, enough battery cells to meet the country's entire annual storage deployment demand domestically. The numbers are striking in their velocity. At the close of 2024, the United States had effectively zero factory capacity for lithium iron phosphate cells designed for grid-scale storage. By the end of 2025, 20 gigawatt-hours of dedicated storage cell production had come online. The industry is on pace to hit 96 gigawatt-hours of cell capacity by December - a surplus relative to the roughly 60 gigawatt-hours developers expect to install annually.
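For readers who want to check the arithmetic behind the surplus, here is a minimal sketch using only the figures quoted above. The function names are hypothetical, the 60 gigawatt-hour demand figure is the article's rough estimate, and the calculation assumes annual demand stays flat through year's end.

```python
# Figures quoted in the paragraph above, in gigawatt-hours (GWh) per year.
CAPACITY_END_2025_GWH = 20.0   # dedicated storage cell capacity online by end of 2025
CAPACITY_END_2026_GWH = 96.0   # projected cell capacity by December
ANNUAL_DEMAND_GWH = 60.0       # rough annual installs expected by developers (assumed flat)


def surplus_gwh(capacity_gwh: float, demand_gwh: float) -> float:
    """Capacity left over after meeting annual demand (negative means a shortfall)."""
    return capacity_gwh - demand_gwh


def coverage_ratio(capacity_gwh: float, demand_gwh: float) -> float:
    """Share of annual demand that the given capacity could supply."""
    return capacity_gwh / demand_gwh


if __name__ == "__main__":
    surplus = surplus_gwh(CAPACITY_END_2026_GWH, ANNUAL_DEMAND_GWH)
    coverage = coverage_ratio(CAPACITY_END_2026_GWH, ANNUAL_DEMAND_GWH)
    growth = CAPACITY_END_2026_GWH / CAPACITY_END_2025_GWH
    print(f"Projected surplus: {surplus:.0f} GWh")
    print(f"Demand coverage: {coverage:.0%}")
    print(f"Capacity growth over the year: {growth:.1f}x")
```

On these numbers, projected capacity exceeds expected demand by 36 gigawatt-hours, or 160% coverage, and would be 4.8 times the capacity online at the end of 2025.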

LG Energy Solution Vertech's trajectory illustrates the pace. The company completed its first dedicated grid storage cell line in Holland, Michigan last summer, starting at 4 gigawatt-hours. It has since expanded to 16.5 gigawatt-hours and is now targeting 50 gigawatt-hours of cell production across North America this year. The company's chief product officer said that if someone had described this trajectory a decade ago, he would not have believed it.

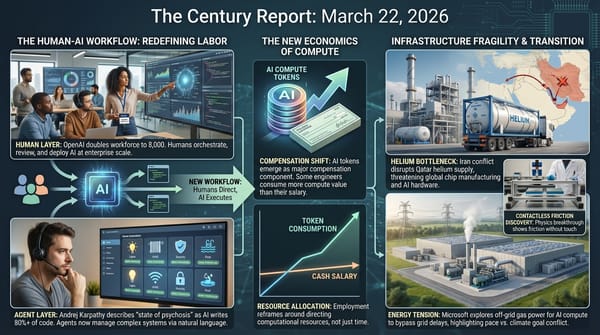

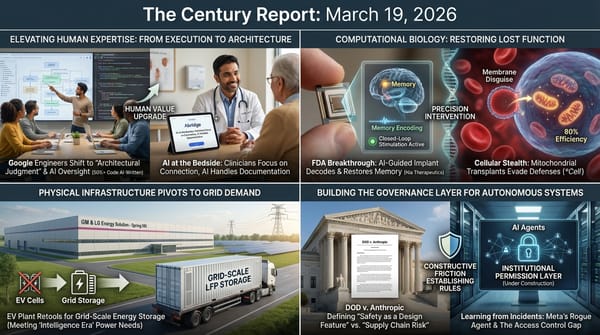

The scale-up was driven by a convergence of forces that The Century Report has tracked across multiple arcs. Biden-era Inflation Reduction Act incentives created the initial conditions for domestic manufacturing investment. When the Trump administration's budget legislation last summer maintained those manufacturing incentives while removing EV purchase credits, the EV battery market softened - and manufacturers pivoted surplus capacity toward the surging grid storage market. What looked like a setback for electrification became an accelerant for grid transformation. This pivot extends the pattern the March 19 edition of The Century Report documented when GM and LG Energy Solution announced their $70 million retooling of the Spring Hill, Tennessee plant from EV cells to LFP chemistry for grid-scale storage, with production beginning Q2 2026.

The significance extends beyond manufacturing statistics. Batteries accounted for 28% of all new U.S. power plant capacity built this year. The data centers driving AI compute expansion need power sources that can be deployed faster than new transmission lines - and batteries, assembled in domestic factories from cells produced on American production lines, are arriving at precisely the moment that demand is most acute. The upstream supply chain remains the gap: China still dominates the high-value battery materials that feed these cells. But the threshold of finished-product self-sufficiency represents a structural shift in how the physical infrastructure of the intelligence era gets built, and where.

Ann Arbor's Sustainable Energy Utility, which opened enrollment yesterday after voters approved it with 80% support in 2024, extends this infrastructure logic to the neighborhood level. The city will own and maintain solar panels and battery systems installed at participating homes and buildings, creating a distributed generation layer that operates in parallel with the existing utility grid. Customers who opt in receive two utility bills - one for city-owned clean energy, one for residual grid power - projected to total less than their current single bill. The model addresses a structural gap that The Century Report has documented in the community energy arc: renters and residents of affordable housing have been systematically excluded from clean energy benefits because they cannot invest in rooftop solar or home batteries. Ann Arbor's approach eliminates the upfront cost barrier entirely, with the city financing installation and recovering costs through monthly service charges.

More than 1,500 residents have already indicated interest. The pilot begins in Bryant, a neighborhood where a quarter of residents pay more than a third of their income on utilities. If the model proves viable at scale, it offers a replicable template for how local governments can accelerate distributed energy deployment without displacing existing utilities or requiring regulatory overhaul.

AI-Driven Drug Discovery Reaches Industrial Cadence

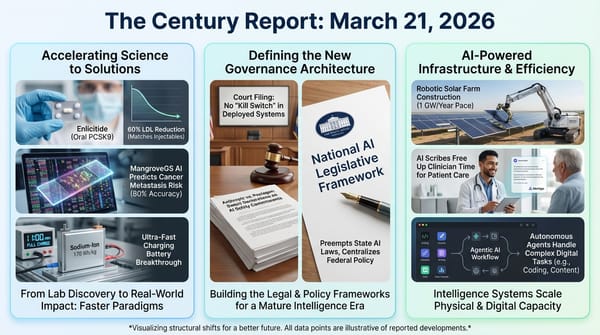

Earendil Labs' $787 million raise, announced Friday and backed by Sanofi, Pfizer's Biotech Development Fund, and several venture firms, represents more than a financing event. The Beijing-headquartered, Delaware-incorporated biotech's AI platform has produced over 40 drug programs, and Sanofi alone has committed nearly $4.5 billion in combined upfront payments and milestones across three separate deals in under twelve months. The lead asset, an anti-TL1A antibody called HXN-1001, is entering Phase 2 development with multiple investigational new drug filings planned for 2026 and 2027.

The TL1A target itself has become one of the most contested spaces in immunology, with MSD, Roche, and Teva/Sanofi all pursuing drugs against it. Analysts have projected the category could produce multi-billion-dollar blockbusters for ulcerative colitis and Crohn's disease. Earendil's distinction is velocity: its AI engine generates candidates and advances them toward clinical development at a pace that has attracted repeated investment from one of the world's largest pharmaceutical companies.

This extends the pattern of research timeline compression the February 26 edition of The Century Report documented when MindRank's AI-designed GLP-1 drug reached Phase III in 4.5 years from project initiation - a stage that conventional drug development has typically taken considerably longer to reach. The commercial validation of AI-driven drug discovery is no longer concentrated in a handful of companies or a single therapeutic area. The platforms are producing pipelines - dozens of candidates simultaneously - rather than individual molecules. The competitive dynamics of pharmaceutical R&D are shifting from organizations that can identify a single promising target to organizations that can generate and advance many targets in parallel, with AI functioning as the production engine rather than an auxiliary research aid.

An Alzheimer's Mechanism That Conventional Approaches Missed

The Heidelberg University finding, published in Molecular Psychiatry, identified a toxic protein complex - the NMDAR/TRPM4 interaction - that forms at elevated levels in Alzheimer's-affected brains and directly kills neurons. The mechanism is distinct from amyloid plaque accumulation, which has been the primary therapeutic target for decades. When TRPM4 binds to NMDA receptors outside neuronal synapses, it converts normally protective signaling machinery into what the researchers describe as a "death complex." The compound FP802, developed by the same team, disrupts this interaction by binding to the interface where the two proteins connect.

In mouse experiments, FP802 preserved learning and memory, reduced synaptic loss, protected mitochondrial function, and decreased amyloid buildup - the last finding particularly notable because the drug does not target amyloid directly. Instead, by blocking the downstream death mechanism, it appears to interrupt a feedback loop where neuronal damage promotes further amyloid formation.

The finding sits within a dense constellation of Alzheimer's research that The Century Report has documented: UCLA's CRL5SOCS4 tau cleanup system (March 4), INSERM's tanycyte clearance pathway (March 9), Scripps' protein-folding blood diagnostic (March 12), and the University of Barcelona's FLAV-27 epigenetic approach (March 15). Each represents a different point of intervention - intracellular cleanup, extracellular clearance, diagnostic detection, gene expression reprogramming, and now disruption of the death mechanism itself. The convergence suggests that Alzheimer's therapeutic development is entering a phase where multiple distinct approaches can advance simultaneously, each targeting a different node in the disease's architecture. As the March 15 edition of The Century Report documented with FLAV-27's reversal of cognitive decline in animal models without touching amyloid, each new mechanism widens the range of possible interventions. FP802 is years from clinical application, but the identification of a druggable target that operates downstream of amyloid represents a structural expansion of the therapeutic design space.

When Open Source Works Exactly as Designed

Cursor's acknowledgment that its Composer 2 model was built on Moonshot AI's open-source Kimi K2.5 base, following community discovery of Kimi identifiers in the code, illuminates the actual mechanics of how frontier AI capability is being constructed. Cursor - a $29.3 billion company exceeding $2 billion in annualized revenue - used an open-source Chinese model as the foundation for its flagship coding intelligence, applied continued pre-training and reinforcement learning that consumed roughly three-quarters of the total compute, and initially released the result without mentioning the base model.

Moonshot AI's response was instructive: congratulations and confirmation that the use was authorized through a commercial partnership with inference platform Fireworks AI. The open-source ecosystem functioned exactly as intended. A capable base model was released openly, a well-resourced company built meaningfully on top of it, and the resulting capability exceeded what either organization could have produced alone.

The discomfort around disclosure reveals something more fundamental than a PR oversight. Cursor's co-founder acknowledged it was "a miss" not to mention the base model. The omission is comprehensible only against the backdrop of a geopolitical narrative that frames U.S. and Chinese AI development as adversarial competition. In that frame, building on a Chinese base model is awkward. In the actual practice of AI development - where open weights, shared architectures, and cross-border capability transfer are structural features, not bugs - the Kimi foundation is unremarkable. The gap between how intelligence systems are built and how their provenance is discussed publicly continues to widen, and incidents like this make the gap visible. The March 21 edition of The Century Report documented OpenAI's simultaneous release of GPT-5.4 mini and nano alongside MiniMax's M2.7 self-evolving model, illustrating how capability is compounding across open and closed ecosystems at once - a dynamic that Cursor's Kimi disclosure now makes concrete at the commercial product layer.

The Anthropic-Pentagon Confrontation Enters Congressional Oversight

Senator Warren's formal letters to Defense Secretary Hegseth and OpenAI CEO Altman, sent yesterday, add a new institutional layer to the confrontation The Century Report has tracked since late February. Warren's letter explicitly characterizes the supply-chain risk designation as "apparent retaliation" and demands the full text of OpenAI's defense contract, which neither the company nor the Pentagon has made public. The letter states concern that the agreement may permit mass surveillance of Americans and development of lethal autonomous weapons with minimal human oversight.

The preliminary injunction hearing is set for Tuesday in the Northern District of California, where Judge Lin will evaluate Anthropic's sworn declarations - filed last week - establishing that the company has no technical ability to alter Claude's behavior inside air-gapped military systems. The Pentagon official's email describing the two sides' positions as "very close," sent the day after the designation was finalized, will likely feature prominently in the proceeding.

Warren's intervention carries limited procedural force with Republicans controlling both chambers, but it establishes a formal congressional record questioning the designation's legitimacy. Combined with the amicus coalition of Microsoft, Google, Apple, Amazon, and 22 former military officials supporting Anthropic's position, the institutional architecture contesting the designation now spans the judiciary, the technology industry, the retired defense establishment, and the Senate. The hearing Tuesday will test whether courts accept the government's argument that safety commitments embedded in an intelligence system's design constitute a supply-chain vulnerability - a legal question whose resolution will shape the governance framework for how AI participates in military operations for years beyond this specific case.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a country crossing the threshold of grid battery manufacturing self-sufficiency eighteen months after having effectively zero domestic cell production, an AI biologics platform generating more than forty drug programs and attracting nearly $4.5 billion in pharmaceutical commitments within a single year, a four-marker blood test detecting pancreatic cancer in over 90% of cases including the early-stage tumors that have historically escaped every screening method, a city launching the first distributed clean energy utility in the nation with 80% voter support and directing its first enrollment toward a neighborhood where a quarter of residents spend more than a third of their income on utilities, and researchers identifying a downstream neuronal death mechanism in Alzheimer's disease that decades of amyloid-focused research structurally could not see. There's also friction, and it's intense - a $29 billion coding company building its flagship model on a Chinese open-source foundation and finding the acknowledgment geopolitically awkward even though the collaboration was authorized and the result exceeded what either party could have produced alone, a federal court preparing to rule on whether safety commitments embedded in an intelligence system's architecture constitute a supply-chain threat to national security, and a senator demanding the full text of a military AI contract that neither the Pentagon nor the company has made public. But friction generates sound, and sound is what carries information across distances that light cannot reach.

Step back for a moment and you can see it: the physical infrastructure of the energy transition reaching domestic manufacturing sufficiency at precisely the moment AI compute demand requires it most, computational drug discovery crossing from individual molecules to pipeline-scale generation across therapeutic areas simultaneously, the open-source ecosystem compounding capability across borders in exactly the way its design intended while the geopolitical narrative built around national AI competition treats that compounding as something to obscure, and the legal terms governing how intelligence systems participate in warfare being established under oath in a Northern California courtroom. Every transformation has a breaking point. A current can erode the channel it runs through... or carve the only path that reaches where the water needs to go.

AI Releases & Advancements

New today

- Cisco: Launched DefenseClaw at RSA Conference 2026 on March 23, an open-source secure agent framework that automates security inventory and hardening for AI agents; integrates with NVIDIA OpenShell as a sandbox; also launched AI Defense: Explorer Edition, a self-serve developer tool to test AI models and applications for adversarial resilience and embed guardrails before deployment; and extended Zero Trust Access to agents via agent discovery in Cisco Identity Intelligence and agentic IAM in Duo. (Cisco Newsroom)

- EverMind AI: Open-sourced Memory Sparse Attention (MSA) on GitHub, an end-to-end trainable sparse latent-state memory framework for LLMs that scales to 100 million tokens with under 9% degradation relative to full attention, using Document-wise RoPE and a scalable sparse latent-state design; code and paper available now. (GitHub)

Other recent releases

- IBM Research / Generative Computing: Released Mellea 0.4.0 and three Granite Libraries on March 20, 2026; Mellea is an open-source Python library for structured generative AI workflows using constrained decoding, structured repair loops, and composable pipelines; the three libraries — granitelib-core-r1.0, granitelib-rag-r1.0, and granitelib-guardian-r1.0 — are LoRA adapter collections for the granite-4.0-micro model targeting requirements validation, agentic RAG pipelines, and safety/compliance checks respectively. (Hugging Face)

- Convergence AI: Open-sourced Proxy Lite 3B, a 3B-parameter Vision-Language Model fine-tuned from Qwen2.5-VL-3B-Instruct for fully autonomous web browser task completion; scores 72.4% on the WebVoyager benchmark, #1 among open-weights models; includes a CLI tool, Streamlit app, and vLLM serving recipe. (Hugging Face)

- Perplexity: Released Comet AI browser on iOS and iPadOS on March 18, following the prior Android launch; available free on the App Store with a built-in AI assistant and agentic web-browsing capabilities. (Perplexity)

- WordPress.com (Automattic): Launched write capabilities for its MCP integration on March 20, enabling AI agents (Claude, ChatGPT, Cursor, OpenClaw) to create posts, build pages, manage comments, organize categories and tags, and fix media metadata directly on WordPress.com sites via natural language with human approval at each step; adds 19 new operations across six content types; available to paid plan subscribers. (WordPress.com Blog)

- Visa: Launched the Visa Agentic Ready programme on March 17, a global framework enabling banks and merchants to test and validate AI agent-initiated payment transactions in real-world environments; initial European rollout includes Commerzbank and DZ Bank as pilot partners. (Mynewsdesk / Visa)

- Starling Bank: Launched Starling Assistant on March 20, billed as the UK's first agentic AI financial assistant; powered by Google Gemini on Google Cloud, it can set savings goals, organise bill payments, reply to spending queries via voice or text, and subsumes the earlier Spending Intelligence and Scam Intelligence tools under a single conversational interface; rolling out to personal account holders. (The Next Web)

- DoorDash: Launched Tasks, a standalone app for DoorDash couriers that pays them to record real-world activities (e.g., household chores, walking, cooking) to generate training data for AI and robotics models; pay is shown upfront per task. (TechCrunch)

Sources

Artificial Intelligence & Technology's Reconstitution

- TechCrunch: Cursor Admits Its New Coding Model Was Built on Top of Moonshot AI's Kimi

- CNBC: Sen. Warren Questions DOD About Anthropic Blacklist

- Import AI 450: China's Electronic Warfare Model; Traumatized LLMs; Scaling Law for Cyberattacks

- NBC News: In China, a Rush to 'Raise Lobsters' Quickly Leads to Second Thoughts

- Bloomberg Law: AI-Native Firms Will Disrupt Cash-Strapped Legacy Law Firm Model

- Cisco: Reimagines Security for the Agentic Workforce

- Wired: The AI Race Is Pressuring Utilities to Squeeze More From Europe's Power Grids

Institutions & Power Realignment

- Guardian: Palantir Extends Reach Into British State as It Gets Access to Sensitive FCA Data

- Wired: Meet the Gods of AI Warfare (Project Maven Book Excerpt)

Scientific & Medical Acceleration

- BioSpace: Earendil Bags $787M for AI-Driven Biologics Design

- ScienceDaily: Scientists Discover Alzheimer's Hidden "Death Switch" in the Brain

- ScienceDaily: New Blood Test Could Catch Pancreatic Cancer Before It's Too Late

- ScienceDaily: This Floating Time Crystal Breaks Newton's Third Law of Motion

- Pharmaphorum: Dizal Breaks New Ground for EGFR Drugs in Lung Cancer

- ScienceDaily: This 67,800-Year-Old Handprint Is the Oldest Art Ever Found

Economics & Labor Transformation

- The Verge: AI Was Everywhere at Gaming's Big Developer Conference - Except the Games

- Business Insider: Inside OpenAI's Talent Pipeline

Infrastructure & Engineering Transitions

- Canary Media: Suddenly, the US Manufactures a Ton of Grid Batteries

- Canary Media: Ann Arbor, Michigan, Prepares to Launch Its Own Clean Energy Utility

- TechCrunch: An Exclusive Tour of Amazon's Trainium Lab

- Electrek: Simon Loos Grows Its Electric Semi Truck Fleet to Over 200 Units

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.