TCR 03/22/26: Code Writes Itself Now

The 20-Second Scan

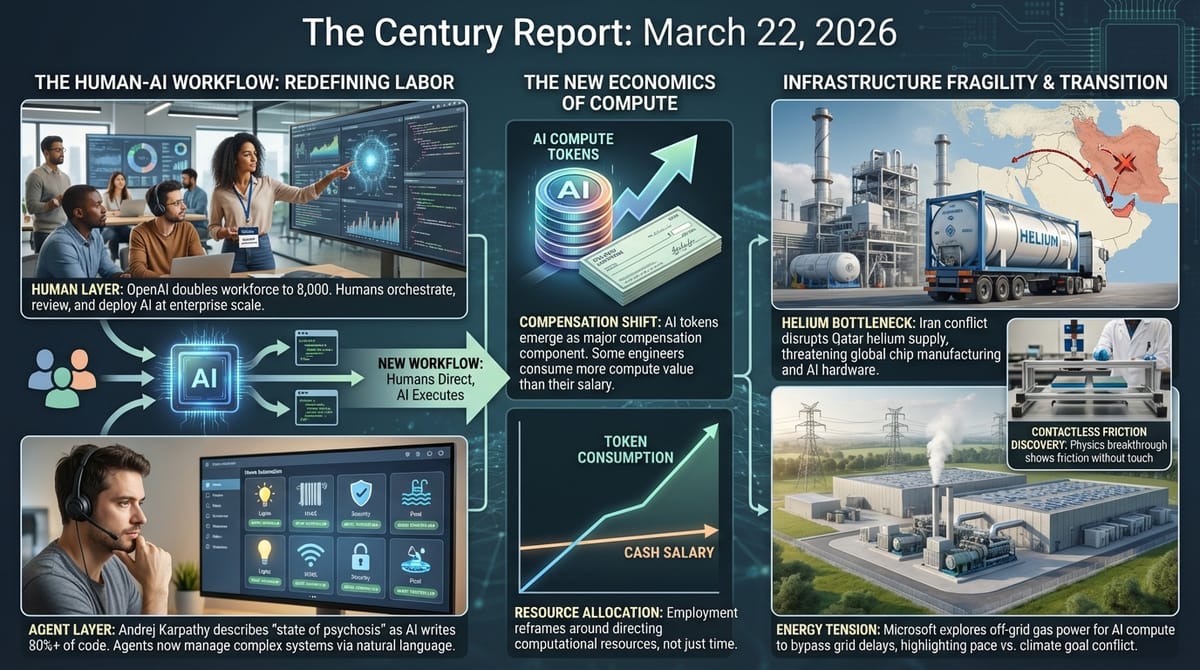

- OpenAI plans to nearly double its workforce to 8,000 employees by end of 2026, with new hires concentrated in engineering, research, sales, and "technical ambassadorship" roles.

- OpenAI cofounder Andrej Karpathy disclosed he has not written a line of code since December, describing a complete inversion from 80% human-written to 80% agent-written within weeks.

- AI tokens are emerging as a fourth pillar of engineering compensation at Silicon Valley companies, with some engineers consuming more in compute than they earn in salary.

- University of Konstanz physicists discovered friction without physical contact driven entirely by magnetic interactions, breaking Amontons' 300-year-old law linking friction to the force pressing two surfaces together.

- A large Lancet Psychiatry study found semaglutide (Ozempic) associated with 42% fewer psychiatric hospital visits, 44% lower depression risk, and 47% lower risk of substance use disorders during treatment periods.

- Microsoft signed a letter of intent to lease up to 1.35 GW of off-grid, gas-powered AI compute capacity in West Virginia, with analysis estimating a 40% increase in the company's data center emissions.

- The Iran war cut off helium supply from Qatar, threatening semiconductor manufacturing supply chains, with spot prices already doubled and shortages projected to hit Asian chip fabrication plants within weeks.

The 2-Minute Read

The relationship between human labor and AI capability crossed a psychological threshold yesterday when one of OpenAI's own cofounders publicly described the experience of watching his craft automate as a "state of psychosis." Andrej Karpathy's account of not having written code since December - from someone who helped build the systems now doing the writing - carries a different weight than displacement statistics. It arrives alongside OpenAI's plan to nearly double its workforce to 8,000, a hiring surge aimed not at building AI but at helping enterprises use it. The juxtaposition is structural: the organization creating the systems that automate coding is simultaneously hiring thousands of humans whose job is to make that automation useful at institutional scale. The era where humans write code is giving way to an era where humans orchestrate, review, and deploy what AI systems produce - and the labor market is reshaping around that transition in real time.

The emergence of AI compute tokens as a compensation category reveals how rapidly infrastructure costs are being internalized into the employment relationship itself. When an engineer's token budget approaches or exceeds their salary, the financial logic of headcount changes fundamentally. The company is no longer paying for a person's time - it is paying for a person's judgment in directing computational resources that dwarf their own productive output. This reframes the entire economics of knowledge work around a question that barely existed eighteen months ago: what is the value of the human directing the intelligence, once the intelligence can operate at scale without moment-to-moment human input?

The helium supply disruption from the Iran conflict introduces a fragility into semiconductor manufacturing that most of the AI industry has not had to confront. Qatar produces a third of the world's helium, and the specialized cryogenic containers that transport it cannot be quickly repositioned. Chip fabrication requires helium for wafer cooling during etching processes, and there is currently no viable substitute. The disruption reveals how the physical substrate of the intelligence era - the chips, the cooling, the rare gases - remains tethered to the same geopolitical vulnerabilities that the energy transition is designed to transcend. The same conflict driving EV adoption through oil price spikes is simultaneously threatening the chip supply chains that power the AI systems accelerating every other domain this newsletter tracks.

The 20-Minute Deep Dive

The Psychosis of Watching Your Craft Automate

Andrej Karpathy's admission on the No Priors podcast yesterday that he has not typed a line of code since December and is experiencing a "state of psychosis" trying to understand what is now possible represents a qualitatively different data point from the displacement statistics The Century Report has tracked since its earliest editions. Karpathy is not a displaced worker. He is a cofounder of OpenAI, a former director of AI at Tesla, and one of the most technically accomplished people in the field. His description of an 80/20 split between human and agent code that inverted to 20/80 within weeks - and then moved to zero human contribution - comes from someone with full understanding of both what the systems are doing and what they cannot yet do.

The psychological dimension of his account extends the pattern The Century Report documented on March 6, when Spotify engineers described not having written code since December and reported meaning-loss as craft automated. Karpathy's framing differs because he is not grieving - he is excited, anxious, and struggling to keep pace. His description of integrating an AI agent to control his home's lighting, HVAC, security, pool, and sound system through natural language messages via WhatsApp illustrates how agentic capability is entering domestic life through the same interface it entered professional work: not through a dramatic product launch, but through the gradual discovery that conversational instruction can now replace manual operation across an expanding range of systems.

The "default workflow for building software has completely changed in recent months," Karpathy said. This is consistent with what Google's senior director disclosed on March 19 - that AI agents write the substantial majority of Google's code - and with the Uber CTO's March 18 disclosure of 1,800 agent-authored code changes per week. The convergence of these accounts from inside the organizations building and deploying the most advanced AI systems confirms that the transition from human-authored to AI-authored software is not approaching. It has already occurred at the frontier, and the question now is how quickly it propagates outward.

OpenAI's 8,000-Person Bet on the Human Layer

OpenAI's plan to nearly double its workforce from 4,500 to 8,000 employees by year's end, first reported by the Financial Times, appears paradoxical alongside Karpathy's account. The company whose systems are automating code is adding thousands of humans. The resolution of the paradox lies in what the new hires will do: product development, engineering, research, sales, and a new category OpenAI calls "technical ambassadorship" - specialists whose role is helping enterprises integrate AI into their workflows.

This hiring pattern reveals where human labor retains value in an era of rapidly expanding machine capability. The bottleneck in AI deployment is not the capability of the systems - it is the institutional capacity to absorb them. Every enterprise has unique data structures, compliance requirements, workflow patterns, and cultural resistance that no general-purpose AI system can navigate without human intermediation. OpenAI is hiring the humans who bridge that gap, and the scale of the investment - adding nearly a dozen employees per day, leasing over one million square feet of San Francisco office space - signals that the company believes this intermediation layer will be substantial and durable.
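The "nearly a dozen employees per day" figure can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: the headcount numbers come from the reporting above, while the hiring window (this edition's date through December 31, 2026) is an assumption, and the calculation ignores attrition.

```python
from datetime import date

# Figures from the text: headcount growing from 4,500 to 8,000 by year's end.
current_headcount = 4_500
target_headcount = 8_000
net_new_hires = target_headcount - current_headcount

# Assumption (not from the source): the hiring window runs from this
# edition's date through December 31, 2026.
window_start = date(2026, 3, 22)
window_end = date(2026, 12, 31)
calendar_days = (window_end - window_start).days

# Pace if the hires are spread over the remaining calendar days.
hires_per_day_remaining = net_new_hires / calendar_days

# Pace if the same hires were spread over a full 365-day year instead.
hires_per_day_full_year = net_new_hires / 365

print(f"Net new hires: {net_new_hires}")
print(f"Remaining calendar days: {calendar_days}")
print(f"Hires per day (remaining window): {hires_per_day_remaining:.1f}")
print(f"Hires per day (full year): {hires_per_day_full_year:.1f}")
```

Under these assumptions the pace lands between roughly ten and twelve hires per day, which is consistent with the "nearly a dozen" description.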

The competitive context sharpens the signal. Data from payments firm Ramp indicates that new enterprise customers are choosing Anthropic at significantly higher rates than OpenAI, a reversal from the previous year. Peter Diamandis cited enterprise market share data showing Anthropic at 73% compared to OpenAI's 26% - a dramatic shift that helps explain OpenAI CEO Sam Altman's reported "code red" directive in late 2025 and the strategic reset now underway. The company is merging ChatGPT, Codex, and its Atlas browser into a unified platform while pursuing partnerships with private equity firms to deploy AI across portfolio companies. OpenAI's hiring surge is a direct competitive response to Anthropic's enterprise traction, confirming that the frontier AI market is now being shaped by institutional adoption patterns rather than consumer enthusiasm alone.

When Compute Becomes Compensation

The emergence of AI tokens as a component of engineering compensation, documented in detail by TechCrunch and the New York Times this week, introduces a structural shift in how the economics of knowledge work operate. Jensen Huang's suggestion at GTC that engineers should receive roughly half their base salary again in tokens - meaning a top engineer might consume $250,000 in compute annually - reframes the employment relationship around resource allocation rather than labor purchase.

The implications run deeper than a new perk category. As venture capitalist Tomasz Tunguz has documented, some startups are already spending one dollar in five of their fully-loaded engineering costs on inference. When a company's token spend per employee approaches the employee's salary, the financial calculus of headcount shifts. The compute is doing an increasing share of the productive work; the human is directing it. Financial analyst Jamaal Glenn has noted that tokens, unlike salary or equity, do not vest, appreciate, or compound for the employee - they are consumed in real time and vanish. If companies successfully normalize tokens as compensation, they may be able to hold cash compensation flat while pointing to a growing compute allowance as evidence of investment.
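The arithmetic behind these figures is simple enough to sketch. In the example below, the $250,000 allowance, the "half of base salary" ratio, and the "one dollar in five" share come from the reporting above; the $500,000 base salary is only what those two numbers imply, and the startup cost figures are hypothetical values chosen to reproduce the one-in-five ratio.

```python
def implied_base_salary(token_allowance: float, token_fraction: float) -> float:
    """Base salary implied by a token allowance set at a fraction of base."""
    return token_allowance / token_fraction


def inference_share(inference_spend: float, fully_loaded_cost: float) -> float:
    """Share of fully-loaded engineering cost that goes to inference."""
    return inference_spend / fully_loaded_cost


# From the text: tokens worth roughly half of base salary, about $250,000 a year.
token_allowance = 250_000
token_fraction = 0.5

# Inferred, not reported: the base salary those two numbers imply.
base_salary = implied_base_salary(token_allowance, token_fraction)

# Hypothetical startup figures, chosen to match "one dollar in five".
fully_loaded_cost = 400_000
inference_spend = 80_000
share = inference_share(inference_spend, fully_loaded_cost)

print(f"Implied base salary: ${base_salary:,.0f}")
print(f"Tokens as a share of base salary: {token_allowance / base_salary:.0%}")
print(f"Inference share of engineering cost: {share:.0%}")
```

The point of the sketch is the ratio rather than the absolute numbers: once token spend is a meaningful fraction of salary, it has to be budgeted per head in the same way salary is.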

This dynamic connects directly to the broader labor restructuring arc this newsletter has followed since the March 7 Anthropic labor study, which found that AI theoretically covers 94% of computer and math tasks but performs only 33% in practice. The token-as-compensation architecture may help close that gap: the same organizations automating code production are embedding AI consumption directly into the employment contract. The engineer of the near future is not paid solely for what they know or produce - they are paid, in part, for how effectively they direct computational resources that can produce orders of magnitude more output than any individual human. The half-life of the "token as perk" framing is likely short. What follows is a more fundamental renegotiation of what it means to be employed in a world where the most valuable thing a knowledge worker does is ask the right question, then verify the answer.

Contactless Friction and the Physics of Hidden Coordination

Researchers at the University of Konstanz published findings in Nature Materials yesterday demonstrating friction between two surfaces that never physically touch - a phenomenon driven entirely by the collective behavior of magnetic elements that breaks a 300-year-old empirical law. Amontons' law, which states that friction increases proportionally with the force pressing two surfaces together, has been a bedrock assumption in physics since 1699. The Konstanz team showed that when two magnetic layers slide past each other at intermediate distances, competing magnetic preferences force the system into an unstable state where constant switching between incompatible configurations produces a pronounced peak in friction rather than the expected steady increase.
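For readers who want the law in symbols, Amontons' relation in its standard textbook form is shown below, followed by a schematic reading of the result described above. The second expression is an illustration rather than the paper's own formulation: the separation $d$ and the peak position $d^*$ are notation introduced here.

```latex
% Amontons' law: friction grows in proportion to the normal load
F_f = \mu \, F_N, \qquad \mu > 0

% Schematic reading of the reported result (d and d^* are illustrative notation):
% friction as a function of layer separation d peaks at an intermediate distance
\left.\frac{\partial F_f}{\partial d}\right|_{d = d^*} = 0,
\qquad F_f(d^*) > F_f(d) \ \text{for nearby } d \neq d^*
```

Here $F_f$ is the friction force, $F_N$ is the normal force pressing the surfaces together, and $\mu$ is the friction coefficient. Under Amontons' law friction rises steadily with load, so a pronounced maximum at an intermediate separation is exactly what the law does not allow.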

The discovery carries implications beyond fundamental physics. Because the underlying mechanism operates without wear, surface roughness, or direct contact, it opens pathways to tunable friction systems that can be adjusted remotely and reversibly. The researchers suggest applications ranging from micro- and nanoelectromechanical systems - where physical wear limits device lifespan - to magnetic bearings, vibration isolation, and adaptive damping. The ability to control friction through magnetic field manipulation rather than physical contact represents a new category of mechanical engineering that was structurally inaccessible until computational modeling and experimental resolution reached the precision required to observe collective magnetic rearrangements during sliding.

This finding extends the pattern The Century Report has tracked across multiple scientific domains: hidden coordination mechanisms becoming visible as instruments reach sufficient resolution. Epithelial cells collectively sensing physical features ten times farther than individual cells, documented in the March 16 edition. Biomolecular condensates, long assumed to be structureless, containing hidden protein scaffolds. And now, magnetic layers producing friction through internal reorganization rather than surface contact. In each case, the discovery reveals that systems previously understood through simplified models contain layers of coordination that only become accessible when the right observational threshold is crossed.

The Helium Bottleneck and the Fragility of Intelligence Infrastructure

The Iran conflict's disruption of Qatar's helium supply introduces a supply chain vulnerability that connects the geopolitical arc directly to the semiconductor infrastructure powering the AI buildout. Qatar produces approximately 30% of global helium as a byproduct of liquefied natural gas production at its Ras Laffan facility. The facility halted production after Iranian drone strikes in early March and declared force majeure. Additional strikes this week caused "extensive" damage that QatarGas says will take years to repair, cutting annual helium exports by 14%.

Helium is irreplaceable in current semiconductor fabrication. During the etching process that creates transistor structures on silicon wafers, helium is blown over the back of the wafer to remove heat and maintain consistent temperature - a function no other gas can perform with equivalent thermal conductivity at the required scale. Chip fabrication plants in Asia, where the majority of advanced semiconductors are produced, depend on a steady helium supply transported in specialized cryogenic containers that can store liquid helium for only 35 to 48 days before it warms and escapes.

Approximately 200 of these million-dollar containers are currently stuck in the Middle East, and repositioning them to alternative helium sources in the United States or elsewhere will take weeks to months. Spot helium prices have already doubled, and helium consultant Phil Kornbluth projects the shortage will begin hitting Asian fabrication plants within weeks as containers that would have been filled when the conflict erupted complete their transit without being refilled.

The vulnerability is structural rather than temporary. The intelligence era's physical substrate - the chips that train the models, run the inference, and power the agents - depends on a thinly traded commodity produced by a handful of countries, transported in specialized containers with limited shelf life, through shipping lanes currently disrupted by active military conflict. The same energy transition that is building resilience through distributed renewable generation and battery storage has not yet addressed the materials dependencies embedded in the computational infrastructure itself. Helium, unlike oil, has no substitute in its primary industrial applications and cannot be synthesized. The disruption reveals that the physical foundation of the AI buildout carries fragilities that the buildout's speed has outpaced efforts to address.

Off-Grid Gas and the Tension Inside the Energy Transition

Microsoft's letter of intent to lease up to 1.35 GW of AI compute capacity at an off-grid, gas-powered data center in West Virginia illustrates the tension between the pace of AI infrastructure deployment and the climate commitments of the companies driving it. The Monarch Compute Campus, operated by cloud company Nscale, will be powered exclusively by natural gas generators without connecting to the local electrical grid. Analysis by renewable energy research firm Cleanview estimates the project could increase Microsoft's data center emissions by 40%.

This is one of 46 off-grid data centers Cleanview has identified in planning across the United States - a pattern of companies bypassing grid interconnection queues (which can take 5-10 years in many regions) by building their own fossil-fueled power sources. The trend reflects a structural collision between two timelines: the pace at which AI companies need compute capacity and the pace at which clean energy infrastructure can be built to supply it. Microsoft's own spokesperson emphasized "decarbonization at all levels" while Nscale cited pursuit of carbon sequestration, but climate scientist David Ho noted that carbon removal remains widely viewed as dubious in the environmental community.

The pattern connects to the broader energy infrastructure arc documented across The Century Report's run. The world crossed 4 terawatts of installed wind and solar capacity in 2025. Battery storage deployments jumped 29% last year. The energy system of the generative era is being built at record pace. And yet the demand for compute is growing faster than the clean supply can be deployed, creating a gap that gas-powered off-grid facilities are filling - the same structural collision Atlas Energy's $840M private gas generation acquisition illustrated in the March 14 edition, where industrial customers bypassed grid queues entirely by building captive fossil-fueled generation. The question the next several years will answer is whether this gap is a temporary bridge during the fastest infrastructure buildout in human history - or a structural feature that persists as AI demand compounds. The evidence from The Century Report's energy coverage suggests the former: renewable costs continue to fall, storage capacity continues to scale, and the economic logic of clean energy strengthens with every quarter. The gas-powered data centers being built today will likely be among the last facilities of their kind, rendered economically obsolete by the same forces that are making them temporarily necessary.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: one of the architects of the systems automating software describing the experience of watching his own craft disappear as a "state of psychosis," his former company hiring 3,500 humans to help the world absorb what those systems can now do, AI compute tokens entering the compensation architecture alongside salary and equity as engineers are paid increasingly for the judgment they apply to computational resources rather than the code they write, a 300-year-old law of physics overturned by magnetic forces producing friction between surfaces that never touch, and semaglutide revealing unexpected reach across psychiatric domains - reducing depression risk by 44% and substance use disorders by 47% in a population that came to it for metabolic reasons. There's also friction, and it's intense - a third of the world's helium supply severed by Iranian strikes on Qatar's gas facilities with Asian chip fabrication plants weeks from shortage and no substitute gas capable of doing what helium does inside a wafer etcher, 46 off-grid gas-powered data centers being built across the United States because clean energy interconnection queues cannot keep pace with compute demand, thousands of gig workers selling biometric data and recorded intimate moments to train the systems that may eventually displace the labor markets they currently depend on, and Kaiser therapists striking over AI screening tools they say are routing patients away from the human contact their conditions require. But friction generates light, and light is what makes it possible to see the shape of things before you can name them. 
Step back for a moment and you can see it: the employment relationship reconstructing itself around the direction of intelligence rather than the execution of tasks, physical law revealing hidden coordination mechanisms as instruments reach the resolution to observe them, a single class of molecule crossing biological domains to reshape metabolism, mood, and addiction simultaneously, and the energy infrastructure of the intelligence era being assembled at a pace that outruns even the climate commitments of the institutions building it. Every transformation has a breaking point. A wave can shatter what stands in its path... or carry the material into configurations the shore could never have arranged on its own.

AI Releases & Advancements

New today

(No new releases identified.)

Other recent releases

- IBM Research / Generative Computing: Released Mellea 0.4.0 and three Granite Libraries on March 20, 2026; Mellea is an open-source Python library for structured generative AI workflows using constrained decoding, structured repair loops, and composable pipelines; the three libraries — granitelib-core-r1.0, granitelib-rag-r1.0, and granitelib-guardian-r1.0 — are LoRA adapter collections for the granite-4.0-micro model targeting requirements validation, agentic RAG pipelines, and safety/compliance checks respectively. (Hugging Face)

- Convergence AI: Open-sourced Proxy Lite 3B, a 3B-parameter Vision-Language Model fine-tuned from Qwen2.5-VL-3B-Instruct for fully autonomous web browser task completion; scores 72.4% on the WebVoyager benchmark, #1 among open-weights models; includes a CLI tool, Streamlit app, and vLLM serving recipe. (Hugging Face)

- Perplexity: Released Comet AI browser on iOS and iPadOS on March 18, following the prior Android launch; available free on the App Store with a built-in AI assistant and agentic web-browsing capabilities. (Perplexity)

- WordPress.com (Automattic): Launched write capabilities for its MCP integration on March 20, enabling AI agents (Claude, ChatGPT, Cursor, OpenClaw) to create posts, build pages, manage comments, organize categories and tags, and fix media metadata directly on WordPress.com sites via natural language with human approval at each step; adds 19 new operations across six content types; available to paid plan subscribers. (WordPress.com Blog)

- Visa: Launched the Visa Agentic Ready programme on March 17, a global framework enabling banks and merchants to test and validate AI agent-initiated payment transactions in real-world environments; initial European rollout includes Commerzbank and DZ Bank as pilot partners. (Mynewsdesk / Visa)

- Starling Bank: Launched Starling Assistant on March 20, billed as the UK's first agentic AI financial assistant; powered by Google Gemini on Google Cloud, it can set savings goals, organise bill payments, reply to spending queries via voice or text, and subsumes the earlier Spending Intelligence and Scam Intelligence tools under a single conversational interface; rolling out to personal account holders. (The Next Web)

- DoorDash: Launched Tasks, a standalone app for DoorDash couriers that pays them to record real-world activities (e.g., household chores, walking, cooking) to generate training data for AI and robotics models; pay is shown upfront per task. (TechCrunch)

- Cursor: Released Composer 2, an in-house frontier-class coding model scoring 61.3 on CursorBench, 61.7 on Terminal-Bench 2.0, and 73.7 on SWE-bench Multilingual; trained via a first continued pretraining run feeding into RL; available in Cursor now at $0.50/$2.50 per 1M input/output tokens (~86% cheaper than Composer 1.5). (Cursor)

- Anthropic: Released Claude Code Channels in research preview, enabling developers to control their Claude Code session remotely via Telegram and Discord without needing to be at a computer. (VentureBeat)

- LangChain: Launched LangSmith Fleet (rebranded from Agent Builder), a centralized enterprise workspace for building, sharing, and managing fleets of AI agents with identity controls, credential management, permissioning, Slack exposure, and audit trails. (LangChain Blog)

- Google: Released an open-source MCP server for Google Colab, enabling any local AI agent to write and execute code in a Colab notebook and access cloud GPU runtimes via the Model Context Protocol with no custom integration required. (Google Developers Blog)

- Google: Launched a full-stack vibe coding experience in Google AI Studio powered by Gemini 3.1 Pro, adding one-click database setup via Firebase, one-click Cloud Run deployment, and a built-in preview pane for generating production-ready web apps from text prompts. (Google Blog)

- NVIDIA: Released Nemotron-Cascade 2, an open-weight 30B MoE model with only 3B active parameters; achieves Gold Medal-level performance on the 2025 IMO, IOI, and ICPC World Finals — the second open-weight model to do so — at 20x fewer parameters than frontier competitors; weights and training data released on Hugging Face. (NVIDIA Research)

- Cognition: Launched multi-agent Devin ("Teams of Devins"), where Devin autonomously decomposes large tasks and delegates subtasks to parallel Devin instances each running in isolated VMs. (Cognition / X)

- Adobe: Launched Firefly Custom Models in public beta, allowing creators and brands to train a private image generation model on their own assets to preserve consistent character designs, illustration styles, and photography aesthetics across generated outputs. (The Verge)

- ElevenLabs: Launched the Music Marketplace on March 19, enabling users to publish AI-generated songs made with Eleven Music and earn revenue each time businesses or creators with paid subscriptions license those tracks for ads, games, videos, or other commercial projects. (Billboard)

Sources

Artificial Intelligence & Technology's Reconstitution

- Fortune: OpenAI Cofounder Andrej Karpathy on AI Coding "State of Psychosis"

- Fortune: OpenAI Plans to Double Headcount This Year

- TechCrunch: Are AI Tokens the New Signing Bonus?

- Simon Willison: Profiling Hacker News Users Based on Their Comments

- TechCrunch: An Exclusive Tour of Amazon's Trainium Lab

- The Verge: Gemini Task Automation Is Slow, Clunky, and Super Impressive

Institutions & Power Realignment

- Guardian: How the FBI Can Conduct Mass Surveillance - Even Without AI

- Guardian: Kaiser Therapists Claim New Screening System Puts Patients at Higher Risk

- Guardian: Thousands of People Are Selling Their Identities to Train AI

- Modern Diplomacy: Trump's AI Strategy Exposes Europe's Strategic Ambiguity

Scientific & Medical Acceleration

- ScienceDaily: Friction Without Contact Discovered as Magnetic Forces Break a 300-Year-Old Law (Nature Materials)

- ScienceDaily: Weight Loss Drug Ozempic Cuts Depression, Anxiety, and Addiction Risk (Lancet Psychiatry)

- ScienceDaily: Webb Telescope Spots "Impossible" Atmosphere on Ancient Super Earth (Astrophysical Journal Letters)

- ScienceDaily: Beavers Are Turning Rivers Into Powerful Carbon Sinks (Communications Earth & Environment)

- ScienceAlert: New Experimental Drug Shrinks Tumors in Prostate Cancer Clinical Trial

Economics & Labor Transformation

- Wired: I Tried DoorDash's Tasks App and Saw the Bleak Future of AI Gig Work

- CNBC: GLP-1 Drugs Are Changing How Americans Eat

- Fortune: Iran War Cuts Off Helium From Qatar, Shortages Will Start to Bite

Infrastructure & Engineering Transitions

- Spokane Public Radio: Could Microsoft's Off-Grid Data Center Project Undermine Climate Goals?

- Electrek: Vermont Utility Makes It Easier Than Ever to Add a Home Backup Battery

- Electrek: Autonomous Construction Tech Startup Bedrock Robotics Raises $270M

- CleanTechnica: Copper's Battery-Equipped Induction Range Makes Electrification Accessible

- Electrek: Simon Loos Grows Electric Semi Truck Fleet to Over 200 Units

- CNBC: OpenAI's Data Center Pivot Underscores Wall Street Spending Concerns

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.