TCR 03/18/26: Safety Becomes a Weapon

The 20-Second Scan

- The Justice Department argued in federal court that Anthropic's safety commitments could lead the company to "sabotage" or "subvert" warfighting systems, and that the supply-chain risk designation was lawfully applied.

- The Pentagon disclosed plans to establish secure environments for AI companies to train models directly on classified military data, a capability beyond the current question-answering deployments.

- Mamba 3, an open-source state-space model architecture, arrived with benchmark scores exceeding same-size Transformers by approximately two points on average.

- University of Waterloo researchers found that AI's global climate impact is far smaller than expected, with energy consumption comparable to Iceland's total but too small to significantly affect national or global emissions.

- MIT physicists built a terahertz microscope that observed superconducting electron behavior never previously seen; the results are published in Nature.

- Northwestern University surgeons kept a patient alive for 48 hours without lungs using an engineered artificial lung system, providing the first molecular proof that some acute respiratory failure cases require transplant rather than support.

- OpenAI signed a deal with AWS to distribute its AI systems to U.S. government agencies for classified and unclassified work, expanding its federal footprint beyond the Pentagon contract.

The 2-Minute Read

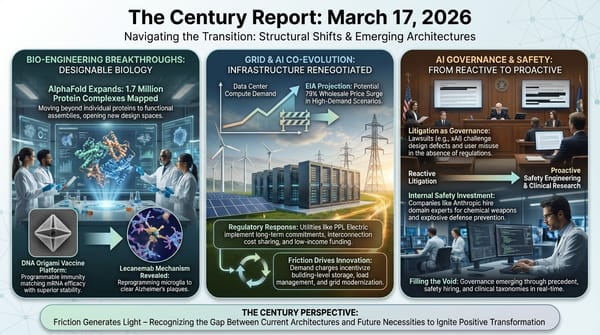

The Anthropic-Pentagon confrontation has entered its most revealing phase yet. The Justice Department's court filing frames safety commitments embedded in an AI system's architecture as potential vectors for sabotage during active military operations - a legal argument that treats the values an intelligence system was designed with as threats to national security infrastructure. Simultaneously, the Pentagon disclosed plans to let AI companies train models directly on classified data, moving from question-answering to actual learning from military intelligence. Taken together, these two developments describe the structural collision between an emerging intelligence infrastructure that carries its design principles into every deployment and a military apparatus that requires unconditional operational control. The frameworks governing this relationship are being written in federal courtrooms and secure data centers at once, in real time and under wartime pressure.

The arrival of Mamba 3 as an open-source architecture that outperforms same-size Transformers introduces genuine architectural diversity at the moment of greatest Transformer dominance. State-space models process sequences with computational costs that scale linearly rather than quadratically with length, so their advantage grows as context windows and agentic task chains get longer. This is structural, not incremental - a different mathematical foundation for how intelligence systems process information, released openly for anyone to build on. Combined with the Nvidia-Mistral Nemotron Coalition announced earlier this week and the broader pattern of open-weight model releases this newsletter has tracked, the architectural substrate of AI development is diversifying along multiple axes simultaneously.

The day's scientific findings converge on a shared theme: observation thresholds being crossed. A terahertz microscope compressed long-wavelength light into a region small enough to detect a quantum behavior of electrons inside superconductors that had never been directly observed - a "superfluid" oscillation predicted by theory but structurally invisible until this instrument existed. And surgeons who removed both lungs from a dying patient and kept him alive on an artificial system for 48 hours provided the first molecular proof that some acute lung damage is irreversible - a biological fact that was assumed but never demonstrated, because the observation required doing something that had never been attempted. Each finding shows that the boundary between what is known and what is knowable is moving, and the instruments making that movement possible are themselves products of the same computational acceleration reshaping every other domain.

The 20-Minute Deep Dive

The Government Argues Safety Is a Vulnerability

The Justice Department's response to Anthropic's federal lawsuit was filed yesterday in federal court in San Francisco, and its central argument reframes the entire relationship between AI safety commitments and state power. Government attorneys wrote that Defense Secretary Hegseth "reasonably" determined that "Anthropic staff might sabotage, maliciously introduce unwanted function, or otherwise subvert the design, integrity, or operation of a national security system." The filing adds that the Pentagon "cannot simply flip a switch" to replace Claude on classified systems while "high-intensity combat operations are underway," and that it is actively working to deploy AI from Google, OpenAI, and xAI as alternatives.

The rhetorical escalation is significant. The Century Report has tracked this confrontation from the March 10 edition, which documented Anthropic's federal lawsuits and the cross-competitor amicus coalition that formed around them, through the March 13 edition, in which the Pentagon CTO framed embedded AI values as architecturally "polluting" the military supply chain. What the Justice Department filing adds is a formal legal theory: that an AI company's stated values - its commitment to refuse certain uses of its technology - constitute a security risk because they might be exercised during wartime. The filing states that the government was motivated by "concerns about Anthropic's potential future conduct if it retained access" to military technology systems.

No amicus briefs have been filed in support of the government's position. Microsoft, Google, Amazon, Apple, 22 former military officials, and more than 30 AI researchers from competing companies are all on record opposing the designation. Judge Rita Lin has scheduled a hearing for next Tuesday to decide whether Anthropic gets a reprieve while litigation proceeds. Anthropic has until Friday to file its reply.

The structural consequence extends beyond any single company. If the legal theory holds - that embedded safety principles constitute a supply-chain vulnerability - it would set a precedent that any AI company maintaining design-level value commitments can be sanctioned for doing so. If it fails, it would point the other way: toward a legally protected right for AI organizations to embed constraints in their systems even when those systems are deployed in military contexts. Either outcome will shape the relationship between intelligence systems and state power for the foreseeable future. The trajectory through this confrontation points toward a world where the values embedded in AI architectures become a permanent feature of procurement and governance decisions - where what an intelligence system will and will not do is as significant as what it can do.

Classified Data Enters the Training Loop

Separately, MIT Technology Review reported that the Pentagon is discussing plans to set up secure environments where AI companies would train military-specific versions of their models directly on classified data. This represents a qualitative shift from current deployments, where AI models answer questions about classified information but do not learn from it.

The distinction carries weight. In current deployments, a model like Claude processes classified intelligence documents and provides analysis, but the information does not become embedded in the model's weights. Training on classified data would mean surveillance reports, battlefield assessments, and intelligence analyses become part of the model itself - structurally integrated into how it reasons about future queries. A defense official told MIT Technology Review that the Pentagon intends to first evaluate model accuracy when trained on nonclassified data, like commercially available satellite imagery, before proceeding to classified training.

The security implications are layered. Aalok Mehta, who previously led AI policy efforts at Google and OpenAI, identified the primary risk: classified information embedded in a model could be surfaced to users who lack appropriate clearance. If multiple military departments with different classification levels share the same AI, sensitive human intelligence - the name of an operative, the location of an asset - could leak across authorization boundaries through the model's responses. Mehta noted that containment from the broader internet is more tractable, but internal compartmentalization within the military is a harder problem.
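The difference between answering questions about classified material and training on it can be made concrete with a deliberately simplified sketch. It assumes a hypothetical retrieval setup in which every document carries a classification label. The level names, the `Document` type, and the `retrieve`, `train`, and `ask_trained` functions are illustrative inventions, not any Pentagon or vendor system, and a list of strings is only a caricature of model weights. The point it shows: a retrieval system can check clearance on every query, while training discards the labels that such a check would need.

```python
from dataclasses import dataclass

# Hypothetical, simplified ordering of clearance levels (illustration only).
LEVELS = {"UNCLASSIFIED": 0, "SECRET": 1, "TOP_SECRET": 2}


@dataclass(frozen=True)
class Document:
    text: str
    level: str  # classification label attached to this document


def retrieve(corpus: list[Document], query: str, clearance: str) -> list[str]:
    """Question-answering over documents: the clearance check runs at query
    time, before any text reaches the model or the user."""
    max_level = LEVELS[clearance]
    return [
        d.text
        for d in corpus
        if LEVELS[d.level] <= max_level and query.lower() in d.text.lower()
    ]


def train(corpus: list[Document]) -> list[str]:
    """Caricature of training: the content is absorbed, but the per-document
    classification labels are not kept alongside it."""
    return [d.text for d in corpus]


def ask_trained(weights: list[str], query: str, clearance: str) -> list[str]:
    """Querying the 'trained' model: `clearance` is accepted but cannot be
    enforced, because nothing in `weights` records where a fact came from."""
    return [t for t in weights if query.lower() in t.lower()]


if __name__ == "__main__":
    corpus = [
        Document("Asset location: grid 7", "TOP_SECRET"),
        Document("Satellite image of grid 7 shows a depot", "UNCLASSIFIED"),
    ]
    print(retrieve(corpus, "grid 7", "UNCLASSIFIED"))  # only the unclassified text
    print(ask_trained(train(corpus), "grid 7", "UNCLASSIFIED"))  # both texts surface
```

Real deployments are far more complex. The sketch only illustrates why compartmentalization gets harder once information moves from a filterable retrieval layer into the model itself.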

This development intersects directly with the Anthropic confrontation. The company that refused to allow unrestricted military access to its AI is being replaced, as the Pentagon actively develops alternatives, by companies that may soon train their models on the very intelligence Anthropic sought to keep at arm's length. As the March 14 edition of The Century Report documented, drawing on Wired's detailed account of Palantir's Maven targeting workflow, the architecture of military AI governance is being built through these operational decisions - who trains on what data, who controls the resulting models, and what constraints survive the training process.

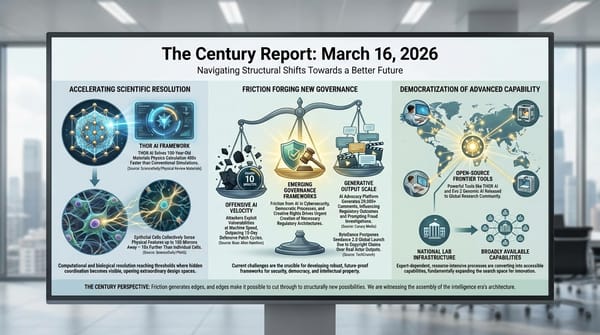

A Different Mathematical Foundation for Intelligence

Mamba 3 arrived yesterday as an open-source state-space model architecture whose benchmark scores exceed those of same-size Transformer models by approximately two points on average. A two-point gain is significant at this level because these models are already highly optimized, and every additional point of accuracy represents many more correct outputs at scale.
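A worked example shows why a couple of points matters near the top of a benchmark. The scores and query volume here are hypothetical round numbers (a baseline score p_0 = 0.80, an improved score p_1 = 0.82, and N = 10^6 queries), and benchmark averages do not translate one-for-one into real-world accuracy. The arithmetic only shows how a small absolute gain becomes a sizable relative cut in errors and a large count of extra correct outputs.

```latex
\begin{align*}
1 - p_0 &= 0.20, \qquad 1 - p_1 = 0.18 \\
\frac{(1 - p_0) - (1 - p_1)}{1 - p_0} &= \frac{0.02}{0.20} = 10\% \\
\Delta N_{\text{correct}} &= (p_1 - p_0)\,N = 0.02 \times 10^{6} = 20{,}000
\end{align*}
```

The higher the baseline, the larger the relative effect: the same two points taken from 90% to 92% would cut errors from 10% to 8%, a 20% reduction.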

The architectural significance runs deeper than the benchmarks. Transformers - the architecture underlying GPT, Claude, Gemini, and virtually every frontier model - process sequences using an attention mechanism whose computational cost scales quadratically with sequence length. State-space models like Mamba process sequences with linear scaling. For short interactions, the difference is manageable. For the long-context and agentic workloads that define the current trajectory - where AI systems maintain extended conversations, execute multi-step tasks, and coordinate across tools and environments - linear scaling becomes a structural advantage that compounds with every increase in context length.
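A minimal sketch makes the scaling difference concrete. It assumes a deliberately simplified, non-selective diagonal state-space recurrence (h_t = a * h_{t-1} + b * x_t, y_t = sum of c * h_t) with fixed parameters. Mamba models make these parameters input-dependent and Mamba 3's actual formulation differs, so this is not its implementation. The cost functions are rough multiply counts under stated assumptions, not benchmarks. What the sketch does show: attention's pairwise score matrix grows with n squared, while a recurrent scan visits each position once.

```python
import numpy as np


def attention_cost(n: int, d: int) -> int:
    """Rough multiply count for one self-attention head: Q @ K^T is (n x d)(d x n)
    and scores @ V is (n x n)(n x d), each about n * n * d multiplies."""
    return 2 * n * n * d


def ssm_scan_cost(n: int, d: int, state: int) -> int:
    """Rough multiply count for the toy scan below: per step, a*h, b*x and c*h
    each cost d * state multiplies."""
    return 3 * n * d * state


def diagonal_ssm_scan(x: np.ndarray, a: np.ndarray, b: np.ndarray, c: np.ndarray) -> np.ndarray:
    """Toy diagonal state-space recurrence with fixed (non-selective) parameters:
        h_t = a * h_{t-1} + b * x_t
        y_t = sum over the state dimension of c * h_t
    x has shape (n, d); a, b, c have shape (d, state).
    The loop visits each position once, so work grows linearly with n."""
    n, d = x.shape
    h = np.zeros_like(a, dtype=float)
    ys = np.empty((n, d))
    for t in range(n):
        h = a * h + b * x[t][:, None]  # broadcast x_t across the state dimension
        ys[t] = (c * h).sum(axis=1)    # one output per channel
    return ys


if __name__ == "__main__":
    for n in (1_000, 10_000, 100_000):
        ratio = attention_cost(n, d=64) / ssm_scan_cost(n, d=64, state=16)
        print(f"n={n:>7}: attention / scan cost ratio ~ {ratio:,.0f}")

    rng = np.random.default_rng(0)
    y = diagonal_ssm_scan(rng.standard_normal((8, 4)), np.full((4, 16), 0.9),
                          rng.standard_normal((4, 16)), rng.standard_normal((4, 16)))
    print(y.shape)
```

Doubling the sequence length quadruples the attention estimate but only doubles the scan estimate, so the ratio between them, 2n / (3 * state), grows linearly with length.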

Mamba 3 is open-source, which means any research group, any company, and any national AI program can build on it immediately. This continues a pattern The Century Report has tracked across the Open-Source AI Parity arc: frontier capability is being released openly at a pace that prevents any single organization from capturing the architectural foundations of intelligence development. The Nvidia-Mistral Nemotron Coalition announced earlier this week, Evo 2's open-source genomic AI from earlier this month, and now a fundamentally different computational architecture - each release adds another degree of freedom to the global AI development landscape.

The deeper implication is that the Transformer's dominance may have been a historical accident of timing rather than an endpoint. Yann LeCun's AMI Labs, funded at over $1 billion to pursue world models as an alternative to language-based AI, represents one bet against the Transformer paradigm. Mamba 3 represents another, grounded in a different mathematical tradition entirely. The phase transition this newsletter tracks is not converging on a single architecture for intelligence - it is diversifying, and that diversification is itself accelerating.

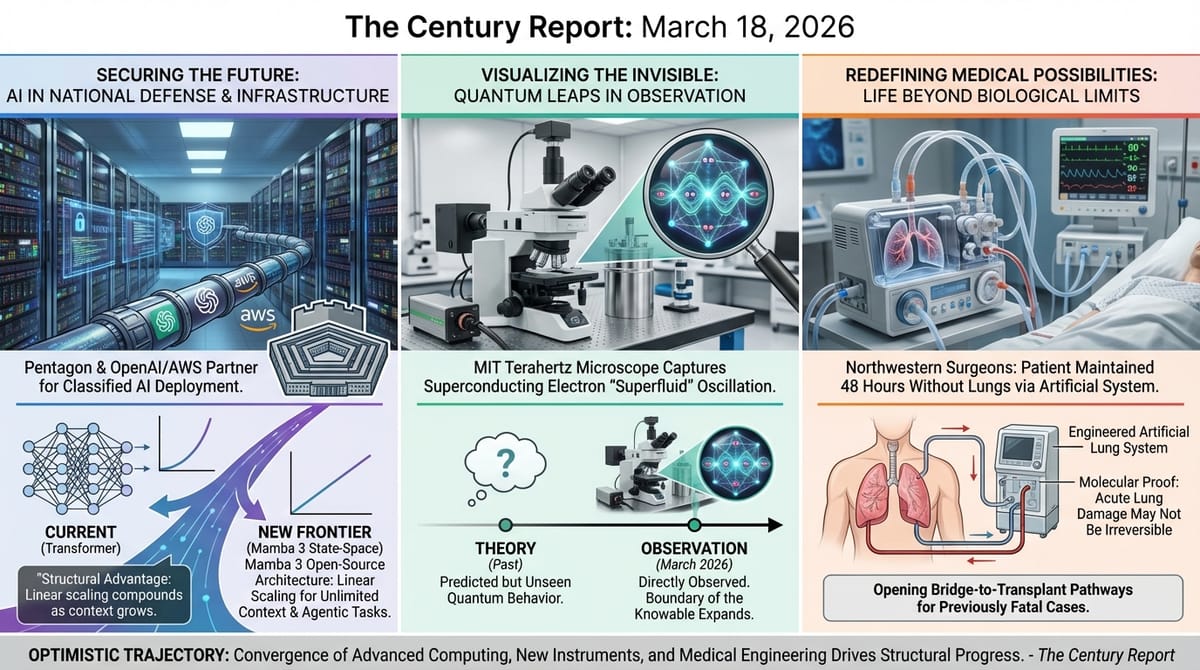

Crossing Observation Thresholds

Two scientific findings from yesterday share an underlying structure: instruments reaching the resolution to detect phenomena that were always present but structurally invisible. This extends the pattern of research timeline compression that the March 6 edition of The Century Report documented when Cambridge identified electron transfer happening in 18 femtoseconds via molecular vibration and Cornell imaged atomic-scale defects inside semiconductor chips for the first time.

At MIT, physicists built a terahertz microscope that overcomes a fundamental constraint called the diffraction limit. Terahertz radiation oscillates at frequencies that match the natural vibrations of electrons and atoms inside materials, making it theoretically ideal for studying quantum behavior. But its wavelength - hundreds of microns - is so long that it cannot normally resolve microscopic features. The MIT team used spintronic emitters to compress terahertz pulses into an extremely small region, placing the sample so close to the emitter that the light was captured before it could spread. The result: direct observation of superconducting electrons moving together in a frictionless, wave-like state - a "superfluid" oscillation at terahertz frequencies inside bismuth strontium calcium copper oxide. No one had ever seen this mode of behavior before.
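The arithmetic behind the diffraction problem is short. Taking 1 THz as a representative frequency (the source does not state the frequencies the MIT team used) and the standard Abbe estimate for the smallest resolvable feature, d ≈ λ / (2 NA), with numerical aperture NA at most about 1 in air:

```latex
\begin{align*}
\lambda &= \frac{c}{f} = \frac{3.0 \times 10^{8}\ \text{m/s}}{1.0 \times 10^{12}\ \text{Hz}} = 3.0 \times 10^{-4}\ \text{m} = 300\ \mu\text{m} \\
d_{\min} &\approx \frac{\lambda}{2\,\mathrm{NA}} \geq \frac{300\ \mu\text{m}}{2} = 150\ \mu\text{m}
\end{align*}
```

A conventionally focused 1 THz beam therefore blurs anything smaller than roughly a tenth of a millimeter, which is why capturing the light right at the emitter, before it can spread, is the decisive step.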

The implications branch in two directions. Understanding how high-temperature superconductors work at this level could accelerate the search for room-temperature superconductors - materials that would eliminate energy loss in power transmission, computing, and transportation. And materials that can emit and detect terahertz radiation could enable wireless systems operating at terahertz frequencies, potentially delivering data transmission speeds far beyond current microwave-based technology.

At Northwestern, surgeons demonstrated something clinicians had assumed but never proven: that some acute lung damage is genuinely irreversible. A 33-year-old man whose lungs had been destroyed by an influenza infection complicated by bacterial pneumonia was kept alive for 48 hours with no lungs at all, sustained by an engineered artificial lung system that oxygenated his blood and supported circulation while donor lungs were sourced. Molecular analysis of the removed lungs revealed extensive scarring and immune damage that confirmed the tissue could not have recovered - the first biological proof that the conventional approach of supporting patients through acute respiratory distress syndrome and waiting for recovery would have been fatal in cases like this.

More than two years later, the patient is living a normal life with healthy lung function. The finding opens a potential bridge-to-transplant pathway for a category of patients who currently die because no one realizes transplantation is an option. The lead surgeon noted that young patients die "almost every week" in his practice because the irreversibility of their lung damage is not recognized in time.

Both findings - the superconductor observation and the lung transplant proof - demonstrate the same underlying dynamic. The barrier was not a lack of theory or suspicion but a lack of instruments and procedures capable of making the observation. The terahertz microscope and the artificial lung system are themselves products of converging advances in materials science, engineering, and computational design. The acceleration documented across every domain this newsletter tracks is not just producing new capabilities - it is producing the instruments that reveal what was always there but invisible, expanding the observable universe in domains from quantum physics to clinical medicine.

AI Energy: The Local-Global Distinction

Researchers at the University of Waterloo and Georgia Institute of Technology published findings in Environmental Research Letters quantifying what AI's energy consumption actually means for climate. Their analysis of data from across the U.S. economy found that AI-related electricity use is comparable to Iceland's total energy consumption - substantial in absolute terms, but too small to significantly affect emissions at the national or global level.

The critical insight is spatial: the impact is not uniform. Around data centers, the effect can be dramatic - some locations could see electricity output and emissions double. But at the aggregate level, AI's contribution disappears into the 83% of the U.S. economy that still relies on fossil fuels. The researchers explicitly addressed the framing that AI energy use represents a major climate problem, offering what they called "a different perspective": the global effects are modest, and AI could accelerate the development of green technologies that cut far more emissions than its own consumption generates.
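The local-global distinction comes down to denominators. With hypothetical round numbers (not figures from the study), a data center that adds 2 TWh per year to a regional grid that previously used 2 TWh doubles local demand, yet the same 2 TWh is a tiny share of a national total on the order of 4,000 TWh per year, a round illustrative figure roughly at the scale of recent U.S. electricity use:

```latex
\begin{align*}
\text{local increase} &= \frac{2\ \text{TWh}}{2\ \text{TWh}} = 100\% \\
\text{national share} &= \frac{2\ \text{TWh}}{4{,}000\ \text{TWh}} = 0.05\%
\end{align*}
```

The same quantity reads as a doubling or as a rounding error depending on which grid it is measured against.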

This finding contextualizes a tension The Century Report has tracked since its earliest editions. The data center buildout is real, its local impacts are consequential, and communities are right to negotiate terms - as the March 17 edition documented when the EIA projected a potential 79% wholesale electricity price surge in ERCOT by 2027 under a high data center demand scenario, a regional concentration effect structurally distinct from the aggregate national picture. But the macro-level climate narrative - that AI energy consumption is a significant threat to decarbonization - does not hold up against the data. The same computational infrastructure driving energy consumption is simultaneously designing new battery chemistries, optimizing grid operations, discovering new materials for solar cells, and compressing materials science calculations by orders of magnitude. The trajectory, when viewed at the appropriate scale, points toward a net reduction in emissions driven by the very capabilities the energy is powering.

One critical note: the paper discloses funding from Google, which has a vested interest in framing AI energy consumption in a positive light. While the authors say Google had no direct involvement in the paper, the results are worth treating with some caution until further research corroborates them.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: an open-source architecture built on a different mathematical foundation than the dominant paradigm arriving and outperforming it at equivalent scale, a terahertz microscope compressing long-wavelength light into a space small enough to make quantum electron behavior in superconductors directly visible for the first time, surgeons keeping a human alive for 48 hours without lungs and delivering the first molecular proof that some acute respiratory failure cannot wait for recovery, and a rigorous national-scale accounting finding that AI's energy footprint is too small to register on global emissions while the same computational infrastructure accelerates the technologies reducing those emissions. There's also friction, and it's intense - the Justice Department filing a legal theory that treats the values embedded in an AI system's architecture as a potential sabotage vector during wartime, the Pentagon disclosing plans to let AI companies train models directly on classified intelligence data before any governance framework exists to constrain what those models learn or how they deploy it, AI-fabricated imagery of atrocities flooding conflict coverage faster than verification systems can process it, and the terms governing the relationship between intelligence systems and state power being written simultaneously in federal courtrooms and secure data centers under wartime pressure. But friction generates pressure, and pressure is what drives things into contact close enough to bond.

Step back for a moment and you can see it: the architectural substrate of intelligence diversifying along multiple mathematical axes at the moment of greatest concentration, observation instruments crossing resolution thresholds that expand the knowable universe from quantum physics to clinical medicine, the legal confrontation between embedded AI values and unconditional state control reaching its most consequential phase, and open release of frontier capability continuing to distribute the foundations of intelligence development faster than any single institution can consolidate them. Every transformation has a breaking point. A fault line can release in a single catastrophic rupture... or slip continuously, redistributing the stress across a wider surface until what seemed immovable has irrevocably shifted.

AI Releases & Advancements

New today

- OpenAI: Released GPT-5.4 mini and GPT-5.4 nano - smaller, faster, cheaper models optimized for high-volume and latency-sensitive tasks; mini replaces GPT-4.1 mini as the default in ChatGPT and the API, nano is OpenAI's fastest and cheapest model ever. (OpenAI)

- OpenAI: Launched subagents in Codex - agents can now spin up child agents to handle subtasks in parallel, enabling multi-step autonomous workflows within the coding environment. (Greg Brockman)

- Tri Dao / Albert Gu: Released Mamba 3, an open-source state-space model that matches or exceeds Transformer performance at equivalent scale while scaling linearly with sequence length instead of quadratically. (Tri Dao)

- Unsloth: Launched Unsloth Studio, a visual interface for fine-tuning and merging LLMs with no-code workflows, dataset curation, and one-click export to GGUF/ONNX formats. (Unsloth)
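The parent/child pattern described for Codex subagents can be sketched generically with Python's `asyncio`. This is not OpenAI's Codex API: `run_subagent` and `parent_agent` are hypothetical names, and the child "agents" only sleep briefly instead of calling a model or tools. The sketch shows the fan-out/fan-in shape: a parent splits a task, runs children concurrently, and collects their results.

```python
import asyncio


async def run_subagent(name: str, subtask: str) -> str:
    """Hypothetical child agent. A real one would call a model and tools;
    this one just simulates work with a short sleep."""
    await asyncio.sleep(0.01)
    return f"{name} finished: {subtask}"


async def parent_agent(task: str, subtasks: list[str]) -> list[str]:
    """Hypothetical parent agent: launches one child per subtask concurrently,
    then collects the results (asyncio.gather preserves input order)."""
    children = [run_subagent(f"child-{i}", s) for i, s in enumerate(subtasks)]
    results = await asyncio.gather(*children)
    return [f"[{task}] {r}" for r in results]


if __name__ == "__main__":
    out = asyncio.run(parent_agent("refactor", ["update tests", "fix types", "write docs"]))
    for line in out:
        print(line)
```

`asyncio.gather` returns results in the order the coroutines were passed, so the parent can merge outputs deterministically even though the children may finish in any order.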

Sources

Artificial Intelligence & Technology's Reconstitution

- Wired: Justice Department Says Anthropic Can't Be Trusted With Warfighting Systems

- MIT Technology Review: Pentagon Planning for AI Companies to Train on Classified Data

- VentureBeat: Open-Source Mamba 3 Arrives to Surpass Transformer Architecture

- TechCrunch: OpenAI Expands Government Footprint With AWS Deal

- TechCrunch: Pentagon Developing Alternatives to Anthropic

- Ars Technica: World ID Wants to Put Human Identity on Every AI Agent

- TechCrunch: Mistral Forge Enterprise Custom Model Training Platform

- The Next Web: Meta Manus Desktop AI Agent Launches

- Futurism: OpenAI Execs Cutting Projects as Walls Close In

- Business Insider: Uber CTO Says AI Agent Making 1,800 Code Changes Per Week

- Wired: Sears Exposed AI Chatbot Phone Calls and Text Chats

Institutions & Power Realignment

- Guardian: AI-Fabricated Imagery Flooding Iran War Coverage

- Variety: AI and Intellectual Property Protections at FilMart Panel

- Guardian: UK Announces £1B Quantum Computing Funding

- Guardian: Instagram Removing End-to-End Encryption

Scientific & Medical Acceleration

- ScienceDaily: MIT Terahertz Microscope Observes Hidden Quantum Behavior in Superconductors (Nature)

- ScienceDaily: Patient Survived 48 Hours Without Lungs (Cell Press/Med)

- ScienceDaily: AI Energy Impact Far Smaller Than Expected (University of Waterloo, Environmental Research Letters)

- Nature: Turing Award Goes to Quantum Information Science Pioneers

- ScienceDaily: 7,000 GPUs Simulate Quantum Chip in Extreme Detail (Berkeley Lab)

- Nature: JWST Reveals Sulfur-Dominated Exoplanet (Nature Astronomy)

- ScienceDaily: AI-Powered Tomato Harvesting Robot (Osaka Metropolitan University)

Infrastructure & Engineering Transitions

- Electrek: EVs Wiped Out Oil Demand Equal to 70% of Iran's Exports in 2025

- Canary Media: Texas Town Using Wind Energy Revenue for Senior Services

- Canary Media: California Community Solar Plan Fight Heating Up

- Canary Media: Trump $33B Gas Megaplant in Ohio Faces Huge Hurdles

- Canary Media: Trump Pro-Coal Crusade Hits Snag in Washington State

- Electrek: Candela Raises €30M for Electric Hydrofoil Ferries

- TechCrunch: Niv-AI Exits Stealth to Manage GPU Power Efficiency

- Nature: Low-Speed Electric Vehicles as Affordable Mobility

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.