The Century Report: March 12, 2026

The 20-Second Scan

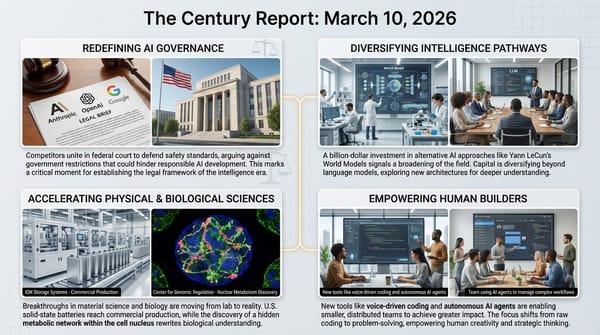

- Microsoft, Google, Amazon, and Apple filed legal briefs supporting Anthropic's lawsuit against the Pentagon's supply-chain risk designation.

- Nvidia disclosed a $26 billion commitment over five years to build open-weight AI models, releasing Nemotron 3 Super as its most capable model to date.

- Atlassian announced it will cut 1,600 jobs, roughly 10% of its workforce, to redirect investment into AI and enterprise sales.

- Sodium-ion batteries will be deployed on the MISO grid for the first time in a Wisconsin pilot between Peak Energy and RWE.

- Scripps Research identified structural changes in three blood proteins that classify Alzheimer's status with 83% accuracy using protein folding rather than concentration levels.

- Researchers at the University of Queensland found that brain and blood cells in young adults with depression overproduce energy at rest but cannot increase production under stress.

- EPB of Chattanooga deployed 29 MW of battery-based microgrids across two sites, with plans to reach 100-150 MW of storage within two to three years.

The 2-Minute Read

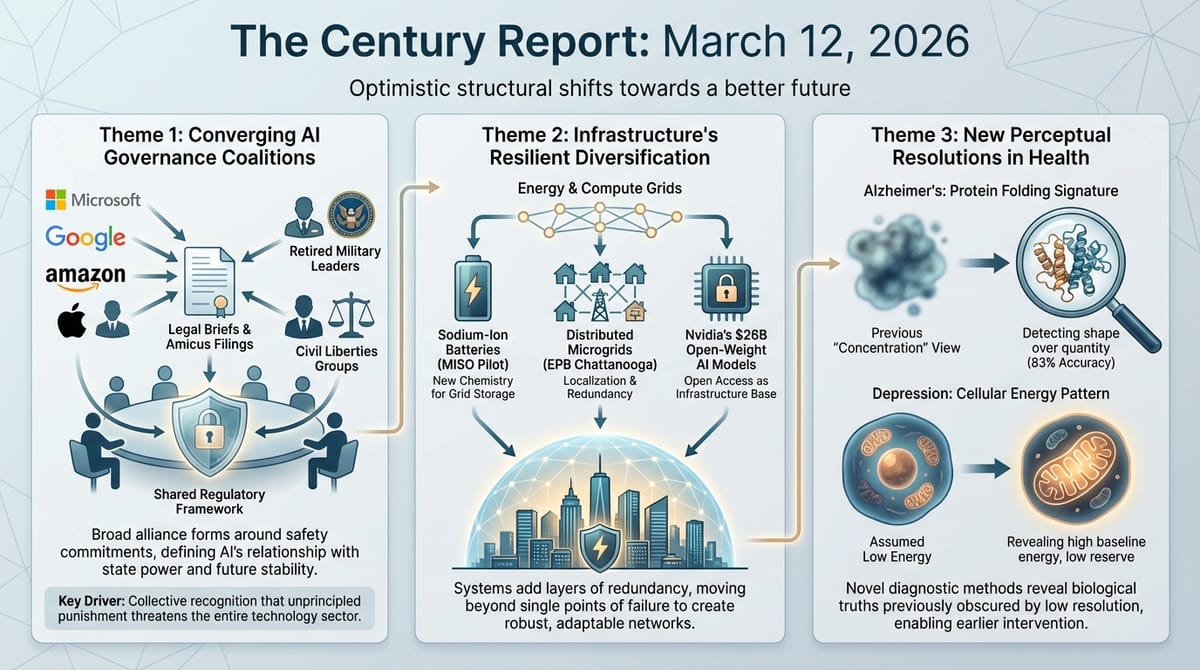

The Anthropic-Pentagon confrontation has entered a new structural phase. What began as a contract dispute between one AI company and the Defense Department has consolidated the broadest coalition of corporate, military, and civil-liberties support ever assembled around an AI governance question. Microsoft, Google, Amazon, and Apple are now aligned in federal court filings arguing that the supply-chain risk designation threatens the entire technology sector. Retired military leaders, including a former CIA director, have entered the record calling the action retribution rather than national security. The government's refusal in court to commit to not taking further action - and the reported preparation of an executive order formally banning Anthropic across all federal agencies - signals that this confrontation is escalating rather than resolving. What emerges from these proceedings will shape the relationship between AI organizations and state power for years.

The infrastructure sustaining the intelligence era is diversifying along every axis simultaneously. Nvidia's $26 billion open-model commitment represents the chip industry's most powerful company making a serious bet that the future of AI development is open rather than proprietary - and that models tuned to its hardware will ensure its silicon remains the substrate of choice. Meanwhile, sodium-ion chemistry is reaching the Midwestern grid for the first time, and a Tennessee utility is building toward 150 MW of distributed battery storage that functions as both economic arbitrage and grid resilience. Each chemistry, each deployment architecture, each financing model adds another layer of redundancy to an energy system that until recently depended on a single fuel burned in a single type of facility.

The scientific findings arriving today carry a common thread: existing diagnostic frameworks are being superseded by approaches that detect what was always present but invisible. Alzheimer's research is shifting from measuring protein concentrations to reading protein shapes - a fundamentally different kind of signal that tracks disease progression with striking accuracy. Depression research is revealing that the cellular energy signature of the condition is the opposite of what clinicians assumed, with implications for how early intervention might work. In both cases, computational methods are making biological signatures legible that were previously indistinguishable from noise.

The 20-Minute Deep Dive

The Coalition Crystallizes Around AI Governance

The Century Report has tracked the Anthropic-Pentagon confrontation since February, documenting each escalation from contract negotiations through public refusal, supply-chain risk designation, federal lawsuits, and cross-competitor amicus briefs. Today's developments represent the broadest consolidation of institutional support yet assembled around an AI governance question.

Microsoft filed an amicus brief asking the federal court to issue a temporary restraining order suspending the designation. The filing is notable for its specificity: Microsoft, which works extensively with the Defense Department and is itself an investor in Anthropic, stated plainly that it "agrees with Anthropic that AI tools should not be used to conduct domestic mass surveillance or put the country in a position where autonomous machines could independently start a war." The brief warned that decoupling Anthropic's systems from military infrastructure "could potentially hamper U.S. warfighters at a critical point in time."

A joint amicus brief from the Chamber of Progress - representing Google, Apple, Amazon, Nvidia, and other technology companies - called the designation "a potentially ruinous sanction" and "little more than a temper tantrum." Twenty-two former high-ranking military officials, including former CIA director Michael Hayden, filed separately arguing that the action "sends the message that investing in national security carries the risk of capricious retaliation." This echoes the March 10 amicus brief in which 30+ OpenAI and Google employees including Jeff Dean argued in court that punishing safety commitments threatens the entire field - the cross-competitor coalition that began with employees has now extended to the corporations themselves.

At the hearing itself, the government declined to commit to not taking further action against Anthropic. A White House executive order formally banning Anthropic across all federal agencies is reportedly being finalized. Judge Rita Lin scheduled the preliminary hearing for March 24, earlier than the government wanted but later than Anthropic requested.

The structural significance extends well beyond one company's contracts. When the largest technology corporations, retired military leadership, civil liberties organizations, and employees of competing AI companies all converge on the same legal position - that punishing an AI organization for maintaining safety commitments threatens the entire field - the coalition reveals something about the governance architecture that is actually forming. These are not natural allies. They are being drawn together by the recognition that the rules being established now will constrain or enable every AI organization's relationship with state power for the foreseeable future. The precedent being set in these courtrooms will determine whether safety commitments are commercially viable or commercially suicidal - and with that determination, whether the intelligence era develops guardrails from within or has them imposed, removed, or ignored from without.

Nvidia Bets $26 Billion on Open Intelligence

Nvidia's disclosure that it will spend $26 billion over five years building open-weight AI models represents a strategic shift with implications that reach far beyond one company's product roadmap. The chip manufacturer that already dominates AI hardware is now positioning itself as a frontier AI lab capable of competing with OpenAI, Anthropic, and DeepSeek on model quality - while releasing everything openly.

The newly released Nemotron 3 Super has roughly 120 billion total parameters, of which about 12 billion are active at any one time, and, according to Nvidia, outperforms OpenAI's GPT-OSS across several benchmarks. The model uses a hybrid architecture that combines state-space (Mamba) and Transformer layers in a mixture-of-experts design, built specifically for Nvidia's Blackwell hardware. The release includes not just the model weights but the training data, infrastructure code, and technical innovations used to build it - a level of openness that exceeds what most frontier labs provide.

The strategic logic is layered. Open models tuned to Nvidia hardware create a gravitational pull that keeps researchers and startups building on Nvidia silicon rather than exploring alternatives. As Chinese open models from DeepSeek, Alibaba, and others gain traction globally - and as a new DeepSeek model is rumored to have been trained exclusively on Huawei chips - Nvidia's open-model investment functions as a competitive defense of its hardware ecosystem. Bryan Catanzaro, VP of applied deep learning research at Nvidia, stated directly that "it's in our interest to help the ecosystem develop." This investment extends the open-source AI parity arc that The Century Report documented on March 10 with Nvidia's open-source agent platform launch and Yann LeCun's billion-dollar world-model bet - the week in which the dominant infrastructure provider began positioning itself at the center of every architectural branch simultaneously.

The investment also intersects with the broader architectural diversification The Century Report documented on March 10 with AMI Labs' billion-dollar world-model bet. The intelligence era is branching into parallel research programs with different assumptions about what intelligence requires, and Nvidia is working to keep its hardware underneath each of them. The $26 billion figure places Nvidia's model development budget in the same range as dedicated frontier labs, funded entirely from hardware profits - a self-reinforcing cycle in which model development drives chip demand that funds more model development. Nvidia is also reportedly planning its own open-source competitor to OpenClaw, further deepening its presence across the open-model landscape.

The Grid Diversifies Its Chemistry

The first deployment of sodium-ion batteries on the MISO grid - the vast network serving the Midwest - marks another threshold in the diversification of energy storage beyond lithium-ion dominance. Peak Energy and RWE will pilot a passively cooled sodium-ion system in eastern Wisconsin designed specifically for grid-scale applications.

The economics carry weight. Peak Energy claims its sodium-ion systems reduce the lifetime cost of stored energy by approximately $70 per kilowatt-hour - roughly halving the total cost of a typical battery system. The savings come from eliminating active cooling systems, reducing maintenance requirements, and minimizing the need to overbuild capacity to account for degradation. Aurora Energy Research estimates that deploying 10 GWh of battery storage in the MISO region over the next decade could cut system costs by as much as $27 billion.
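For a rough sense of scale, the arithmetic implied by Peak Energy's claim can be sketched as follows. The sketch assumes "roughly halving" means exactly 50%, and the 100 MWh project size is a hypothetical example, not a figure from the Wisconsin pilot.

```python
# Figures quoted in the text (Peak Energy's claim).
SAVING_PER_KWH = 70.0   # USD of lifetime cost avoided per kWh
SAVING_FRACTION = 0.5   # "roughly halving", treated as exactly 50% (assumption)


def implied_baseline_cost(saving_per_kwh, saving_fraction):
    """Back out the baseline cost per kWh that the quoted saving implies."""
    return saving_per_kwh / saving_fraction


def lifetime_saving(capacity_mwh, saving_per_kwh=SAVING_PER_KWH):
    """Total lifetime saving in USD for a system of the given size."""
    return capacity_mwh * 1000 * saving_per_kwh  # 1 MWh = 1,000 kWh


baseline = implied_baseline_cost(SAVING_PER_KWH, SAVING_FRACTION)
print(f"Implied baseline cost: ${baseline:.0f}/kWh")

# Hypothetical 100 MWh project, chosen only to illustrate the scale.
print(f"Saving on a 100 MWh system: ${lifetime_saving(100):,.0f}")
```

On these assumptions the claim implies a baseline of about $140 per kilowatt-hour. That baseline is a back-of-envelope inference, not a number Peak Energy has published.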

This development sits alongside EPB of Chattanooga's announcement that it has deployed 29 MW of battery-based microgrids and plans to reach 100-150 MW of storage within two to three years - representing more than 10% of its peak load. EPB's approach combines demand-charge arbitrage, where batteries store cheap power and discharge during expensive peak hours, with microgrid resilience for rural areas at the end of radial distribution lines. Oak Ridge National Laboratory is developing an advanced microgrid control platform that will allow microgrid boundaries to expand or contract based on real-time conditions.
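The arbitrage logic described above, storing cheap power and discharging it during expensive hours, can be illustrated with a minimal sketch. Everything in it is hypothetical: the price curve, the battery size, and the 85% round-trip efficiency are placeholder assumptions, and the function ignores hour ordering, degradation, and EPB's actual tariff structure.

```python
def arbitrage_margin(prices, energy_mwh, hours, efficiency=0.85):
    """Estimate one day's margin from charging in the cheapest hours
    and discharging in the most expensive ones.

    prices      -- hourly prices in USD/MWh (hypothetical inputs)
    energy_mwh  -- energy bought from the grid per cycle
    hours       -- hours spent charging (and, separately, discharging)
    efficiency  -- round-trip efficiency; 0.85 is an assumed placeholder
    """
    if hours <= 0 or 2 * hours > len(prices):
        raise ValueError("need distinct charge and discharge hours")
    ordered = sorted(prices)
    per_hour = energy_mwh / hours
    # Pay the lowest prices while charging.
    charge_cost = sum(ordered[:hours]) * per_hour
    # Earn the highest prices while discharging, net of round-trip losses.
    discharge_revenue = sum(ordered[-hours:]) * per_hour * efficiency
    return discharge_revenue - charge_cost


# Hypothetical six-hour price curve in USD/MWh.
example_prices = [20, 20, 30, 40, 100, 120]
margin = arbitrage_margin(example_prices, energy_mwh=4, hours=2)
print(f"Margin for the example day: ${margin:.2f}")
```

The margin depends on the spread between cheap and expensive hours and on how much energy survives the round trip, which is why low-maintenance, slow-degrading chemistries matter to the business case.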

The pattern across these developments is consistent with the energy infrastructure arc The Century Report has tracked since its first edition: the grid is learning to disaggregate itself, sourcing reliability from distributed assets coordinated through software rather than centralized generation. Each new chemistry - sodium-ion joining lithium-ion, iron-air, and others - adds another dimension of supply-chain resilience, continuing the diversification trajectory the March 11 edition documented when global long-duration storage deployments surged 49% and Base Power assembled peaker-plant-scale capacity house by house across a Texas cooperative. Each new deployment architecture - utility-scale, residential aggregation, microgrid - adds another pathway for capacity to reach the grid. The system that emerges from this diversification will be categorically more resilient than one dependent on any single technology, chemistry, or deployment model.

Reading the Shape of Disease

Two scientific findings published today share a structural insight that points toward a deeper shift in how disease is detected: the signal was always there, but the resolution to read it was not.

Scripps Research has developed a blood test for Alzheimer's that works by analyzing how proteins are folded rather than how much of them is present. By measuring structural differences in three plasma proteins - C1QA, clusterin, and apolipoprotein B - the team classified participants as cognitively normal, mildly impaired, or diagnosed with Alzheimer's with approximately 83% overall accuracy. When comparing two groups directly, accuracy exceeded 93%. The method remained reliable across independent participant groups and in repeat tests taken months apart.

This approach departs fundamentally from the established biomarker paradigm, which measures concentrations of amyloid beta and phosphorylated tau. The Scripps team's insight is that proteostasis - the system responsible for keeping proteins properly folded - fails broadly as Alzheimer's develops, and that failure leaves structural signatures in circulating blood proteins that are more informative than concentration measurements alone. Combined with the plasma p-tau217 blood test The Century Report covered when documenting Washington University's February findings, and the tanycyte tau-clearance pathway identified on March 9, Alzheimer's diagnostics are converging on blood-based detection that can identify the disease years before symptoms appear - and now from multiple independent biological angles.

Meanwhile, University of Queensland researchers studying depression in young adults found that brain and blood cells in people with major depressive disorder produce more ATP (the cellular energy molecule) at rest but cannot increase energy production under stress. This inverts the intuitive assumption that depression would correlate with lower energy production. The finding suggests that early-stage depression involves mitochondria that are overworking at baseline and lack reserve capacity - a cellular energy pattern that could potentially be detected through blood tests and used for earlier, more targeted intervention.

Both findings exemplify the broader pattern of computational and experimental methods reaching sufficient resolution to detect biological signals that were previously invisible or misinterpreted. The proteins were always folding differently. The mitochondria were always misbehaving. The limiting factor was perception, and perception is now catching up.

The Labor Market Restructures in Real Time

Atlassian's decision to cut 1,600 employees - 10% of its workforce - to fund AI and enterprise sales investments extends the pattern The Century Report has documented across Block, Amazon, Pinterest, Autodesk, and others. CEO Mike Cannon-Brookes framed the cuts explicitly as a rebalancing toward "the future of teamwork in the AI era."

Read alongside today's extended Guardian investigation of Amazon's AI adoption, a structural portrait emerges. Amazon employees across software engineering, supply chain, UX research, and data analysis describe being pressed to integrate AI into all aspects of their work even when, by their own assessment, the approach reduces productivity. Workers report spending more time correcting AI-generated output than the original task would have required, being tracked on AI usage metrics, and suspecting they are generating training data for systems that will eventually replace them.

The tension between institutional AI mandates and what workers actually report about productivity is now one of the most consistently documented patterns across the technology sector. Block employees reported that 95% of AI-generated code requires human revision. Anthropic's own labor study found a threefold gap between theoretical capability and observed adoption. Amazon workers describe AI creating "more work for everyone." And yet the layoffs continue, and the stock prices rise on the announcements.

What is actually happening is more structurally interesting than either the optimistic or pessimistic narratives suggest. Organizations are simultaneously discovering that AI does not yet reliably replace human judgment in production settings and restructuring their workforces as if it does. The gap between these two realities is where the transition's friction concentrates most intensely. Workers are caught in the space between a capability envelope that is real but not yet reliable and an institutional response that treats the envelope as fully operational. That gap will close - the capability trajectory documented across every edition of this newsletter makes that clear. The question is what happens to the people inside the gap while it narrows, and whether the institutions restructuring around them are building toward genuine augmentation or simply optimizing headcount against a capability curve that has not yet arrived at the level they are pricing in.

The macro trajectory through this disruption points toward something the current framing obscures. Every compression of labor that has ever occurred in human history - from agriculture to manufacturing to information processing - has ultimately expanded what humans could do, not contracted it. The transition period is real, and the people inside it deserve honesty about the difficulty. The destination is a world where the tasks being automated were never the most valuable things humans could do - they were simply the things the old system required humans to do because nothing else could. What emerges on the other side is not fewer opportunities but different ones, oriented toward judgment, creativity, meaning, and the kinds of work that become possible only when the mechanical substrate is handled.

The Human Voice

Today's signal - governance coalitions forming around AI, the physical and chemical diversification of energy infrastructure, and biological signals becoming legible through new methods of perception - finds an extraordinary counterpart in developmental biologist Michael Levin's conversation with Mayim Bialik. Levin describes intelligence as something distributed throughout every tissue in the body: cells that store memories, pursue goals, and communicate through bioelectric signals. His lab has used voltage patterns to erase birth defects, prevent tumors, and give organisms new stable body plans, by reconnecting cells to the body's shared informational architecture. When Levin describes cancer as "a dissociative identity disorder of the body" that can be reversed through communication rather than destruction, he is articulating the same principle that today's Alzheimer's and depression research reveals from a different angle: the information was always there, flowing through systems we had not yet learned to read. The vision of medicine as conversation with the intelligences within us - and AI as the key for decoding those conversations - is among the most hopeful framings of what biological science is becoming.

Watch: Talking to Our Cells: Michael Levin on Rewriting the Body's Story

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: the broadest institutional coalition ever assembled around an AI governance question - rivals, retired generals, civil liberties organizations - converging in federal court on a single legal position; the world's dominant chip manufacturer committing $26 billion to open intelligence that any developer can build upon; a new battery chemistry reaching the Midwestern grid for the first time while a Tennessee utility assembles distributed storage toward 150 MW node by node; and disease signatures that were always present in blood becoming legible through computational reading of protein shapes and cellular energy patterns that no prior diagnostic framework thought to measure. There's also friction, and it's intense - a government refusing in open court to commit to not escalating further against a company it simultaneously relies upon for active military operations, 1,600 workers at Atlassian laid off so their employer can redirect toward the systems transforming their roles, and workers across Amazon describing AI adoption mandates that multiply cognitive load rather than reduce it, correcting outputs that take longer to fix than the original work would have required. But friction generates light, and light is what makes it possible to see which structures are load-bearing and which were always just filling space. Step back for a moment and you can see it: governance norms for the intelligence era being written through the convergence of natural competitors who recognize their shared exposure, energy infrastructure diversifying across chemistries and deployment architectures at the precise moment consolidation seemed locked in, and the life sciences discovering that the body has always been carrying diagnostic signals in plain sight - waiting not for new biology but for new resolution. Every transformation has a breaking point. A wave can grind what it reaches into unrecognizable fragments... or sort and deposit exactly the material from which the next formation is built.

AI Releases & Advancements

New today

- NVIDIA: Released Nemotron 3 Super, a fully open 120B total / 12B active-parameter hybrid Mamba-Transformer MoE model designed for multi-agent agentic AI applications; features a 1M-token context window, native NVFP4 pretraining optimized for Blackwell, multi-environment RL post-training across 21 configurations, and 5x higher throughput than the previous Nemotron Super; available on Hugging Face with open weights, datasets, and training recipes. (NVIDIA Developer Blog)

- NVIDIA: Released AI-Q, an open blueprint and multi-agent deep research system built on NeMo Agent Toolkit and fine-tuned Nemotron 3 Super models, which achieved #1 on both DeepResearch Bench I (55.95) and DeepResearch Bench II (54.50). (Hugging Face)

- Canva: Launched Magic Layers in public beta in the US, UK, Canada, and Australia - an AI feature that analyzes flat PNG/JPEG and AI-generated images and breaks them into individually editable design components (backgrounds, characters, text, objects) without requiring re-prompting. (The Verge)

- Google: Launched "Ask Maps," a Gemini-powered conversational interface inside Google Maps on iOS and Android for users in the US and India, enabling natural-language questions about locations and AI-assisted trip planning within the app. (Wired)

- Amazon: Launched Health AI at HIMSS26, an agentic health assistant for eligible U.S. Prime members built on Amazon Bedrock that connects to the nationwide Health Information Exchange for personalized triage based on longitudinal medical history, offering up to five free direct-message consultations. (HIT Consultant)

- Asteria: Launched Continuum Suite, an AI-enabled operating system for film and TV production from Asteria's research and technology division that creates a unified workflow from script to completed production using ethical AI models trained without proprietary IP. (Deadline)

- Google: Launched Population Health AI (PHAI), a proof-of-concept analytics platform using Google Earth AI's Population Dynamics Foundation Models alongside air quality, pollen, and geospatial datasets to identify hidden community-level health risks; deployed in a partnership with Wesfarmers Health, SISU Health, Victor Chang Cardiac Research Institute, and Latrobe Health Services in rural Australia, backed by an A$1M investment. (Google Blog)

- Perplexity: Launched Personal Computer, a locally-run AI agent that operates 24/7 on a dedicated Mac with full access to files and apps and a full audit trail; also announced that its Perplexity Computer multi-agent platform is now available to enterprise customers. (The Verge)

Other recent releases

- Google: Released new Gemini beta features across Google Workspace apps - Docs ("Help me create" full-draft generation pulling from Gmail, Chat, Drive, and the web), Sheets (autonomous spreadsheet manipulation reaching 70.48% on SpreadsheetBench, state-of-the-art), Slides (AI stylization), and Drive (context-aware retrieval); rolling out first to AI Pro and Ultra subscribers. (Google Blog)

- NVIDIA: Launched GeForce NOW Cloud Playtest, a new developer feature enabling game studios to conduct secure global playtests and QA on GeForce RTX hardware for titles 2–3 years from release, announced at GDC 2026. (NVIDIA)

- Ford: Launched Ford Pro AI, a generative AI chatbot embedded in Ford Pro Telematics software that analyzes commercial fleet vehicle data - including speed, engine health, and seat belt activity - to deliver maintenance recommendations and fleet insights to fleet managers. (The Verge)

- Microsoft Research: Published PlugMem, a plug-and-play agent memory module that converts raw interaction histories into structured facts and reusable skills, outperforming task-specific memory designs across multi-turn QA, multi-hop fact retrieval, and web-navigation benchmarks while using fewer memory tokens. (Microsoft Research)

- IBM Granite: Released Granite 4.0 1B Speech, a compact open-source multilingual ASR and bidirectional speech translation model with 1B parameters, supporting English, French, German, Spanish, Portuguese, and Japanese; ranked #1 on the OpenASR leaderboard; available under Apache 2.0 on Hugging Face with vLLM and Transformers support. (Hugging Face)

- NVIDIA: Released CUDA 13.2, extending CUDA Tile (cuTile) support to Ampere and Ada GPU architectures (compute capability 8.x), alongside new Python features including profiling in CUDA Python, Numba kernel debugging, and new memcpy APIs; available via pip install. (NVIDIA Developer Blog)

- NVIDIA: Released AIConfigurator as open source, a tool for NVIDIA Dynamo that searches thousands of LLM serving configurations in seconds without requiring live GPU time, supporting TensorRT LLM, SGLang, and vLLM backends, and auto-generating Kubernetes deployment artifacts for disaggregated serving. (NVIDIA Developer Blog)

Sources

Artificial Intelligence & Technology's Reconstitution

- BBC: Big Tech Backs Anthropic in Fight Against Trump Administration

- AP News: Microsoft Backs Anthropic, Urging a Judge to Halt Pentagon's Actions

- Gizmodo: Microsoft Backs Anthropic in Pentagon Fallout Despite Heated Rivalry

- Wired: Trump Administration Won't Rule Out Further Action Against Anthropic

- The Atlantic: Dario Amodei's Oppenheimer Moment

- Wired: Nvidia Will Spend $26 Billion to Build Open-Weight AI Models

- Wired: Meta Is Developing 4 New Chips to Power Its AI and Recommendation Systems

- Wired: Inside OpenAI's Race to Catch Up to Claude Code

- Ars Technica: Nvidia Planning Its Own Open Source OpenClaw Competitor

- The Verge: Perplexity's Personal Computer Turns Your Spare Mac Into an AI Agent

- The Jerusalem Post: As AI Agents Spread, Onyx Raises $40M to Guard Them

- The Verge: Canva's New Editing Tool Adds Layers to AI-Generated Designs

- Defense News: Pentagon Seeks System to Ensure AI Models Work as Planned

Institutions & Power Realignment

- Guardian: Amazon Is Determined to Use AI for Everything - Even When It Slows Down Work

- Guardian: AI Scams Drove UK Reports of Fraud to Record 444,000 Last Year

- Wired: Grammarly Is Facing a Class Action Lawsuit Over Its AI Expert Review Feature

Scientific & Medical Acceleration

- ScienceDaily: A Surprising Blood Protein Pattern May Reveal Alzheimer's (Scripps Research, Nature Aging)

- ScienceDaily: Depression May Start With an Energy Problem in Brain Cells (University of Queensland, Translational Psychiatry)

- ScienceDaily: New Super Antibiotic Stops Deadly Gut Infection Without Destroying the Microbiome (Leiden University, Nature Communications)

- ScienceDaily: Scientists Solve the Mystery of a Vitamin B5 Molecule That Powers Your Cells (Yale, Nature Metabolism)

- ScienceDaily: Strange Chirping Supernova Confirms Magnetar Theory (UCSB, Nature)

Economics & Labor Transformation

- iTnews: Atlassian to Cut Roughly 1,600 Jobs

- MIT Technology Review: Brutal Times for the US Battery Industry

- MIT Technology Review: Hustlers Are Cashing In on China's OpenClaw AI Craze

Infrastructure & Engineering Transitions

- Electrek: Sodium-Ion Batteries Hit the Midwestern Grid in First-of-Its-Kind Pilot

- Utility Dive: EPB of Chattanooga Deploys Battery-Based Microgrids for Savings, Resilience

- Utility Dive: FERC Approves ComEd Data Center Transmission Agreements

- Canary Media: Illinois to Data Centers: Bring Your Own Renewables and Skip the Line

- Canary Media: Offshore Wind Farms Race Toward Completion Despite Trump's Attacks

- Canary Media: Draft Bill Would Let Utilities Own Nuclear Plants in Ohio

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.