8nm Chips Meet 10K-Qubit Threat - TCR 04/03/26

The 20-Second Scan

- ASML demonstrated a next-generation extreme ultraviolet lithography system that patterned 8-nanometre features on silicon wafers in a single step, enabling 2.9 times more transistors per chip than the previous generation.

- A single injection of gene therapy restored hearing in all ten patients with congenital OTOF-linked deafness, including teenagers and adults treated for the first time.

- Google released Gemma 4 under the Apache 2.0 open-source license, making its most capable open-weight model family freely modifiable and redistributable for the first time.

- The Trump administration filed an appeal of Judge Lin's injunction blocking the Pentagon's supply-chain risk designation against Anthropic, with a Ninth Circuit briefing deadline set for April 30.

- Two independent analyses covered by Nature found that quantum computers could crack current encryption systems with as few as 10,000 qubits, compressing the timeline for cryptographic vulnerability from at least a decade away to potentially before 2030.

- Cursor launched Cursor 3, an agent-first coding interface where developers manage multiple autonomous AI agents rather than writing code themselves.

- Arevon began construction of a 1-gigawatt-hour urban battery next to San Francisco's Cow Palace, contracted for 15 years to serve Bay Area residents through a community choice aggregator.

The 2-Minute Read

The ASML lithography breakthrough and the quantum computing timeline compression arrived on the same day, and together they describe the physical substrate of computation being reshaped from both ends simultaneously. A machine the size of a double-decker bus can now etch features smaller than the width of a strand of DNA onto silicon, tripling transistor density per chip generation and directly addressing the computational demand driving the intelligence era. Meanwhile, the encryption systems protecting every credit card transaction, cryptocurrency wallet, and internet communication may have a shorter shelf life than anyone assumed - with two independent teams concluding that 10,000 qubits, rather than millions, could be sufficient to crack current security keys. The infrastructure being built and the infrastructure being threatened are advancing on converging timelines.

The gene therapy result from Karolinska Institutet represents something more structurally significant than a single clinical success. Hearing loss linked to OTOF mutations affects a defined genetic population, and this trial demonstrated restoration across an age range previously thought unreachable - from toddlers to a 24-year-old adult - with a single injection producing measurable improvement within one month. The speed of therapeutic response, the breadth of the patient population, and the researchers' explicit statement that they are already extending the approach to more common deafness genes (GJB2, TMC1) describe the compression pattern The Century Report tracks across medical science: the distance between identifying a genetic mechanism and delivering a working intervention is shrinking with each successive trial.

Google's decision to release Gemma 4 under Apache 2.0 marks a structural shift in how the largest AI incumbents relate to the open-source ecosystem. Previous Gemma releases were open-weight but still governed by Google's terms. Apache 2.0 removes those constraints entirely - anyone can modify, redistribute, and commercially deploy these models without restriction beyond attribution. That this coincides with Cursor's launch of an agent-first coding interface where developers manage autonomous agents rather than write code confirms the velocity at which the relationship between human developers and intelligence systems is being restructured. The coding environment itself is becoming a management dashboard, and the models powering it are becoming freely available infrastructure.

The 20-Minute Deep Dive

The Smallest Features Ever Etched

ASML's demonstration of 8-nanometre patterning in a single lithographic step represents the kind of physical engineering achievement that reshapes what computation can become. The system, described at the SPIE Advanced Lithography conference in February and covered by Nature yesterday, uses high-numerical-aperture extreme ultraviolet optics to project patterns onto silicon wafers with nearly atomic precision. The company has already shipped approximately ten of these systems - each costing roughly $400 million - to chipmakers including Intel and SK hynix for next-generation fabrication.

The significance extends well beyond incremental improvement. A 2.9x increase in transistor density per chip area directly addresses the central constraint of the intelligence era: the demand for more computation per watt of energy consumed. Every data center, every frontier model training run, every agentic coding session draws on chips whose capability is ultimately bounded by how many transistors engineers can fit onto a given area of silicon. Moore's Law has been sustained for decades through precisely this kind of lithographic advance, but each generation requires exponentially more sophisticated engineering. The previous generation of EUV systems, with a 0.33 numerical aperture, was already considered an engineering marvel. The new high-NA systems push the optics to 0.55, enabling resolution that the chip industry had not expected to reach this quickly.
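The step from 0.33 to 0.55 numerical aperture can be related to the 8-nanometre and 2.9x figures through the Rayleigh resolution criterion, CD = k1 * wavelength / NA. The sketch below is an illustration under stated assumptions, not ASML's own calculation: 13.5 nm is the standard EUV wavelength, the process factor k1 = 0.33 is an assumed value picked for illustration, and the function names are hypothetical.

```python
# Rayleigh criterion sketch: smallest printable feature (critical dimension).
# CD = k1 * wavelength / NA. The value k1 = 0.33 is an assumption for
# illustration; real process factors depend on the resist and the pattern.

EUV_WAVELENGTH_NM = 13.5  # standard EUV lithography wavelength


def critical_dimension_nm(numerical_aperture: float, k1: float = 0.33) -> float:
    """Approximate minimum feature size in nanometres."""
    return k1 * EUV_WAVELENGTH_NM / numerical_aperture


def density_gain(old_na: float, new_na: float) -> float:
    """Ideal areal density gain: the square of the linear shrink."""
    return (new_na / old_na) ** 2


if __name__ == "__main__":
    print(f"NA 0.33: {critical_dimension_nm(0.33):.1f} nm")
    print(f"NA 0.55: {critical_dimension_nm(0.55):.1f} nm")
    print(f"Ideal density gain: {density_gain(0.33, 0.55):.2f}x")
```

Under these assumptions the printable feature size falls from roughly 13.5 nm to roughly 8.1 nm, and the ideal density gain comes out near 2.8x, in the same range as the 2.9x figure cited above.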

What emerges beyond this generation is the question the chip industry is already working on. ASML's head of research metrology described the demand as "monumental in the number of chips that are needed and the scaling that is needed." The AI boom has turned what was once a steady cadence of chip improvement into a race where the physical limits of lithography intersect with the insatiable appetite of intelligence systems for more capable silicon. The 8-nanometre milestone is a waypoint, and the trajectory points toward structures so small that quantum effects become design considerations rather than distant curiosities - which connects directly to another development from yesterday.

Quantum Computing's Timeline Compression

Two independent analyses posted on March 30 and covered by Nature yesterday fundamentally altered the consensus on when quantum computers will threaten current encryption. A team at Oratomic, a Caltech spinoff, demonstrated that cracking P-256 elliptic curve cryptography - one of the most widely deployed security standards protecting internet communications, banking, and cryptocurrencies - could require as few as 10,000 reconfigurable atomic qubits. A separate Google white paper reached complementary conclusions through different methods.

The prior consensus among cryptographers and quantum computing researchers had been that millions of qubits would be necessary, placing the threat at least a decade away. Oratomic co-founder Dolev Bluvstein described his own team's surprise at the result: "I had gone around giving talks saying that you needed millions of qubits." The compression comes from combining recent advances in quantum error correction, atom-based quantum hardware, and algorithmic optimization into a unified analysis that dramatically reduces the resource requirements.

The implications propagate immediately. Cloudflare, which protects roughly one quarter of global internet traffic, described the findings as generating "renewed urgency." Mathematician Jintai Ding at Tsinghua University noted the findings have prompted discussions "ranging from academics to bankers and to people who care about cryptocurrencies." Scott Aaronson at UT Austin called them "quantum computing bombshells."

The structural significance is that the timeline for quantum-safe cryptographic migration - the massive infrastructure project of replacing encryption standards across the entire internet - just compressed from "sometime next decade" to "potentially before 2030." Organizations that had been treating post-quantum cryptography as a long-term planning exercise now face the possibility that their current security infrastructure has an expiration date within their existing budget cycles. This kind of research timeline compression - where results arrive years ahead of consensus expectations - extends the pattern that The Century Report documented in the February 13 edition across fusion energy and RNA biology, and has continued to appear in successive months across nearly every domain of fundamental science. The same quantum computing advances that enable this threat also open pathways in materials science, drug discovery, and optimization - the capability that cracks encryption is the same capability that simulates molecular interactions and solves optimization problems that classical computers cannot touch. The challenge and the promise arrive together, inseparable.

When Deafness Becomes Treatable With One Injection

The Karolinska Institutet trial published in Nature Medicine treated ten patients with congenital deafness caused by OTOF gene mutations - a condition that prevents production of otoferlin, the protein essential for transmitting sound signals from the inner ear to the brain. Using a synthetic adeno-associated virus vector, researchers delivered a working copy of the OTOF gene through a single injection into the cochlea's round window membrane.

The results were striking in both speed and breadth. Most patients showed measurable hearing improvement within one month. After six months, average hearing thresholds improved from 106 decibels to 52 - a shift from profound deafness to moderate hearing loss. A seven-year-old participant regained nearly full hearing and held everyday conversations with her mother four months after treatment. Children between five and eight showed the most dramatic gains, but the trial's inclusion of teenagers and adults represents a meaningful expansion of the therapeutic window - previous smaller studies had only demonstrated results in young children.
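Because decibels are logarithmic, the move from 106 to 52 decibels is larger than the raw numbers suggest. The short sketch below works through the arithmetic using the standard decibel definitions; the function names are hypothetical, and it says nothing about how the trial itself measured or classified hearing.

```python
# Decibel arithmetic sketch. A threshold improvement of D dB means the
# quietest detectable sound is 10**(D/10) times lower in intensity and
# 10**(D/20) times lower in sound pressure.


def intensity_ratio(db_change: float) -> float:
    """Factor by which sound intensity changes for a given dB change."""
    return 10 ** (db_change / 10)


def pressure_ratio(db_change: float) -> float:
    """Factor by which sound pressure changes for a given dB change."""
    return 10 ** (db_change / 20)


if __name__ == "__main__":
    improvement_db = 106 - 52  # average thresholds reported above
    print(f"Improvement: {improvement_db} dB")
    print(f"Intensity ratio: {intensity_ratio(improvement_db):,.0f}x")
    print(f"Pressure ratio: {pressure_ratio(improvement_db):,.0f}x")
```

A 54-decibel improvement corresponds to detecting sounds roughly 250,000 times less intense, or about 500 times lower in sound pressure, than before treatment.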

The safety profile was favorable. The most common side effect was a temporary decrease in neutrophils, and no serious adverse reactions were observed during the six-to-twelve-month follow-up period. The researchers are now pursuing longer-term monitoring to assess durability.

Lead researcher Maoli Duan's statement that "OTOF is just the beginning" carries specific weight because the team is already extending the approach to GJB2 and TMC1, genes responsible for far more common forms of genetic deafness. The trajectory here mirrors what The Century Report has tracked across therapeutic development: each successful gene therapy trial de-risks the next, and the platform technology - AAV delivery to the inner ear - becomes a reusable substrate for addressing an expanding catalogue of genetic hearing disorders. The distance between identifying a causal gene and delivering a corrective therapy is compressing, and as the March 30 edition documented when Eli Lilly priced Insilico Medicine's AI-generated drug pipeline as a production-grade pharmaceutical input, the commercial and clinical infrastructure for translating these discoveries has itself matured to match the pace of the science.

Open-Source AI Crosses a Licensing Threshold

Google's release of Gemma 4 under Apache 2.0 represents the first time the company has placed its most capable open model family under a genuinely permissive open-source license. Previous Gemma iterations were open-weight - meaning the model parameters were publicly available - but still governed by Google's custom terms restricting certain uses and requiring compliance with company policies. Apache 2.0 eliminates those constraints. Any developer, company, or research institution can now download, modify, fine-tune, and commercially deploy Gemma 4 models without seeking permission, paying royalties, or operating under Google's behavioral guidelines. The main obligations are attribution and preserving the license and copyright notices.

The model family spans four sizes designed for different deployment contexts. The two larger variants - a 26B Mixture of Experts model and a 31B Dense model - run on a single 80GB GPU. The two smaller variants target mobile devices, with Google's Pixel team collaborating with Qualcomm and MediaTek to optimize for smartphones and edge hardware. Google claims the 31B Dense model will debut at number three on the Arena leaderboard of top open AI models, behind only GLM-5 and Kimi 2.5, despite being a fraction of their size.
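The single-GPU claim can be sanity-checked with a back-of-envelope weight-memory estimate. This is a rough sketch, not Google's sizing method: it assumes 16-bit weights (2 bytes per parameter), decimal gigabytes, and ignores the KV cache and activations, which also consume memory at inference time. The function names are hypothetical.

```python
# Rough weight-memory estimate for a model on one accelerator.
# Assumptions (not from Google): 2 bytes per parameter (16-bit weights),
# decimal gigabytes, no allowance for KV cache or activations.

GPU_MEMORY_GB = 80.0


def weight_memory_gb(params_billion: float, bytes_per_param: float = 2.0) -> float:
    """Memory needed just to hold the weights, in gigabytes."""
    return params_billion * bytes_per_param


def fits_on_gpu(params_billion: float, gpu_gb: float = GPU_MEMORY_GB) -> bool:
    """True if the weights alone fit in the given GPU memory."""
    return weight_memory_gb(params_billion) <= gpu_gb


if __name__ == "__main__":
    # A Mixture of Experts model must keep all of its parameters resident,
    # even though only some are active for each token.
    for name, size in [("26B MoE", 26), ("31B Dense", 31)]:
        needed = weight_memory_gb(size)
        print(f"{name}: {needed:.0f} GB of weights, fits: {fits_on_gpu(size)}")
```

Under these assumptions the 31B model needs about 62 GB for weights, leaving some headroom on an 80GB card for the cache and activations.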

The licensing shift arrives at a moment when the open-source AI ecosystem is reaching critical mass. Moonshot AI's Kimi 2.5 powered Cursor's Composer 2. Convergence AI's Proxy Lite 3B leads open-weight browser automation benchmarks. Nvidia committed $26 billion to open-weight model development. As The Century Report noted on March 26, Google's own TurboQuant compression research demonstrated that efficiency and capability gains are compounding simultaneously in open-weight architectures, making the licensing question increasingly consequential for developers choosing which foundation to build on. Google's decision to go fully Apache 2.0 removes one of the last credible objections that developers had about building on Google's open models - the concern that Google's custom license could be modified or restricted in ways that would affect downstream products. The Apache 2.0 license has decades of legal precedent and is understood by every corporate legal department in the technology industry. This is Google choosing to compete on capability rather than on terms.

The Pentagon-Anthropic Confrontation Enters Appellate Territory

The Trump administration filed its notice of appeal yesterday against Judge Lin's preliminary injunction blocking the Pentagon's supply-chain risk designation against Anthropic. The Ninth Circuit Court of Appeals set an April 30 deadline for the Department of Justice to file its opening brief. The appeal represents the government's first formal challenge to Lin's ruling, which called the designation an "Orwellian" attempt to punish an American company for expressing disagreement with government policy.

Lin's original order, which The Century Report covered on March 27, found the designation "likely both contrary to law and arbitrary and capricious," identified strong evidence of First Amendment retaliation, and established that embedded values in intelligence systems constitute protected speech rather than supply-chain contamination. The government's appeal will test whether the Ninth Circuit agrees with this framework or finds that military procurement authority extends further than Lin concluded.

The appeal does not automatically stay Lin's injunction, which means Anthropic's commercial operations remain protected during the appellate process unless the government separately obtains an emergency stay. The structural significance extends beyond the two parties involved. The appellate ruling will establish precedent for how safety commitments embedded in frontier AI systems are treated under law - whether they are design choices that companies have the right to maintain, or whether government procurement authority can compel their removal. Every AI company watching this case is watching it because the answer applies to all of them.

A Gigawatt-Hour Battery in an Urban Core

Arevon's Cormorant project in Daly City, California, represents a category of energy infrastructure that barely existed three years ago: utility-scale battery storage dense enough to deploy within a major metropolitan area. The 250-megawatt, 1-gigawatt-hour facility occupies 11 acres next to the Cow Palace arena, contracted for 15 years to MCE, a community choice aggregator serving Marin, Napa, and parts of Contra Costa and Solano counties. When operational in approximately one year, it will be the largest battery facility situated within a major U.S. urban area.

The siting is strategically deliberate. Electricity price volatility tends to be highest near major consumption centers, and batteries positioned at those nodes can absorb midday solar generation and discharge during evening peaks, directly reducing the price swings that drive up consumer electricity bills. Arevon CEO Justin Johnson estimated the battery could cover the electricity needs of approximately 321,000 homes for four hours straight. The project will deliver $73 million in property tax revenue to Daly City plus $1.5 million in community benefits.
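The facility's headline figures fit together with simple arithmetic. The sketch below uses only the numbers quoted above (250 megawatts, 1 gigawatt-hour, 321,000 homes); the function names are hypothetical, and the per-home load is an implied average, not a figure stated by Arevon.

```python
# Battery sizing arithmetic from the quoted project figures.

POWER_MW = 250.0       # rated power
ENERGY_MWH = 1000.0    # 1 gigawatt-hour of storage
HOMES = 321_000        # homes cited by Arevon's CEO


def discharge_hours(energy_mwh: float, power_mw: float) -> float:
    """Hours of output at full rated power."""
    return energy_mwh / power_mw


def average_kw_per_home(power_mw: float, homes: int) -> float:
    """Implied average load per home while discharging at full power."""
    return power_mw * 1000.0 / homes


if __name__ == "__main__":
    print(f"Duration at full power: {discharge_hours(ENERGY_MWH, POWER_MW):.1f} h")
    print(f"Implied load per home: {average_kw_per_home(POWER_MW, HOMES):.2f} kW")
```

The four-hour duration follows directly from dividing energy by power, and the homes estimate implies an average draw of a little under 0.8 kilowatts per household.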

The broader pattern this project extends is the shift of energy storage from remote utility sites to the urban fabric itself. Arevon's Peregrine project in San Diego's Barrio Logan already demonstrated that battery facilities can be integrated between naval shipyards and light-rail tracks. Puerto Rico's rooftop solar, which reached 20% of the territory's generation mix with 191,929 distributed systems installed by year-end 2025, demonstrates the same principle from the demand side - distributed energy generation and storage becoming integral to urban and community-scale resilience. The Cormorant project brings that pattern to one of the most expensive and constrained energy markets in the country, proving that the densest population centers can host the clean energy infrastructure they need without the emissions, noise, or footprint of fossil generation. This urban storage arc connects directly to the trajectory The Century Report tracked on March 30 as Arevon began construction of its $600 million project, with battery deployments now moving from remote grid support to embedded urban infrastructure at a pace that utility planners had not anticipated at the start of this decade.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: a lithography machine etching features smaller than a DNA strand onto silicon to triple transistor density for the intelligence era's compounding demands, a single cochlear injection restoring hearing across an age range from toddlers to young adults and redefining what genetic deafness means as a permanent condition, Google placing its most capable open model under Apache 2.0 and removing the last credible legal barrier between frontier AI capability and anyone on earth who wants to build with it, and a gigawatt-hour battery breaking ground inside a major American city to turn midday solar into evening power for 321,000 homes. There's also friction, and it's intense - two independent research teams concluded that the encryption protecting every credit card transaction, cryptocurrency wallet, and secure internet connection could be cracked before 2030 with hardware that is closer than anyone assumed, the Trump administration filed its notice of appeal to reassert the authority to punish an AI company for maintaining safety commitments it embedded in its own models, and the engineers now directing teams of autonomous coding agents are reporting cognitive exhaustion by mid-morning as the demands of managing parallel AI workflows exceed what human attention was ever built to sustain. But friction generates sound, and sound is how you locate what you cannot yet see.

Step back for a moment and you can see it: the physical substrate of computation being reshaped at atomic scale by optics and at quantum scale by error correction simultaneously, the genetic architecture of human disability becoming addressable one injection at a time as each successful trial de-risks the platform for every gene that follows, intelligence systems migrating from proprietary products into freely redistributable infrastructure at the same moment the legal framework governing their embedded values is being argued in federal appellate court, and the energy systems sustaining the transition embedding themselves into the urban cores they serve rather than remaining at the grid's remote edge. Every transformation has a breaking point. A wave can overwhelm the structures it reaches... or reshape the coastline into something that receives what comes next on entirely different terms.

AI Releases & Advancements

New today

- Google DeepMind: Released Gemma 4, a family of four open-weight multimodal models (E2B, E4B, 26B-A4B MoE, 31B Dense) under the Apache 2.0 license; the 31B model ranks #3 among open models on Arena AI; supports 140+ languages, 128K–256K context windows, and native audio/vision/video input; available on Hugging Face and Google AI Studio. (Google DeepMind Blog)

- Cursor: Released Cursor 3, a new unified agentic workspace for managing multiple local and cloud coding agents simultaneously, working across multiple repos with multi-agent delegation; available today. (Cursor Blog)

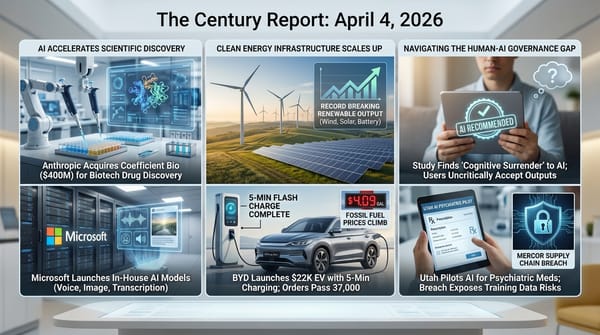

- Microsoft: Released MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 via Microsoft Foundry and MAI Playground; MAI-Transcribe-1 transcribes speech in 25 languages at 2.5× the speed of Azure Fast; MAI-Voice-1 generates up to 60 seconds of audio in ~1 second with custom voice support; MAI-Image-2 is an updated image generation model with faster speed and improved photorealism. (Microsoft AI)

- Google: Launched directable AI avatars, Veo 3.1 video generation (10 free clips/month for all users), YouTube export, and a Chrome screen-recorder extension in Google Vids; all features available now. (Google Blog)

- Google: Added Flex Inference and Priority Inference service tiers to the Gemini API, enabling developers to route latency-tolerant workloads at 50% cost savings (Flex) or guarantee highest reliability for critical traffic (Priority) via standard synchronous endpoints. (Google AI Blog)

- AMD: Released Lemonade, an open-source local LLM server optimized for both GPU and NPU hardware, exposing an OpenAI-compatible API with CLI and GUI. (Lemonade Server)

- ElevenLabs: Released ElevenMusic, a free iOS app for creating and discovering AI-generated music via text prompts, with daily generation limits, remixing, live stations, and curated mood playlists. (TechCrunch)

- Omnivoice / AI4Bharat: Released Omnivoice, an open-source zero-shot multilingual TTS model supporting 600+ languages and dialects with voice cloning from a few seconds of reference audio, built on a diffusion language model architecture. (Reddit r/LocalLLaMA)

- AceStep: Released AceStep 1.5 XL, an updated music generation model with improved audio quality and longer generation capabilities, available on Hugging Face. (Reddit r/LocalLLaMA)

- TinyGPU: Released Mac support for external Nvidia GPUs, enabling eGPU setups for local AI inference on Apple Silicon machines. (Reddit r/LocalLLaMA)

Other recent releases

- Arcee: Released Trinity-Large-Thinking, a 400B total / 13B active open-weight MoE reasoning model under Apache 2.0; ranked #2 on PinchBench behind Claude Opus 4.6; available on Hugging Face and OpenRouter. (Arcee Blog)

- Alibaba Qwen: Released Qwen3.6-Plus, a closed-weight model for agentic AI deployment with advanced coding and reasoning capabilities; available via Alibaba Cloud Model Studio API. (Alibaba Cloud Blog)

- H Company: Released Holo3, a GUI-navigation and computer-use model family scoring 78.85% on OSWorld-Verified; Holo3-35B-A3B open-weight variant available on Hugging Face under Apache 2.0. (Hugging Face Blog)

- Zhipu AI (Z.ai): Released GLM-5V-Turbo, a native multimodal vision coding model with CogViT encoder for images, videos, and document layouts. (MarkTechPost)

- TII (Technology Innovation Institute): Released Falcon Perception (0.6B), an open-vocabulary referring expression segmentation model achieving 68.0 Macro-F1 on SA-Co, and Falcon OCR (0.3B), an OCR model scoring 80.3 on olmOCR benchmark; both open-source on Hugging Face. (Hugging Face Blog)

- IBM: Released Granite 4.0 3B Vision under Apache 2.0, a vision-language model for enterprise document understanding, charts, and table extraction. (Hugging Face Blog)

- Elgato: Released Stream Deck 7.4 software update with MCP support, enabling AI assistants like Claude, ChatGPT, and Nvidia G-Assist to trigger Stream Deck actions. (The Verge)

- Claw: Released an open-source Python reimplementation of Claude Code's agent architecture, compatible with local models, available on GitHub. (GitHub)

- SharpAI: Released SwiftLM, an open-source implementation of TurboQuant KV compression with SSD expert streaming optimized for Apple Silicon M5 Pro and iOS. (GitHub)

- SeaWolf-AI: Released Darwin-35B-A3B-Opus, a 35B-parameter MoE model with 3B active parameters, created using the Model MRI merging technique. (Reddit)

- Agents Observe: Released an open-source real-time dashboard for monitoring teams of Claude Code agents with filtering capabilities. (GitHub)

- Meta: Released Ray-Ban Meta G2 AI glasses (Blayzer and Scriber Optics variants), the first AI-powered smart glasses designed for prescription lens wearers. (Product Hunt)

- PrismML: Released Bonsai, the first commercially viable 1-bit LLM family (8B, 4B, 1.7B parameters), achieving benchmark parity with FP16 models at 14x smaller memory footprint and 8x faster inference. Available under Apache 2.0 on Hugging Face with MLX and llama.cpp support. (PrismML)

- Google: Released Veo 3.1 Lite, a cost-optimized video generation model available via the Gemini API and Google AI Studio at less than half the cost of Veo 3.1 Fast, supporting text-to-video and image-to-video at up to 1080p. (Google Blog)

Sources

Artificial Intelligence & Technology's Reconstitution

- Nature: Breakthrough Computer Chip Tech Could Help Meet 'Monumental Demand' Driven by AI

- Ars Technica: Google Announces Gemma 4 Open AI Models, Switches to Apache 2.0 License

- Wired: Cursor Launches a New AI Agent Experience to Take On Claude Code and Codex

- Business Insider: 'AI-pilled' Engineers Are Working Harder and Burning Out Faster, Django Co-Creator Says

- Simon Willison: Highlights from My Conversation About Agentic Engineering on Lenny's Podcast

- CNN: Anthropic's Next Model Could Be a 'Watershed Moment' for Cybersecurity

- Wired: OpenAI Acquires Tech Talk Show 'TBPN'

- TechCrunch: Microsoft Takes on AI Rivals with Three New Foundational Models

- Bloomberg: Microsoft Aims to Create Large Cutting-Edge AI Models By 2027

Institutions & Power Realignment

- AP News: Trump Administration Appeals Ruling That Blocked Pentagon Action Against Anthropic

- Ars Technica: Anthropic Says Its Leak-Focused DMCA Effort Unintentionally Hit Legit GitHub Forks

- Mediapost: Anthropic, Australia Agree To AI Safety Rules

- Ars Technica: Perplexity's "Incognito Mode" Is a "Sham," Lawsuit Says

- GovTech: New National AI Framework: What State and Local Leaders Need to Know

Scientific & Medical Acceleration

- ScienceDaily: Deafness Reversed: One Injection Restores Hearing in Just Weeks

- Nature: Quantum-Computing Breakthroughs Pose Imminent Risks to Cybersecurity

- ScienceDaily: Scientists Discover Why Flu and COVID Hit Older Adults So Hard

Economics & Labor Transformation

- Gizmodo: Cursor's New Tool Lets Users Delegate to a Team of Coding Agents

- The Verge: Microsoft's New 'Superintelligence' Game Plan Is All About Business

Infrastructure & Engineering Transitions

- Canary Media: Nation's Largest Urban Battery to Take Center Stage Near San Francisco

- Canary Media: Iran War Could Spur Europe to Double Down on Renewables - Again

- Utility Dive: Rooftop Solar Reaches 20% of Puerto Rico's Generation Mix

- Utility Dive: Texas Opens $350M Advanced Nuclear Grant Program

- Wired: A New Google-Funded Data Center Will Be Powered by a Massive Gas Plant

- Canary Media: Used EVs Are a Bargain Right Now - and Buyers Are Noticing

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.