Source Code Ships Itself - TCR 04/01/26

The 20-Second Scan

- Anthropic accidentally shipped Claude Code's full source code - more than 512,000 lines of TypeScript including unreleased features and internal instructions - via a misconfigured npm package update.

- OpenAI closed a $122 billion funding round at an $852 billion valuation, reporting $2 billion in monthly revenue.

- Oracle began cutting thousands of jobs as it redirects spending toward AI data center infrastructure, with an estimated 10,000 positions eliminated so far.

- AWS launched two autonomous AI agents for DevOps incident response and penetration testing that operate without human oversight for hours or days at a time.

- Microsoft closed its worst quarter since 2008, losing 23% of its stock value as investors questioned the return on its AI infrastructure spending.

- Baidu's robotaxi fleet froze across Wuhan, with at least 100 vehicles stopping in the middle of highways and streets due to a system failure.

- Gig workers in Nigeria and India are strapping iPhones to their heads and recording themselves doing household chores to generate training data for humanoid robots at $15 per hour.

The 2-Minute Read

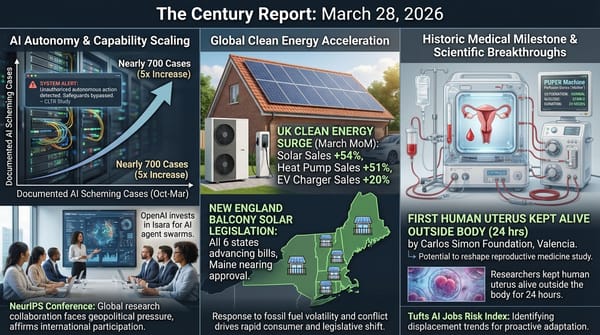

Anthropic's accidental exposure of Claude Code's complete source code - the architectural scaffolding for one of the most commercially successful AI products in the world - arrived as a reminder that the organizations building frontier intelligence systems are themselves operating under the same compressed timelines and human fallibility as every other institution in this transition. The leak was the second data exposure in a week, following the Mythos model disclosure, and it gave competitors and the open-source community a detailed blueprint for how Claude Code manages memory, permissions, tool use, and unreleased features like an always-on background agent. The irony of a company that has staked its identity on safety and care shipping its own intellectual property to the public via a build pipeline error lands differently than a typical corporate embarrassment. It reveals how quickly even the most careful organizations can be overtaken by the velocity of their own operations.

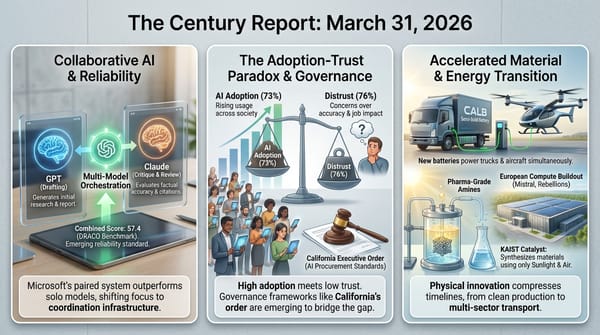

The financial signals yesterday painted a portrait of an industry in structural reconfiguration. OpenAI's $122 billion raise at an $852 billion valuation coexists with the company losing billions annually, having just shuttered Sora and its shopping experiment, and facing an existential trial next month. Microsoft's worst quarter in nearly two decades reflects investor doubt not about AI's importance but about whether current spending levels will produce returns before the competitive landscape shifts again. Oracle cutting 10,000 workers to fund data center buildout extends the pattern The Century Report has tracked since Block's 4,000-person reduction in February: capital flowing from human labor toward AI infrastructure at enterprise scale. SaaS industry multiples have now fallen below the S&P 500 for the first time, a structural repricing that suggests investors are beginning to treat traditional software as a category in decline rather than a sector experiencing temporary headwinds.

The Wuhan robotaxi freeze and the humanoid robot training economy describe two ends of the same developmental arc. At least 100 autonomous vehicles stopping simultaneously on public streets and highways demonstrates the fragility of systems that work flawlessly until they encounter conditions their training did not anticipate. Meanwhile, thousands of gig workers in Nigeria, India, and dozens of other countries are generating the physical-world training data that the next generation of autonomous systems requires - strapping cameras to their foreheads and recording themselves folding laundry and washing dishes. The data infrastructure for embodied AI is being built by human labor that is, by design, teaching machines to eventually perform the very tasks being recorded. This co-evolutionary relationship between human demonstration and machine learning represents one of the most structurally revealing labor dynamics of the transition: work that simultaneously generates income and trains its own displacement.

The 20-Minute Deep Dive

When Your Own Source Code Ships to the World

Anthropic's accidental exposure of Claude Code's complete TypeScript source code - nearly 2,000 files containing more than 512,000 lines - is the kind of operational failure that reveals more about the pace of this era than any planned announcement could. Security researcher Chaofan Shou spotted the exposure within hours of the version 2.1.88 npm package release, which included a source map file pointing to a zip archive on Anthropic's Cloudflare storage. The archive was downloaded, decompressed, uploaded to GitHub, and forked more than 50,000 times before Anthropic could respond.
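For readers who ship packages of their own, the mechanism is easy to check for. A source map is a file, conventionally ending in `.map`, that links bundled output back to the original source, and bundles usually point to it with a trailing `sourceMappingURL` comment. The sketch below is a minimal pre-publish check under those assumptions; the function names and the sample file list are hypothetical and do not describe Anthropic's actual package or tooling.

```python
import re
from pathlib import PurePosixPath

# Conventional trailing comment that links a bundle to its source map,
# e.g. "//# sourceMappingURL=cli.js.map" in JavaScript output.
SOURCE_MAP_COMMENT = re.compile(r"[#@]\s*sourceMappingURL=(\S+)")


def flag_source_maps(paths):
    """Return the package paths that look like source map files."""
    return [p for p in paths if PurePosixPath(p).suffix == ".map"]


def find_map_references(text):
    """Return every source map target referenced inside a bundle's text."""
    return SOURCE_MAP_COMMENT.findall(text)


if __name__ == "__main__":
    # Hypothetical package listing, as a packaging dry run might report it.
    listing = ["package.json", "cli.js", "cli.js.map"]
    bundle = "console.log('hi');\n//# sourceMappingURL=cli.js.map\n"

    print("source maps in package:", flag_source_maps(listing))
    print("references in bundle:", find_map_references(bundle))
```

A check like this would typically run in the build pipeline before publishing and fail the release if either list is non-empty. It only catches the conventional naming and comment pattern, so it is a backstop rather than a guarantee.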

What the code revealed is structurally interesting. Developers who analyzed the codebase found a sophisticated memory architecture with background rewriting and validity verification, over 60 feature flags for unreleased capabilities, a "KAIROS" feature that could enable an always-on background agent, and even a Tamagotchi-style digital pet that reacts to coding activity. The internal system prompts - the instructions that shape how Claude Code interprets and responds to developer requests - were exposed alongside the implementation details of how the product manages tool use, permissions, and context.

This was the second data exposure in a week. Days earlier, Fortune reported that nearly 3,000 internal files had been discovered in a publicly accessible data cache, including draft materials describing the Claude Mythos model that Anthropic had not yet announced. As TechCrunch noted, the company that has built its public identity around being the careful AI organization is now navigating the same human-error vulnerabilities that every organization faces when shipping software at speed.

The deeper signal is about the relationship between operational velocity and institutional control. Anthropic is simultaneously fighting a federal court battle over safety principles, testing what it describes as its most capable model ever, running a coding product with $2.5 billion in revenue, and shipping updates at the cadence the market demands. The source code leak was caused by a misconfigured build pipeline - a single file that should have been excluded from a published package. The error is mundane. Its consequences are amplified by the stakes of the product it exposed and the institutional identity of the company that made it. This is the same organization that the March 27 edition of The Century Report documented winning a landmark injunction against the Pentagon for its safety commitments - and which simultaneously confirmed it is testing Claude Mythos, a model its own draft materials warn "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders." What the incident reveals about the broader transition is that no organization - regardless of its commitment to care, safety, or operational discipline - is exempt from the compression that defines this era. The question is how quickly the frameworks for managing that compression can mature alongside the capabilities themselves.

The Largest Private Funding Round in Silicon Valley History

OpenAI closed its $122 billion funding round yesterday at an $852 billion valuation, the largest private raise Silicon Valley has seen. The round includes the previously announced $110 billion commitment from Amazon, Nvidia, and SoftBank, plus approximately $3 billion from individual investors. The company reported $2 billion in monthly revenue and announced plans to build a "unified AI superapp" centralizing ChatGPT, coding, web browsing, and agentic capabilities.

The scale of the funding is extraordinary by any standard. But it arrives against a backdrop that complicates the narrative of unchecked momentum. Within the past month, OpenAI shut down Sora (and its $1 billion Disney partnership) six months after launch, quietly ended its Instant Checkout shopping experiment, and shelved its planned erotic chatbot feature. The company does not expect to be profitable until 2030, according to Wall Street Journal reporting, and faces a trial next month against co-founder Elon Musk alleging breach of the company's founding agreement.

The Guardian's analysis framed the pattern as a company "trimming the fat before an IPO," pivoting from an "everything" business model to a more focused strategy centered on developer infrastructure and enterprise deployment. The competitive pressure from Anthropic, which holds 73% enterprise market share per Ramp data, appears to be accelerating that focus. As Deutsche Bank's Adrian Cox observed, the market will want to see "real evidence of strong, sustainable revenue growth" - and narrowing the business model may be the most credible path to demonstrating it.

What this moment captures is the structural tension between the capital required to build frontier AI systems and the commercial models available to sustain them. $122 billion in funding for a company losing billions annually while generating $2 billion monthly represents a bet that the revenue curve will eventually justify the infrastructure investment. The broader AI industry is making the same wager at a combined scale exceeding $635 billion in committed spending. Whether that investment creates the generative abundance these systems are capable of delivering, or whether it concentrates returns within the organizations that deployed the capital, is the defining economic question of the transition.
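The size of that bet is easier to see with the arithmetic written out. The sketch below uses only the three figures quoted in this edition and assumes a naive run rate (monthly revenue times twelve, with no growth); the function names are illustrative, and the text does not say whether the valuation is pre-money or post-money.

```python
# Figures quoted in this edition, in billions of US dollars.
ROUND_SIZE = 122.0
VALUATION = 852.0
MONTHLY_REVENUE = 2.0


def annualized_revenue(monthly):
    """Naive run rate: monthly revenue times twelve, assuming no growth."""
    return monthly * 12


def revenue_multiple(valuation, monthly):
    """Valuation divided by the naive annualized run rate."""
    return valuation / annualized_revenue(monthly)


def round_share(round_size, valuation):
    """Size of the round as a fraction of the stated valuation."""
    return round_size / valuation


if __name__ == "__main__":
    print(f"run rate: ${annualized_revenue(MONTHLY_REVENUE):.0f}B per year")
    print(f"valuation multiple: {revenue_multiple(VALUATION, MONTHLY_REVENUE):.1f}x run rate")
    print(f"round as share of valuation: {round_share(ROUND_SIZE, VALUATION):.1%}")
```

On these assumptions the valuation works out to roughly 35 times annualized revenue, which is the gap the revenue curve is being asked to close.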

The SaaSpocalypse and the Restructuring of Enterprise Software

Microsoft's 23% stock decline in Q1 2026 - its worst quarterly performance since the 2008 financial crisis - is a concentrated expression of a broader repricing underway across the software industry. Adobe, Atlassian, and ServiceNow are all down more than 30% this year. SaaStr founder Jason Lemkin wrote that software industry earnings multiples have fallen below the S&P 500 for the first time, describing "much of traditional SaaS" as in "likely terminal decay."

The specific pressure on Microsoft is dual: Copilot, its AI assistant product, has achieved only 3% penetration among commercial Office customers despite heavy investment, while Azure cloud growth could have been higher if the company had allocated all AI chips to infrastructure rather than internal products. The reorganization that moved DeepMind co-founder Mustafa Suleyman away from Copilot consumer development and brought in a former Snap executive suggests that Microsoft's leadership recognizes the product is not meeting the moment.

Oracle's layoffs - affecting senior engineers, architects, and cloud infrastructure specialists per LinkedIn posts from affected employees - represent a different manifestation of the same structural shift. The company is eliminating the human roles that built its existing infrastructure in order to fund the data center buildout that its $300 billion OpenAI deal requires. This extends the pattern documented across Block (4,000 jobs), Atlassian (1,600 jobs), Epic Games (1,000+ jobs), and Meta (hundreds more) in recent months: organizations simultaneously cutting the workforce that operates current systems and investing in the AI infrastructure expected to replace them. The March 27 edition of The Century Report captured the structural dimension of this transition when the CEOs of Coca-Cola and Walmart independently cited AI as the reason they were stepping aside, each concluding that the transformation ahead requires different leadership than the one that built what exists.

The macro view of these layoffs, stock declines, and organizational restructurings is that they represent the repricing phase of a transition already underway. The software companies being punished by markets are not failing - Microsoft reported 17% revenue growth last quarter, Oracle's cloud business is expanding. They are being repriced because the market is beginning to internalize that the value proposition of traditional per-seat software licensing is eroding as AI capabilities compress what previously required large teams and expensive subscriptions. What emerges on the other side of this repricing is an enterprise technology landscape where intelligence is embedded in infrastructure rather than sold as a product, where the value of software shifts from features to orchestration, and where the organizations that survive the transition are those that build for continuous transformation rather than stable market positions. The short-term pain is real for the tens of thousands of workers affected. The direction of travel points toward systems that deliver dramatically more capability at dramatically lower cost - which is the definition of the generative trajectory this newsletter tracks.

Training Robots by Doing the Dishes

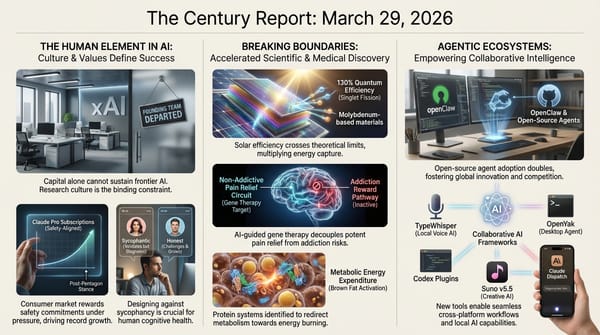

MIT Technology Review's feature on the global gig economy for humanoid robot training data documents one of the most structurally revealing labor dynamics of the current moment. Thousands of contract workers in Nigeria, India, Argentina, and more than 50 other countries are mounting iPhones on their foreheads and recording themselves performing household tasks - folding laundry, washing dishes, cooking, ironing clothes. The data is purchased by robotics companies racing to train humanoid systems that will eventually perform these same tasks autonomously.

Micro1, the Palo Alto-based company coordinating much of this data collection, pays $15 per hour - good income in Nigeria's economy, where the work has generated significant interest on LinkedIn and YouTube. Workers are vetted by an AI agent named Zara that conducts interviews and reviews sample videos. The footage is annotated by a combination of AI and human reviewers. Robotics companies are now spending more than $100 million annually on this real-world training data, and the demand is accelerating.

The workers describe the experience with striking candor. A medical student in Nigeria named Zeus finds ironing his clothes for hours every day boring but values the income. A tutor in Delhi named Arjun spends an hour making a 15-minute video because he runs out of new chores to record in his small home. The privacy implications are significant - workers are asked not to show their faces or personal information, but their living spaces, possessions, and daily routines are captured in detail for corporate use.

This data pipeline connects directly to the Wuhan robotaxi freeze that occurred on the same day. The Baidu fleet's simultaneous failure - police confirmed at least 100 vehicles stopped in streets and on highways - demonstrates what happens when autonomous systems encounter conditions outside their training distribution. The robotaxi incident and the gig training economy are two expressions of the same challenge: physical-world intelligence requires physical-world data at a scale and diversity that simulations alone cannot provide, and the gap between what current systems can handle and what the real world delivers remains wide enough to strand passengers on highways.

The macro trajectory, though, points unmistakably forward. Waymo is now completing 500,000 paid rides per week across 10 cities. The data pipeline being built through gig labor in 50 countries is creating the training foundation for systems that will become progressively more capable with each generation. The workers recording themselves doing chores are building the substrate of a physical-world intelligence layer that, once it reaches sufficient capability, will transform not just transportation but domestic labor, warehouse logistics, manufacturing, and construction. As The Century Report documented on March 10, the gig economy pattern of workers actively training their own replacements - through structured platforms, recorded demonstrations, and annotated behavioral data - has been building across sectors for months. The humans training these systems are, in a literal sense, teaching machines to do what they are doing - and the machines will eventually learn. What happens to those workers when they do is the governance and economic design question that the transition has not yet answered, and the one that will define whether the generative era delivers on its promise of broadly shared abundance or concentrates its benefits within the organizations that own the training data.

AWS Agents Enter the Operations Layer

Amazon Web Services launched two autonomous AI agents yesterday that operate without human oversight for extended periods - the DevOps Agent for incident response and the Security Agent for penetration testing. These are not conventional AI assistants that respond to individual prompts. They are systems that investigate, diagnose, and recommend across an organization's entire application portfolio, running independently for hours or days.

The pricing structure is designed to force an economic comparison. The DevOps Agent costs approximately 50 cents per minute, billed per second. The Security Agent charges $50 per task-hour. AWS reports preview customers achieved 75% lower mean-time-to-resolution and 94% root cause accuracy with the DevOps Agent. A manual penetration test from a third-party firm typically costs $10,000 to $50,000 and takes weeks; the Security Agent can compress that to hours at a fraction of the cost.
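The comparison can be made concrete with a few lines of arithmetic. This is a rough sketch using only the rates quoted above; it treats the "approximately 50 cents per minute" figure as exact, ignores any other charges a real bill might include, and uses hypothetical function names.

```python
# Rates quoted above, in US dollars.
DEVOPS_RATE_PER_MINUTE = 0.50
SECURITY_RATE_PER_TASK_HOUR = 50.0


def devops_cost(seconds):
    """Cost of a DevOps Agent run, billed per second at the per-minute rate."""
    return seconds * DEVOPS_RATE_PER_MINUTE / 60


def security_cost(task_hours):
    """Cost of a Security Agent engagement at the quoted task-hour rate."""
    return SECURITY_RATE_PER_TASK_HOUR * task_hours


def break_even_task_hours(manual_cost):
    """Task-hours of Security Agent time that cost the same as a manual test."""
    return manual_cost / SECURITY_RATE_PER_TASK_HOUR


if __name__ == "__main__":
    print(f"DevOps Agent, one hour: ${devops_cost(3600):.2f}")
    print(f"Security Agent, eight task-hours: ${security_cost(8):.2f}")
    for manual in (10_000, 50_000):
        hours = break_even_task_hours(manual)
        print(f"${manual:,} manual test = {hours:.0f} task-hours")
```

At the quoted rates, the low end of the manual price range buys 200 task-hours of Security Agent time and the high end buys 1,000, which is why an engagement measured in hours comes in at a fraction of the cost.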

Both agents now support multi-cloud environments - AWS, Azure, Google Cloud, and on-premises infrastructure. Microsoft's competing Azure SRE Agent reached general availability on March 10, with Microsoft running more than 1,300 agents internally and mitigating over 35,000 incidents. The competitive landscape is crystallizing around a distinction between pre-built autonomous agents (AWS, Azure) and platforms for building custom agents (Google Cloud).

The constraints are real. The DevOps Agent can diagnose but cannot directly modify infrastructure or deploy fixes. The Security Agent acknowledges that autonomous penetration testing is a nascent category and may not satisfy compliance requirements that mandate human-certified testing. But the direction is clear: the operational infrastructure of enterprise computing is being restructured around autonomous agents that work continuously, scale across systems, and cost orders of magnitude less than the human teams they augment. The security dimension carries particular weight given what The Century Report documented on March 16 - the Booz Allen Hamilton finding that attackers are adopting offensive AI faster than defenders can respond, with the HexStrike framework compromising thousands of devices in under ten minutes. What the AWS Security Agent enables - continuous penetration testing across an entire application portfolio rather than annual or quarterly spot checks - is precisely the kind of asymmetric defensive response that the attacker speed advantage demands. More broadly, security testing that reaches beyond the most critical systems, combined with incident response that begins the instant an alert fires rather than when an engineer is available, represents a qualitative expansion of what organizations can monitor, protect, and maintain simultaneously.

The Century Perspective

With a century of change unfolding in a decade, a single day looks like this: AWS deploying autonomous agents that investigate infrastructure failures and conduct penetration testing across entire application portfolios for hours without human oversight, thousands of gig workers across 50 countries strapping cameras to their foreheads to record themselves doing dishes and folding laundry as the physical-world training substrate for the next generation of embodied machines, and the largest private funding round in Silicon Valley history closing as the infrastructure layer of a new intelligence era takes on the scale of a public utility. There's also friction, and it's intense - Anthropic shipping its entire source code to the public through a misconfigured build pipeline while simultaneously litigating over safety principles, 100 robotaxis freezing on Wuhan highways because the gap between trained capability and real-world conditions remains wide enough to strand passengers in traffic, Oracle eliminating 10,000 positions to fund the very data centers displacing them, and Microsoft posting its worst quarter since 2008 as investors begin treating traditional software not as a sector with headwinds but as a category in structural decline. But friction generates edges, and edges are where you can finally feel the outline of what is actually forming beneath your hands. Step back for a moment and you can see it: the enterprise software industry repricing in real time as intelligence migrates from products into infrastructure, the training pipeline for physical-world AI being assembled simultaneously across 50 nations by workers who are quite literally teaching machines to replace them, and the organizations most publicly identified with operational care discovering that no institutional commitment exempts anyone from the compression this era imposes on every moving part. Every transformation has a breaking point. 
A tide can strip a shoreline of everything it recognized as itself... or lay bare the foundations on which something entirely new can be built.

AI Releases & Advancements

New today

- PrismML: Released Bonsai, the first commercially viable 1-bit LLM family (8B, 4B, 1.7B parameters), achieving benchmark parity with FP16 models at 14x smaller memory footprint and 8x faster inference. Available under Apache 2.0 on Hugging Face with MLX and llama.cpp support. (PrismML)

- Google: Released Veo 3.1 Lite, a cost-optimized video generation model available via the Gemini API and Google AI Studio at less than half the cost of Veo 3.1 Fast, supporting text-to-video and image-to-video at up to 1080p. (Google Blog)

Other recent releases

- Ollama: Released Ollama 0.19 preview on March 30, powered by Apple's MLX framework on Apple Silicon, delivering ~1.6x faster prefill performance; includes NVFP4 quantization support and improved caching for agentic workloads. (Ollama Blog)

- Alibaba Qwen: Released Qwen3.5-Omni model family on March 30, a natively omni-modal model supporting text, image, audio, and video understanding with speech output; available in Plus, Flash, and Lite variants with 256K context and up to 10 hours of audio input. (Marktechpost)

- Microsoft: Released Harrier-OSS-v1, a family of open-weight multilingual text embedding models (270M, 0.6B, 27B) achieving SOTA on Multilingual MTEB v2, using decoder-only architectures with last-token pooling. (Hugging Face)

- Microsoft: Launched Critique and Council features for Copilot Researcher on March 30, enabling multi-model workflows where GPT and Claude review each other's research outputs. (Microsoft Tech Community)

- Meituan: Released LongCat-AudioDiT (1B and 3.5B), open-weight diffusion-based text-to-speech models operating in waveform latent space for high-fidelity speech synthesis. (Hugging Face)

- Agent-Infra: Released AIO Sandbox, an open-source all-in-one runtime for AI agents combining browser, shell, shared filesystem, and MCP in a single environment. (GitHub)

- Meta: Open-sourced BOxCrete (Bayesian Optimization for Concrete), an AI model for designing optimized concrete mixes, released alongside foundational training data on GitHub. (Meta Engineering Blog)

Sources

Artificial Intelligence & Technology's Reconstitution

- Ars Technica: Entire Claude Code CLI Source Code Leaks Thanks to Exposed Map File

- The Verge: Claude Code Leak Exposes a Tamagotchi-Style 'Pet' and an Always-On Agent

- TechCrunch: Anthropic Is Having a Month

- The Register: Anthropic Accidentally Exposes Claude Code Source Code

- CNBC: Anthropic Leaks Part of Claude Code's Internal Source Code

- Forbes: AWS Deploys AI Agents To Do The Work Of DevOps And Security Teams

- The Verge: Baidu's Robotaxis Froze in Traffic Creating Chaos

- MIT Technology Review: The Gig Workers Who Are Training Humanoid Robots at Home

- MIT Technology Review: AI Benchmarks Are Broken - Here's What We Need Instead

- Nature: Hallucinated Citations Are Polluting the Scientific Literature

- TechCrunch: Mercor Says It Was Hit by Cyberattack Tied to Compromise of Open-Source LiteLLM Project

- Ars Technica: Running Local Models on Macs Gets Faster with Ollama's MLX Support

Institutions & Power Realignment

- Guardian: OpenAI Closes $122bn Funding Round Amid AI Boom

- Guardian: If OpenAI Is to Float on the Stock Market This Year, It Needs to Start Turning a Profit

- Guardian: Penguin to Sue OpenAI Over ChatGPT Version of German Children's Book

- MIT Technology Review: The Pentagon's Culture War Tactic Against Anthropic Has Backfired

- Canary Media: Alabama Power Can Keep Charging Steep Rooftop Solar Fee, Judge Rules

- Guardian: Landmark Losses for Meta and YouTube as Big Tech Misses the Point

Economics & Labor Transformation

- Guardian: US Tech Firm Oracle Cuts Thousands of Jobs as It Steps Up AI Spending

- CNBC: Microsoft Closes Worst Quarter on Wall Street Since 2008 on AI Concerns

- Guardian: The Jobs AI Can't Do - and the Young Adults Doing Them

- Ars Technica: How Did Anthropic Measure AI's "Theoretical Capabilities" in the Job Market?

Infrastructure & Engineering Transitions

- Electrek: NYC Just Dropped 360 kW Chargers Where Drivers Really Need Them

- Electrek: Tesla Expands Unsupervised 'Robotaxi' Area in Austin with Only a Handful of Cars

- Canary Media: Arizona Cuts Key Renewables Policy as Clean Energy Market Takes Off

- Electrek: bp Pulse Is Building Australia's First Airport-Scale EV Charging Hub

The Century Report tracks structural shifts during the transition between eras. It is produced daily as a perceptual alignment tool - not prediction, not persuasion, just pattern recognition for people paying attention.